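Traditionally, monitoring a MySQL service takes several manual steps. First install mysql-exporter, which collects MySQL metrics and exposes them on a port for the Prometheus server to scrape. Then edit prometheus.yaml on the Prometheus server and add a mysql-exporter job under scrape_configs, specifying the exporter's address and port. Finally, restart the Prometheus service. Only then is the MySQL monitoring task in place.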
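For comparison with the Operator-based approach, the static entry described above might look like the output of the sketch below. The job name `mysql-exporter` and the target address `mysql-exporter.example:9104` are assumptions chosen for illustration; only the `scrape_configs` / `job_name` / `static_configs` layout comes from the Prometheus configuration format.

```shell
# Print a static scrape job of the kind that would be added under
# scrape_configs in the Prometheus configuration file.
# $1: job name (hypothetical), $2: exporter host:port (hypothetical)
print_static_scrape_job() {
  job_name=$1
  target=$2
  cat <<EOF
scrape_configs:
  - job_name: '${job_name}'
    static_configs:
      - targets: ['${target}']
EOF
}

print_static_scrape_job mysql-exporter mysql-exporter.example:9104
```

Every new exporter means another hand-edited entry like this one plus a restart or reload of Prometheus, which is the work the ServiceMonitor approach removes.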

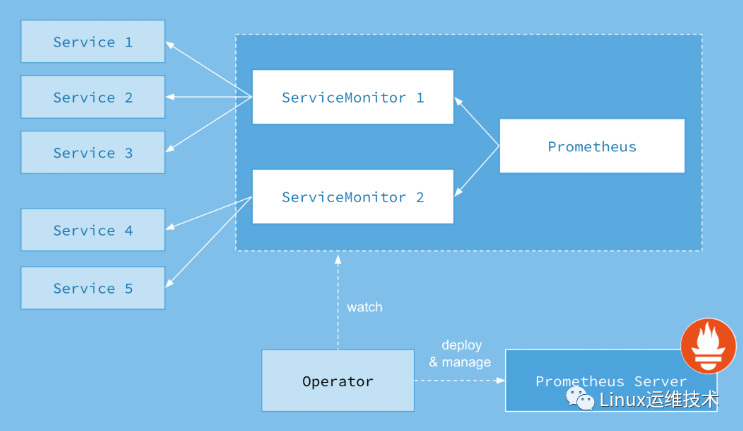

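When Prometheus is deployed with Prometheus-Operator, adding monitoring for a MySQL service works differently. The first step is the same as before: deploy a mysql-exporter to collect MySQL metrics. Next, write a ServiceMonitor whose label selector picks the Service of the mysql-exporter just deployed. In the deployment described here, the Operator configures Prometheus to select ServiceMonitors labeled prometheus: kube-prometheus, so adding that label to the ServiceMonitor is enough for Prometheus to pick it up. Those two steps complete the setup: there is no need to edit the Prometheus configuration file or restart Prometheus. When the Operator sees a ServiceMonitor change, it regenerates the Prometheus configuration and makes sure it takes effect.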
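Which label Prometheus actually selects on depends on how it was deployed, so it may be worth checking before labeling a ServiceMonitor. A minimal sketch, assuming the Prometheus objects live in the `monitoring` namespace used in this article; the function name is hypothetical, while `spec.serviceMonitorSelector` is the field in the Prometheus custom resource.

```shell
# Show which ServiceMonitor labels each Prometheus object selects on.
# $1: namespace (defaults to "monitoring", the namespace used in this article)
show_servicemonitor_selector() {
  ns=${1:-monitoring}
  kubectl get prometheus -n "$ns" \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.serviceMonitorSelector}{"\n"}{end}'
}

# Usage (requires access to the cluster):
#   show_servicemonitor_selector monitoring
```

An empty selector in the output would mean every ServiceMonitor is selected, in which case the label is not needed.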

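Workflow for adding a custom monitoring target

Create a ServiceMonitor object, which tells kube-prometheus what to scrape

Create a Service object that exposes the metrics endpoint, and associate it with the ServiceMonitor

Make sure the Service actually serves the metrics data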

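How to write a ServiceMonitor

ServiceMonitor is a Kubernetes custom resource, so it must follow the ServiceMonitor specification. The example below adds MySQL monitoring dynamically to show how one is written.

Prerequisites:

1. A Kubernetes cluster is already running (see Kubernetes 1.18.2 cluster deployment (single master) + docker)

2. Prometheus has already been deployed with prometheus-operator (link to be added)

3. MySQL has been installed successfully (see Kubernetes Prometheus monitoring MySQL: install MySQL for the monitoring test, create an account and grant privileges)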

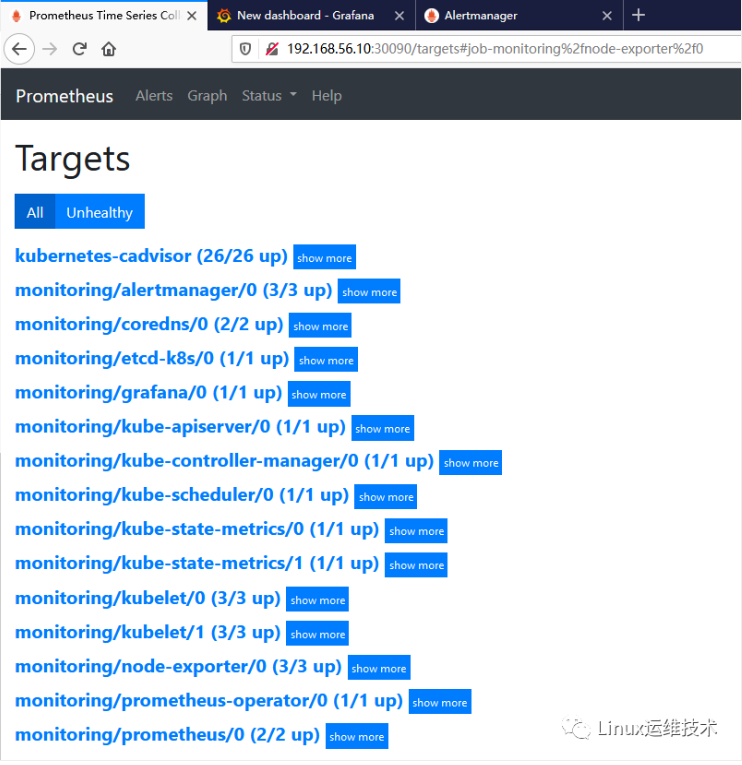

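With the prerequisites in place, open the Prometheus server UI. Under Targets, Prometheus itself and the Kubernetes cluster components are already being monitored, but there is no MySQL target yet.

Next, add the MySQL exporter, prometheus-mysql-exporter, to the Kubernetes cluster:

https://github.com/helm/charts/tree/master/stable/prometheus-mysql-exporter

prometheus-mysql-exporter is installed with helm here, following the steps on GitHub.

Install helm beforehand (reference article, link to be added)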

Verify the helm and tiller versions:

    [root@k8s-master01 helm]# helm version
    Client: &version.Version{SemVer:"v2.13.1", GitCommit:"618447cbf203d147601b4b9bd7f8c37a5d39fbb4", GitTreeState:"clean"}
    Server: &version.Version{SemVer:"v2.13.1", GitCommit:"618447cbf203d147601b4b9bd7f8c37a5d39fbb4", GitTreeState:"clean"}

Installation method 1:

Set datasource in values.yaml to the address of the MySQL instance running in Kubernetes, then run:

    helm install --name my-release -f values.yaml stable/prometheus-mysql-exporter

Installation method 2 (the one used here):

Specify each parameter using the --set key=value[,key=value] argument to helm install. For example,

Add the helm repositories:

    [root@k8s-master01 helm]# helm repo add stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts
    [root@k8s-master01 helm]# helm repo add apphub https://apphub.aliyuncs.com
    [root@k8s-master01 helm]# helm repo add azure http://mirror.azure.cn/kubernetes/charts

List the helm repositories:

    [root@k8s-master01 helm]# helm repo list
    NAME    URL
    stable  https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts
    local   http://127.0.0.1:8879/charts
    apphub  https://apphub.aliyuncs.com
    azure   http://mirror.azure.cn/kubernetes/charts

Update the charts from the helm repositories:

    [root@k8s-master01 helm]# helm repo update
    Hang tight while we grab the latest from your chart repositories...
    ...Skip local chart repository
    ...Successfully got an update from the "stable" chart repository
    ...Successfully got an update from the "apphub" chart repository
    ...Successfully got an update from the "azure" chart repository
    Update Complete. ⎈ Happy Helming!⎈

Search for the prometheus-mysql-exporter chart:

    [root@k8s-master01 helm]# helm search prometheus-mysql-exporter
    NAME                              CHART VERSION  APP VERSION  DESCRIPTION
    apphub/prometheus-mysql-exporter  0.5.2          v0.11.0      A Helm chart for prometheus mysql exporter with cloudsqlp...
    azure/prometheus-mysql-exporter   0.6.0          v0.11.0      A Helm chart for prometheus mysql exporter with cloudsqlp...

MySQL is already installed in the Kubernetes cluster, with the following connection details:

    mysql.user="mysql_exporter1",mysql.pass="Abcdef123",mysql.host="localhost",mysql.port="3306"

Install the prometheus-mysql-exporter chart:

    [root@k8s-master01 helm]# helm install --namespace monitoring --name my-release apphub/prometheus-mysql-exporter --set mysql.user="mysql_exporter1",mysql.pass="Abcdef123",mysql.host="localhost",mysql.port="3306"
    NAME:   my-release
    LAST DEPLOYED: Wed Jul 15 14:35:46 2020
    NAMESPACE: monitoring
    STATUS: DEPLOYED

    RESOURCES:
    ==> v1/Deployment
    NAME                                  READY  UP-TO-DATE  AVAILABLE  AGE
    my-release-prometheus-mysql-exporter  0/1    1           0          2s

    ==> v1/Pod(related)
    NAME                                                   READY  STATUS             RESTARTS  AGE
    my-release-prometheus-mysql-exporter-58ff67f687-22qpk  0/1    ContainerCreating  0         1s

    ==> v1/Secret
    NAME                                  TYPE    DATA  AGE
    my-release-prometheus-mysql-exporter  Opaque  1     2s

    ==> v1/Service
    NAME                                  TYPE       CLUSTER-IP     EXTERNAL-IP  PORT(S)   AGE
    my-release-prometheus-mysql-exporter  ClusterIP  10.101.57.225  <none>       9104/TCP  2s

    NOTES:
    1. Get the application URL by running these commands:
      export POD_NAME=$(kubectl get pods --namespace monitoring -l "app=prometheus-mysql-exporter,release=my-release" -o jsonpath="{.items[0].metadata.name}")
      kubectl port-forward $POD_NAME 8080:9104
      echo "Visit http://127.0.0.1:8080 to use your application"

Next, look at the Service of the mysql-exporter that was just installed:

    [root@k8s-master01 helm]# kubectl get svc -n monitoring
    NAME                                   TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                      AGE
    alertmanager-main                      NodePort    10.96.186.209   <none>        9093:30093/TCP               25h
    alertmanager-operated                  ClusterIP   None            <none>        9093/TCP,9094/TCP,9094/UDP   25h
    grafana                                NodePort    10.106.221.60   <none>        3000:32000/TCP               25h
    kube-state-metrics                     ClusterIP   None            <none>        8443/TCP,9443/TCP            25h
    my-release-prometheus-mysql-exporter   ClusterIP   10.101.57.225   <none>        9104/TCP                     32s
    node-exporter                          ClusterIP   None            <none>        9100/TCP                     25h
    prometheus-adapter                     ClusterIP   10.96.89.246    <none>        443/TCP                      25h
    prometheus-k8s                         NodePort    10.105.143.61   <none>        9090:30090/TCP               25h
    prometheus-operated                    ClusterIP   None            <none>        9090/TCP                     25h
    prometheus-operator                    ClusterIP   None            <none>        8443/TCP                     25h

my-release-prometheus-mysql-exporter is listed, so the Service is up.

Inspect the Service:

    [root@k8s-master01 helm]# kubectl describe service my-release-prometheus-mysql-exporter -n monitoring
    Name:              my-release-prometheus-mysql-exporter
    Namespace:         monitoring
    Labels:            app=prometheus-mysql-exporter
                       chart=prometheus-mysql-exporter-0.5.2
                       heritage=Tiller
                       release=my-release
    Annotations:       <none>
    Selector:          app=prometheus-mysql-exporter,release=my-release
    Type:              ClusterIP
    IP:                10.101.57.225
    Port:              mysql-exporter  9104/TCP
    TargetPort:        9104/TCP
    Endpoints:         10.244.1.20:9104
    Session Affinity:  None
    Events:            <none>

The Service exposes a port named mysql-exporter on 9104/TCP, and its TargetPort is also 9104/TCP.
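Before wiring the Service into a ServiceMonitor, it can help to confirm that the endpoint really serves metrics and that the exporter can reach MySQL. The sketch below is one way to do that; the helper name `mysql_up_value` is hypothetical, and it assumes the exporter publishes the `mysql_up` gauge in the Prometheus text format, as mysqld_exporter does.

```shell
# Read Prometheus text-format metrics on stdin and print the value of the
# mysql_up gauge (1 when the exporter can reach MySQL, 0 otherwise).
# Exits with a non-zero status when the metric is absent.
mysql_up_value() {
  awk '$1 == "mysql_up" { print $2; found = 1 } END { if (!found) exit 1 }'
}

# Usage against the Service from this article (run the port-forward in
# another terminal first):
#   kubectl port-forward -n monitoring svc/my-release-prometheus-mysql-exporter 8080:9104
#   curl -s http://127.0.0.1:8080/metrics | mysql_up_value
```

A value of 0 usually points at the connection settings passed to the chart (user, password, host, port) rather than at the Service or ServiceMonitor.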

Next, write the ServiceMonitor file:

    [root@k8s-master01 mysql]# mkdir mysql-exporter && cd mysql-exporter/
    [root@k8s-master01 mysql-exporter]# vim servicemonitor.yaml
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor    # the resource type is ServiceMonitor
    metadata:
      labels:
        # In this setup Prometheus discovers ServiceMonitors through the label
        # prometheus: kube-prometheus; with it, Prometheus finds this ServiceMonitor
        prometheus: kube-prometheus
      name: prometheus-exporter-mysql
    spec:
      # The value of the Service label named here becomes the job name of the
      # resulting target; if omitted, the Service name is used
      jobLabel: app
      selector:
        # Labels of the Services this ServiceMonitor selects. Every entry under
        # matchLabels must match; entries under matchExpressions, if used,
        # must all match as well
        matchLabels:
          # The mysql-exporter Service shown above carries
          # app=prometheus-mysql-exporter, so this selects it
          app: prometheus-mysql-exporter
      namespaceSelector:
        # Match Services in all namespaces; to restrict the search to specific
        # namespaces, use matchNames: [] instead
        any: true
      endpoints:
      # The Service above serves metrics on the port named mysql-exporter
      # (9104/TCP), so that name goes here
      - port: mysql-exporter
        interval: 30s    # scrape every 30s
        # path: /metrics   HTTP path to scrape for metrics, defaults to /metrics
        honorLabels: true

Apply the yaml file with kubectl (note the namespace, monitoring):

    [root@k8s-master01 mysql-exporter]# kubectl apply -f servicemonitor.yaml -n monitoring
    servicemonitor.monitoring.coreos.com/prometheus-exporter-mysql created

Check that the prometheus-exporter-mysql ServiceMonitor exists:

    [root@k8s-master01 mysql-exporter]# kubectl get serviceMonitor -n monitoring
    NAME                        AGE
    alertmanager                25h
    coredns                     25h
    etcd-k8s                    20h
    grafana                     25h
    kube-apiserver              25h
    kube-controller-manager     25h
    kube-scheduler              25h
    kube-state-metrics          25h
    kubelet                     25h
    node-exporter               25h
    prometheus                  25h
    prometheus-exporter-mysql   35s
    prometheus-operator         25h
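A ServiceMonitor that selects nothing fails silently, so it can be useful to check which Services a label selector matches and what their port names are, since `endpoints.port` refers to the port by name. A minimal sketch; the function name is hypothetical and the label value is the one from this article.

```shell
# List Services in all namespaces that carry the given label, together with
# the names of their ports.
# $1: label selector, e.g. app=prometheus-mysql-exporter
services_matching() {
  kubectl get svc --all-namespaces -l "$1" \
    -o custom-columns='NAMESPACE:.metadata.namespace,NAME:.metadata.name,PORTS:.spec.ports[*].name'
}

# Usage (requires access to the cluster):
#   services_matching app=prometheus-mysql-exporter
```

If the PORTS column does not contain mysql-exporter, the endpoint entry in the ServiceMonitor would need a different port name.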

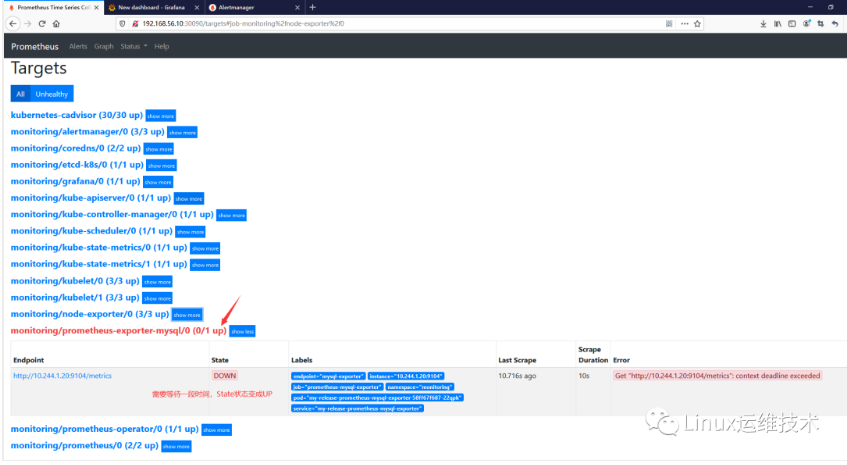

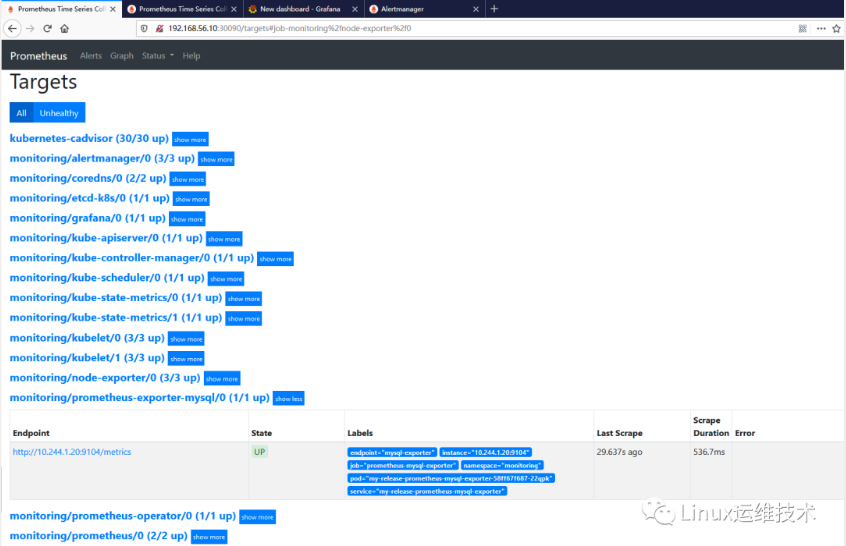

prometheus-exporter-mysql is now listed, which means it was created successfully. After about a minute, open Targets in the Prometheus UI to confirm that MySQL monitoring has been added.

http://192.168.56.10:30090/targets

As mentioned above, Prometheus selects ServiceMonitors by the label prometheus: kube-prometheus. This rule can be changed by setting serviceMonitorsSelector in values.yaml, so that ServiceMonitors are selected by your own criteria.
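Where the Prometheus custom resource is managed directly rather than through a chart's values.yaml, a similar change could be made by patching the resource. The sketch below only builds the patch body. It assumes a hypothetical label `team=database`; `serviceMonitorSelector` and `ruleSelector` are the field names in the Prometheus resource spec, and the resource name `k8s` in the usage line is inferred from the prometheus-k8s pods in this article.

```shell
# Build a JSON merge patch that makes a Prometheus object select
# ServiceMonitors and PrometheusRules by one custom label.
# $1: label key, $2: label value (both hypothetical examples)
selector_patch() {
  printf '{"spec":{"serviceMonitorSelector":{"matchLabels":{"%s":"%s"}},"ruleSelector":{"matchLabels":{"%s":"%s"}}}}\n' \
    "$1" "$2" "$1" "$2"
}

selector_patch team database

# Usage (requires access to the cluster):
#   kubectl patch prometheus k8s -n monitoring --type merge -p "$(selector_patch team database)"
```

Changing the selector also deselects every existing ServiceMonitor and PrometheusRule that lacks the new label, so those would need relabeling first.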

How to add alerting rules dynamically

Once a monitoring target has been added dynamically, it usually needs alerting rules as well. With prometheus-operator, alerting rules are configured through another custom resource (CRD), PrometheusRule. ServiceMonitor adds monitoring targets dynamically, while PrometheusRule adds alerting rules dynamically. The example below adds alerting rules for MySQL to show how PrometheusRule is used.

Write the mysql-rule.yaml file:

    [root@k8s-master01 mysql-exporter]# vim mysql-rule.yaml
    apiVersion: monitoring.coreos.com/v1    # same as for ServiceMonitor
    kind: PrometheusRule                    # the resource type is PrometheusRule, also a custom resource (CRD)
    metadata:
      labels:
        app: "prometheus-rule-mysql"
        # As with ServiceMonitor, ruleSelector by default selects PrometheusRule
        # resources labeled prometheus: kube-prometheus
        prometheus: kube-prometheus
      name: prometheus-rule-mysql
    spec:
      groups:    # alerting rules, written in the same syntax as plain Prometheus rules
      - name: mysql.rules
        rules:
        - alert: TooManyErrorFromMysql
          expr: sum(irate(mysql_global_status_connection_errors_total[1m])) > 10
          labels:
            severity: critical
          annotations:
            description: MySQL is producing too many errors
            summary: TooManyErrorFromMysql
        - alert: TooManySlowQueriesFromMysql
          expr: increase(mysql_global_status_slow_queries[1m]) > 10
          labels:
            severity: critical
          annotations:
            description: MySQL logged {{ $value }} slow queries in the last minute.
            summary: TooManySlowQueriesFromMysql

Prometheus selects PrometheusRule resources through ruleSelector, which by default also matches the label prometheus: kube-prometheus. Both ruleSelector and serviceMonitorsSelector are configurable.

Create the resource from the yaml file with kubectl (note the namespace, monitoring):

    [root@k8s-master01 mysql-exporter]# kubectl create -f mysql-rule.yaml -n monitoring
    prometheusrule.monitoring.coreos.com/prometheus-rule-mysql created

The new PrometheusRule resource prometheus-rule-mysql is now listed:

    [root@k8s-master01 mysql-exporter]# kubectl get prometheusRule -n monitoring
    NAME                    AGE
    prometheus-k8s-rules    26h
    prometheus-rule-mysql   27s

Export the rules:

    [root@k8s-master01 mysql-exporter]# kubectl get prometheusRule prometheus-k8s-rules -n monitoring -o yaml > prometheus-k8s-rules.yaml
    [root@k8s-master01 mysql-exporter]# kubectl get prometheusRule prometheus-rule-mysql -n monitoring -o yaml > prometheus-rule-mysql.yaml

After about a minute, the mysql.rules group that was just added shows up in the Prometheus web UI.
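The same check can be made from the command line through the Prometheus HTTP API, which lists loaded rule groups at `/api/v1/rules`. The helper below is a sketch with a hypothetical name; it does a plain substring match on the compact JSON the API returns, so it would also match an individual rule that happens to share the group's name.

```shell
# Read the JSON returned by the Prometheus /api/v1/rules endpoint on stdin
# and succeed if something named "$1" (normally a rule group) is present.
# $1: rule group name, e.g. mysql.rules
rule_group_loaded() {
  grep -qF "\"name\":\"$1\""
}

# Usage with the Prometheus address used in this article:
#   curl -s http://192.168.56.10:30090/api/v1/rules | rule_group_loaded mysql.rules && echo loaded
```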

How to update the Alertmanager configuration dynamically

When the Operator deploys Alertmanager, it creates a StatefulSet object.

The alertmanager-main StatefulSet can be listed with:

    [root@k8s-master01 mysql-exporter]# kubectl get statefulset --all-namespaces
    NAMESPACE    NAME                READY   AGE
    monitoring   alertmanager-main   3/3     26h
    monitoring   prometheus-k8s      2/2     26h

Show the details of the alertmanager-main StatefulSet:

    [root@k8s-master01 mysql-exporter]# kubectl describe statefulset alertmanager-main -n monitoring
    Name:               alertmanager-main
    Namespace:          monitoring
    CreationTimestamp:  Tue, 14 Jul 2020 13:01:36 +0800
    Selector:           alertmanager=main,app=alertmanager
    Labels:             alertmanager=main
    Annotations:        <none>
    Replicas:           3 desired | 3 total
    Update Strategy:    RollingUpdate
    Pods Status:        3 Running / 0 Waiting / 0 Succeeded / 0 Failed
    Pod Template:
      Labels:           alertmanager=main
                        app=alertmanager
      Service Account:  alertmanager-main
      Containers:
       alertmanager:
        Image:       quay.io/prometheus/alertmanager:v0.21.0
        Ports:       9093/TCP, 9094/TCP, 9094/UDP
        Host Ports:  0/TCP, 0/TCP, 0/UDP
        Args:
          --config.file=/etc/alertmanager/config/alertmanager.yaml
          --storage.path=/alertmanager
          --data.retention=120h
          --cluster.listen-address=[$(POD_IP)]:9094
          --web.listen-address=:9093
          --web.route-prefix=/
          --cluster.peer=alertmanager-main-0.alertmanager-operated:9094
          --cluster.peer=alertmanager-main-1.alertmanager-operated:9094
          --cluster.peer=alertmanager-main-2.alertmanager-operated:9094
        Requests:
          memory:   200Mi
        Liveness:   http-get http://:web/-/healthy delay=0s timeout=3s period=10s #success=1 #failure=10
        Readiness:  http-get http://:web/-/ready delay=3s timeout=3s period=5s #success=1 #failure=10
        Environment:
          POD_IP:   (v1:status.podIP)
        Mounts:
          /alertmanager from alertmanager-main-db (rw)
          /etc/alertmanager/config from config-volume (rw)
       config-reloader:
        Image:      jimmidyson/configmap-reload:v0.3.0
        Port:       <none>
        Host Port:  <none>
        Args:
          -webhook-url=http://localhost:9093/-/reload
          -volume-dir=/etc/alertmanager/config
        Limits:
          cpu:        100m
          memory:     25Mi
        Environment:  <none>
        Mounts:
          /etc/alertmanager/config from config-volume (ro)
      Volumes:
       config-volume:
        Type:        Secret (a volume populated by a Secret)
        SecretName:  alertmanager-main
        Optional:    false
       alertmanager-main-db:
        Type:       EmptyDir (a temporary directory that shares a pod's lifetime)
        Medium:
        SizeLimit:  <unset>
    Volume Claims:  <none>
    Events:         <none>

The alertmanager-main StatefulSet mounts a Secret named alertmanager-main into the alertmanager container at /etc/alertmanager/config, where it appears as the file alertmanager.yaml.

Under Volumes: in the output above, the config-volume entry has Type: Secret, which means a Secret is mounted, and its SecretName is alertmanager-main.

List all Secrets with:

    [root@k8s-master01 mysql-exporter]# kubectl get secrets -n monitoring
    NAME                                   TYPE                                  DATA   AGE
    additional-configs                     Opaque                                1      19h
    alertmanager-main                      Opaque                                1      26h
    alertmanager-main-token-shc2g          kubernetes.io/service-account-token   3      26h
    default-token-f8l26                    kubernetes.io/service-account-token   3      26h
    etcd-certs                             Opaque                                3      21h
    grafana-datasources                    Opaque                                1      26h
    grafana-token-h8mqf                    kubernetes.io/service-account-token   3      26h
    kube-state-metrics-token-nnwt7         kubernetes.io/service-account-token   3      26h
    my-release-prometheus-mysql-exporter   Opaque                                1      74m
    node-exporter-token-7bjpm              kubernetes.io/service-account-token   3      26h
    prometheus-adapter-token-2glnq         kubernetes.io/service-account-token   3      26h
    prometheus-k8s                         Opaque                                1      26h
    prometheus-k8s-tls-assets              Opaque                                0      26h
    prometheus-k8s-token-c6dkd             kubernetes.io/service-account-token   3      26h
    prometheus-operator-token-5jm2s        kubernetes.io/service-account-token   3      26h

Show the details of the alertmanager-main Secret with:

    [root@k8s-master01 mysql-exporter]# kubectl describe secrets alertmanager-main -n monitoring
    Name:         alertmanager-main
    Namespace:    monitoring
    Labels:       <none>
    Annotations:  <none>

    Type:  Opaque

    Data
    ====
    alertmanager.yaml:  686 bytes

The Data section of the Secret holds a single key, alertmanager.yaml, whose value is 686 bytes long.

That value is exactly the content of /etc/alertmanager/config/alertmanager.yaml inside the alertmanager-main container: the StatefulSet mounts the Secret as a volume at /etc/alertmanager/config.

View the content of the alertmanager-main Secret:

    [root@k8s-master01 mysql-exporter]# kubectl edit secrets alertmanager-main -n monitoring
    apiVersion: v1
    data:
      alertmanager.yaml: Imdsb2JhbCI6CiAgInJlc29sdmVfdGltZW91dCI6ICI1bSIKImluaGliaXRfcnVsZXMiOgotICJlcXVhbCI6CiAgLSAibmFtZXNwYWNlIgogIC0gImFsZXJ0bmFtZSIKICAic291cmNlX21hdGNoIjoKICAgICJzZXZlcml0eSI6ICJjcml0aWNhbCIKICAidGFyZ2V0X21hdGNoX3JlIjoKICAgICJzZXZlcml0eSI6ICJ3YXJuaW5nfGluZm8iCi0gImVxdWFsIjoKICAtICJuYW1lc3BhY2UiCiAgLSAiYWxlcnRuYW1lIgogICJzb3VyY2VfbWF0Y2giOgogICAgInNldmVyaXR5IjogIndhcm5pbmciCiAgInRhcmdldF9tYXRjaF9yZSI6CiAgICAic2V2ZXJpdHkiOiAiaW5mbyIKInJlY2VpdmVycyI6Ci0gIm5hbWUiOiAiRGVmYXVsdCIKLSAibmFtZSI6ICJXYXRjaGRvZyIKLSAibmFtZSI6ICJDcml0aWNhbCIKInJvdXRlIjoKICAiZ3JvdXBfYnkiOgogIC0gIm5hbWVzcGFjZSIKICAiZ3JvdXBfaW50ZXJ2YWwiOiAiNW0iCiAgImdyb3VwX3dhaXQiOiAiMzBzIgogICJyZWNlaXZlciI6ICJEZWZhdWx0IgogICJyZXBlYXRfaW50ZXJ2YWwiOiAiMTJoIgogICJyb3V0ZXMiOgogIC0gIm1hdGNoIjoKICAgICAgImFsZXJ0bmFtZSI6ICJXYXRjaGRvZyIKICAgICJyZWNlaXZlciI6ICJXYXRjaGRvZyIKICAtICJtYXRjaCI6CiAgICAgICJzZXZlcml0eSI6ICJjcml0aWNhbCIKICAgICJyZWNlaXZlciI6ICJDcml0aWNhbCI=
    kind: Secret
    metadata:
      annotations:
        kubectl.kubernetes.io/last-applied-configuration: |
          {"apiVersion":"v1","data":{},"kind":"Secret","metadata":{"annotations":{},"name":"alertmanager-main","namespace":"monitoring"},"stringData":{"alertmanager.yaml":"\"global\":\n  \"resolve_timeout\": \"5m\"\n\"inhibit_rules\":\n- \"equal\":\n  - \"namespace\"\n  - \"alertname\"\n  \"source_match\":\n    \"severity\": \"critical\"\n  \"target_match_re\":\n    \"severity\": \"warning|info\"\n- \"equal\":\n  - \"namespace\"\n  - \"alertname\"\n  \"source_match\":\n    \"severity\": \"warning\"\n  \"target_match_re\":\n    \"severity\": \"info\"\n\"receivers\":\n- \"name\": \"Default\"\n- \"name\": \"Watchdog\"\n- \"name\": \"Critical\"\n\"route\":\n  \"group_by\":\n  - \"namespace\"\n  \"group_interval\": \"5m\"\n  \"group_wait\": \"30s\"\n  \"receiver\": \"Default\"\n  \"repeat_interval\": \"12h\"\n  \"routes\":\n  - \"match\":\n      \"alertname\": \"Watchdog\"\n    \"receiver\": \"Watchdog\"\n  - \"match\":\n      \"severity\": \"critical\"\n    \"receiver\": \"Critical\""},"type":"Opaque"}
      creationTimestamp: "2020-07-14T05:01:35Z"
      managedFields:
      - apiVersion: v1
        fieldsType: FieldsV1
        fieldsV1:
          f:data:
            .: {}
            f:alertmanager.yaml: {}
          f:metadata:
            f:annotations:
              .: {}
              f:kubectl.kubernetes.io/last-applied-configuration: {}
          f:type: {}
        manager: kubectl
        operation: Update
        time: "2020-07-14T05:01:35Z"
      name: alertmanager-main
      namespace: monitoring
      resourceVersion: "14842"
      selfLink: /api/v1/namespaces/monitoring/secrets/alertmanager-main
      uid: 84b6385a-ae5c-45f6-b845-5172e818f9b7
    type: Opaque

The value of the alertmanager.yaml key under data: is a base64-encoded string. Copy the string and decode it to see the configuration. Base64 is an encoding, not encryption, so any base64 decoder works.

Decoding method 1, an online tool: https://base64.supfree.net/

Decoding method 2, the command line:

    [root@k8s-master01 mysql-exporter]# echo Imdsb2JhbCI6CiAgInJlc29sdmVfdGltZW91dCI6ICI1bSIKImluaGliaXRfcnVsZXMiOgotICJlcXVhbCI6CiAgLSAibmFtZXNwYWNlIgogIC0gImFsZXJ0bmFtZSIKICAic291cmNlX21hdGNoIjoKICAgICJzZXZlcml0eSI6ICJjcml0aWNhbCIKICAidGFyZ2V0X21hdGNoX3JlIjoKICAgICJzZXZlcml0eSI6ICJ3YXJuaW5nfGluZm8iCi0gImVxdWFsIjoKICAtICJuYW1lc3BhY2UiCiAgLSAiYWxlcnRuYW1lIgogICJzb3VyY2VfbWF0Y2giOgogICAgInNldmVyaXR5IjogIndhcm5pbmciCiAgInRhcmdldF9tYXRjaF9yZSI6CiAgICAic2V2ZXJpdHkiOiAiaW5mbyIKInJlY2VpdmVycyI6Ci0gIm5hbWUiOiAiRGVmYXVsdCIKLSAibmFtZSI6ICJXYXRjaGRvZyIKLSAibmFtZSI6ICJDcml0aWNhbCIKInJvdXRlIjoKICAiZ3JvdXBfYnkiOgogIC0gIm5hbWVzcGFjZSIKICAiZ3JvdXBfaW50ZXJ2YWwiOiAiNW0iCiAgImdyb3VwX3dhaXQiOiAiMzBzIgogICJyZWNlaXZlciI6ICJEZWZhdWx0IgogICJyZXBlYXRfaW50ZXJ2YWwiOiAiMTJoIgogICJyb3V0ZXMiOgogIC0gIm1hdGNoIjoKICAgICAgImFsZXJ0bmFtZSI6ICJXYXRjaGRvZyIKICAgICJyZWNlaXZlciI6ICJXYXRjaGRvZyIKICAtICJtYXRjaCI6CiAgICAgICJzZXZlcml0eSI6ICJjcml0aWNhbCIKICAgICJyZWNlaXZlciI6ICJDcml0aWNhbCI= | base64 -d
    "global":
      "resolve_timeout": "5m"
    "inhibit_rules":
    - "equal":
      - "namespace"
      - "alertname"
      "source_match":
        "severity": "critical"
      "target_match_re":
        "severity": "warning|info"
    - "equal":
      - "namespace"
      - "alertname"
      "source_match":
        "severity": "warning"
      "target_match_re":
        "severity": "info"
    "receivers":
    - "name": "Default"
    - "name": "Watchdog"
    - "name": "Critical"
    "route":
      "group_by":
      - "namespace"
      "group_interval": "5m"
      "group_wait": "30s"
      "receiver": "Default"
      "repeat_interval": "12h"
      "routes":
      - "match":
          "alertname": "Watchdog"
        "receiver": "Watchdog"
      - "match":
          "severity": "critical"
        "receiver": "Critical"
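Copying the encoded string by hand can be skipped by letting kubectl extract the key and piping it straight into the decoder. A minimal sketch, assuming the Secret name and namespace used in this article; the function name is hypothetical, and the backslash in the jsonpath expression escapes the dot inside the key name.

```shell
# Print the decoded alertmanager.yaml stored in an Alertmanager config Secret.
# $1: Secret name (default alertmanager-main), $2: namespace (default monitoring)
show_alertmanager_config() {
  secret=${1:-alertmanager-main}
  ns=${2:-monitoring}
  kubectl get secret "$secret" -n "$ns" \
    -o jsonpath='{.data.alertmanager\.yaml}' | base64 -d
}

# Usage (requires access to the cluster):
#   show_alertmanager_config > alertmanager.yaml
```

Redirecting the output to a file gives a starting point for editing the configuration later.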

This is the configuration of alertmanager-main. It is mounted into the alertmanager-main container as /etc/alertmanager/config/alertmanager.yaml.

Open a shell in the alertmanager-main container and look at the file:

    [root@k8s-master01 mysql-exporter]# kubectl get pods -n monitoring
    NAME                                                    READY   STATUS    RESTARTS   AGE
    alertmanager-main-0                                     2/2     Running   4          27h
    alertmanager-main-1                                     2/2     Running   2          27h
    alertmanager-main-2                                     2/2     Running   4          27h
    grafana-668c4878fd-js4cb                                1/1     Running   2          27h
    kube-state-metrics-957fd6c75-r2b9v                      3/3     Running   3          27h
    my-release-prometheus-mysql-exporter-58ff67f687-22qpk   1/1     Running   0          91m
    node-exporter-btz5d                                     2/2     Running   4          27h
    node-exporter-k6f96                                     2/2     Running   0          27h
    node-exporter-lphjx                                     2/2     Running   2          27h
    prometheus-adapter-66b855f564-22vxf                     1/1     Running   1          27h
    prometheus-k8s-0                                        3/3     Running   7          22h
    prometheus-k8s-1                                        3/3     Running   4          22h
    prometheus-operator-5b96bb5d85-jdbrf                    2/2     Running   2          27h

    [root@k8s-master01 mysql-exporter]# kubectl exec -it alertmanager-main-0 -n monitoring -- /bin/sh
    Defaulting container name to alertmanager.
    Use 'kubectl describe pod/alertmanager-main-0 -n monitoring' to see all of the containers in this pod.
    /alertmanager $ cat /etc/alertmanager/config/alertmanager.yaml
    "global":
      "resolve_timeout": "5m"
    "inhibit_rules":
    - "equal":
      - "namespace"
      - "alertname"
      "source_match":
        "severity": "critical"
      "target_match_re":
        "severity": "warning|info"
    - "equal":
      - "namespace"
      - "alertname"
      "source_match":
        "severity": "warning"
      "target_match_re":
        "severity": "info"
    "receivers":
    - "name": "Default"
    - "name": "Watchdog"
    - "name": "Critical"
    "route":
      "group_by":
      - "namespace"
      "group_interval": "5m"
      "group_wait": "30s"
      "receiver": "Default"
      "repeat_interval": "12h"
      "routes":
      - "match":
          "alertname": "Watchdog"
        "receiver": "Watchdog"
      - "match":
          "severity": "critical"
        "receiver": "Critical"

The alertmanager-main pod has a second container, config-reloader, which watches the /etc/alertmanager/config directory. When a file in that directory changes, config-reloader sends a POST request to http://localhost:9093/-/reload, and Alertmanager reloads the configuration files under /etc/alertmanager/config. This is how the configuration is updated dynamically.
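The watch-and-reload mechanism can be illustrated with a small polling sketch. This is not how the config-reloader container is implemented; it only mimics the behaviour described above, and both function names are hypothetical. It fingerprints the files in a directory and sends the reload POST only when the fingerprint differs from the previous one.

```shell
# Print a fingerprint (checksum and size) of all files in a directory.
# $1: directory to fingerprint
config_fingerprint() {
  cat "$1"/* 2>/dev/null | cksum
}

# Compare the current fingerprint with the previous one; when it differs,
# POST to the reload URL. Always prints the current fingerprint so the
# caller can pass it to the next invocation.
# $1: directory, $2: previous fingerprint, $3: reload URL
reload_if_changed() {
  current=$(config_fingerprint "$1")
  if [ "$current" != "$2" ]; then
    curl -s -X POST "$3" >/dev/null
  fi
  printf '%s\n' "$current"
}

# Usage as a loop (paths and URL as in the StatefulSet shown earlier):
#   prev=""
#   while true; do
#     prev=$(reload_if_changed /etc/alertmanager/config "$prev" http://localhost:9093/-/reload)
#     sleep 10
#   done
```

The point of the design is that Alertmanager itself never needs restarting: updating the mounted Secret changes the files, and the sidecar turns that change into a reload request.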

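How to do it

With the mechanism understood, the task is clear: to reconfigure Alertmanager dynamically, update the value of the alertmanager.yaml key in the data section of the Secret named alertmanager-main (your Secret may have a different name, but it follows the alertmanager-{*} pattern).

There are two ways to update the Secret:

One is kubectl edit secret,

the other is kubectl patch secret.

Both require the configuration as a base64-encoded string, which is produced here with the Linux base64 command.

First edit the decoded configuration shown above and save it to a file (named file here). Then run base64 file > test.txt to encode it and write the result to test.txt, and copy the encoded string from test.txt. Note that GNU base64 wraps its output every 76 characters by default; base64 -w 0 file > test.txt keeps the result on a single line, which is the form the Secret needs.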

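To update the Secret the first way

Run kubectl edit secrets -n monitoring alertmanager-main

Replace the value of alertmanager.yaml under data with the string just copied,

then save and exit.

To update the Secret the second way

Run the following command to complete the update, putting the base64-encoded configuration string in place of the placeholder:

    kubectl patch secret alertmanager-main -n monitoring -p '{"data":{"alertmanager.yaml":"<base64-encoded configuration string>"}}'

The -p argument takes a JSON string; make sure the encoded string copied earlier goes inside the quotes and that all braces are closed.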
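Encoding and building the patch body by hand is error-prone, so the two steps can be combined. The sketch below uses hypothetical function names and assumes the edited configuration is in a local file; stripping newlines with `tr` keeps the encoded string on one line regardless of how the local base64 wraps its output.

```shell
# Base64-encode a file as a single line (no wrapping), which is the form the
# Secret's data field expects.
# $1: path to the file
encode_config() {
  base64 < "$1" | tr -d '\n'
}

# Print the JSON patch body that sets the alertmanager.yaml key.
# $1: path to the edited alertmanager.yaml
secret_patch() {
  printf '{"data":{"alertmanager.yaml":"%s"}}\n' "$(encode_config "$1")"
}

# Usage (requires access to the cluster; Secret name as in this article):
#   kubectl patch secret alertmanager-main -n monitoring -p "$(secret_patch alertmanager.yaml)"
```

Because the base64 alphabet contains no quotes or backslashes, the encoded string can be embedded in the JSON without further escaping.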

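After the update, open the Alertmanager UI at http://192.168.56.10:30093/#/status to confirm that the new configuration is in effect.

With the steps above, monitoring targets are discovered dynamically, alerting rules are added dynamically, and the alerting configuration is updated dynamically, so essentially all configuration is now dynamic.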

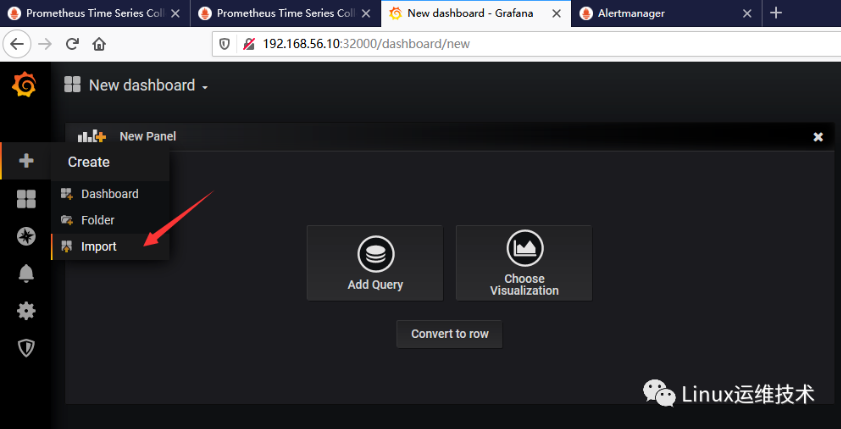

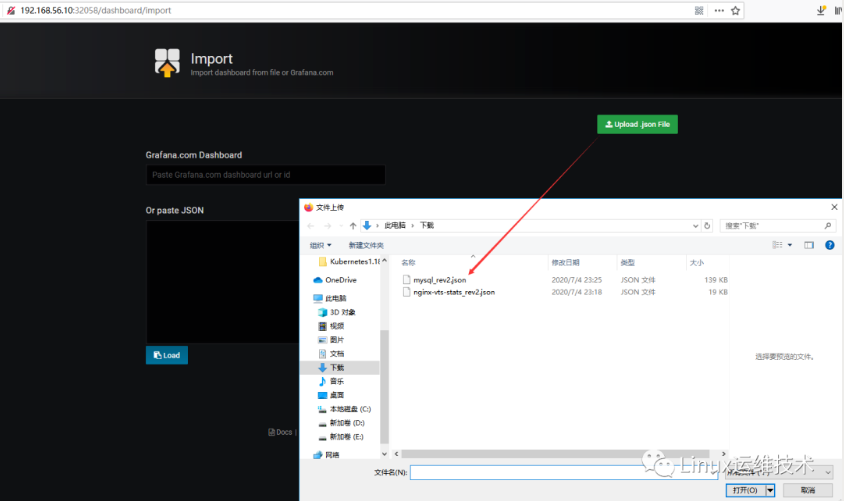

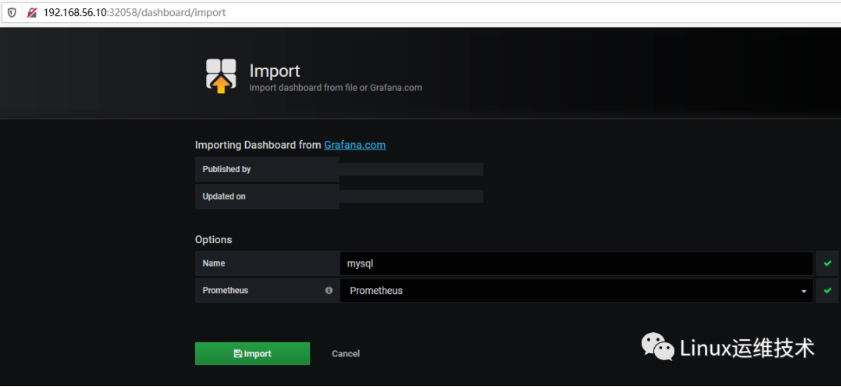

Import the mysql_rev2.json dashboard in Grafana

Open http://192.168.56.10:32000 in a browser

Method 1: import mysql_rev2.json

Download mysql_rev2.json

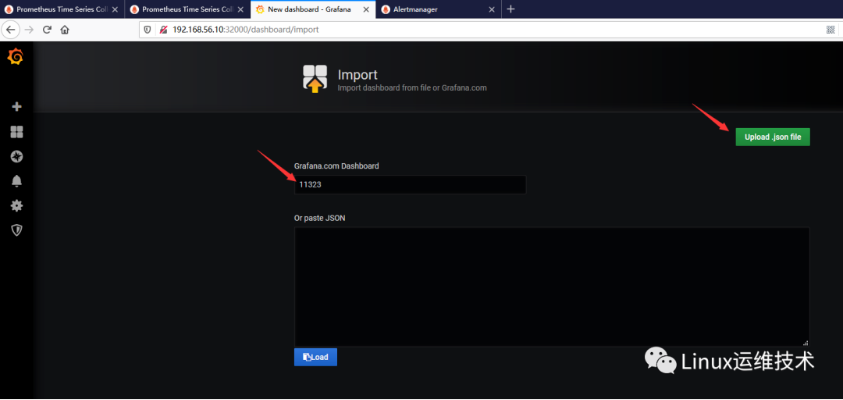

https://grafana.com/grafana/dashboards/11323

Method 2: enter the ID of the dashboard to import

Import the MySQL_Overview.json dashboard in Grafana

Download MySQL_Overview.json

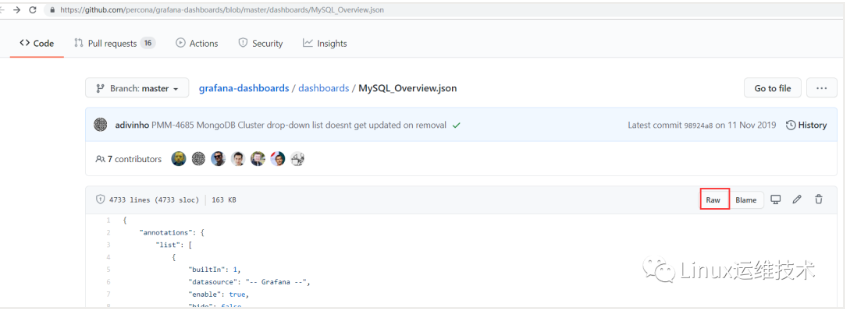

https://github.com/percona/grafana-dashboards/blob/master/dashboards/MySQL_Overview.json

https://raw.githubusercontent.com/percona/grafana-dashboards/master/dashboards/MySQL_Overview.json

Copy the JSON file above and import it into Grafana.
