I. Overview

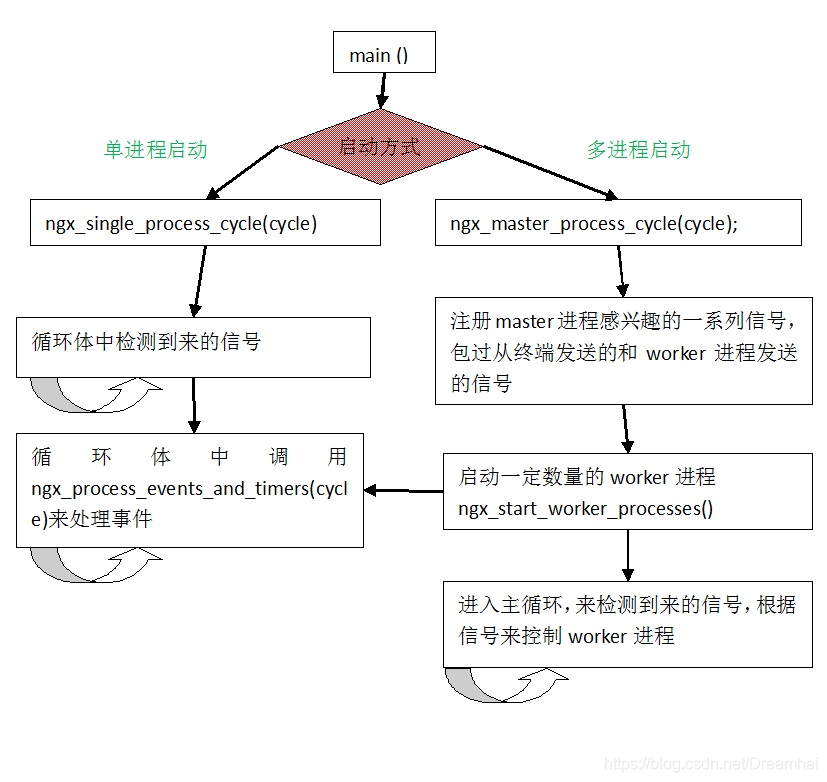

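nginx has two kinds of processes: the master process, which acts as a manager, and the worker processes, which do the actual work. It can be started in one of two ways:

- Single-process mode: the system runs only one process, which plays the role of both master and worker.

- Multi-process mode: the system runs exactly one master process and at least one worker process.

The master process handles global initialization and manages the workers; event handling takes place in the workers.

First, a brief look at nginx's startup sequence, shown in the figure above.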

II. Implementation

Only the multi-process mode is analyzed here.

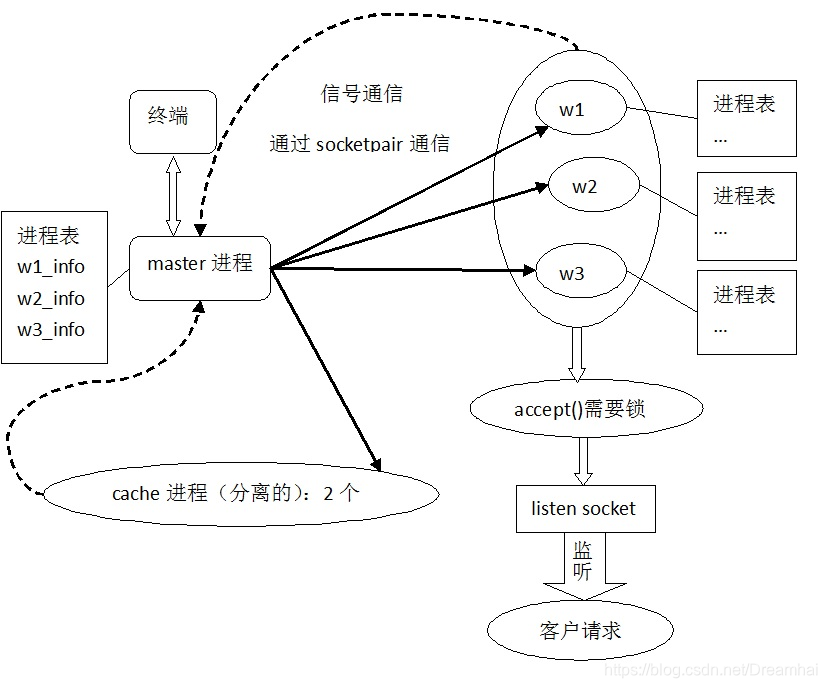

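The worker processes are started from ngx_master_process_cycle (src/os/unix/ngx_process_cycle.c), which creates as many child processes as the worker_processes directive in the configuration file specifies, giving one master process and several worker processes. The processes communicate with one another and with the outside world.

In the figure above, w1 denotes worker process 1, and so on. Dashed lines represent signal-based communication, and solid lines represent socketpair communication.

nginx uses a prefork process model: the number of worker child processes created in advance is set in the configuration file and defaults to 1.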
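To make the prefork idea concrete, here is a minimal sketch in plain POSIX C. It is not nginx code: `spawn_workers`, `reap_all`, `idle_worker`, and the `worker_fn` type are hypothetical names, and a real master keeps running and supervising instead of simply waiting for all children.

```c
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical worker entry point: returns the worker's exit code. */
typedef int (*worker_fn)(int index);

/* Trivial worker used for demonstration: does nothing and succeeds. */
int idle_worker(int index)
{
    (void) index;
    return 0;
}

/* Fork `n` workers up front; each child runs `fn` and exits with its result.
 * Returns the number of workers started, or -1 if fork() fails. */
int spawn_workers(int n, worker_fn fn, pid_t *pids)
{
    for (int i = 0; i < n; i++) {
        pid_t pid = fork();
        if (pid == -1) {
            return -1;
        }
        if (pid == 0) {
            _exit(fn(i));       /* child: do the work, never return */
        }
        pids[i] = pid;          /* parent: remember the child */
    }
    return n;
}

/* Wait for every worker in `pids`; returns how many exited with code 0. */
int reap_all(const pid_t *pids, int n)
{
    int ok = 0;
    for (int i = 0; i < n; i++) {
        int status;
        if (waitpid(pids[i], &status, 0) == pids[i]
            && WIFEXITED(status) && WEXITSTATUS(status) == 0)
        {
            ok++;
        }
    }
    return ok;
}
```

Calling `spawn_workers(3, idle_worker, pids)` followed by `reap_all(pids, 3)` starts three children and collects them; the worker count plays the role that worker_processes plays in nginx.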

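The master process creates the listening sockets and then forks the children, after which the workers listen for client connections. Each worker tries on its own to accept() connections on the listening sockets. Whether accept is guarded by a lock is configurable (the accept_mutex directive). In the version analyzed here the lock is on by default; releases from 1.11.3 onward default to off. If the platform supports atomic integer operations, the lock is an atomic variable in shared memory; otherwise a file lock is used.

Without a lock, every worker waits on the same listening sockets, so a single incoming connection wakes all of them even though only one can accept it. This is the thundering herd problem. With the lock, only the worker that holds it waits for new connections on the listening sockets; the others fail to acquire the lock and do not accept until a later attempt succeeds, which avoids the thundering herd.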
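The locking idea can be sketched with C11 atomics. This is an illustration only, not nginx's implementation: `accept_trylock` and `accept_unlock` are hypothetical names, and for several processes to share the lock word it would have to live in shared memory (for example a region mapped with `mmap` and `MAP_SHARED` before forking).

```c
#include <stdatomic.h>
#include <stdbool.h>
#include <sys/types.h>

/* Lock word convention (an assumption of this sketch):
 * 0 means free, any other value is the pid of the owner. */

/* Non-blocking attempt: succeeds only if the lock is currently free. */
bool accept_trylock(atomic_long *lock, pid_t pid)
{
    long expected = 0;
    return atomic_compare_exchange_strong(lock, &expected, (long) pid);
}

/* Release the lock, but only if `pid` is the current owner. */
bool accept_unlock(atomic_long *lock, pid_t pid)
{
    long expected = (long) pid;
    return atomic_compare_exchange_strong(lock, &expected, 0L);
}
```

A worker that wins `accept_trylock` would add the listening sockets to its event set for that round; a worker that loses simply carries on with its existing connections and tries again later.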

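The master process sends commands to the worker child processes over socketpairs, and a terminal can send commands to the master as signals. A child process reaches the master through signals (the SIGCHLD raised when it exits), and the workers communicate with one another over Unix sockets.

When the master receives SIGCHLD (the signal raised when a worker exits), it checks each worker process in turn and restarts any worker that exited abnormally. nginx also has two processes for managing the cache, the cache manager process and the cache loader process. They serve the file cache exclusively and are managed by the master in the same way as workers; they are left out of the analysis that follows. The implementation details are examined below from the code's point of view.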
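The reap-and-restart decision can be sketched as follows. The policy here follows the description above (restart on abnormal exit) and is an assumption of the sketch; `needs_respawn` and `reap_exited_children` are hypothetical names, and nginx's own rules involve per-process flags that are not modelled.

```c
#include <stdbool.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Assumed policy: restart a child unless it exited cleanly with code 0. */
bool needs_respawn(int status)
{
    if (WIFEXITED(status)) {
        return WEXITSTATUS(status) != 0;
    }
    return WIFSIGNALED(status);   /* killed by a signal: abnormal */
}

/* Reap every child that has already exited, without blocking.
 * Returns how many of the reaped children should be restarted. */
int reap_exited_children(void)
{
    int   status, respawn = 0;
    pid_t pid;

    while ((pid = waitpid(-1, &status, WNOHANG)) > 0) {
        if (needs_respawn(status)) {
            respawn++;
        }
    }
    return respawn;
}
```

The loop uses `WNOHANG` because one SIGCHLD may stand for several exited children; it keeps reaping until `waitpid` reports that nothing more is ready.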

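When the master starts, it sets up some important global data, the most important being the process table ngx_processes. Each time the master creates a worker, it stores a filled-in ngx_process_t structure in ngx_processes. The newly created process is stored at index ngx_process_slot, and ngx_last_process is the index just past the last occupied entry of the table. ngx_process_t is nginx's abstraction of a process: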

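    typedef struct {
        ngx_pid_t           pid;          /* process id; -1 marks a free slot */
        int                 status;       /* exit status, filled in when reaped */
        ngx_socket_t        channel[2];   /* socketpair: master writes [0], worker reads [1] */

        ngx_spawn_proc_pt   proc;         /* function the child runs after fork() */
        void               *data;         /* argument passed to proc */
        char               *name;         /* process name used in log messages */

        unsigned            respawn:1;    /* restart the process when it exits */
        unsigned            just_spawn:1; /* set for the JUST_* spawn types */
        unsigned            detached:1;   /* started with NGX_PROCESS_DETACHED */
        unsigned            exiting:1;    /* shutdown has been requested */
        unsigned            exited:1;     /* the process has exited */
    } ngx_process_t;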

(src/os/unix/ngx_process.h)
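The slot bookkeeping described above (reuse the first free slot, otherwise append at the end) can be sketched independently of nginx. The names `proc_table_t`, `table_add`, and `table_remove` and the table size are hypothetical; only the pid field is modelled.

```c
#include <sys/types.h>

#define MAX_PROCESSES 8          /* illustrative; nginx uses NGX_MAX_PROCESSES */

typedef struct {
    pid_t pid;                   /* -1 marks a free slot */
} proc_entry_t;

typedef struct {
    proc_entry_t entries[MAX_PROCESSES];
    int          last;           /* one past the highest slot ever used */
} proc_table_t;

void table_init(proc_table_t *t)
{
    t->last = 0;
    for (int i = 0; i < MAX_PROCESSES; i++) {
        t->entries[i].pid = -1;
    }
}

/* Store `pid` in the first free slot below `last`, or append at `last`.
 * Returns the slot index, or -1 if the table is full. */
int table_add(proc_table_t *t, pid_t pid)
{
    int s;

    for (s = 0; s < t->last; s++) {
        if (t->entries[s].pid == -1) {
            break;
        }
    }
    if (s == MAX_PROCESSES) {
        return -1;
    }
    t->entries[s].pid = pid;
    if (s == t->last) {
        t->last++;
    }
    return s;
}

/* Mark a slot as free again, e.g. after the process has been reaped. */
void table_remove(proc_table_t *t, int slot)
{
    t->entries[slot].pid = -1;
}
```

Adding three processes, removing the middle one, and adding a fourth places the newcomer in the freed slot 1 while `last` stays at 3, which mirrors how ngx_process_slot and ngx_last_process relate.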

The master sends commands to a worker through the pair of sockets created by socketpair(); what travels over them is an ngx_channel_t structure:

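    typedef struct {
        ngx_uint_t  command;   /* what the receiver should do */
        ngx_pid_t   pid;       /* pid of the process the command refers to */
        ngx_int_t   slot;      /* that process's index in ngx_processes */
        ngx_fd_t    fd;        /* that process's channel[0] descriptor */
    } ngx_channel_t;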

(src/os/unix/ngx_channel.h)

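command is the command being sent. There are five of them: NGX_CMD_OPEN_CHANNEL, NGX_CMD_CLOSE_CHANNEL, NGX_CMD_QUIT, NGX_CMD_TERMINATE, and NGX_CMD_REOPEN.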
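One detail about the fd field is worth illustrating: a descriptor number is only meaningful inside the process that owns it, so a descriptor that should be usable by the receiver has to travel as SCM_RIGHTS ancillary data on the Unix socket. The sketch below shows that technique with plain `sendmsg`/`recvmsg`. The `chan_msg_t` layout and the `chan_send`/`chan_recv` names are hypothetical and only loosely modelled on ngx_channel_t.

```c
#include <string.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <sys/uio.h>

/* Hypothetical message layout, loosely modelled on ngx_channel_t. */
typedef struct {
    unsigned command;
    pid_t    pid;
    int      slot;
    int      fd;        /* descriptor to pass, or -1 for none */
} chan_msg_t;

/* Send `msg` over Unix socket `sock`; if msg->fd >= 0 the descriptor itself
 * travels as SCM_RIGHTS ancillary data. Returns 0 on success, -1 on error. */
int chan_send(int sock, const chan_msg_t *msg)
{
    union { struct cmsghdr hdr; char buf[CMSG_SPACE(sizeof(int))]; } ctl;
    struct iovec  iov = { (void *) msg, sizeof(*msg) };
    struct msghdr mh;

    memset(&mh, 0, sizeof(mh));
    mh.msg_iov = &iov;
    mh.msg_iovlen = 1;

    if (msg->fd >= 0) {
        memset(&ctl, 0, sizeof(ctl));
        mh.msg_control = ctl.buf;
        mh.msg_controllen = sizeof(ctl.buf);

        struct cmsghdr *cm = CMSG_FIRSTHDR(&mh);
        cm->cmsg_level = SOL_SOCKET;
        cm->cmsg_type = SCM_RIGHTS;
        cm->cmsg_len = CMSG_LEN(sizeof(int));
        memcpy(CMSG_DATA(cm), &msg->fd, sizeof(int));
    }
    return sendmsg(sock, &mh, 0) == (ssize_t) sizeof(*msg) ? 0 : -1;
}

/* Receive one message; msg->fd is replaced by the descriptor number that is
 * valid in the receiving process, or -1 if none was attached. */
int chan_recv(int sock, chan_msg_t *msg)
{
    union { struct cmsghdr hdr; char buf[CMSG_SPACE(sizeof(int))]; } ctl;
    struct iovec  iov = { msg, sizeof(*msg) };
    struct msghdr mh;

    memset(&mh, 0, sizeof(mh));
    mh.msg_iov = &iov;
    mh.msg_iovlen = 1;
    mh.msg_control = ctl.buf;
    mh.msg_controllen = sizeof(ctl.buf);

    if (recvmsg(sock, &mh, 0) != (ssize_t) sizeof(*msg)) {
        return -1;
    }
    msg->fd = -1;

    struct cmsghdr *cm = CMSG_FIRSTHDR(&mh);
    if (cm != NULL && cm->cmsg_level == SOL_SOCKET
        && cm->cmsg_type == SCM_RIGHTS)
    {
        memcpy(&msg->fd, CMSG_DATA(cm), sizeof(int));
    }
    return 0;
}
```

The receiver ends up with a new descriptor that refers to the same open file as the sender's, which is what lets one worker later write to another worker's channel.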

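1. First, the master process code (in ngx_process_cycle.c):

main() first performs a series of initialization steps and calls each module's initialization code (creating the listening sockets, for example). In multi-process mode it then calls ngx_master_process_cycle. cycle is a global structure that holds the information the system needs while running; it can be ignored for now when analyzing the relationships between processes.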

    void ngx_master_process_cycle(ngx_cycle_t *cycle)
    {
        /*
         * Abridged: the variable declarations, the signal-mask setup and the
         * initial call to ngx_start_worker_processes() are omitted.
         * The signals added to the blocked set, and later waited for in
         * sigsuspend(), are:
         *
         *   SIGCHLD, SIGALRM, SIGIO, SIGINT,
         *   NGX_RECONFIGURE_SIGNAL (SIGHUP),
         *   NGX_REOPEN_SIGNAL (SIGUSR1),
         *   NGX_NOACCEPT_SIGNAL (SIGWINCH),
         *   NGX_TERMINATE_SIGNAL (SIGTERM),
         *   NGX_SHUTDOWN_SIGNAL (SIGQUIT),
         *   NGX_CHANGEBIN_SIGNAL (SIGUSR2)
         */

        ngx_new_binary = 0;
        delay = 0;
        live = 1;

        for ( ;; ) {
            if (delay) {
                delay *= 2;

                ngx_log_debug1(NGX_LOG_DEBUG_EVENT, cycle->log, 0,
                               "temination cycle: %d", delay);

                itv.it_interval.tv_sec = 0;
                itv.it_interval.tv_usec = 0;
                itv.it_value.tv_sec = delay / 1000;
                itv.it_value.tv_usec = (delay % 1000 ) * 1000;

                if (setitimer(ITIMER_REAL, &itv, NULL) == -1) {
                    ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                                  "setitimer() failed");
                }
            }

            ngx_log_debug0(NGX_LOG_DEBUG_EVENT, cycle->log, 0, "sigsuspend");

            /* sleep until one of the signals above arrives */
            sigsuspend(&set);

            ngx_time_update(0, 0);

            ngx_log_debug0(NGX_LOG_DEBUG_EVENT, cycle->log, 0, "wake up");

            /* a child has exited: reap it and restart it if required */
            if (ngx_reap) {
                ngx_reap = 0;
                ngx_log_debug0(NGX_LOG_DEBUG_EVENT, cycle->log, 0, "reap children");

                live = ngx_reap_children(cycle);
            }

            if (!live && (ngx_terminate || ngx_quit)) {
                ngx_master_process_exit(cycle);
            }

            /* fast shutdown: resend with a doubling delay, then SIGKILL */
            if (ngx_terminate) {
                if (delay == 0) {
                    delay = 50;
                }

                if (delay > 1000) {
                    ngx_signal_worker_processes(cycle, SIGKILL);
                } else {
                    ngx_signal_worker_processes(cycle,
                                           ngx_signal_value(NGX_TERMINATE_SIGNAL));
                }

                continue;
            }

            /* graceful shutdown: tell workers to quit, close listening sockets */
            if (ngx_quit) {
                ngx_signal_worker_processes(cycle,
                                            ngx_signal_value(NGX_SHUTDOWN_SIGNAL));

                ls = cycle->listening.elts;
                for (n = 0; n < cycle->listening.nelts; n++) {
                    if (ngx_close_socket(ls[n].fd) == -1) {
                        ngx_log_error(NGX_LOG_EMERG, cycle->log, ngx_socket_errno,
                                      ngx_close_socket_n " %V failed",
                                      &ls[n].addr_text);
                    }
                }
                cycle->listening.nelts = 0;

                continue;
            }

            /* reload the configuration: start new workers, retire old ones */
            if (ngx_reconfigure) {
                ngx_reconfigure = 0;

                if (ngx_new_binary) {
                    ngx_start_worker_processes(cycle, ccf->worker_processes,
                                               NGX_PROCESS_RESPAWN);
                    ngx_start_cache_manager_processes(cycle, 0);
                    ngx_noaccepting = 0;

                    continue;
                }

                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "reconfiguring");

                cycle = ngx_init_cycle(cycle);
                if (cycle == NULL) {
                    cycle = (ngx_cycle_t *) ngx_cycle;
                    continue;
                }

                ngx_cycle = cycle;
                ccf = (ngx_core_conf_t *) ngx_get_conf(cycle->conf_ctx,
                                                       ngx_core_module);
                ngx_start_worker_processes(cycle, ccf->worker_processes,
                                           NGX_PROCESS_JUST_RESPAWN);
                ngx_start_cache_manager_processes(cycle, 1);
                live = 1;
                ngx_signal_worker_processes(cycle,
                                            ngx_signal_value(NGX_SHUTDOWN_SIGNAL));
            }

            /* restart the workers */
            if (ngx_restart) {
                ngx_restart = 0;
                ngx_start_worker_processes(cycle, ccf->worker_processes,
                                           NGX_PROCESS_RESPAWN);
                ngx_start_cache_manager_processes(cycle, 0);
                live = 1;
            }

            /* reopen the log files in the master and in every worker */
            if (ngx_reopen) {
                ngx_reopen = 0;
                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "reopening logs");
                ngx_reopen_files(cycle, ccf->user);
                ngx_signal_worker_processes(cycle,
                                            ngx_signal_value(NGX_REOPEN_SIGNAL));
            }

            /* start a new binary */
            if (ngx_change_binary) {
                ngx_change_binary = 0;
                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "changing binary");
                ngx_new_binary = ngx_exec_new_binary(cycle, ngx_argv);
            }

            /* stop accepting: shut the workers down gracefully */
            if (ngx_noaccept) {
                ngx_noaccept = 0;
                ngx_noaccepting = 1;
                ngx_signal_worker_processes(cycle,
                                            ngx_signal_value(NGX_SHUTDOWN_SIGNAL));
            }
        }
    }

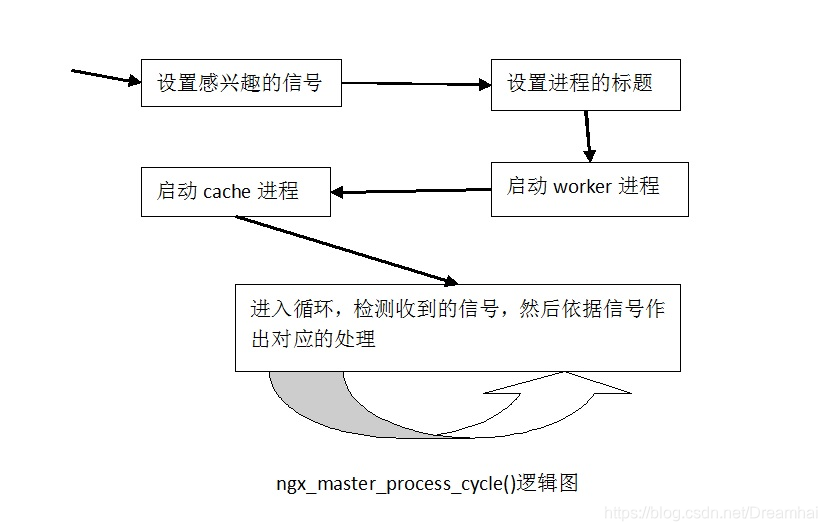

As the code shows, the logic of the master process is very clear; the figure above summarizes it.

2. Next is the code that starts the worker processes, ngx_start_worker_processes(). Because socketpair communication is used, it also includes some code that sets up the sockets:

    static void ngx_start_worker_processes(ngx_cycle_t *cycle, ngx_int_t n, ngx_int_t type)
    {
        ngx_int_t      i;
        ngx_channel_t  ch;

        ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "start worker processes");

        ch.command = NGX_CMD_OPEN_CHANNEL;

        for (i = 0; i < n; i++) {

            cpu_affinity = ngx_get_cpu_affinity(i);

            ngx_spawn_process(cycle, ngx_worker_process_cycle, NULL,
                              "worker process", type);

            ch.pid = ngx_processes[ngx_process_slot].pid;
            ch.slot = ngx_process_slot;
            ch.fd = ngx_processes[ngx_process_slot].channel[0];

            ngx_pass_open_channel(cycle, &ch);
        }
    }

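The loop body calls ngx_spawn_process to create each worker process; that function is described later. After every new worker is created, its information has to be broadcast to all the workers created before it.

ngx_pass_open_channel() loops over the process table and writes ch to the channel[0] socket of every other worker. On receiving it, a worker adds the information in ch to its own process table, so that each worker's process table stays consistent with the master's. Later code, run while a child process is being created, sets the ngx_socket_t fields (channel) of the respective process table entries.

3. After creating a new process, the function in step 2 calls ngx_pass_open_channel(cycle, &ch) to broadcast ch to the other processes. Its implementation is as follows: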

    static void ngx_pass_open_channel(ngx_cycle_t *cycle, ngx_channel_t *ch)
    {
        ngx_int_t  i;

        for (i = 0; i < ngx_last_process; i++) {

            /* skip the new process itself, free slots and closed channels */
            if (i == ngx_process_slot
                || ngx_processes[i].pid == -1
                || ngx_processes[i].channel[0] == -1)
            {
                continue;
            }

            ngx_log_debug6(NGX_LOG_DEBUG_CORE, cycle->log, 0,
                           "pass channel s:%d pid:%P fd:%d to s:%i pid:%P fd:%d",
                           ch->slot, ch->pid, ch->fd,
                           i, ngx_processes[i].pid,
                           ngx_processes[i].channel[0]);

            ngx_write_channel(ngx_processes[i].channel[0],
                              ch, sizeof(ngx_channel_t), cycle->log);
        }
    }

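As the code shows, the function sends the new process's information to every worker, other than the new one, that is alive and has an open channel; each recipient then adds it to its own process table.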

4. Next is the function ngx_pid_t ngx_spawn_process(ngx_cycle_t *cycle, ngx_spawn_proc_pt proc, void *data, char *name, ngx_int_t respawn):

    ngx_pid_t ngx_spawn_process(ngx_cycle_t *cycle, ngx_spawn_proc_pt proc, void *data,
        char *name, ngx_int_t respawn)
    {
        u_long     on;
        ngx_pid_t  pid;
        ngx_int_t  s;

        if (respawn >= 0) {
            /* a non-negative value names the slot to reuse */
            s = respawn;

        } else {
            /* otherwise take the first free slot */
            for (s = 0; s < ngx_last_process; s++) {
                if (ngx_processes[s].pid == -1) {
                    break;
                }
            }

            if (s == NGX_MAX_PROCESSES) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, 0,
                              "no more than %d processes can be spawned",
                              NGX_MAX_PROCESSES);
                return NGX_INVALID_PID;
            }
        }

        if (respawn != NGX_PROCESS_DETACHED) {

            /* create the channel between the master and this child */
            if (socketpair(AF_UNIX, SOCK_STREAM, 0, ngx_processes[s].channel) == -1)
            {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "socketpair() failed while spawning \"%s\"", name);
                return NGX_INVALID_PID;
            }

            ngx_log_debug2(NGX_LOG_DEBUG_CORE, cycle->log, 0,
                           "channel %d:%d",
                           ngx_processes[s].channel[0],
                           ngx_processes[s].channel[1]);

            /* both ends are non-blocking */
            if (ngx_nonblocking(ngx_processes[s].channel[0]) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              ngx_nonblocking_n " failed while spawning \"%s\"",
                              name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            if (ngx_nonblocking(ngx_processes[s].channel[1]) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              ngx_nonblocking_n " failed while spawning \"%s\"",
                              name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            /* signal-driven I/O on the master's end, owned by this process */
            on = 1;
            if (ioctl(ngx_processes[s].channel[0], FIOASYNC, &on) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "ioctl(FIOASYNC) failed while spawning \"%s\"", name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            if (fcntl(ngx_processes[s].channel[0], F_SETOWN, ngx_pid) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "fcntl(F_SETOWN) failed while spawning \"%s\"", name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            /* close both ends automatically on exec() */
            if (fcntl(ngx_processes[s].channel[0], F_SETFD, FD_CLOEXEC) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "fcntl(FD_CLOEXEC) failed while spawning \"%s\"",
                              name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            if (fcntl(ngx_processes[s].channel[1], F_SETFD, FD_CLOEXEC) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "fcntl(FD_CLOEXEC) failed while spawning \"%s\"",
                              name);
                ngx_close_channel(ngx_processes[s].channel, cycle->log);
                return NGX_INVALID_PID;
            }

            /* the child will read commands from this end */
            ngx_channel = ngx_processes[s].channel[1];

        } else {
            ngx_processes[s].channel[0] = -1;
            ngx_processes[s].channel[1] = -1;
        }

        ngx_process_slot = s;

        pid = fork();

        switch (pid) {

        case -1:
            ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                          "fork() failed while spawning \"%s\"", name);
            ngx_close_channel(ngx_processes[s].channel, cycle->log);
            return NGX_INVALID_PID;

        case 0:
            /* child: run the process function */
            ngx_pid = ngx_getpid();
            proc(cycle, data);
            break;

        default:
            break;
        }

        ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "start %s %P", name, pid);

        ngx_processes[s].pid = pid;
        ngx_processes[s].exited = 0;

        if (respawn >= 0) {
            return pid;
        }

        ngx_processes[s].proc = proc;
        ngx_processes[s].data = data;
        ngx_processes[s].name = name;
        ngx_processes[s].exiting = 0;

        switch (respawn) {

        case NGX_PROCESS_NORESPAWN:
            ngx_processes[s].respawn = 0;
            ngx_processes[s].just_spawn = 0;
            ngx_processes[s].detached = 0;
            break;

        case NGX_PROCESS_JUST_SPAWN:
            ngx_processes[s].respawn = 0;
            ngx_processes[s].just_spawn = 1;
            ngx_processes[s].detached = 0;
            break;

        case NGX_PROCESS_RESPAWN:
            ngx_processes[s].respawn = 1;
            ngx_processes[s].just_spawn = 0;
            ngx_processes[s].detached = 0;
            break;

        case NGX_PROCESS_JUST_RESPAWN:
            ngx_processes[s].respawn = 1;
            ngx_processes[s].just_spawn = 1;
            ngx_processes[s].detached = 0;
            break;

        case NGX_PROCESS_DETACHED:
            ngx_processes[s].respawn = 0;
            ngx_processes[s].just_spawn = 0;
            ngx_processes[s].detached = 1;
            break;
        }

        if (s == ngx_last_process) {
            ngx_last_process++;
        }

        return pid;
    }

5. Next is the function that each worker process runs: static void ngx_worker_process_cycle(ngx_cycle_t *cycle, void *data).

    static void ngx_worker_process_cycle(ngx_cycle_t *cycle, void *data)
    {
        ngx_uint_t         i;
        ngx_connection_t  *c;

        ngx_process = NGX_PROCESS_WORKER;

        ngx_worker_process_init(cycle, 1);

        ngx_setproctitle("worker process");

    #if (NGX_THREADS)
        {
        ngx_int_t         n;
        ngx_err_t         err;
        ngx_core_conf_t  *ccf;

        ccf = (ngx_core_conf_t *) ngx_get_conf(cycle->conf_ctx, ngx_core_module);

        if (ngx_threads_n) {
            if (ngx_init_threads(ngx_threads_n, ccf->thread_stack_size, cycle)
                == NGX_ERROR)
            {
                exit(2);
            }

            err = ngx_thread_key_create(&ngx_core_tls_key);
            if (err != 0) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, err,
                              ngx_thread_key_create_n " failed");
                exit(2);
            }

            for (n = 0; n < ngx_threads_n; n++) {

                ngx_threads[n].cv = ngx_cond_init(cycle->log);

                if (ngx_threads[n].cv == NULL) {
                    exit(2);
                }

                if (ngx_create_thread((ngx_tid_t *) &ngx_threads[n].tid,
                                      ngx_worker_thread_cycle,
                                      (void *) &ngx_threads[n], cycle->log)
                    != 0)
                {
                    exit(2);
                }
            }
        }
        }
    #endif

        for ( ;; ) {

            /* graceful shutdown in progress: close idle connections and
             * exit once no timers remain */
            if (ngx_exiting) {

                c = cycle->connections;

                for (i = 0; i < cycle->connection_n; i++) {
                    if (c[i].fd != -1 && c[i].idle) {
                        c[i].close = 1;
                        c[i].read->handler(c[i].read);
                    }
                }

                if (ngx_event_timer_rbtree.root == ngx_event_timer_rbtree.sentinel)
                {
                    ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "exiting");

                    ngx_worker_process_exit(cycle);
                }
            }

            ngx_log_debug0(NGX_LOG_DEBUG_EVENT, cycle->log, 0, "worker cycle");

            /* wait for and handle network events and timers */
            ngx_process_events_and_timers(cycle);

            /* fast shutdown */
            if (ngx_terminate) {
                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "exiting");

                ngx_worker_process_exit(cycle);
            }

            /* graceful shutdown: stop listening, finish existing work */
            if (ngx_quit) {
                ngx_quit = 0;
                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0,
                              "gracefully shutting down");
                ngx_setproctitle("worker process is shutting down");

                if (!ngx_exiting) {
                    ngx_close_listening_sockets(cycle);
                    ngx_exiting = 1;
                }
            }

            /* reopen the log files */
            if (ngx_reopen) {
                ngx_reopen = 0;
                ngx_log_error(NGX_LOG_NOTICE, cycle->log, 0, "reopening logs");
                ngx_reopen_files(cycle, -1);
            }
        }
    }

6. Next is static void ngx_worker_process_init(ngx_cycle_t *cycle, ngx_uint_t priority), which performs the initialization a worker needs before it enters its event loop.

    static void ngx_worker_process_init(ngx_cycle_t *cycle, ngx_uint_t priority)
    {
        ngx_int_t  n;

        /* abridged: only the channel-related part is shown */

        /* close the worker-side ends (channel[1]) of all other processes */
        for (n = 0; n < ngx_last_process; n++) {

            if (ngx_processes[n].pid == -1) {
                continue;
            }

            if (n == ngx_process_slot) {
                continue;
            }

            if (ngx_processes[n].channel[1] == -1) {
                continue;
            }

            if (close(ngx_processes[n].channel[1]) == -1) {
                ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                              "close() channel failed");
            }
        }

        /* close the master-side end (channel[0]) of our own pair */
        if (close(ngx_processes[ngx_process_slot].channel[0]) == -1) {
            ngx_log_error(NGX_LOG_ALERT, cycle->log, ngx_errno,
                          "close() channel failed");
        }

        /* watch our own channel[1] for commands */
        if (ngx_add_channel_event(cycle, ngx_channel, NGX_READ_EVENT,
                                  ngx_channel_handler)
            == NGX_ERROR)
        {
            exit(2);
        }
    }

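With the socketpair created in step 4 and the event registration above, a worker can listen for events on its channel[1], while the master sends commands to the channel[0] that belongs to that worker; this is how socketpair communication is achieved. A worker can also send messages through the channel[0] descriptors of other processes, although this is rarely used; its main job is to listen for messages on its own channel[1].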
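The division of the two ends can be demonstrated with a single fork. In the sketch below the parent plays the master and writes one command byte to ch[0], while the child plays the worker, closes ch[0], and reads from ch[1]. The function name `command_roundtrip` is hypothetical, and echoing the command back as the exit code is purely a device to make the result observable. Closing ch[1] in the parent is also a simplification of this sketch.

```c
#include <sys/socket.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Fork a child connected by a socketpair, send it one command byte on
 * ch[0], and return the child's exit code, which echoes the command.
 * Returns -1 on any failure. */
int command_roundtrip(unsigned char cmd)
{
    int   ch[2], status;
    pid_t pid;

    if (socketpair(AF_UNIX, SOCK_STREAM, 0, ch) == -1) {
        return -1;
    }

    pid = fork();
    if (pid == -1) {
        close(ch[0]);
        close(ch[1]);
        return -1;
    }

    if (pid == 0) {                       /* worker side */
        unsigned char got = 0;

        close(ch[0]);                     /* the master's end is not ours */
        if (read(ch[1], &got, 1) != 1) {
            _exit(255);
        }
        _exit(got);                       /* report what was received */
    }

    close(ch[1]);                         /* master side */
    if (write(ch[0], &cmd, 1) != 1) {
        close(ch[0]);
        waitpid(pid, NULL, 0);
        return -1;
    }
    close(ch[0]);

    if (waitpid(pid, &status, 0) != pid || !WIFEXITED(status)) {
        return -1;
    }
    return WEXITSTATUS(status);
}
```

For example, `command_roundtrip(3)` returns 3 when the byte written on the master's end arrives intact on the worker's end.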

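7. ngx_add_channel_event() adds the read event of the connection set up on descriptor ngx_channel (the current worker's channel[1]) to the event monitoring queue, with ngx_channel_handler(ngx_event_t *ev) as the event handler. When the descriptor becomes readable, ngx_channel_handler processes the message. Its implementation is as follows: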

    static void ngx_channel_handler(ngx_event_t *ev)
    {
        ngx_int_t          n;
        ngx_channel_t      ch;
        ngx_connection_t  *c;

        if (ev->timedout) {
            ev->timedout = 0;
            return;
        }

        c = ev->data;

        ngx_log_debug0(NGX_LOG_DEBUG_CORE, ev->log, 0, "channel handler");

        /* keep reading until the channel has no more data */
        for ( ;; ) {

            n = ngx_read_channel(c->fd, &ch, sizeof(ngx_channel_t), ev->log);

            ngx_log_debug1(NGX_LOG_DEBUG_CORE, ev->log, 0, "channel: %i", n);

            if (n == NGX_ERROR) {

                if (ngx_event_flags & NGX_USE_EPOLL_EVENT) {
                    ngx_del_conn(c, 0);
                }

                ngx_close_connection(c);
                return;
            }

            if (ngx_event_flags & NGX_USE_EVENTPORT_EVENT) {
                if (ngx_add_event(ev, NGX_READ_EVENT, 0) == NGX_ERROR) {
                    return;
                }
            }

            if (n == NGX_AGAIN) {
                return;
            }

            ngx_log_debug1(NGX_LOG_DEBUG_CORE, ev->log, 0,
                           "channel command: %d", ch.command);

            /* most commands only set a flag that the worker loop checks */
            switch (ch.command) {

            case NGX_CMD_QUIT:
                ngx_quit = 1;
                break;

            case NGX_CMD_TERMINATE:
                ngx_terminate = 1;
                break;

            case NGX_CMD_REOPEN:
                ngx_reopen = 1;
                break;

            case NGX_CMD_OPEN_CHANNEL:

                ngx_log_debug3(NGX_LOG_DEBUG_CORE, ev->log, 0,
                               "get channel s:%i pid:%P fd:%d",
                               ch.slot, ch.pid, ch.fd);

                /* record the new worker in the local process table */
                ngx_processes[ch.slot].pid = ch.pid;
                ngx_processes[ch.slot].channel[0] = ch.fd;
                break;

            case NGX_CMD_CLOSE_CHANNEL:

                ngx_log_debug4(NGX_LOG_DEBUG_CORE, ev->log, 0,
                               "close channel s:%i pid:%P our:%P fd:%d",
                               ch.slot, ch.pid, ngx_processes[ch.slot].pid,
                               ngx_processes[ch.slot].channel[0]);

                if (close(ngx_processes[ch.slot].channel[0]) == -1) {
                    ngx_log_error(NGX_LOG_ALERT, ev->log, ngx_errno,
                                  "close() channel failed");
                }

                ngx_processes[ch.slot].channel[0] = -1;
                break;
            }
        }
    }

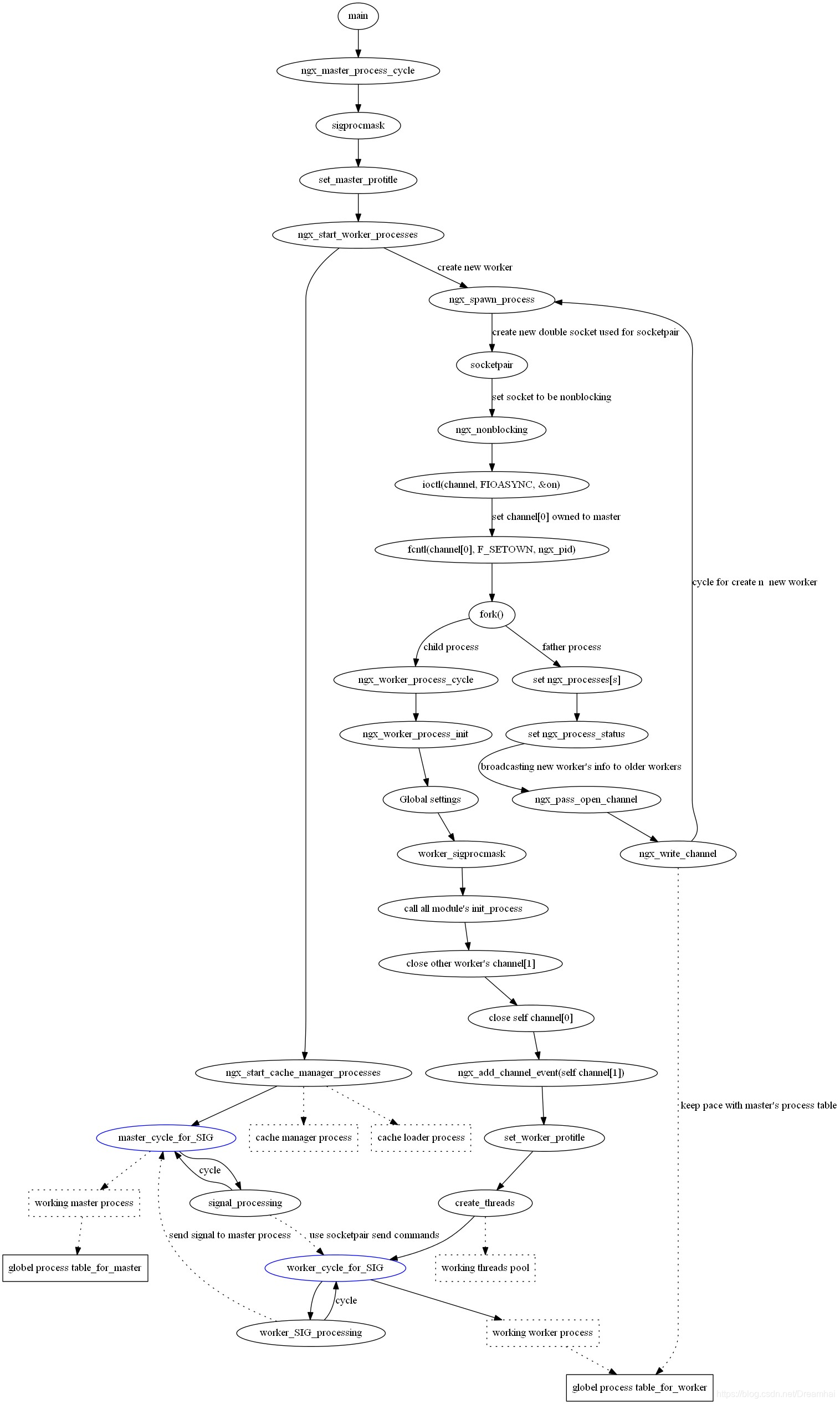

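This concludes the analysis of nginx's inter-process communication mechanism and working model; the preceding figure summarizes it.

This document comes from an analysis done some time ago and inevitably contains inaccuracies; corrections and discussion are welcome.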
