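Preparing the virtual machine or server

Install the CentOS 7 operating system

The installation itself is not covered here; look up an installation guide if you need one.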

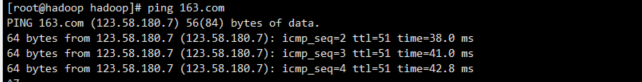

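Check network connectivity

Run ping 163.com

If the ping fails, the network interface may be disabled at boot. Its configuration file is usually under /etc/sysconfig/network-scripts/

Edit ifcfg-ens** (the suffix depends on your interface name)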

ONBOOT=NO

## change it to

ONBOOT=YES

Then restart the network service or reboot the machine

# restart the network service

systemctl restart network

# reboot the machine

reboot
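The connectivity check above can be wrapped so that it reports the result explicitly. This is a minimal sketch: `check_network` is a hypothetical helper name, and the 2-second per-reply timeout is an assumption rather than something the steps above require.

```shell
# check_network HOST: succeed if HOST answers within three ping attempts.
# (check_network is a hypothetical helper name, not a system command.)
check_network() {
  local host="${1:-163.com}"
  # -c 3: send three echo requests; -W 2: wait at most 2 seconds per reply.
  if ping -c 3 -W 2 "$host" > /dev/null 2>&1; then
    echo "network ok: $host reachable"
  else
    echo "network down: $host unreachable; check ONBOOT in ifcfg-ens*" >&2
    return 1
  fi
}

# Example:
#   check_network 163.com || systemctl restart network
```

If the check still fails after ONBOOT is set and the network service is restarted, the cause is likely elsewhere (for example DNS or the gateway), which this sketch does not diagnose.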

Configure a static IP

vim /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO=static

IPADDR="10.111.43.60"

NETMASK="255.255.255.0"

GATEWAY="10.111.43.254"

DNS1="10.5.90.2"

DNS2="202.98.198.167"

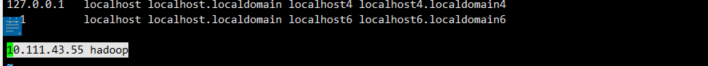
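Instead of editing the file by hand, the same keys can be set with a small script. The sketch below assumes the file uses plain KEY=VALUE lines; `set_ifcfg` is a hypothetical helper name. The addresses are the example values shown above. Later steps in this guide refer to 10.111.43.55, so use whichever address your machine actually has.

```shell
# set_ifcfg KEY VALUE FILE: replace the KEY= line if present, else append it.
# (set_ifcfg is a hypothetical helper name.)
set_ifcfg() {
  local key="$1" value="$2" cfg="$3"
  if grep -q "^${key}=" "$cfg"; then
    # Rewrite the existing line in place; all other lines stay untouched.
    sed -i "s|^${key}=.*|${key}=${value}|" "$cfg"
  else
    printf '%s=%s\n' "$key" "$value" >> "$cfg"
  fi
}

# Example (as root; ens33 and the addresses are the guide's example values):
#   cfg=/etc/sysconfig/network-scripts/ifcfg-ens33
#   set_ifcfg BOOTPROTO static "$cfg"
#   set_ifcfg IPADDR 10.111.43.60 "$cfg"
#   set_ifcfg NETMASK 255.255.255.0 "$cfg"
#   set_ifcfg GATEWAY 10.111.43.254 "$cfg"
#   systemctl restart network
```

Because an existing key is rewritten rather than appended again, the script can be re-run without leaving duplicate entries in the file.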

Change the hostname

Edit the following files

# set the hostname

vim /etc/hostname

hadoop

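# map the IP address to the hostname (this mapping belongs in /etc/hosts; /etc/sysconfig/network only holds KEY=VALUE settings)

vim /etc/hosts

10.111.43.55 hadoop

Reboot the machine (reboot) for the changes to take effect.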
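The hostname and the IP mapping can also be set from the command line. This is a sketch under the assumption that the mapping lives in /etc/hosts; `add_host_entry` is a hypothetical helper name, while `hostnamectl set-hostname` is the standard CentOS 7 command for writing /etc/hostname.

```shell
# add_host_entry IP NAME [FILE]: append "IP NAME" unless NAME is already mapped.
# (add_host_entry is a hypothetical helper name; FILE defaults to /etc/hosts.)
add_host_entry() {
  local ip="$1" name="$2" hosts="${3:-/etc/hosts}"
  # Look for NAME as a whole word on a line that is not commented out.
  if grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$hosts"; then
    echo "$name already present in $hosts"
  else
    printf '%s %s\n' "$ip" "$name" >> "$hosts"
  fi
}

# Example (as root):
#   hostnamectl set-hostname hadoop
#   add_host_entry 10.111.43.55 hadoop
```

The existence check keeps the file from accumulating duplicate lines when the setup is repeated.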

Disable the firewall

Check the firewall status

[root@hadoop hadoop]# systemctl status firewalld

● firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; vendor preset: enabled)

Active: inactive (dead) since Mon 2022-04-25 08:51:00 EDT; 13h ago

Docs: man:firewalld(1)

Process: 718 ExecStart=/usr/sbin/firewalld --nofork --nopid $FIREWALLD_ARGS (code=exited, status=0/SUCCESS)

Main PID: 718 (code=exited, status=0/SUCCESS)

inactive (dead) means the firewall is stopped.

If it shows active (running), the firewall is running; stop it with

systemctl stop firewalld

To start the firewall again:

systemctl start firewalld
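Note that `systemctl stop` only affects the running system. The status output above lists the unit as enabled, so firewalld would start again on the next boot. A minimal sketch that stops it and also disables it at boot follows; `disable_firewall` is a hypothetical helper name, and whether disabling the firewall is acceptable depends on your environment.

```shell
# disable_firewall: stop firewalld now and keep it from starting at boot.
# (disable_firewall is a hypothetical helper name; run it as root.)
disable_firewall() {
  # Only issue the stop when the service is actually running.
  if systemctl is-active --quiet firewalld; then
    systemctl stop firewalld
  fi
  systemctl disable firewalld
}

# Example:
#   disable_firewall
#   systemctl status firewalld
```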

Install Hadoop in pseudo-distributed mode

Map the IP address

[root@hadoop hadoop]# vim /etc/hosts

Append the following to the end of the file:

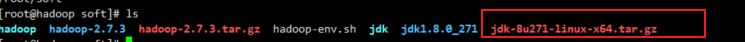

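Install the JDK

Download the JDK archive (a .tar.gz package) and upload it to the Linux machine. Here it is placed in /root/soft

Extract the archive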

tar -zxvf jdk-8u271-linux-x64.tar.gz

Create a symbolic link

[hadoop@hadoop soft]$ ls

jdk1.8.0_271

# create the symbolic link

[hadoop@hadoop soft]$ ln -s jdk1.8.0_271 jdk

# list the directory to confirm the link

[hadoop@hadoop soft]$ ls

jdk jdk1.8.0_271
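The extract-and-link steps can be combined into one repeatable function. This is a sketch; `install_archive` is a hypothetical helper name. The point of the stable link name (jdk) is that environment variables can refer to it and keep working when the versioned directory changes.

```shell
# install_archive ARCHIVE DIR LINK: unpack ARCHIVE in the current directory
# and point the symbolic link LINK at the extracted directory DIR.
# (install_archive is a hypothetical helper name.)
install_archive() {
  local archive="$1" dir="$2" link="$3"
  tar -zxf "$archive"
  # -f replaces an existing link; -n stops ln from following an existing
  # link and creating a nested link inside its target directory.
  ln -sfn "$dir" "$link"
}

# Example, using the file names from this section:
#   cd /root/soft
#   install_archive jdk-8u271-linux-x64.tar.gz jdk1.8.0_271 jdk
```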

Configure environment variables

vim ~/.bashrc

Append the following to the end of the file:

## JAVA_HOME must point to the directory where you extracted the JDK; double-check this path

export JAVA_HOME=~/soft/jdk

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

Apply the configuration immediately

source ~/.bashrc

Verify the configuration

[root@hadoop soft]# java -version

java version "1.8.0_271"

Java(TM) SE Runtime Environment (build 1.8.0_271-b09)

Java HotSpot(TM) 64-Bit Server VM (build 25.271-b09, mixed mode)

Output like the above means the configuration succeeded.
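Since ~/.bashrc is edited more than once in this guide, it can help to append each line only if it is not already there. The sketch below does that; `append_once` is a hypothetical helper name.

```shell
# append_once FILE LINE: add LINE to FILE unless an identical line exists.
# (append_once is a hypothetical helper name.)
append_once() {
  local file="$1" line="$2"
  touch "$file"
  # -x matches whole lines only, -F treats LINE as a literal string.
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example: the JDK variables from this section. Single quotes keep
# ${JAVA_HOME} and $PATH unexpanded so the shell expands them at login.
#   append_once ~/.bashrc 'export JAVA_HOME=~/soft/jdk'
#   append_once ~/.bashrc 'export JRE_HOME=${JAVA_HOME}/jre'
#   append_once ~/.bashrc 'export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib'
#   append_once ~/.bashrc 'export PATH=${JAVA_HOME}/bin:$PATH'
#   source ~/.bashrc
```

Running the example twice leaves the file unchanged the second time, so PATH does not pick up repeated entries.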

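Install and configure Hadoop

Download the Hadoop archive and upload it to a directory on the Linux machine. Here it is placed in /root/soft

Extract it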

tar -zxvf hadoop-2.7.3.tar.gz

Create a symbolic link

[hadoop@hadoop soft]$ ls

hadoop-2.7.3 jdk jdk1.8.0_271

# create the symbolic link

[hadoop@hadoop soft]$ ln -s hadoop-2.7.3 hadoop

[hadoop@hadoop soft]$ ls

hadoop hadoop-2.7.3 jdk jdk1.8.0_271

Configure environment variables

vim ~/.bashrc

Append the following to the end of the file:

## HADOOP_HOME is the path where Hadoop was just extracted

export HADOOP_HOME=~/soft/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the configuration immediately

source ~/.bashrc

Verify the configuration

[root@hadoop soft]# hadoop version

Hadoop 2.7.3

Subversion https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff

Compiled by root on 2016-08-18T01:41Z

Compiled with protoc 2.5.0

From source with checksum 2e4ce5f957ea4db193bce3734ff29ff4

This command was run using /root/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar

Output like the above means the configuration succeeded.

Set up passwordless SSH login

Run ssh-keygen -t rsa, then press Enter three times to accept the defaults

[hadoop@node1 soft]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hadoop/.ssh/id_rsa):

Created directory '/home/hadoop/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/hadoop/.ssh/id_rsa.

Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:sXZw/coSYm19gGSLdOLQGeskm+vPVr6lXENvmmQ8eeo hadoop@hadoop

The key's randomart image is:

+---[RSA 2048]----+

| ..+o+ |

| +oB + |

| . B + o |

| * * . o |

| o S = o o |

| + +.= = |

| . o. % + |

| . ....B O |

| .oo +oE |

+----[SHA256]-----+

List the generated key pair

[hadoop@hadoop soft]$ ls ~/.ssh/

id_rsa id_rsa.pub

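Append the public key to the authorized keys. Run the command below, type yes when prompted and press Enter, then enter the account password when asked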

ssh-copy-id hadoop

Check that the authorized_keys file was created

[hadoop@hadoop soft]$ ls ~/.ssh/

authorized_keys id_rsa id_rsa.pub known_hosts

Verify passwordless login

[root@hadoop soft]# ssh hadoop

Last login: Mon Apr 25 21:13:35 2022 from 10.111.43.4

[root@hadoop ~]# exit

logout

Connection to hadoop closed.

## you can also ssh to the IP address

[root@hadoop soft]# ssh 10.111.43.55

Last login: Mon Apr 25 22:57:59 2022 from hadoop

[root@hadoop ~]# exit

logout

Connection to 10.111.43.55 closed.

[root@hadoop soft]#
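The key generation can be done without the interactive prompts, and a common reason passwordless login still asks for a password is overly permissive modes on ~/.ssh. The sketch below covers both; `ensure_key` and `fix_ssh_perms` are hypothetical helper names, and the 700/600 modes are the conventional values that satisfy sshd's default StrictModes check.

```shell
# ensure_key [KEYFILE]: create an RSA key pair with an empty passphrase,
# but only if KEYFILE does not exist yet.
# (ensure_key and fix_ssh_perms are hypothetical helper names.)
ensure_key() {
  local key="${1:-$HOME/.ssh/id_rsa}"
  mkdir -p "$(dirname "$key")"
  # -N "" sets an empty passphrase, -f names the output file, -q is quiet.
  [ -f "$key" ] || ssh-keygen -t rsa -N "" -f "$key" -q
}

# fix_ssh_perms [DIR]: restrict DIR and authorized_keys to the owner.
fix_ssh_perms() {
  local dir="${1:-$HOME/.ssh}"
  chmod 700 "$dir"
  [ ! -f "$dir/authorized_keys" ] || chmod 600 "$dir/authorized_keys"
}

# Example:
#   ensure_key
#   ssh-copy-id hadoop
#   fix_ssh_perms
#   ssh hadoop
```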

Configure Hadoop in pseudo-distributed mode

Change to the Hadoop configuration directory, ${HADOOP_HOME}/etc/hadoop

[root@hadoop soft]# cd ${HADOOP_HOME}/etc/hadoop

[root@hadoop hadoop]# ls

capacity-scheduler.xml core-site.xml hadoop-metrics2.properties hdfs-site.xml httpfs-signature.secret kms-env.sh log4j.properties mapred-queues.xml.template slaves yarn-env.cmd

configuration.xsl hadoop-env.cmd hadoop-metrics.properties httpfs-env.sh httpfs-site.xml kms-log4j.properties mapred-env.cmd mapred-site.xml ssl-client.xml.example yarn-env.sh

container-executor.cfg hadoop-env.sh hadoop-policy.xml httpfs-log4j.properties kms-acls.xml kms-site.xml mapred-env.sh mapred-site.xml.template ssl-server.xml.example yarn-site.xml

[root@hadoop hadoop]#

Configure hadoop-env.sh

[hadoop@hadoop hadoop]$ vim hadoop-env.sh

Set JAVA_HOME

export JAVA_HOME=/root/soft/jdk

Configure core-site.xml: add the following between <configuration> and </configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop:8020</value>

<!-- change the hostname hadoop above to match your environment -->

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/soft/hadoop/tmp</value>

</property>
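For reference, the properties above can be seen in a complete file by generating one from the shell. This is a sketch only: `write_core_site` is a hypothetical helper name, and it overwrites the core-site.xml that ships with Hadoop (including its license header) instead of editing it, so back up the original first if you want to keep it.

```shell
# write_core_site DIR HOST TMPDIR: write a complete core-site.xml into DIR.
# (write_core_site is a hypothetical helper name.)
write_core_site() {
  local dir="$1" host="$2" tmpdir="$3"
  # The unquoted EOF lets the shell substitute ${host} and ${tmpdir}.
  cat > "$dir/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://${host}:8020</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>${tmpdir}</value>
  </property>
</configuration>
EOF
}

# Example, using the values from this section:
#   write_core_site "${HADOOP_HOME}/etc/hadoop" hadoop /home/hadoop/soft/hadoop/tmp
```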

Configure hdfs-site.xml: add the following between <configuration> and </configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

Copy the template file mapred-site.xml.template to mapred-site.xml

[hadoop@hadoop hadoop]$ cp mapred-site.xml.template mapred-site.xml

Configure mapred-site.xml

Again, add the following between <configuration> and </configuration>:

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

This setting makes MapReduce run on top of the YARN framework.

Configure yarn-site.xml

Again, add the following between <configuration> and </configuration>:

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop</value>

</property>

<!-- change the hostname hadoop above to match your environment -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

Configure slaves

vim slaves

Replace localhost with the hostname, for example: hadoop

Format the file system

hdfs namenode -format

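If the output contains Exiting with status 0, the format succeeded

Note: format only once. Do not format again after a successful format: re-formatting gives the NameNode a new cluster ID, and the existing DataNode data will no longer match it.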
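To avoid formatting a second time by accident, the command can be guarded by a check for existing NameNode metadata. The sketch below assumes dfs.namenode.name.dir is left at its default, which places the metadata under dfs/name inside hadoop.tmp.dir; `format_once` is a hypothetical helper name.

```shell
# format_once TMPDIR: run the format only when no NameNode metadata exists.
# TMPDIR is the value of hadoop.tmp.dir from core-site.xml.
# (format_once is a hypothetical helper name.)
format_once() {
  local tmpdir="$1"
  # A formatted NameNode directory contains current/VERSION.
  if [ -f "$tmpdir/dfs/name/current/VERSION" ]; then
    echo "already formatted, skipping"
    return 0
  fi
  hdfs namenode -format
}

# Example:
#   format_once /home/hadoop/soft/hadoop/tmp
```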

Start Hadoop

[root@hadoop hadoop]# start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [hadoop]

hadoop: namenode running as process 2699. Stop it first.

hadoop: datanode running as process 2839. Stop it first.

Starting secondary namenodes [0.0.0.0]

0.0.0.0: secondarynamenode running as process 3026. Stop it first.

starting yarn daemons

resourcemanager running as process 9309. Stop it first.

hadoop: starting nodemanager, logging to /root/soft/hadoop-2.7.3/logs/yarn-root-nodemanager-hadoop.out

[root@hadoop hadoop]# jps

3026 SecondaryNameNode

16180 NodeManager

2839 DataNode

16328 Jps

2699 NameNode

9309 ResourceManager

[root@hadoop hadoop]#

Check that the HDFS processes are running. There should be three: NameNode, DataNode, and SecondaryNameNode

Check that the YARN processes are running: ResourceManager and NodeManager
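The process check can be automated by comparing the jps output against the five expected daemons. This is a minimal sketch; `missing_daemons` is a hypothetical helper name, and it only inspects process names, not whether each daemon is healthy.

```shell
# missing_daemons: read jps output on stdin and print the name of every
# expected daemon that does not appear in it.
# (missing_daemons is a hypothetical helper name.)
missing_daemons() {
  local out name
  out=$(cat)
  for name in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # jps prints "<pid> <class>"; compare the whole second field so that
    # NameNode does not match SecondaryNameNode.
    printf '%s\n' "$out" \
      | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
      || echo "$name"
  done
}

# Example:
#   jps | missing_daemons
```

Empty output means all five daemons were found. Any name printed is a daemon to look up in the logs under the Hadoop logs directory.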

Verification

In a browser, open 10.111.43.55:50070 (the NameNode web UI)