Def: a cipher defined over ( K , M , C ) (K,M,C) (K,M,C) is a pair of “efficient” algorithms ( E , D ) (E,D) (E,D) where

K

K

K: the set of all possible keys (also called key space)

M

M

M: the set of all possible messages

C

C

C: the set of all possible ciphertexts

E

E

E: the encryption algorithm

D

D

D: the decryption algorithm

E

:

K

×

M

→

C

E:K\times M\to C

E:K×M→C

D

:

K

×

C

→

M

D:K\times C\to M

D:K×C→M

∀

m

∈

M

,

k

∈

K

,

D

(

k

,

E

(

k

,

m

)

)

=

m

\forall m\in M,k\in K,D(k,E(k,m))=m

∀m∈M,k∈K,D(k,E(k,m))=m

[Consistency Equation]

E E E is often randomized, D D D is often deterministic

First example of a secure" cipher

M

=

C

=

{

0

,

1

}

n

M=C=\{0,1\}^n

M=C={0,1}n

K

=

{

0

,

1

}

n

K=\{0,1\}^n

K={0,1}n

K

e

y

=

Key=

Key=(rand. bit string as long as msg.)

c

:

=

E

(

k

,

m

)

=

k

⊕

m

c:=E(k,m)=k\oplus m

c:=E(k,m)=k⊕m

D

(

k

,

c

)

=

k

⊕

c

D(k,c)=k\oplus c

D(k,c)=k⊕c

I n d e e d : D ( k , E ( k , m ) ) = D ( k , k ⊕ m ) = k ⊕ ( k ⊕ m ) = m Indeed: D(k,E(k,m))=D(k,k\oplus m)=k\oplus(k\oplus m)=m Indeed:D(k,E(k,m))=D(k,k⊕m)=k⊕(k⊕m)=m

Very fast enc/dec !!

… but long keys (as long as plaintext)

Is OTP a good cipher? What is a good cipher?

Basic idea: CT should reveal no “info” about PT

Def: Acipher ( E , D ) (E,D) (E,D) over ( K , M , C ) (K,M,C) (K,M,C) has perfect secrecy if

∀

m

0

,

m

1

∈

M

,

(

∣

m

0

∣

=

∣

m

1

∣

)

\forall m_0,m_1\in M,(|m_0|=|m_1|)

∀m0,m1∈M,(∣m0∣=∣m1∣) and

∀

c

∈

C

\forall c\in C

∀c∈C,

P

r

[

E

(

k

,

m

0

)

=

c

]

=

P

r

[

E

(

k

,

m

1

)

=

c

]

Pr[E(k,m_0)=c]=Pr[E(k,m_1)=c]

Pr[E(k,m0)=c]=Pr[E(k,m1)=c]

where

k

←

R

K

k\overset{R}{\leftarrow}K

k←RK

Lemma: OTP has perfect secrecy

Proof:

∀

m

,

c

:

P

r

[

E

(

k

,

m

)

=

c

]

=

#

k

e

y

s

,

k

∈

K

,

s

.

t

,

E

(

k

,

m

)

=

c

∣

K

∣

\forall m,c: Pr[E(k,m)=c]=\frac{\#keys,k\in K,s.t, E(k,m)=c}{|K|}

∀m,c:Pr[E(k,m)=c]=∣K∣#keys,k∈K,s.t,E(k,m)=c

so:

∀

m

,

c

:

#

{

k

∈

K

:

E

(

k

,

m

)

=

c

}

=

c

o

n

s

t

.

\forall m,c: \#\{k\in K: E(k,m)=c\}=const.

∀m,c:#{k∈K:E(k,m)=c}=const.

⟹

\Longrightarrow

⟹ cipher has perfect secrecy

Thm: perfect secrecy ⟹ ∣ K ∣ ≥ ∣ M ∣ \Longrightarrow |K|\geq|M| ⟹∣K∣≥∣M∣

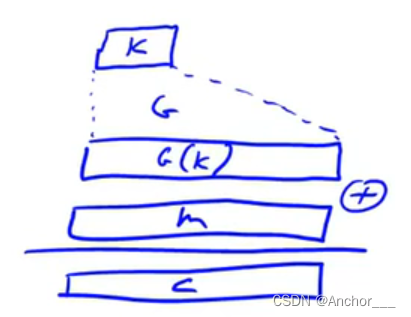

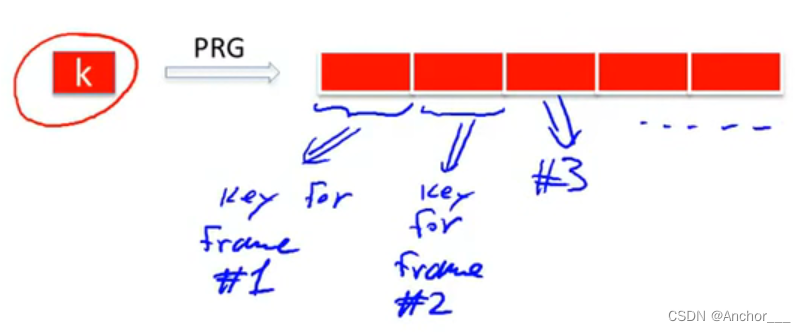

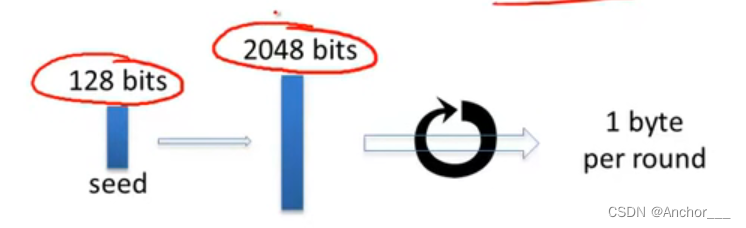

idea: replace “random” key by “pseudorandom” key

pseudo-random generator (PRG): is a func.

G

:

{

0

,

1

}

s

→

{

0

,

1

}

n

,

n

>

>

s

G:\{0,1\}^s\rightarrow\{0,1\}^n,n>>s

G:{0,1}s→{0,1}n,n>>s

{

0

,

1

}

s

\{0,1\}^s

{0,1}s: seed space

{

0

,

1

}

n

\{0,1\}^n

{0,1}n: output

(“efficient” computable by deterministic algorithm)

c

=

E

(

k

,

m

)

=

m

⊕

G

(

k

)

c=E(k,m)=m\oplus G(k)

c=E(k,m)=m⊕G(k)

D

(

c

,

m

)

=

c

⊕

G

(

k

)

D(c,m)=c\oplus G(k)

D(c,m)=c⊕G(k)

Stream cipher cannot have perfect secrecy !!

suppose PRG is predictable

∃

i

:

G

(

k

)

1

,

2

,

⋯

,

i

⟶

a

l

g

.

G

(

k

)

i

+

1

\exist i:G(k)_{1,2,\cdots,i}\overset{alg.}{\longrightarrow}G(k)_{i+1}

∃i:G(k)1,2,⋯,i⟶alg.G(k)i+1

is a problem !!

We say

G

:

K

→

{

0

,

1

}

n

G: K\rightarrow\{0,1\}^n

G:K→{0,1}n is predictable if:

∃

\exist

∃ eff. alg.

A

A

A and

∃

1

≤

i

≤

n

−

1

\exist 1\leq i\leq n-1

∃1≤i≤n−1

s

.

t

.

P

r

k

←

R

K

[

A

(

G

(

k

)

)

1

,

⋯

,

i

=

G

(

k

)

i

+

1

]

≥

1

2

+

ϵ

s.t. Pr_{k\overset{R}{\leftarrow}K}[A(G(k))_{1,\cdots,i}=G(k)_{i+1}]\geq \frac{1}{2}+\epsilon

s.t.Prk←RK[A(G(k))1,⋯,i=G(k)i+1]≥21+ϵ

For some “non-negligible”

ϵ

\epsilon

ϵ

Def: PRG is unpredictable if it is not predictable

⟹

\Longrightarrow

⟹

∀

i

:

\forall i:

∀i: no “eff.” adversary can predict bit

(

i

+

1

)

(i+1)

(i+1) for “non-neg.”

ϵ

\epsilon

ϵ

Linear Congruential Generator

r

[

0

]

≡

s

e

e

d

,

r

[

i

]

←

a

⋅

r

[

i

−

1

]

+

b

(

m

o

d

p

)

r[0]\equiv seed, r[i]\leftarrow a\cdot r[i-1]+b(modp)

r[0]≡seed,r[i]←a⋅r[i−1]+b(modp)

output few bits of

r

[

i

]

r[i]

r[i]

[easy to pred. !!]

glibc random()

r

[

i

]

←

(

r

[

i

−

3

]

+

r

[

i

−

31

]

)

r[i]\leftarrow(r[i-3]+r[i-31])%2^{32}

r[i]←(r[i−3]+r[i−31])

output

r

[

i

]

>

>

1

r[i]>>1

r[i]>>1

never use random() for crypto !!

In practice: ϵ \epsilon ϵ is a scalar and

In theory: ϵ \epsilon ϵ is a function ϵ : Z ≥ 0 → R ≥ 0 \epsilon:Z^{\geq0}\rightarrow R^{\geq0} ϵ:Z≥0→R≥0 and

ϵ

(

λ

)

=

1

/

2

λ

\epsilon(\lambda)=1/2^\lambda

ϵ(λ)=1/2λ: negligible

ϵ

(

λ

)

=

1

/

2

1000

\epsilon(\lambda)=1/2^{1000}

ϵ(λ)=1/21000: non-negligible

ϵ ( λ ) = { 1 / 2 λ f o r o d d λ 1 / 2 1000 f o r e v e n λ \epsilon(\lambda)=\begin{cases} 1/2^\lambda & for\ odd\ \lambda\\ 1/2^{1000} & for\ even\ \lambda \end{cases} ϵ(λ)={1/2λ1/21000for odd λfor even λ: Non-negligible

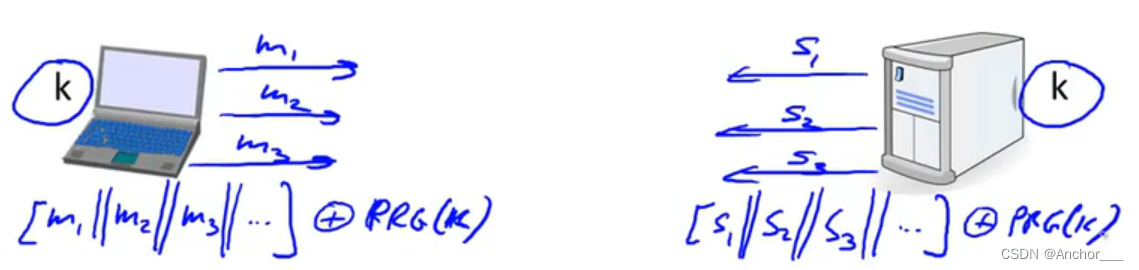

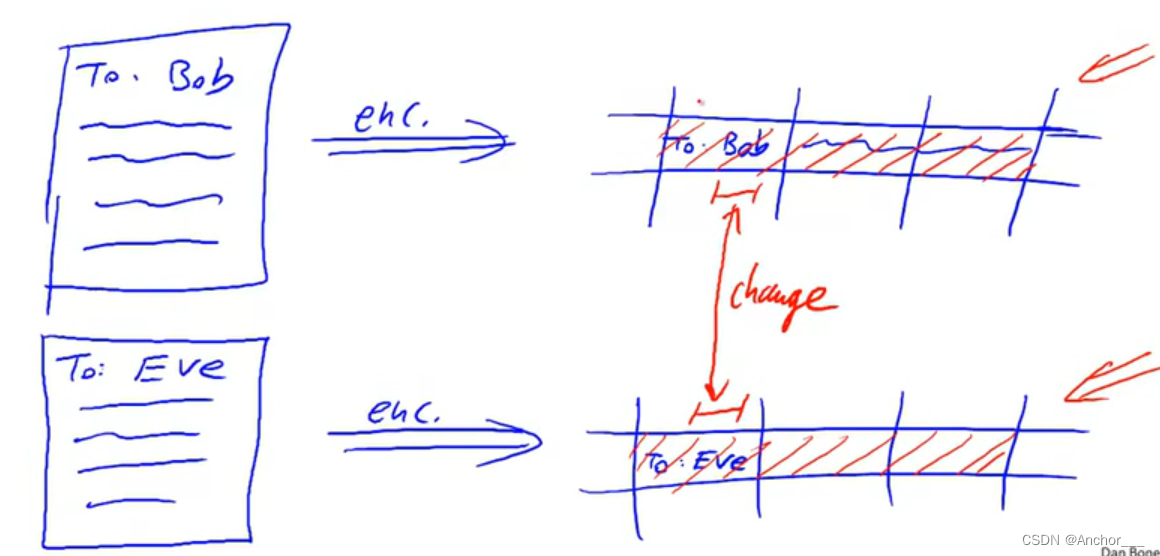

Never use stream cipher key more than once !!

c

1

←

m

1

⊕

P

R

G

(

k

)

c_1\leftarrow m_1\oplus PRG(k)

c1←m1⊕PRG(k)

c

2

←

m

2

⊕

P

R

G

(

k

)

c_2\leftarrow m_2\oplus PRG(k)

c2←m2⊕PRG(k)

Eavesdropper does:

c

1

⊕

c

2

→

m

1

⊕

m

2

c_1\oplus c_2\rightarrow m_1\oplus m_2

c1⊕c2→m1⊕m2

Enough redundancy in English and ASCII encoding that:

m

1

⊕

m

2

→

m

1

,

m

2

m_1\oplus m_2\rightarrow m_1,m_2

m1⊕m2→m1,m2

Project Venona (1941-1946)

MS-PPTP(windows NT)

Need different keys for

C

→

S

C\to S

C→S and

S

→

C

S\to C

S→C

k

=

(

k

S

→

C

,

k

C

→

S

)

k=(k_{S\to C},k_{C\to S})

k=(kS→C,kC→S)

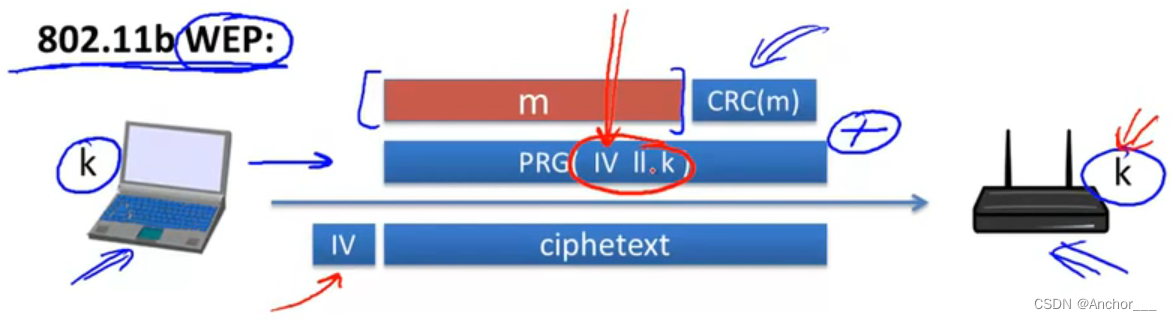

802.11b WEP:

Length of

I

V

IV

IV: 24 bits

Repeated I V IV IV after 2 24 ≈ 16 M 2^{24}\approx16M 224≈16M frames

On some 802.11 cards: I V IV IV resets to 0 0 0 after power cycle

For PRG in WEP (RC4) :

(by Fluhrer, Mantin, Shamir, 2011): 10^6 Frame can recover

k

k

k

Recently: 40000 Frames sufficient

WEP provides no security:

k

⟶

P

R

G

k\overset{PRG}{\longrightarrow}

k⟶PRG long stream of bits look essentially random

⟹

\Longrightarrow

⟹ now each frame has a pseudorandom key

if one change takes place, the it’s very easy to tell where that change occurred.

Never use stream cipher key more than once !!

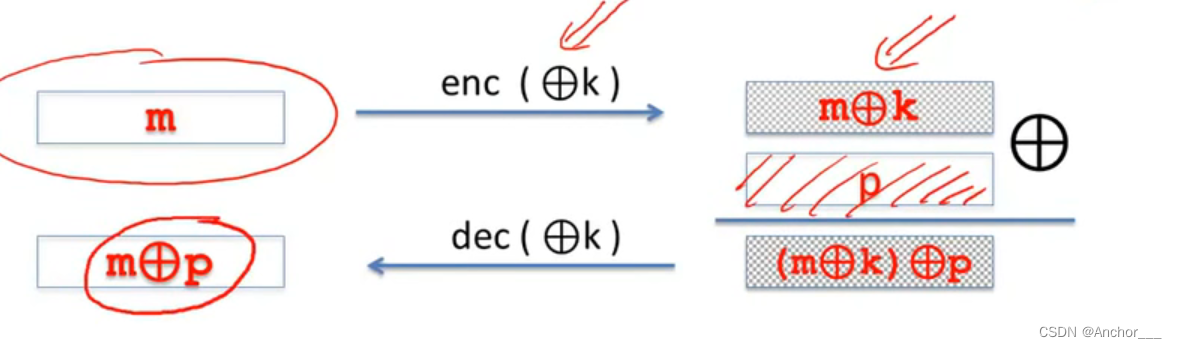

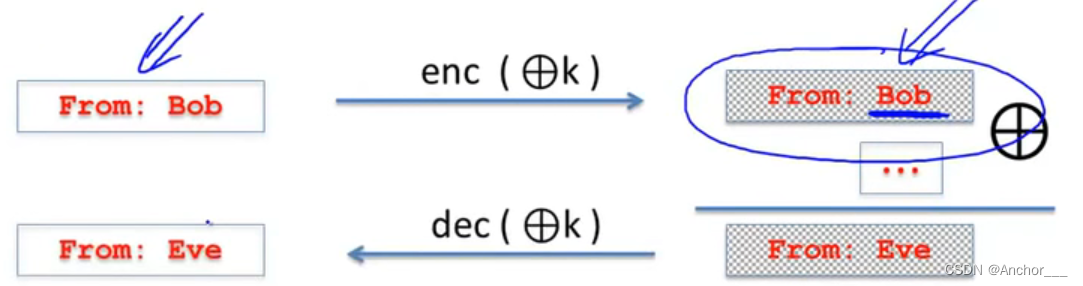

Modification ciphertext are undetected and have predictable impact on plaintext.

B

o

b

→

426

F

62

Bob\to426F62

Bob→426F62

E

v

e

→

457665

Eve\to457665

Eve→457665

B

o

b

⊕

E

v

e

→

071907

Bob\oplus Eve\to071907

Bob⊕Eve→071907

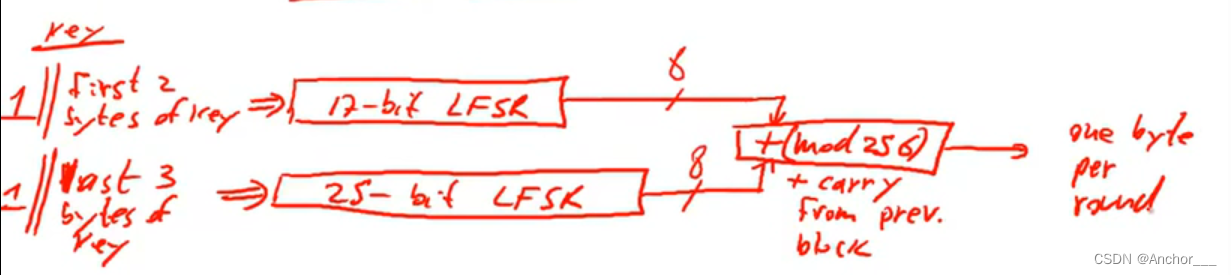

Linear feedback shift register (LFSR):

CSS:

s

e

e

d

=

5

b

y

t

e

s

=

40

b

i

t

s

seed=5bytes=40bits

seed=5bytes=40bits

Easy to break

≈

2

17

t

i

m

e

s

\approx 2^{17} times

≈217times:

suppose knowing first 20bytes of message

⟶

\longrightarrow

⟶ first 20bytes of message

⊕

\oplus

⊕ first 20bytes of ciphertext

=

=

= first 20bytes of stream cipher

⟶

\longrightarrow

⟶ try all 17-bits LFSR

⟶

\longrightarrow

⟶ get stream cipher of 25-bits LFSR (Check if right or not)

P

R

G

:

{

0

,

1

}

s

×

R

→

{

0

,

1

}

n

PRG: \{0,1\}^s\times R\to\{0,1\}^n

PRG:{0,1}s×R→{0,1}n

n

>

>

s

n>>s

n>>s

{

0

,

1

}

s

\{0,1\}^s

{0,1}s: seed

R

R

R: nonce

(Nonce: a non-repeating value for a given key)

E

(

k

,

m

;

r

)

=

m

⊕

P

R

G

(

k

;

r

)

E(k,m;r)=m\oplus PRG(k;r)

E(k,m;r)=m⊕PRG(k;r)

The pair

(

k

,

r

)

(k,r)

(k,r) is never used more than once.

⟹

\Longrightarrow

⟹ reuse the key because

(

k

,

r

)

(k,r)

(k,r) unique.

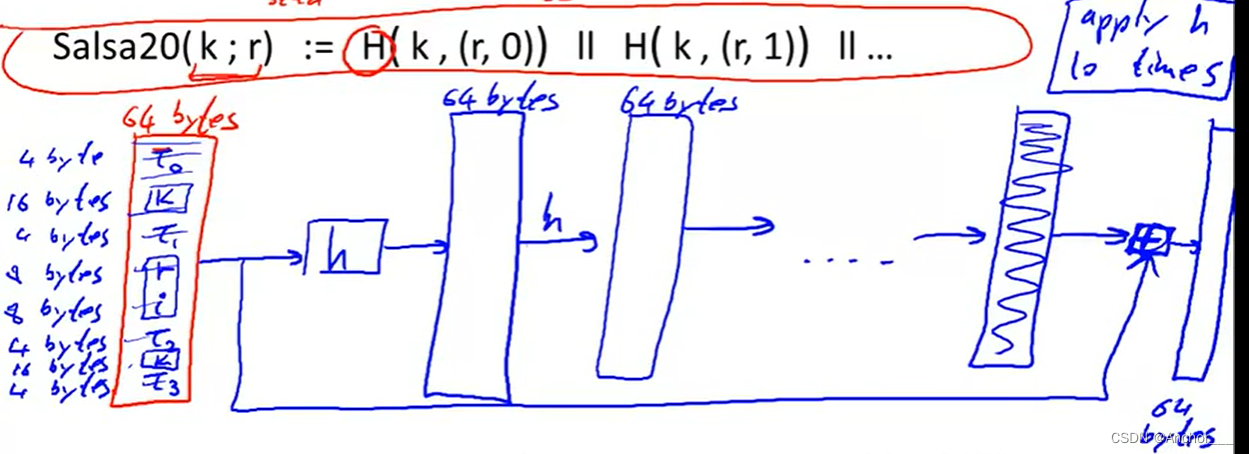

Salsa20: { 0 , 1 } 128 o r 256 × { 0 , 1 } 64 → { 0 , 1 } n \{0,1\}^{128or256}\times\{0,1\}^{64}\to \{0,1\}^n {0,1}128or256×{0,1}64→{0,1}n

S

a

l

s

a

20

(

k

;

r

)

:

=

H

(

k

,

(

r

,

0

)

)

∣

∣

H

(

k

,

(

r

,

1

)

)

.

.

.

Salsa20(k;r) := H(k,(r,0)) || H(k,(r,1))...

Salsa20(k;r):=H(k,(r,0))∣∣H(k,(r,1))...

h: invertible function. designed to be fast on x86 (SSE2)

Let G : K → { 0 , 1 } n G:K\to\{0,1\}^n G:K→{0,1}n be a PRG

Goal: define what it means that

[

k

←

R

K

,

o

u

t

p

u

t

G

(

k

)

]

[k\overset{R}{\leftarrow}K, output\ G(k)]

[k←RK,output G(k)]

is “indistinguishable” from

[

r

←

R

{

0

,

1

}

n

,

o

u

t

p

u

t

r

]

[r\overset{R}{\leftarrow}\{0,1\}^n,output\ r]

[r←R{0,1}n,output r]

Statistical test on

{

0

,

1

}

n

\{0,1\}^n

{0,1}n:

an alg.

A

A

A s.t.

A

(

x

)

A(x)

A(x) outputs “0” or “1”

Examples:

( # 0 ( x ) \#0(x) #0(x): the number of ‘0’ in the string)

Let G : K → { 0 , 1 } n G:K\to\{0,1\}^n G:K→{0,1}n be a PRG and A A A a stat.test on { 0 , 1 } n \{0,1\}^n {0,1}n

Define:

A

d

v

P

R

G

[

A

,

G

]

:

=

∣

P

k

←

R

K

[

A

(

G

(

k

)

)

=

1

]

−

P

r

←

R

{

0

,

1

}

n

[

A

(

r

)

=

1

]

∣

∈

[

0

,

1

]

\displaystyle Adv_{PRG}[A,G]:=|P_{k\overset{R}{\leftarrow}K}[A(G(k))=1]-P_{r\overset{R}{\leftarrow}\{0,1\}^n}[A(r)=1]|\in[0,1]

AdvPRG[A,G]:=∣Pk←RK[A(G(k))=1]−Pr←R{0,1}n[A(r)=1]∣∈[0,1]

Adv close to 1

⟹

\Longrightarrow

⟹

A

A

A can distinguish G from rand.

Adv close to 0

⟹

\Longrightarrow

⟹

A

A

A cannot distinguish G from rand.

Example:

Suppose

G

:

K

→

{

0

,

1

}

n

G:K\to\{0,1\}^n

G:K→{0,1}n satisfies

m

s

b

(

G

(

k

)

)

=

1

msb(G(k))=1

msb(G(k))=1 for

2

/

3

2/3

2/3 of keys in

K

K

K

Define stat. test

A

A

A as:

if

[

m

s

b

(

x

)

=

1

]

[msb(x)=1]

[msb(x)=1] output “1” else output “0”

Then

A

d

v

P

R

G

[

A

,

G

]

=

∣

P

[

A

(

G

(

k

)

)

=

1

]

−

P

[

A

(

r

)

=

1

]

∣

=

∣

2

/

3

−

1

/

2

∣

=

1

/

6

Adv_{PRG}[A,G]=|P[A(G(k))=1]-P[A(r)=1]|=|2/3-1/2|=1/6

AdvPRG[A,G]=∣P[A(G(k))=1]−P[A(r)=1]∣=∣2/3−1/2∣=1/6

⟹

A

\Longrightarrow A

⟹A breaks

G

G

G with adv.

1

/

6

1/6

1/6

Def: We say that

G

:

K

→

{

0

,

1

}

n

G:K\to\{0,1\}^n

G:K→{0,1}n is a secure PRG if

∀

\forall

∀ “eff.” stat. test

A

A

A:

A

d

v

P

R

G

[

A

,

G

]

Adv_{PRG}[A,G]

AdvPRG[A,G] is “negligible”

Are there provably secure PRGs?

Unknown !! (

⟹

P

≠

N

P

\Longrightarrow P\not= NP

⟹P=NP)

but we have heuristic candidates

We show: PRG predictable ⇒ \Rightarrow ⇒ PRG is insecure

Suppose

A

A

A is an efficient algorithm s.t.

P

k

←

R

K

[

A

(

G

(

k

)

∣

1

,

⋯

,

n

)

=

G

(

k

)

∣

i

+

1

]

=

1

/

2

+

ϵ

P_{k\overset{R}{\leftarrow}K}[A(G(k)|_{1,\cdots,n})=G(k)|_{i+1}]=1/2+\epsilon

Pk←RK[A(G(k)∣1,⋯,n)=G(k)∣i+1]=1/2+ϵ

for non-negligible

ϵ

\epsilon

ϵ (e.g.

ϵ

=

1

/

1000

\epsilon=1/1000

ϵ=1/1000)

Define statistical test

B

B

B as:

B

(

X

)

=

{

i

f

A

(

X

∣

1

,

⋯

,

i

=

X

i

+

1

)

o

u

t

p

u

t

1

e

l

s

e

o

u

t

p

u

t

0

B(X)=\begin{cases} if\ A(X|_{1,\cdots,i}=X_{i+1}) & output\ 1\\ else & output\ 0\\ \end{cases}

B(X)={if A(X∣1,⋯,i=Xi+1)elseoutput 1output 0

r

←

R

{

0

,

1

}

n

:

P

[

B

(

r

)

=

1

]

=

1

/

2

r\overset{R}{\leftarrow}\{0,1\}^n:P[B(r)=1]=1/2

r←R{0,1}n:P[B(r)=1]=1/2

k

←

R

K

:

P

[

B

(

r

)

=

1

]

>

1

/

2

+

ϵ

k\overset{R}{\leftarrow}K:P[B(r)=1]>1/2+\epsilon

k←RK:P[B(r)=1]>1/2+ϵ

⟹

A

d

v

P

R

G

[

B

,

G

]

>

ϵ

\Longrightarrow Adv_{PRG}[B,G]>\epsilon

⟹AdvPRG[B,G]>ϵ

Let

G

:

K

→

{

0

,

1

}

n

G:K\to\{0,1\}^n

G:K→{0,1}n be PRG

“Thm”: if

∀

i

∈

{

0

,

⋯

,

n

−

1

}

\forall i \in\{0,\cdots,n-1\}

∀i∈{0,⋯,n−1} PRG

G

G

G is unpredictable at pos.

i

i

i, then

G

G

G is a secure PRG.

If next-bit predictors cannot distinguish G G G from random then no statistical test can !!

Let P 1 P_1 P1 and P 2 P_2 P2 be two distributions over { 0 , 1 } n \{0,1\}^n {0,1}n

Def: We say

P

1

P_1

P1 and

P

2

P_2

P2 are computationally indistinguishable(denoted

P

1

≈

p

P

2

P_1\approx_{p} P_2

P1≈pP2)

if

∀

\forall

∀ “eff.” stat. tests

A

A

A:

∣

P

X

←

P

1

[

A

(

X

)

=

1

]

−

P

X

←

P

2

[

A

(

X

)

=

1

]

∣

<

n

e

g

.

\displaystyle |P_{X\leftarrow P_1}[A(X)=1]-P_{X\leftarrow P_2}[A(X)=1]|<neg.

∣PX←P1[A(X)=1]−PX←P2[A(X)=1]∣<neg.

Example: a PRG is secure if { k ← R K : G ( k ) } ≈ p u n i f o r m ( { 0 , 1 } n ) \{k\overset{R}{\leftarrow}K:G(k)\}\approx_{p} uniform(\{0,1\}^n) {k←RK:G(k)}≈puniform({0,1}n)

Goal: secure PRG ⟹ \Longrightarrow ⟹ “secure” stream cipher

Attacker’s abilities: obtains one ciphertext (for now)

Possible security requirements:

attempt #1: attacker cannot recover secret key

attempt #2: attacker cannot recover all of plaintext

Recall Shannon’s idea:

CT should reveal no “info” about PT

Let ( E , D ) (E,D) (E,D) be a cipher over ( K , M , C ) (K,M,C) (K,M,C)

(

E

,

D

)

(E,D)

(E,D) has perfect secrecy if

∀

m

0

,

m

1

∈

M

(

∣

m

0

∣

=

∣

m

1

∣

)

\forall m_0,m_1\in M(|m_0|=|m_1|)

∀m0,m1∈M(∣m0∣=∣m1∣)

{

E

(

k

,

m

0

)

}

=

{

E

(

k

,

m

1

)

}

w

h

e

r

e

k

←

K

\{E(k,m_0)\}=\{E(k,m_1)\}\ where\ k\leftarrow K

{E(k,m0)}={E(k,m1)} where k←K

⇓ \Downarrow ⇓weaken

(

E

,

D

)

(E,D)

(E,D) has perfect secrecy if

∀

m

0

,

m

1

∈

M

(

∣

m

0

∣

=

∣

m

1

∣

)

\forall m_0,m_1\in M(|m_0|=|m_1|)

∀m0,m1∈M(∣m0∣=∣m1∣)

{

E

(

k

,

m

0

)

}

≈

p

{

E

(

k

,

m

1

)

}

w

h

e

r

e

k

←

K

\{E(k,m_0)\}\approx_{p}\{E(k,m_1)\}\ where\ k\leftarrow K

{E(k,m0)}≈p{E(k,m1)} where k←K

but also need adversary to exhibit

m

0

,

m

1

∈

M

m_0,m_1\in M

m0,m1∈M explicitly

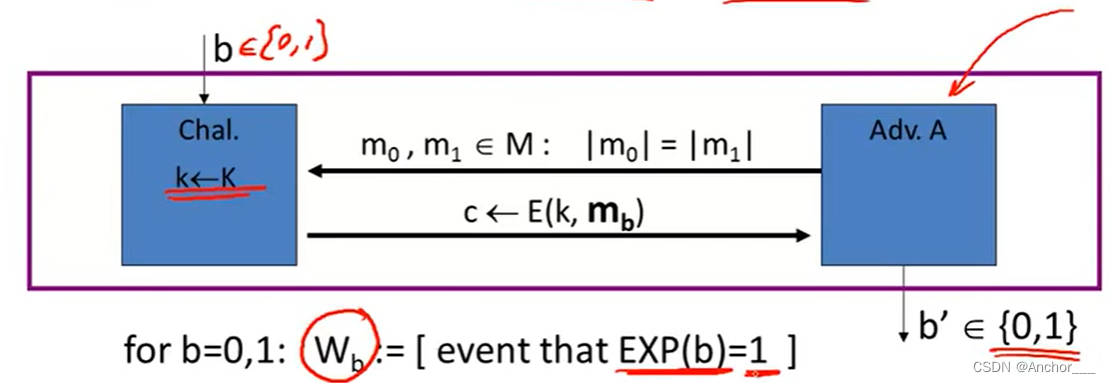

For

b

=

0

,

1

b=0,1

b=0,1 define experiments

E

X

P

(

0

)

EXP(0)

EXP(0) and

E

X

P

(

1

)

EXP(1)

EXP(1) as:

A

d

v

S

S

[

A

,

E

]

:

=

∣

P

[

W

0

]

−

P

[

W

1

]

∣

∈

[

0

,

1

]

Adv_{SS}[A,E]:= |P[W_0]-P[W_1]|\in[0,1]

AdvSS[A,E]:=∣P[W0]−P[W1]∣∈[0,1]

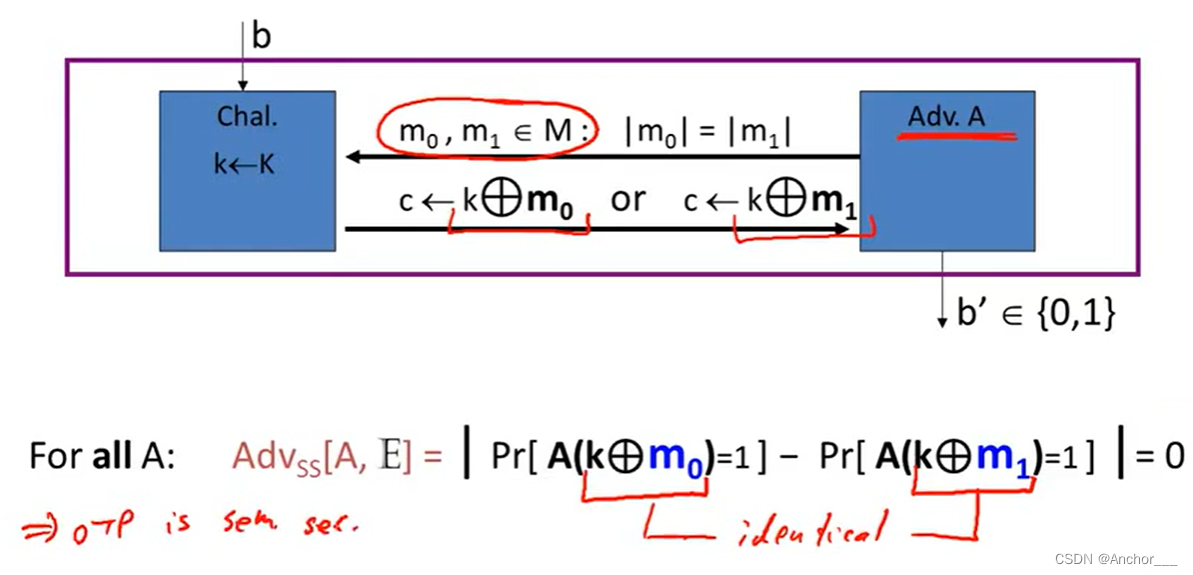

Def:

E

E

E is semantically secure if for all efficient

A

A

A,

A

s

v

S

S

[

A

,

E

]

Asv_{SS}[A,E]

AsvSS[A,E] is negligible.

⇒ \Rightarrow ⇒ for all explicit m 0 , m 1 ∈ M : { E ( k , m 0 ) } ≈ p { E ( k , m 1 ) } m_0,m_1\in M: \{E(k,m_0)\}\approx_p\{E(k,m_1)\} m0,m1∈M:{E(k,m0)}≈p{E(k,m1)}

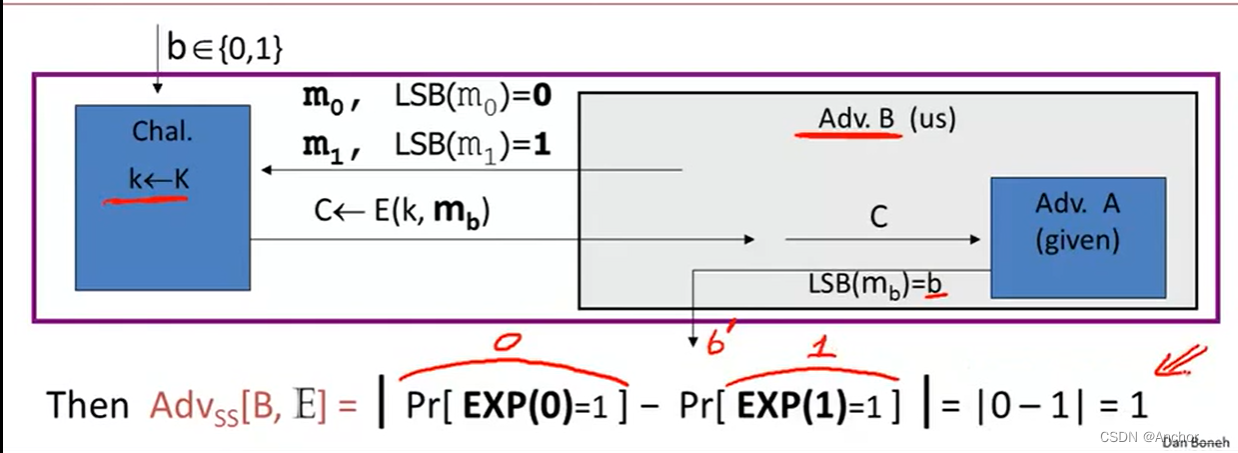

Suppose efficient

A

A

A can always deduce LSB of PT from CT

⇒

E

(

E

,

D

)

\Rightarrow E(E,D)

⇒E(E,D) is not semantically secure.

Any bit about the plaintext the adversary can be learned basically would mean that the system is not semantically secure.

Thm: G : K → { 0 , 1 } n G:K\to\{0,1\}^n G:K→{0,1}n is a secure PRG ⇒ \Rightarrow ⇒ stream cipher E E E derived from G G G is sem. sec.

⇓ \Downarrow ⇓ the same as to prove

∀

\forall

∀ sem. sec. adversary

A

A

A,

∃

\exist

∃ a PRG adversary

B

B

B,

s

.

t

.

A

d

v

S

S

[

A

,

E

]

≤

2

⋅

A

d

v

P

R

G

[

B

,

G

]

s.t. Adv_{SS}[A,E]\leq2\cdot Adv_{PRG}[B,G]

s.t.AdvSS[A,E]≤2⋅AdvPRG[B,G]

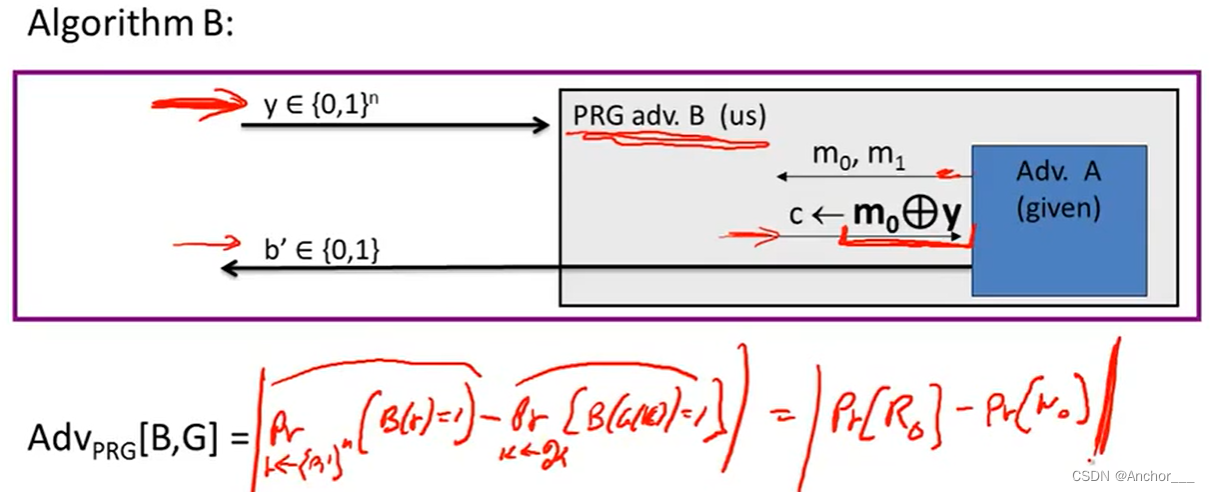

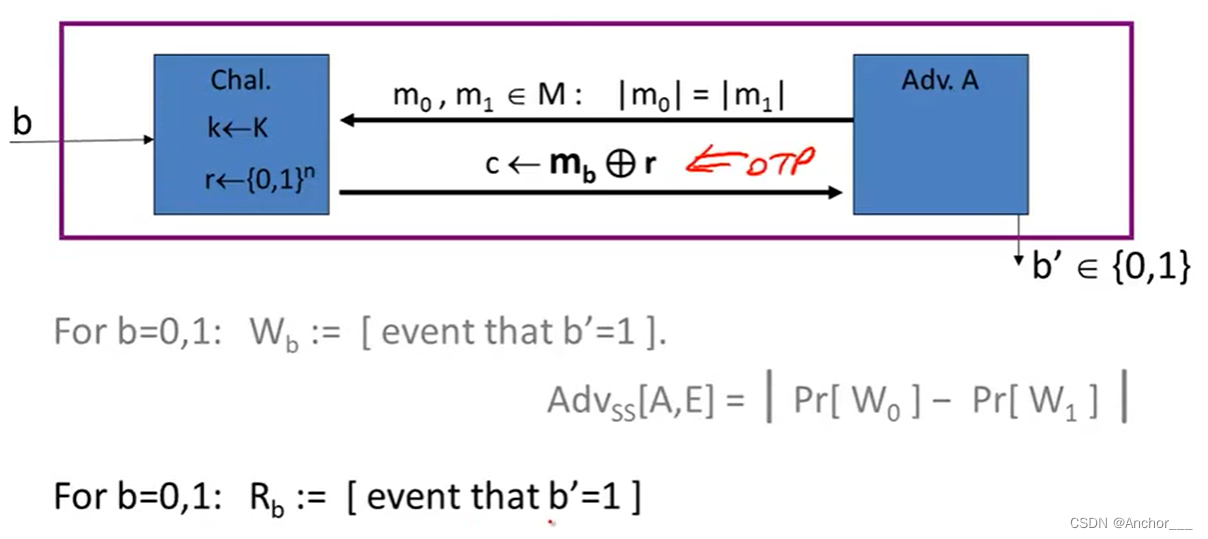

Proof:

Let

A

A

A be a sem. sec. adversary

Claim 1:

∣

P

[

R

0

]

−

P

[

R

1

]

∣

=

A

d

v

S

S

(

A

,

O

T

P

)

=

0

|P[R_0]-P[R_1]|=Adv_{SS}(A,OTP)=0

∣P[R0]−P[R1]∣=AdvSS(A,OTP)=0

Claim 2:

∃

B

:

∣

P

[

W

b

]

−

P

[

R

b

]

∣

=

A

d

v

P

R

G

(

B

,

G

)

f

o

r

b

=

0

,

1

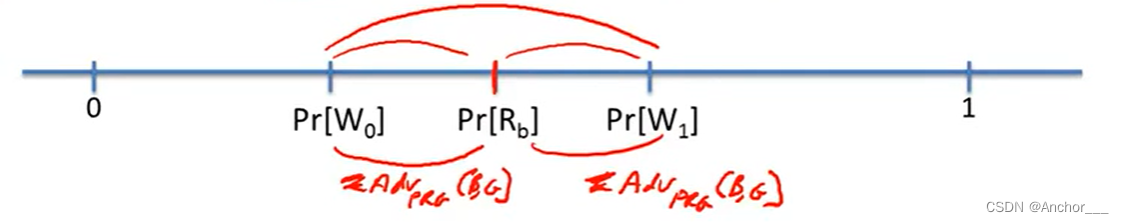

\exist B: |P[W_b]-P[R_b]|=Adv_{PRG}(B,G)\ for\ b=0,1

∃B:∣P[Wb]−P[Rb]∣=AdvPRG(B,G) for b=0,1

⇒

A

d

v

S

S

[

A

,

E

]

=

∣

P

[

W

0

]

−

P

[

W

1

]

∣

≤

2

⋅

A

d

v

P

R

G

[

B

,

G

]

\Rightarrow Adv_{SS}[A,E]=|P[W_0]-P[W_1]|\leq2\cdot Adv_{PRG}[B,G]

⇒AdvSS[A,E]=∣P[W0]−P[W1]∣≤2⋅AdvPRG[B,G]

Proof of Claim 2:

∃

B

:

∣

P

[

W

b

]

−

P

[

R

b

]

∣

=

A

d

v

P

R

G

(

B

,

G

)

\exist B: |P[W_b]-P[R_b]|=Adv_{PRG}(B,G)

∃B:∣P[Wb]−P[Rb]∣=AdvPRG(B,G)