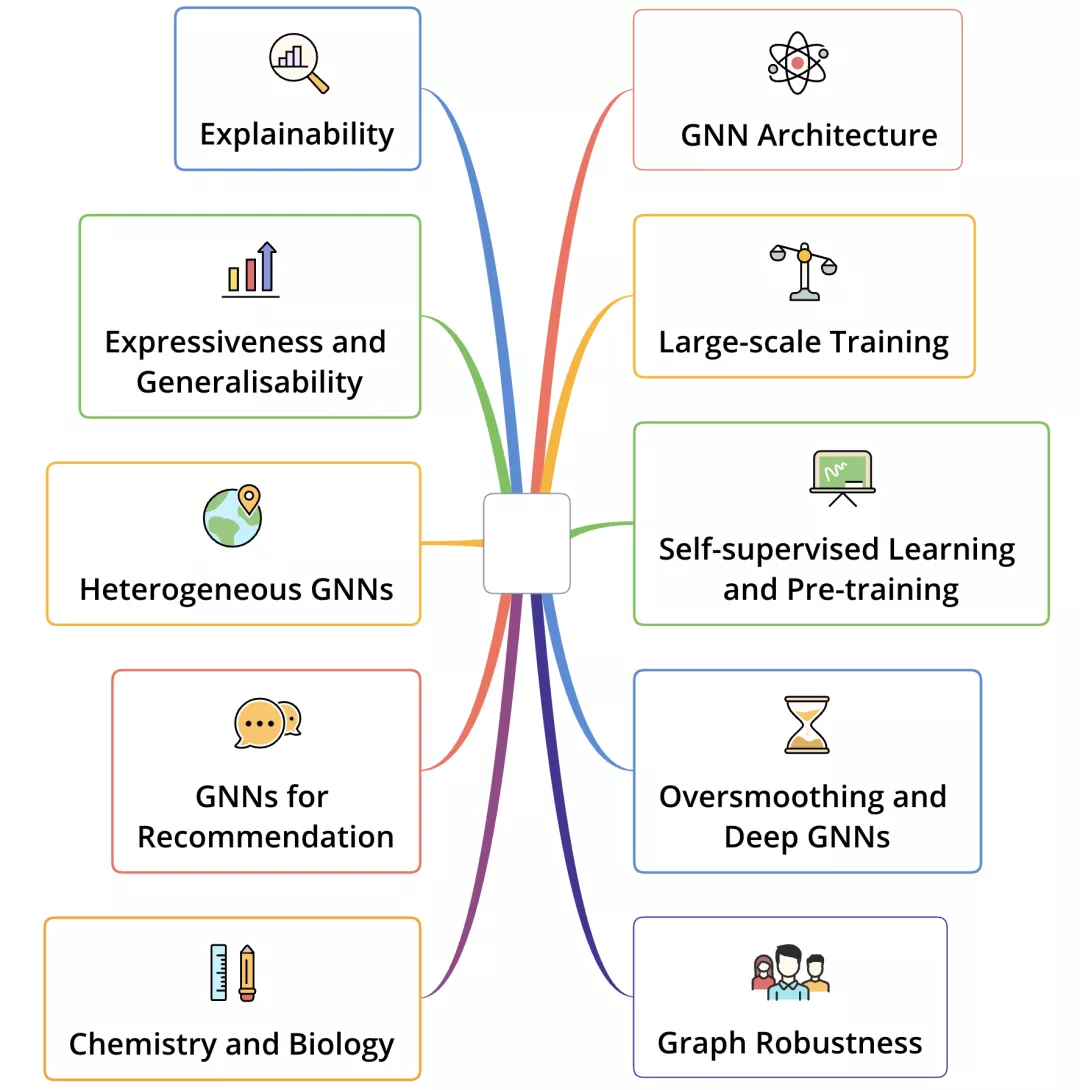

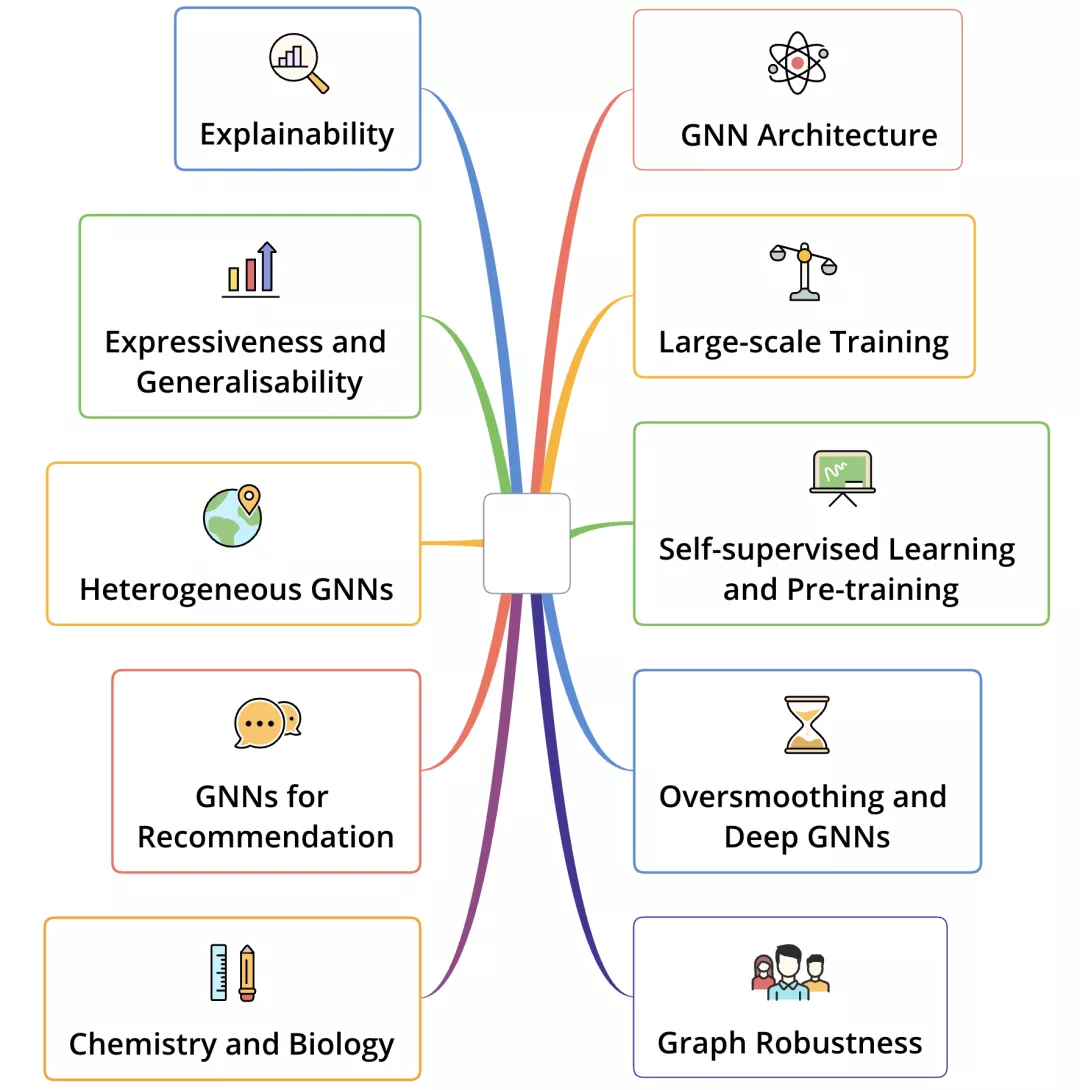

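Tsinghua University's Top 100 GNN papers list is split into ten topics with ten papers each. This post contains my reading notes for the self-supervised learning and pre-training topic.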

Source list: Top 100 GNN papers worth reading, a roundup by 蘑菇先生 (WeChat official account article) https://mp.weixin.qq.com/s?__biz=MzIyNDY5NjEzNQ==&mid=2247491631&idx=1&sn=dfa36e829a84494c99bb2d4f755717d6&chksm=e809a207df7e2b1117578afc86569fa29ee62eb883fd35428888c0cc0be750faa5ef091f9092&mpshare=1&scene=23&srcid=1026NUThrKm2Vioj874F3gqS&sharer_sharetime=1635227630762&sharer_shareid=80f244b289da8c80b67c915b10efd0a8#rd

Link to the architecture post: Top 100 GNN papers worth reading: Architecture (tagagi's blog, CSDN) https://blog.csdn.net/tagagi/article/details/121318308

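I also recommend a very good blog post, "Recent advances in graph contrastive learning". An excerpt: GCC, our proposed GRACE, and the concurrent GraphCL adopt a local-local approach, i.e., they directly use the node embeddings from two augmented views, which neatly avoids the need to design an injective readout function. … https://www.sohu.com/a/496046127_121124371

Paper list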

- Strategies for pre-training graph neural networks. Weihua Hu, Bowen Liu, Joseph Gomes, Marinka Zitnik, Percy Liang, Vijay Pande, Jure Leskovec. ICLR 2020.

- Deep graph infomax. Petar Veličković, William Fedus, William L. Hamilton, Pietro Liò, Yoshua Bengio, R Devon Hjelm. ICLR 2019.

- Inductive representation learning on large graphs. Will Hamilton, Zhitao Ying, Jure Leskovec. NeurIPS 2017.

- Infograph: Unsupervised and semi-supervised graph-level representation learning via mutual information maximization. Fan-Yun Sun, Jordan Hoffmann, Vikas Verma, Jian Tang. ICLR 2020.

- GCC: Graph contrastive coding for graph neural network pre-training. Jiezhong Qiu, Qibin Chen, Yuxiao Dong, Jing Zhang, Hongxia Yang, Ming Ding, Kuansan Wang, Jie Tang. KDD 2020.

- Contrastive multi-view representation learning on graphs. Kaveh Hassani, Amir Hosein Khasahmadi. ICML 2020.

- Graph contrastive learning with augmentations. Yuning You, Tianlong Chen, Yongduo Sui, Ting Chen, Zhangyang Wang, Yang Shen. NeurIPS 2020.

- GPT-GNN: Generative pre-training of graph neural networks. Ziniu Hu, Yuxiao Dong, Kuansan Wang, Kai-Wei Chang, Yizhou Sun. KDD 2020.

- When does self-supervision help graph convolutional networks? Yuning You, Tianlong Chen, Zhangyang Wang, Yang Shen. ICML 2020.

- Deep graph contrastive representation learning. Yanqiao Zhu, Yichen Xu, Feng Yu, Qiang Liu, Shu Wu, Liang Wang. GRL+@ICML 2020.

Contents

I. Strategies for Pre-training Graph Neural Networks

II. DGI: Deep Graph Infomax

III. GraphSAGE: Inductive Representation Learning on Large Graphs

IV. InfoGraph: Unsupervised and Semi-supervised Graph-Level Representation Learning via Mutual Information Maximization

V. GCC: Graph Contrastive Coding for Graph Neural Network Pre-Training

VI. Contrastive Multi-View Representation Learning on Graphs

VII. GraphCL: Graph Contrastive Learning with Augmentations

VIII. GPT-GNN: Generative Pre-Training of Graph Neural Networks

IX. When Does Self-Supervision Help Graph Convolutional Networks?

X. GRACE: Deep Graph Contrastive Representation Learning

I. Strategies for Pre-training Graph Neural Networks

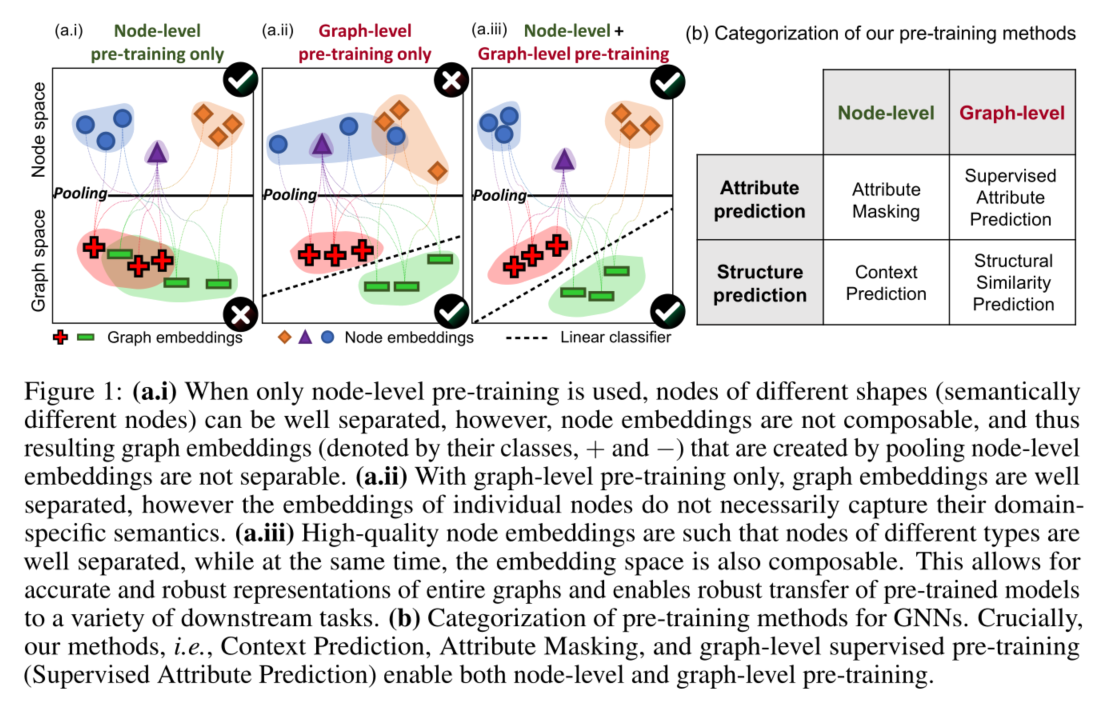

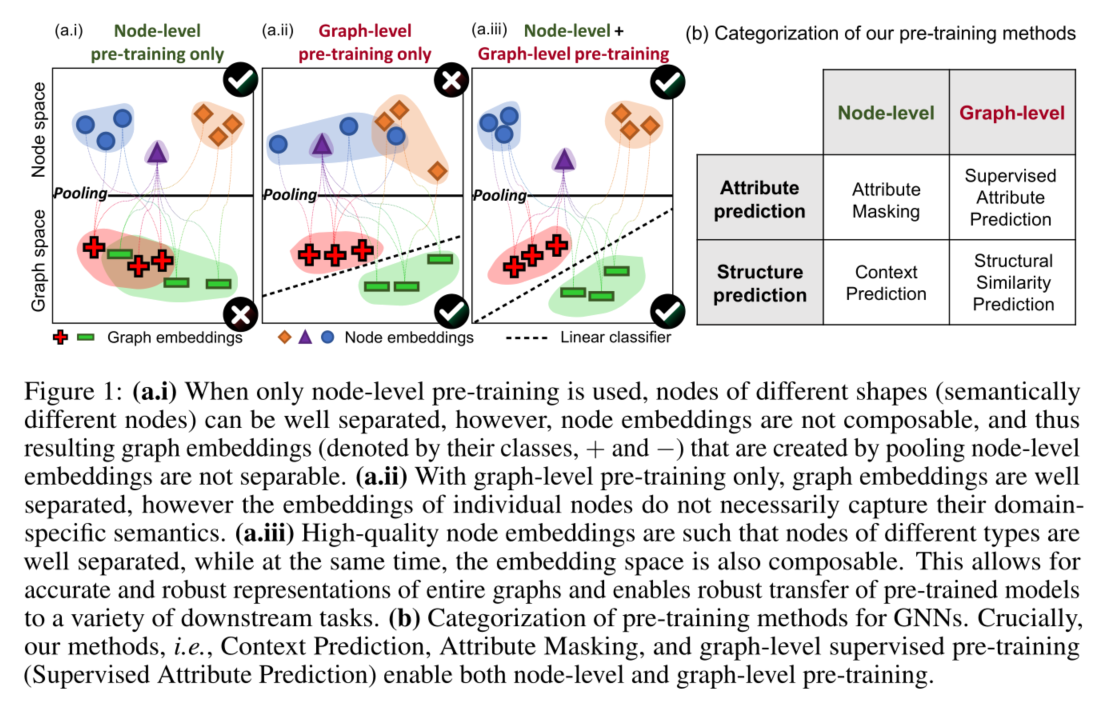

Main idea: the paper proposes a pre-training method for graph neural networks and reports an absolute improvement of up to 11.7% in ROC-AUC.
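To make "absolute improvement in ROC-AUC" concrete, here is a minimal sketch. It assumes the usual pairwise-ranking definition of ROC-AUC (the probability that a randomly chosen positive is scored above a randomly chosen negative). The function name `roc_auc` and every label and score below are made up for illustration; none of them come from the paper.

```python
# Illustrative only: the labels and scores are invented toy values,
# not results from the paper.

def roc_auc(labels, scores):
    """ROC-AUC as the fraction of (positive, negative) pairs ranked
    correctly; ties count as half a correct pair."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


labels = [1, 1, 0, 0]
baseline_scores = [0.9, 0.4, 0.6, 0.1]    # hypothetical model without pre-training
pretrained_scores = [0.9, 0.7, 0.6, 0.1]  # hypothetical model with pre-training

auc_baseline = roc_auc(labels, baseline_scores)
auc_pretrained = roc_auc(labels, pretrained_scores)

# "Absolute" improvement is a plain difference of the two metric values.
absolute_gain = auc_pretrained - auc_baseline
# "Relative" improvement divides that difference by the baseline value.
relative_gain = absolute_gain / auc_baseline

print(auc_baseline, auc_pretrained, absolute_gain, relative_gain)
```

With these toy numbers the baseline reaches 0.75 and the second model 1.0, so the absolute gain is 25 percentage points while the relative gain is about 33%. The figure quoted above is of the first kind.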

1 INTRODUCTION

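- Pre-training is a very effective form of transfer learning. It helps when:

  - task-specific labels are extremely scarce;

  - real-world applications contain out-of-distribution examples, i.e., the graphs in the training set and the test set differ substantially in structure.

- Challenge: existing work has not systematically studied pre-training on graphs, so it is unknown which pre-training strategies work and how well they perform in practice.

- Contributions:

  - Conducts the first systematic, large-scale study of pre-training strategies for GNNs.

  - Releases two large graph datasets for pre-training.

  - Shows that existing small benchmarks cannot evaluate pre-training in a statistically reliable way.

  - Proposes a principled pre-training strategy and demonstrates its effectiveness and its out-of-distribution generalization on hard transfer learning problems.

- Pre-training a GNN does not always help; many strategies cause negative transfer on downstream tasks.

- An effective way to pre-train a GNN: use easily accessible node-level information and encourage the model to learn domain-specific knowledge about nodes and edges.

- This idea is crucial for producing graph-level representations (obtained by pooling node representations) that are robust and transfer to different downstream tasks.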
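The last point says that a graph-level representation is obtained by pooling node representations. The sketch below illustrates that idea in plain Python under strong simplifying assumptions: one message-passing step that averages a node's vector with its neighbors' vectors (no learned weights), followed by mean pooling over all nodes. The function names `mean_aggregate_layer` and `mean_pool_readout` and the toy graph are hypothetical and are not taken from the paper's code.

```python
# Sketch only: a toy, weight-free message-passing step plus a mean-pooling
# readout. Function names and the example graph are hypothetical.

def mean_aggregate_layer(features, neighbors):
    """One simplified message-passing step: each node's new vector is the
    element-wise mean of its own vector and its neighbors' vectors."""
    updated = {}
    for node, own in features.items():
        group = [own] + [features[n] for n in neighbors.get(node, [])]
        dim = len(own)
        updated[node] = [sum(vec[i] for vec in group) / len(group)
                         for i in range(dim)]
    return updated


def mean_pool_readout(features):
    """Graph-level representation: element-wise mean of all node vectors."""
    vectors = list(features.values())
    dim = len(vectors[0])
    return [sum(vec[i] for vec in vectors) / len(vectors) for i in range(dim)]


# Toy path graph 0 - 1 - 2 with 2-dimensional node features.
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
neighbors = {0: [1], 1: [0, 2], 2: [1]}

node_repr = mean_aggregate_layer(features, neighbors)  # node-level vectors
graph_repr = mean_pool_readout(node_repr)              # one vector per graph

print(node_repr)
print(graph_repr)
```

Because the graph vector is just a pooled summary of the node vectors, poor node representations directly limit the quality of the graph representation, which is one way to read the emphasis on node-level pre-training above.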

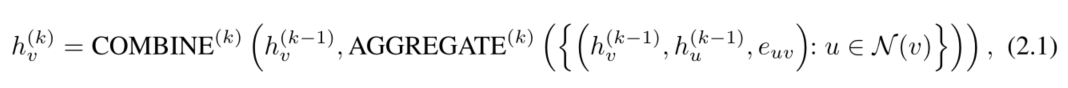

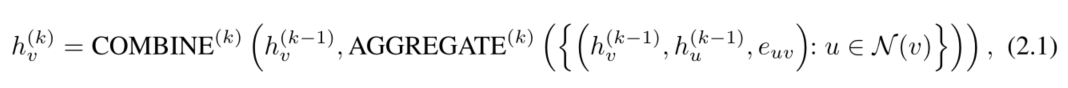

2 PRELIMINARIES ON GRAPH NEURAL NETWORKS

- GNNs:

- To obtain a graph-level representation, at the final iteration (
