A series of problems encountered while running BART from Hugging Face

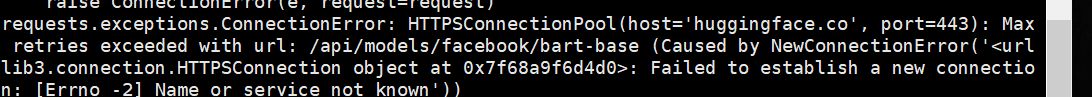

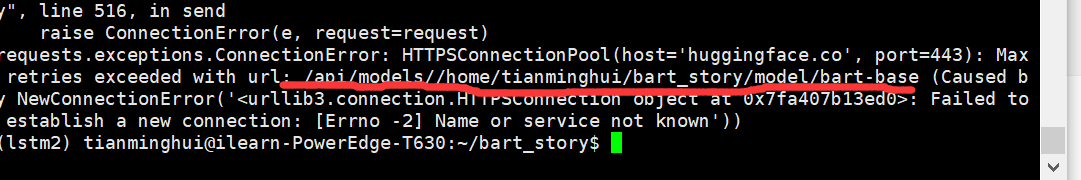

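1. Cannot connect to Hugging Face

Attempt 1:

Download the model with git or wget:

Failed.

Attempt 2:

Download the model files from the official site, upload them to the server, and change the model path in the code to the local path.

For the download steps, see this blog post.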
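As a rough illustration of the local-path approach, the sketch below checks that a downloaded directory looks complete and then loads it with `from_pretrained`. The file list, the helper names, and the choice of `BartForConditionalGeneration` are assumptions for illustration; the original code may use a different BART class or checkpoint layout.

```python
from pathlib import Path

# Files a BART checkpoint directory is assumed to contain. This follows the
# usual Hugging Face layout; newer checkpoints may ship model.safetensors
# instead of pytorch_model.bin, so adjust the list if needed.
REQUIRED_FILES = ("config.json", "pytorch_model.bin", "vocab.json", "merges.txt")


def missing_model_files(model_dir):
    """Return the names in REQUIRED_FILES that are absent from model_dir."""
    root = Path(model_dir)
    return [name for name in REQUIRED_FILES if not (root / name).is_file()]


def load_local_bart(model_dir):
    """Load tokenizer and model from a local directory (hypothetical helper)."""
    missing = missing_model_files(model_dir)
    if missing:
        raise FileNotFoundError(f"{model_dir} is missing: {', '.join(missing)}")
    # Imported lazily so the file check works even without transformers installed.
    from transformers import BartForConditionalGeneration, BartTokenizer

    tokenizer = BartTokenizer.from_pretrained(model_dir)
    model = BartForConditionalGeneration.from_pretrained(model_dir)
    return tokenizer, model
```

Passing a directory path instead of a model name is what lets `from_pretrained` read the uploaded files rather than trying to reach the hub.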

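I'm not sure why the path simply ended up being appended, but it doesn't matter. I also don't know exactly what I changed in the end, but it worked: the model loaded successfully, and a different error appeared: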

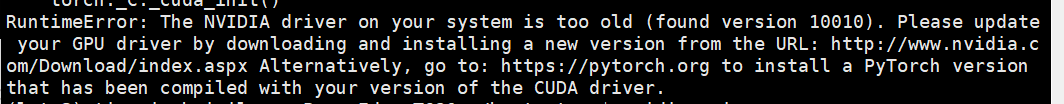

2. NVIDIA driver version too old

Will fix it this afternoon; I'm tired.

RuntimeError: The NVIDIA driver on your system is too old (found version 10010). Please update your GPU driver by downloading and installing a new version from the URL: http://www.nvidia.com/Download/index.aspx Alternatively, go to: https://pytorch.org to install a PyTorch version that has been compiled with your version of the CUDA driver.

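It seems I can't simply install the latest PyTorch, because it doesn't match the CUDA version (I think), so I need to find out how to install a specific PyTorch version. Also: yesterday I apparently wiped out, by accident, the PyTorch in another environment that already had CUDA set up. Once I find out how to install PyTorch 1.1.0, remember to reinstall it in that environment.

BART should require PyTorch >= 1.6.0.

The install commands for matching CUDA, PyTorch, and torchvision versions are listed on this page: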

https://pytorch.org/get-started/previous-versions/#conda-3

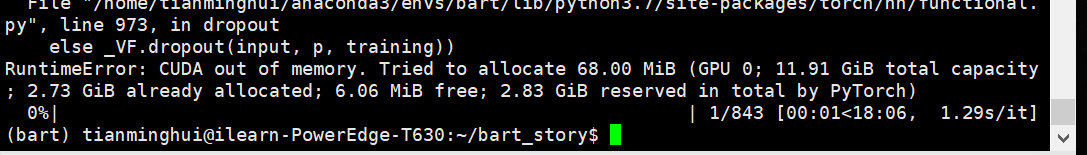
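To pick the right line from that page it helps to know which CUDA version the driver supports. The number in the error message (10010) appears to use CUDA's integer encoding, 1000 * major + 10 * minor, which would make it 10.1. The sketch below decodes it and, separately, prints what the installed PyTorch was built for. Both function names are hypothetical helpers.

```python
def decode_cuda_version(raw):
    """Split CUDA's integer version (1000 * major + 10 * minor) into (major, minor)."""
    return raw // 1000, (raw % 1000) // 10


def report_torch_cuda():
    """Print the PyTorch version and the CUDA version it was compiled against."""
    # Imported lazily so decode_cuda_version works without PyTorch installed.
    import torch

    print("torch:", torch.__version__)
    print("built for CUDA:", torch.version.cuda)
    print("CUDA available:", torch.cuda.is_available())


print(decode_cuda_version(10010))  # the value from the RuntimeError above
```

If the driver tops out at CUDA 10.1, the matching entry for PyTorch 1.6.0 on the previous-versions page should be along the lines of `conda install pytorch==1.6.0 torchvision==0.7.0 cudatoolkit=10.1 -c pytorch`; check it against the page before running it.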

3. Not enough GPU memory

Check GPU memory usage:

nvidia-smi
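On a shared machine it can be easier to read the numbers programmatically. A minimal sketch, assuming `nvidia-smi` is on the PATH: it uses the tool's CSV query mode and picks the card with the most free memory. The helper names and the sample rows in the usage lines are made up for illustration.

```python
import subprocess

# nvidia-smi CSV query: one row per GPU, values in MiB, no header or units.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_memory(csv_text):
    """Parse 'index, used, total' rows (MiB) into a list of dicts."""
    gpus = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field.strip()) for field in line.split(","))
        gpus.append({"index": index, "used": used, "total": total, "free": total - used})
    return gpus


def freest_gpu(gpus):
    """Return the index of the GPU with the most free memory."""
    return max(gpus, key=lambda gpu: gpu["free"])["index"]


def query_gpus():
    """Run nvidia-smi and parse its output (requires an NVIDIA driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_memory(out)


# Usage with invented sample rows instead of a live query:
sample = "0, 11000, 12196\n3, 500, 12196\n"
print(freest_gpu(parse_gpu_memory(sample)))
```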

Try switching to GPU 3.

The statement that selects which GPU to use:

os.environ['CUDA_VISIBLE_DEVICES']='3'
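Two details are easy to miss here, so here is a small sketch. The variable has to be set before CUDA is initialized, and the safest place is before `import torch`. Once it is set, the visible cards are renumbered from 0, so a later error that mentions "GPU 0" may still refer to physical card 3. `select_gpu` is a hypothetical helper name.

```python
import os


def select_gpu(index):
    """Expose only one physical GPU to this process; call before importing torch."""
    os.environ["CUDA_VISIBLE_DEVICES"] = str(index)
    return os.environ["CUDA_VISIBLE_DEVICES"]


select_gpu(3)

# Import torch only after the variable is set:
# import torch
# device = torch.device("cuda:0")  # physical GPU 3 now appears as cuda:0
```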

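It still fails, though; probably none of the cards has enough free memory left.

This code already contains the statements that disable gradient tracking, so adding them again won't help. Blog posts on disabling gradients: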

https://www.cnblogs.com/dyc99/p/12664126.html

https://blog.csdn.net/weixin_43760844/article/details/113462431
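The traceback further down shows the failure inside `loss.backward()` in `train_one_epoch`, a training step where gradients are required, so disabling gradients cannot help at that point. The usual remedies are a smaller batch size, a shorter maximum sequence length, or gradient accumulation. Below is a minimal sketch of accumulation, assuming each batch is an `(inputs, targets)` pair and that the objects behave like their PyTorch counterparts. The real loop in `main.py` is not shown in these notes, so every name here is hypothetical.

```python
def train_with_accumulation(model, dataloader, optimizer, criterion, accum_steps=4):
    """Run one epoch, updating weights once every accum_steps small batches.

    Returns the number of optimizer updates performed.
    """
    optimizer.zero_grad()
    num_updates = 0
    pending = 0  # batches whose gradients are accumulated but not yet applied
    for inputs, targets in dataloader:
        loss = criterion(model(inputs), targets)
        # Scale so the summed gradients approximate one large-batch average.
        (loss / accum_steps).backward()
        pending += 1
        if pending == accum_steps:
            optimizer.step()
            optimizer.zero_grad()
            num_updates += 1
            pending = 0
    if pending:
        # Flush a trailing partial group (its gradients are slightly under-weighted).
        optimizer.step()
        optimizer.zero_grad()
        num_updates += 1
    return num_updates
```

With the dataloader batch size divided by `accum_steps`, the effective batch size stays about the same while each forward and backward pass holds fewer activations in memory.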

Will revisit tomorrow morning when nobody is using the cards.

A card freed up, but it still fails. I'll go read papers first.

load reference!

0%| | 0/843 [00:00<?, ?it/s]/home/tianminghui/anaconda3/envs/bart/lib/python3.7/site-packages/transformers/tokenization_utils_base.py:2217: FutureWarning: The `pad_to_max_length` argument is deprecated and will be removed in a future version, use `padding=True` or `padding='longest'` to pad to the longest sequence in the batch, or use `padding='max_length'` to pad to a max length. In this case, you can give a specific length with `max_length` (e.g. `max_length=45`) or leave max_length to None to pad to the maximal input size of the model (e.g. 512 for Bert).

FutureWarning,
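The warning itself names the fix: replace `pad_to_max_length=True` with the `padding` argument. A minimal sketch of what the tokenizer call might look like afterwards. `encode_batch` and the default `max_length=128` are hypothetical, since the original tokenization code is not shown.

```python
def encode_batch(tokenizer, texts, max_length=128):
    """Tokenize texts with the non-deprecated padding arguments."""
    return tokenizer(
        texts,
        padding="max_length",  # replaces the deprecated pad_to_max_length=True
        truncation=True,       # cut sequences longer than max_length
        max_length=max_length,
        return_tensors="pt",
    )
```

Padding to a shorter `max_length`, or using `padding="longest"` so each batch is only as long as its longest sequence, also reduces the memory used per step, which matters for the out-of-memory error that follows.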

epoch 1, step 0, loss 5.0370: 0%| | 1/843 [00:02<05:19, 2.63it/s]Traceback (most recent call last):

File "main.py", line 217, in <module>

train_one_epoch(config, train_dataloader, model, optimizer, criterion, epoch + 1)

File "main.py", line 85, in train_one_epoch

loss.backward()

File "/home/tianminghui/anaconda3/envs/bart/lib/python3.7/site-packages/torch/tensor.py", line 185, in backward

torch.autograd.backward(self, gradient, retain_graph, create_graph)

File "/home/tianminghui/anaconda3/envs/bart/lib/python3.7/site-packages/torch/autograd/__init__.py", line 127, in backward

allow_unreachable=True)

RuntimeError: CUDA out of memory. Tried to allocate 1.08 GiB (GPU 0; 11.91 GiB total capacity; 10.66 GiB already allocated; 377.06 MiB free; 10.95 GiB reserved in total by PyTorch)

Exception raised from malloc at /opt/conda/conda-bld/pytorch_1595629403081/work/c10/cuda/CUDACachingAllocator.cpp:272 (most recent call first):

epoch 1, step 0, loss 5.0370: 0%| | 1/843 [00:03<48:19, 3.44s/it
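The numbers in the out-of-memory message show how far off the allocation was. The sketch below parses the message and estimates the shortfall, on the simplifying assumption that all memory PyTorch has reserved but not allocated could be reused for the request; in practice fragmentation can make things worse. The function names are hypothetical.

```python
import re

UNITS = {"MiB": 1.0, "GiB": 1024.0}
SIZE = r"([\d.]+) (MiB|GiB)"


def parse_oom(message):
    """Extract the sizes in a PyTorch CUDA OOM message, converted to MiB."""
    def grab(pattern):
        value, unit = re.search(pattern, message).groups()
        return float(value) * UNITS[unit]

    return {
        "requested": grab(r"Tried to allocate " + SIZE),
        "total": grab(SIZE + r" total capacity"),
        "allocated": grab(SIZE + r" already allocated"),
        "free": grab(SIZE + r" free"),
        "reserved": grab(SIZE + r" reserved"),
    }


def shortfall_mib(sizes):
    """MiB still missing even if PyTorch's cached-but-unused memory were reused."""
    cached = sizes["reserved"] - sizes["allocated"]
    return sizes["requested"] - (sizes["free"] + cached)


message = (
    "CUDA out of memory. Tried to allocate 1.08 GiB (GPU 0; 11.91 GiB total capacity; "
    "10.66 GiB already allocated; 377.06 MiB free; 10.95 GiB reserved in total by PyTorch)"
)
print(round(shortfall_mib(parse_oom(message)), 1))
```

For the message above, the request is roughly 430 MiB larger than the free plus cached memory, so the step needs to use less memory (smaller batch or shorter sequences). Waiting for an idle card of the same size would not obviously help, because 10.66 GiB of the 11.91 GiB was already allocated by this process.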
