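This post follows my previous one, Hikvision Industrial Camera SDK Secondary Development (VS + OpenCV + Qt + Hikvision SDK + C++) (Part 1). That post did not use Qt; this one describes the project and provides the source code for reference.

In my project the Hikvision camera only serves as the image-capture front end, and the real focus is object detection, so feel free to skip the detection-related parts when reading the source.

If you are interested in object detection, see my YOLO series: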

https://blog.csdn.net/qq_45445740/category_9794819.html

Contents

- 1. Overview

- 2. Source code

- MvCamera.h

- mythread.h

- PcbDetectv3.h

- main.cpp

- PcbDetectv3.cpp

- MvCamera.cpp

- mythread.cpp

- Result

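1. Overview

1.1 Environment setup

For the software versions and environment configuration I use in Visual Studio, see

Hikvision Industrial Camera SDK Secondary Development (VS + OpenCV + Qt + Hikvision SDK + C++) (Part 1), which describes the hardware and software in detail.

1.2 Background

A brief description of the project requirements: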

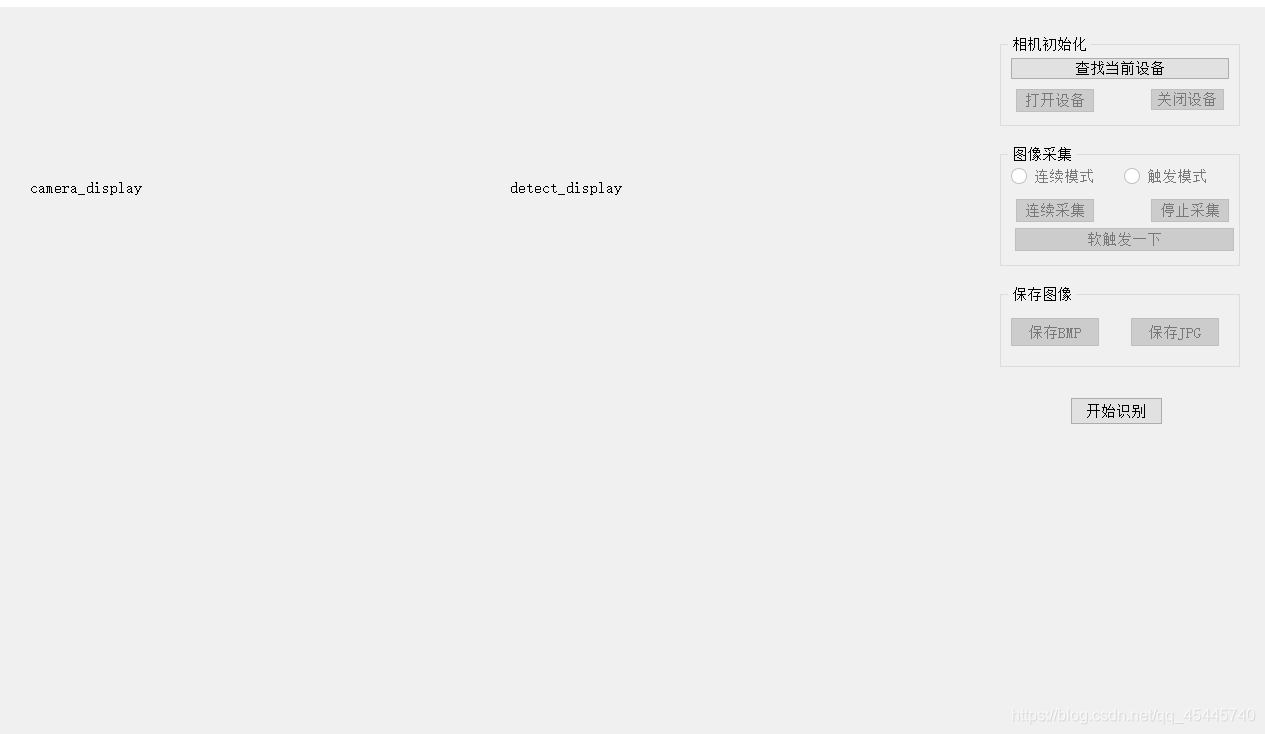

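The camera photographs the object, object detection then runs on the image, and the position of each detected target is returned. The camera is a Hikvision industrial camera, and the user interface is built with Qt.

Interface features: search for the cameras currently connected, then acquire images either continuously or in triggered mode.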
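The two acquisition modes determine which buttons are usable. Below is a minimal sketch of that logic, independent of Qt and the camera SDK. The names `AcquisitionMode`, `ButtonState`, and `buttonsFor` are invented for illustration and do not appear in the project. The sketch also disables the trigger-once button in continuous mode, which is a simplification of what the project's slots do.

```cpp
// Hypothetical names for illustration; the project itself tracks the mode
// with an int member and enables widgets directly inside its slots.
enum class AcquisitionMode { Continuous, SoftwareTrigger };

struct ButtonState {
    bool startGrabbing;  // is the "start grabbing" button enabled?
    bool softwareOnce;   // is the "trigger once" button enabled?
};

// Continuous mode streams frames after "start grabbing" is clicked;
// trigger mode captures one frame per click on "trigger once".
inline ButtonState buttonsFor(AcquisitionMode mode) {
    if (mode == AcquisitionMode::Continuous) {
        return ButtonState{true, false};
    }
    return ButtonState{false, true};
}
```

Keeping this mapping in one function makes it easier to check that the two radio buttons never leave the interface in a contradictory state.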

2. Source code

MvCamera.h

#ifndef _MV_CAMERA_H_

#define _MV_CAMERA_H_

#include "MvCameraControl.h"

#include <string.h>

#ifndef MV_NULL

#define MV_NULL 0

#endif

#include"opencv2/opencv.hpp"

#include"opencv2/imgproc/types_c.h"

using namespace cv;

class CMvCamera

{

public:

CMvCamera();

~CMvCamera();

static int GetSDKVersion();

static int EnumDevices(unsigned int nTLayerType, MV_CC_DEVICE_INFO_LIST* pstDevList);

static bool IsDeviceAccessible(MV_CC_DEVICE_INFO* pstDevInfo, unsigned int nAccessMode);

int Open(MV_CC_DEVICE_INFO* pstDeviceInfo);

int Close();

bool IsDeviceConnected();

int RegisterImageCallBack(void(__stdcall* cbOutput)(unsigned char* pData, MV_FRAME_OUT_INFO_EX* pFrameInfo, void* pUser), void* pUser);

int StartGrabbing();

int StopGrabbing();

int GetImageBuffer(MV_FRAME_OUT* pFrame, int nMsec);

int FreeImageBuffer(MV_FRAME_OUT* pFrame);

int GetOneFrameTimeout(unsigned char* pData, unsigned int* pnDataLen, unsigned int nDataSize, MV_FRAME_OUT_INFO_EX* pFrameInfo, int nMsec);

int DisplayOneFrame(MV_DISPLAY_FRAME_INFO* pDisplayInfo);

int SetImageNodeNum(unsigned int nNum);

int GetDeviceInfo(MV_CC_DEVICE_INFO* pstDevInfo);

int GetGevAllMatchInfo(MV_MATCH_INFO_NET_DETECT* pMatchInfoNetDetect);

int GetU3VAllMatchInfo(MV_MATCH_INFO_USB_DETECT* pMatchInfoUSBDetect);

int GetIntValue(IN const char* strKey, OUT unsigned int* pnValue);

int SetIntValue(IN const char* strKey, IN int64_t nValue);

int GetEnumValue(IN const char* strKey, OUT MVCC_ENUMVALUE* pEnumValue);

int SetEnumValue(IN const char* strKey, IN unsigned int nValue);

int SetEnumValueByString(IN const char* strKey, IN const char* sValue);

int GetFloatValue(IN const char* strKey, OUT MVCC_FLOATVALUE* pFloatValue);

int SetFloatValue(IN const char* strKey, IN float fValue);

int GetBoolValue(IN const char* strKey, OUT bool* pbValue);

int SetBoolValue(IN const char* strKey, IN bool bValue);

int GetStringValue(IN const char* strKey, MVCC_STRINGVALUE* pStringValue);

int SetStringValue(IN const char* strKey, IN const char* strValue);

int CommandExecute(IN const char* strKey);

int GetOptimalPacketSize(unsigned int* pOptimalPacketSize);

int RegisterExceptionCallBack(void(__stdcall* cbException)(unsigned int nMsgType, void* pUser), void* pUser);

int RegisterEventCallBack(const char* pEventName, void(__stdcall* cbEvent)(MV_EVENT_OUT_INFO* pEventInfo, void* pUser), void* pUser);

int ForceIp(unsigned int nIP, unsigned int nSubNetMask, unsigned int nDefaultGateWay);

int SetIpConfig(unsigned int nType);

int SetNetTransMode(unsigned int nType);

int ConvertPixelType(MV_CC_PIXEL_CONVERT_PARAM* pstCvtParam);

int SaveImage(MV_SAVE_IMAGE_PARAM_EX* pstParam);

int SaveImageToFile(MV_SAVE_IMG_TO_FILE_PARAM* pstParam);

int setTriggerMode(unsigned int TriggerModeNum);

int setTriggerSource(unsigned int TriggerSourceNum);

int softTrigger();

int ReadBuffer(cv::Mat& image);

public:

void* m_hDevHandle;

unsigned int m_nTLayerType;

public:

unsigned char* m_pBufForSaveImage;

unsigned int m_nBufSizeForSaveImage;

unsigned char* m_pBufForDriver;

unsigned int m_nBufSizeForDriver;

};

#endif

#pragma once
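Most methods of `CMvCamera` return the SDK's integer status code, and the GUI code in this post often discards it. Below is a minimal sketch of a logging helper that makes failures visible. `describeStatus` is a hypothetical name, not part of the Hikvision SDK or of this project, and the sketch assumes 0 means success, as `setTriggerMode` in MvCamera.cpp does when it tests `!= 0`.

```cpp
#include <cstdio>
#include <string>

// Hypothetical helper, not part of the Hikvision SDK or the original project.
// Assumes a status of 0 means success.
inline std::string describeStatus(const std::string& what, int code) {
    if (code == 0) {
        return what + ": ok";
    }
    char hex[16];
    // Print the code as unsigned hex so negative values stay readable.
    std::snprintf(hex, sizeof(hex), "0x%08X", static_cast<unsigned int>(code));
    return what + " failed: " + hex;
}
```

A call site could then log something like `describeStatus("StartGrabbing", camera->StartGrabbing())` instead of ignoring the return value.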

mythread.h

#ifndef MYTHREAD_H

#define MYTHREAD_H

#include "QThread"

#include "MvCamera.h"

#include "opencv2/opencv.hpp"

#include "opencv2/core/core.hpp"

#include "opencv2/calib3d/calib3d.hpp"

#include "opencv2/highgui/highgui.hpp"

#include <vector>

#include <string>

#include <algorithm>

#include <iostream>

#include <iterator>

#include <stdio.h>

#include <stdlib.h>

#include <ctype.h>

using namespace std;

using namespace cv;

class MyThread :public QThread

{

Q_OBJECT

public:

MyThread();

~MyThread();

void run();

void getCameraPtr(CMvCamera* camera);

void getImagePtr(Mat* image);

void getCameraIndex(int index);

signals:

void mess();

void Display(const Mat* image, int index);

private:

CMvCamera* cameraPtr = NULL;

cv::Mat* imagePtr = NULL;

int cameraIndex = 0;

int TriggerMode;

};

#endif

#pragma once

PcbDetectv3.h

#pragma once

#include <QtWidgets/QWidget>

#include <QMessageBox>

#include <QCloseEvent>

#include <QSettings>

#include <QDebug>

#include<opencv2\opencv.hpp>

#include<opencv2\dnn.hpp>

#include<fstream>

#include<iostream>

#include "MvCamera.h"

#include "mythread.h"

#include "ui_PcbDetectv3.h"

using namespace std;

using namespace cv;

using namespace cv::dnn;

#define MAX_DEVICE_NUM 2

class PcbDetectv3 : public QWidget

{

Q_OBJECT

public:

PcbDetectv3(QWidget *parent = Q_NULLPTR);

private:

Ui::PcbDetectv3Class ui;

public:

CMvCamera* m_pcMyCamera[MAX_DEVICE_NUM];

MV_CC_DEVICE_INFO_LIST m_stDevList;

cv::Mat* myImage_L = new cv::Mat();

cv::Mat* myImage_R = new cv::Mat();

int devices_num;

private slots:

void OnBnClickedEnumButton();

void OnBnClickedOpenButton();

void OnBnClickedCloseButton();

void Img_display();

void display_myImage_L(const Mat* imagePrt, int cameraIndex);

void display_myImage_R(const Mat* imagePrt, int cameraIndex);

void OnBnClickedContinusModeRadio();

void OnBnClickedTriggerModeRadio();

void OnBnClickedStartGrabbingButton();

void OnBnClickedStopGrabbingButton();

void OnBnClickedSoftwareOnceButton();

void OnBnClickedSaveBmpButton();

void OnBnClickedSaveJpgButton();

void StartRecognize();

private:

void OpenDevices();

void CloseDevices();

public:

MyThread* myThread_LeftCamera = NULL;

MyThread* myThread_RightCamera = NULL;

private slots:

void SetTriggerMode(int m_nTriggerMode);

int GetTriggerMode();

int GetExposureTime();

int GetGain();

int GetFrameRate();

void SaveImage();

public:

bool m_bOpenDevice;

bool m_bStartGrabbing;

int m_nTriggerMode;

int m_bContinueStarted;

MV_SAVE_IAMGE_TYPE m_nSaveImageType;

};

main.cpp

#include "PcbDetectv3.h"

#include <QtWidgets/QApplication>

int main(int argc, char *argv[])

{

QApplication a(argc, argv);

PcbDetectv3 w;

w.show();

return a.exec();

}

PcbDetectv3.cpp

#include "PcbDetectv3.h"

#include <QWidget>

#include <QValidator>

#define TRIGGER_SOURCE 7

#define EXPOSURE_TIME 40000

#define FRAME 30

#define TRIGGER_ON 1

#define TRIGGER_OFF 0

#define START_GRABBING_ON 1

#define START_GRABBING_OFF 0

#define IMAGE_NAME_LEN 64

PcbDetectv3::PcbDetectv3(QWidget *parent): QWidget(parent)

{

ui.setupUi(this);

ui.bntEnumDevices->setEnabled(true);

ui.bntCloseDevices->setEnabled(false);

ui.bntOpenDevices->setEnabled(false);

ui.rbnt_Continue_Mode->setEnabled(false);

ui.rbnt_SoftTigger_Mode->setEnabled(false);

ui.bntStartGrabbing->setEnabled(false);

ui.bntStopGrabbing->setEnabled(false);

ui.bntSoftwareOnce->setEnabled(false);

ui.bntSave_BMP->setEnabled(false);

ui.bntSave_JPG->setEnabled(false);

myThread_LeftCamera = new MyThread;

myThread_RightCamera = new MyThread;

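// myImage_L and myImage_R are already allocated by their in-class initializers in PcbDetectv3.h.

// Assign to the members here; redeclaring them with a type would create locals and leave the members uninitialized.

devices_num = 0;

m_nTriggerMode = TRIGGER_ON;

m_bStartGrabbing = START_GRABBING_ON;

m_bContinueStarted = 0;

m_nSaveImageType = MV_Image_Bmp;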

connect(myThread_LeftCamera, SIGNAL(Display(const Mat*, int)), this, SLOT(display_myImage_L(const Mat*, int)));

connect(myThread_RightCamera, SIGNAL(Display(const Mat*, int)), this, SLOT(display_myImage_R(const Mat*, int)));

connect(ui.bntEnumDevices, SIGNAL(clicked()), this, SLOT(OnBnClickedEnumButton()));

connect(ui.bntOpenDevices, SIGNAL(clicked()), this, SLOT(OnBnClickedOpenButton()));

connect(ui.bntCloseDevices, SIGNAL(clicked()), this, SLOT(OnBnClickedCloseButton()));

connect(ui.rbnt_Continue_Mode, SIGNAL(clicked()), this, SLOT(OnBnClickedContinusModeRadio()));

connect(ui.rbnt_SoftTigger_Mode, SIGNAL(clicked()), this, SLOT(OnBnClickedTriggerModeRadio()));

connect(ui.bntStartGrabbing, SIGNAL(clicked()), this, SLOT(OnBnClickedStartGrabbingButton()));

connect(ui.bntStopGrabbing, SIGNAL(clicked()), this, SLOT(OnBnClickedStopGrabbingButton()));

connect(ui.bntSoftwareOnce, SIGNAL(clicked()), this, SLOT(OnBnClickedSoftwareOnceButton()));

connect(ui.bntSave_BMP, SIGNAL(clicked()), this, SLOT(OnBnClickedSaveBmpButton()));

connect(ui.bntSave_JPG, SIGNAL(clicked()), this, SLOT(OnBnClickedSaveJpgButton()));

}

void PcbDetectv3::OnBnClickedEnumButton()

{

memset(&m_stDevList, 0, sizeof(MV_CC_DEVICE_INFO_LIST));

int nRet = MV_OK;

nRet = CMvCamera::EnumDevices(MV_GIGE_DEVICE | MV_USB_DEVICE, &m_stDevList);

devices_num = m_stDevList.nDeviceNum;

if (devices_num > 0)

{

ui.bntOpenDevices->setEnabled(true);

}

}

void PcbDetectv3::OpenDevices()

{

int nRet = MV_OK;

for (unsigned int i = 0, j = 0; j < m_stDevList.nDeviceNum; j++, i++)

{

m_pcMyCamera[i] = new CMvCamera;

m_pcMyCamera[i]->m_pBufForDriver = NULL;

m_pcMyCamera[i]->m_pBufForSaveImage = NULL;

m_pcMyCamera[i]->m_nBufSizeForDriver = 0;

m_pcMyCamera[i]->m_nBufSizeForSaveImage = 0;

m_pcMyCamera[i]->m_nTLayerType = m_stDevList.pDeviceInfo[j]->nTLayerType;

nRet = m_pcMyCamera[i]->Open(m_stDevList.pDeviceInfo[j]);

m_pcMyCamera[i]->setTriggerMode(TRIGGER_ON);

m_pcMyCamera[i]->setTriggerSource(TRIGGER_SOURCE);

}

}

void PcbDetectv3::OnBnClickedOpenButton()

{

ui.bntOpenDevices->setEnabled(false);

ui.bntCloseDevices->setEnabled(true);

ui.rbnt_Continue_Mode->setEnabled(true);

ui.rbnt_SoftTigger_Mode->setEnabled(true);

ui.rbnt_Continue_Mode->setCheckable(true);

OpenDevices();

}

void PcbDetectv3::CloseDevices()

{

for (unsigned int i = 0; i < m_stDevList.nDeviceNum; i++)

{

if (myThread_LeftCamera->isRunning())

{

myThread_LeftCamera->requestInterruption();

myThread_LeftCamera->wait();

m_pcMyCamera[0]->StopGrabbing();

}

if (myThread_RightCamera->isRunning())

{

myThread_RightCamera->requestInterruption();

myThread_RightCamera->wait();

m_pcMyCamera[1]->StopGrabbing();

}

m_pcMyCamera[i]->Close();

}

memset(&m_stDevList, 0, sizeof(MV_CC_DEVICE_INFO_LIST));

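// Re-enumerate and refresh the member device count (the original stored the return code in a shadowing local).

int nRet = CMvCamera::EnumDevices(MV_GIGE_DEVICE | MV_USB_DEVICE, &m_stDevList);

devices_num = (MV_OK == nRet) ? m_stDevList.nDeviceNum : 0;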

}

void PcbDetectv3::OnBnClickedCloseButton()

{

ui.bntOpenDevices->setEnabled(true);

ui.bntCloseDevices->setEnabled(false);

ui.rbnt_Continue_Mode->setEnabled(false);

ui.rbnt_SoftTigger_Mode->setEnabled(false);

ui.bntStartGrabbing->setEnabled(false);

ui.bntStopGrabbing->setEnabled(false);

ui.bntSave_BMP->setEnabled(false);

ui.bntSave_JPG->setEnabled(false);

CloseDevices();

}

void PcbDetectv3::OnBnClickedStartGrabbingButton()

{

m_bContinueStarted = 1;

ui.bntStartGrabbing->setEnabled(false);

ui.bntStopGrabbing->setEnabled(true);

ui.bntSave_BMP->setEnabled(true);

ui.bntSave_JPG->setEnabled(true);

int camera_Index = 0;

if (m_nTriggerMode == TRIGGER_ON)

{

for (unsigned int i = 0; i < m_stDevList.nDeviceNum; i++)

{

m_pcMyCamera[i]->StartGrabbing();

camera_Index = i;

if (camera_Index == 0)

{

myThread_LeftCamera->getCameraPtr(m_pcMyCamera[0]);

myThread_LeftCamera->getImagePtr(myImage_L);

myThread_LeftCamera->getCameraIndex(0);

if (!myThread_LeftCamera->isRunning())

{

myThread_LeftCamera->start();

m_pcMyCamera[0]->softTrigger();

m_pcMyCamera[0]->ReadBuffer(*myImage_L);

}

}

if (camera_Index == 1)

{

myThread_RightCamera->getCameraPtr(m_pcMyCamera[1]);

myThread_RightCamera->getImagePtr(myImage_R);

myThread_RightCamera->getCameraIndex(1);

if (!myThread_RightCamera->isRunning())

{

myThread_RightCamera->start();

m_pcMyCamera[1]->softTrigger();

m_pcMyCamera[1]->ReadBuffer(*myImage_R);

}

}

}

}

}

void PcbDetectv3::OnBnClickedStopGrabbingButton()

{

ui.bntStartGrabbing->setEnabled(true);

ui.bntStopGrabbing->setEnabled(false);

for (unsigned int i = 0; i < m_stDevList.nDeviceNum; i++)

{

if (myThread_LeftCamera->isRunning())

{

m_pcMyCamera[0]->StopGrabbing();

myThread_LeftCamera->requestInterruption();

myThread_LeftCamera->wait();

}

if (myThread_RightCamera->isRunning())

{

m_pcMyCamera[1]->StopGrabbing();

myThread_RightCamera->requestInterruption();

myThread_RightCamera->wait();

}

}

}

void PcbDetectv3::Img_display()

{

}

void PcbDetectv3::display_myImage_L(const Mat* imagePrt, int cameraIndex)

{

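// Nothing to show if no frame has been captured yet.

if (imagePrt == NULL || imagePrt->empty())

{

return;

}

cv::Mat rgb;

QImage QmyImage_L;

if (imagePrt->channels() > 1)

{

// Color frame: OpenCV stores BGR, QImage expects RGB.

cv::cvtColor(*imagePrt, rgb, CV_BGR2RGB);

QmyImage_L = QImage((const unsigned char*)(rgb.data), rgb.cols, rgb.rows, (int)rgb.step, QImage::Format_RGB888);

}

else

{

// Mono frame: CV_BGR2RGB fails on a single channel, so show the data as 8-bit grayscale (Qt 5.5 or later).

rgb = *imagePrt;

QmyImage_L = QImage((const unsigned char*)(rgb.data), rgb.cols, rgb.rows, (int)rgb.step, QImage::Format_Grayscale8);

}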

QmyImage_L = (QmyImage_L).scaled(ui.label_camera_display->size(), Qt::IgnoreAspectRatio, Qt::SmoothTransformation);

ui.label_camera_display->setPixmap(QPixmap::fromImage(QmyImage_L));

}

void PcbDetectv3::display_myImage_R(const Mat* imagePrt, int cameraIndex)

{

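// Nothing to show if no frame has been captured yet.

if (imagePrt == NULL || imagePrt->empty())

{

return;

}

cv::Mat rgb;

QImage QmyImage_R;

if (imagePrt->channels() > 1)

{

// Color frame: OpenCV stores BGR, QImage expects RGB.

cv::cvtColor(*imagePrt, rgb, CV_BGR2RGB);

QmyImage_R = QImage((const unsigned char*)(rgb.data), rgb.cols, rgb.rows, (int)rgb.step, QImage::Format_RGB888);

}

else

{

// Mono frame: CV_BGR2RGB fails on a single channel, so show the data as 8-bit grayscale (Qt 5.5 or later).

rgb = *imagePrt;

QmyImage_R = QImage((const unsigned char*)(rgb.data), rgb.cols, rgb.rows, (int)rgb.step, QImage::Format_Grayscale8);

}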

QmyImage_R = (QmyImage_R).scaled(ui.label_detect_display->size(), Qt::IgnoreAspectRatio, Qt::SmoothTransformation);

ui.label_detect_display->setPixmap(QPixmap::fromImage(QmyImage_R));

}

int PcbDetectv3::GetExposureTime(void)

{

int i = 0;

return i;

}

int PcbDetectv3::GetGain(void)

{

int i = 0;

return i;

}

int PcbDetectv3::GetFrameRate(void)

{

int i = 0;

return i;

}

int PcbDetectv3::GetTriggerMode(void)

{

int i = 0;

return 0;

}

void PcbDetectv3::SetTriggerMode(int m_nTriggerMode)

{

}

void PcbDetectv3::OnBnClickedContinusModeRadio()

{

ui.bntStartGrabbing->setEnabled(true);

m_nTriggerMode = TRIGGER_ON;

}

void PcbDetectv3::OnBnClickedTriggerModeRadio()

{

if (m_bContinueStarted == 1)

{

OnBnClickedStopGrabbingButton();

}

ui.bntStartGrabbing->setEnabled(false);

ui.bntSoftwareOnce->setEnabled(true);

m_nTriggerMode = TRIGGER_OFF;

for (unsigned int i = 0; i < m_stDevList.nDeviceNum; i++)

{

m_pcMyCamera[i]->setTriggerMode(m_nTriggerMode);

}

}

void PcbDetectv3::OnBnClickedSoftwareOnceButton()

{

ui.bntSave_BMP->setEnabled(true);

ui.bntSave_JPG->setEnabled(true);

if (m_nTriggerMode == TRIGGER_OFF)

{

int nRet = MV_OK;

for (unsigned int i = 0; i < m_stDevList.nDeviceNum; i++)

{

m_pcMyCamera[i]->StartGrabbing();

if (i == 0)

{

nRet = m_pcMyCamera[i]->CommandExecute("TriggerSoftware");

m_pcMyCamera[i]->ReadBuffer(*myImage_L);

display_myImage_L(myImage_L, i);

}

if (i == 1)

{

nRet = m_pcMyCamera[i]->CommandExecute("TriggerSoftware");

m_pcMyCamera[i]->ReadBuffer(*myImage_R);

display_myImage_R(myImage_R, i);

}

}

}

}

void PcbDetectv3::OnBnClickedSaveBmpButton()

{

m_nSaveImageType = MV_Image_Bmp;

SaveImage();

}

void PcbDetectv3::OnBnClickedSaveJpgButton()

{

m_nSaveImageType = MV_Image_Jpeg;

SaveImage();

}

void PcbDetectv3::SaveImage()

{

MV_FRAME_OUT_INFO_EX stImageInfo = { 0 };

memset(&stImageInfo, 0, sizeof(MV_FRAME_OUT_INFO_EX));

unsigned int nDataLen = 0;

int nRet = MV_OK;

for (int i = 0; i < devices_num; i++)

{

if (NULL == m_pcMyCamera[i]->m_pBufForDriver)

{

unsigned int nRecvBufSize = 0;

unsigned int nRet = m_pcMyCamera[i]->GetIntValue("PayloadSize", &nRecvBufSize);

m_pcMyCamera[i]->m_nBufSizeForDriver = nRecvBufSize;

m_pcMyCamera[i]->m_pBufForDriver = (unsigned char*)malloc(m_pcMyCamera[i]->m_nBufSizeForDriver);

}

nRet = m_pcMyCamera[i]->GetOneFrameTimeout(m_pcMyCamera[i]->m_pBufForDriver, &nDataLen, m_pcMyCamera[i]->m_nBufSizeForDriver, &stImageInfo, 1000);

if (MV_OK == nRet)

{

if (NULL == m_pcMyCamera[i]->m_pBufForSaveImage)

{

m_pcMyCamera[i]->m_nBufSizeForSaveImage = stImageInfo.nWidth * stImageInfo.nHeight * 3 + 2048;

m_pcMyCamera[i]->m_pBufForSaveImage = (unsigned char*)malloc(m_pcMyCamera[i]->m_nBufSizeForSaveImage);

}

MV_SAVE_IMAGE_PARAM_EX stParam = { 0 };

stParam.enImageType = m_nSaveImageType;

stParam.enPixelType = stImageInfo.enPixelType;

stParam.nBufferSize = m_pcMyCamera[i]->m_nBufSizeForSaveImage;

stParam.nWidth = stImageInfo.nWidth;

stParam.nHeight = stImageInfo.nHeight;

stParam.nDataLen = stImageInfo.nFrameLen;

stParam.pData = m_pcMyCamera[i]->m_pBufForDriver;

stParam.pImageBuffer = m_pcMyCamera[i]->m_pBufForSaveImage;

stParam.nJpgQuality = 90;

nRet = m_pcMyCamera[i]->SaveImage(&stParam);

char chImageName[IMAGE_NAME_LEN] = { 0 };

if (MV_Image_Bmp == stParam.enImageType)

{

if (i == 0)

{

sprintf_s(chImageName, IMAGE_NAME_LEN, "current_image.bmp", stImageInfo.nFrameNum);

}

if (i == 1)

{

sprintf_s(chImageName, IMAGE_NAME_LEN, "Image_w%d_h%d_fn%03d_R.bmp", stImageInfo.nWidth, stImageInfo.nHeight, stImageInfo.nFrameNum);

}

}

else if (MV_Image_Jpeg == stParam.enImageType)

{

if (i == 0)

{

sprintf_s(chImageName, IMAGE_NAME_LEN, "current_image.bmp", stImageInfo.nWidth, stImageInfo.nHeight, stImageInfo.nFrameNum);

}

if (i == 1)

{

sprintf_s(chImageName, IMAGE_NAME_LEN, "Image_w%d_h%d_fn%03d_R.jpg", stImageInfo.nWidth, stImageInfo.nHeight, stImageInfo.nFrameNum);

}

}

FILE* fp = fopen(chImageName, "wb");

if (NULL == fp)

{

continue; // could not open the output file; skip this camera

}

fwrite(m_pcMyCamera[i]->m_pBufForSaveImage, 1, stParam.nImageLen, fp);

fclose(fp);

}

}

}

void PcbDetectv3::StartRecognize()

{

ifstream classNamesFile("./model/classes.names");

vector<string> classNamesVec;

if (classNamesFile.is_open())

{

string className = "";

while (std::getline(classNamesFile, className))

classNamesVec.push_back(className);

}

String cfg = "./model/yolo-obj.cfg";

String weight = "./model/yolo-obj_4000.weights";

dnn::Net net = readNetFromDarknet(cfg, weight);

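Mat frame = imread("./current_image.bmp");

if (frame.empty())

{

// No saved image to run detection on; blobFromImage would fail on an empty Mat.

ui.label_show_results->setText("current_image.bmp not found; save an image first.");

return;

}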

Mat inputBlob = blobFromImage(frame, 1.0 / 255, Size(608, 608), Scalar());

net.setInput(inputBlob);

std::vector<String> outNames = net.getUnconnectedOutLayersNames();

std::vector<Mat> outs;

net.forward(outs, outNames);

float* data;

Mat scores;

vector<Rect> boxes;

vector<int> classIds;

vector<float> confidences;

int centerX, centerY, width, height, left, top;

float confidenceThreshold = 0.2;

double confidence;

Point classIdPoint;

for (size_t i = 0; i < outs.size(); ++i)

{

data = (float*)outs[i].data;

for (int j = 0; j < outs[i].rows; ++j, data += outs[i].cols)

{

scores = outs[i].row(j).colRange(5, outs[i].cols);

minMaxLoc(scores, 0, &confidence, 0, &classIdPoint);

if (confidence > confidenceThreshold)

{

centerX = (int)(data[0] * frame.cols);

centerY = (int)(data[1] * frame.rows);

width = (int)(data[2] * frame.cols);

height = (int)(data[3] * frame.rows);

left = centerX - width / 2;

top = centerY - height / 2;

classIds.push_back(classIdPoint.x);

confidences.push_back((float)confidence);

boxes.push_back(Rect(left, top, width, height));

}

}

}

vector<int> indices;

NMSBoxes(boxes, confidences, 0.3, 0.2, indices);

Scalar rectColor, textColor;

Rect box, textBox;

int idx;

String className;

Size labelSize;

QString show_text;

show_text = QString("Current image size: ");

show_text.append(QString::number(frame.size().width));

show_text.append(" x ");

show_text.append(QString::number(frame.size().height));

show_text.append("\n");

cout << "Current image size: " << frame.size() << endl;

for (size_t i = 0; i < indices.size(); ++i)

{

idx = indices[i];

className = classNamesVec[classIds[idx]];

labelSize = getTextSize(className, FONT_HERSHEY_SIMPLEX, 0.5, 1, 0);

box = boxes[idx];

textBox = Rect(Point(box.x - 1, box.y),

Point(box.x + labelSize.width, box.y - labelSize.height));

rectColor = Scalar(idx * 11 % 256, idx * 22 % 256, idx * 33 % 256);

textColor = Scalar(255 - idx * 11 % 256, 255 - idx * 22 % 256, 255 - idx * 33 % 256);

rectangle(frame, box, rectColor, 20, 8, 0);

rectangle(frame, textBox, rectColor, -1, 8, 0);

putText(frame, className.c_str(), Point(box.x, box.y - 2), FONT_HERSHEY_SIMPLEX, 0.5, textColor, 1, 8);

cout << className << ":" << "width:" << box.width << ",height:" << box.height << ",center:" << (box.tl() + box.br()) / 2 << endl;

show_text.append(QString::fromLocal8Bit(className.c_str()));

show_text.append(": width:");

show_text.append(QString::number(box.width));

show_text.append(", height:");

show_text.append(QString::number(box.height));

show_text.append(", center:");

int center_x = (box.tl().x + box.br().x) / 2;

show_text.append(QString::number(center_x));

show_text.append(",");

int center_y = (box.tl().y + box.br().y) / 2;

show_text.append(QString::number(center_y));

show_text.append("\n");

}

ui.label_show_results->setText(show_text);

Mat show_detect_img;

cvtColor(frame, show_detect_img, COLOR_BGR2RGB);

QImage disImage = QImage((const unsigned char*)(show_detect_img.data), show_detect_img.cols, show_detect_img.rows, QImage::Format_RGB888);

ui.label_detect_display->setPixmap(QPixmap::fromImage(disImage.scaled(ui.label_detect_display->size(), Qt::KeepAspectRatio)));

}
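The detection loop in `StartRecognize` turns each YOLO output row (center and size, normalized to the image) into a pixel rectangle, and later reports the rectangle's center. Below is a minimal sketch of just that arithmetic in plain C++, so it compiles without OpenCV. `PixelBox` and `decodeBox` are hypothetical names standing in for `cv::Rect` and the inline code above.

```cpp
#include <cassert>

// Plain-C++ stand-in for cv::Rect so the sketch compiles without OpenCV.
struct PixelBox {
    int left, top, width, height;
    // Midpoint of the top-left and bottom-right corners, as in the listing.
    int centerX() const { return (left + (left + width)) / 2; }
    int centerY() const { return (top + (top + height)) / 2; }
};

// Convert one detection (center and size, each normalized to [0, 1])
// into a pixel rectangle for an image of imageWidth x imageHeight.
inline PixelBox decodeBox(float cx, float cy, float w, float h,
                          int imageWidth, int imageHeight) {
    assert(imageWidth > 0 && imageHeight > 0);
    const int centerX = static_cast<int>(cx * imageWidth);
    const int centerY = static_cast<int>(cy * imageHeight);
    const int width   = static_cast<int>(w * imageWidth);
    const int height  = static_cast<int>(h * imageHeight);
    return PixelBox{centerX - width / 2, centerY - height / 2, width, height};
}
```

For example, a detection centered in a 1000 x 500 image that covers a quarter of the width and half of the height decodes to a 250 x 250 box whose top-left corner is at (375, 125).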

MvCamera.cpp

#include "MvCamera.h"

#include <opencv.hpp>

#include"opencv2/opencv.hpp"

#include"opencv2/imgproc/types_c.h"

CMvCamera::CMvCamera()

{

m_hDevHandle = MV_NULL;

}

CMvCamera::~CMvCamera()

{

if (m_hDevHandle)

{

MV_CC_DestroyHandle(m_hDevHandle);

m_hDevHandle = MV_NULL;

}

}

int CMvCamera::GetSDKVersion()

{

return MV_CC_GetSDKVersion();

}

int CMvCamera::EnumDevices(unsigned int nTLayerType, MV_CC_DEVICE_INFO_LIST* pstDevList)

{

return MV_CC_EnumDevices(nTLayerType, pstDevList);

}

bool CMvCamera::IsDeviceAccessible(MV_CC_DEVICE_INFO* pstDevInfo, unsigned int nAccessMode)

{

return MV_CC_IsDeviceAccessible(pstDevInfo, nAccessMode);

}

int CMvCamera::Open(MV_CC_DEVICE_INFO* pstDeviceInfo)

{

if (MV_NULL == pstDeviceInfo)

{

return MV_E_PARAMETER;

}

if (m_hDevHandle)

{

return MV_E_CALLORDER;

}

int nRet = MV_CC_CreateHandle(&m_hDevHandle, pstDeviceInfo);

if (MV_OK != nRet)

{

return nRet;

}

nRet = MV_CC_OpenDevice(m_hDevHandle);

if (MV_OK != nRet)

{

MV_CC_DestroyHandle(m_hDevHandle);

m_hDevHandle = MV_NULL;

}

return nRet;

}

int CMvCamera::Close()

{

if (MV_NULL == m_hDevHandle)

{

return MV_E_HANDLE;

}

MV_CC_CloseDevice(m_hDevHandle);

int nRet = MV_CC_DestroyHandle(m_hDevHandle);

m_hDevHandle = MV_NULL;

return nRet;

}

bool CMvCamera::IsDeviceConnected()

{

return MV_CC_IsDeviceConnected(m_hDevHandle);

}

int CMvCamera::RegisterImageCallBack(void(__stdcall* cbOutput)(unsigned char* pData, MV_FRAME_OUT_INFO_EX* pFrameInfo, void* pUser), void* pUser)

{

return MV_CC_RegisterImageCallBackEx(m_hDevHandle, cbOutput, pUser);

}

int CMvCamera::StartGrabbing()

{

return MV_CC_StartGrabbing(m_hDevHandle);

}

int CMvCamera::StopGrabbing()

{

return MV_CC_StopGrabbing(m_hDevHandle);

}

int CMvCamera::GetImageBuffer(MV_FRAME_OUT* pFrame, int nMsec)

{

return MV_CC_GetImageBuffer(m_hDevHandle, pFrame, nMsec);

}

int CMvCamera::FreeImageBuffer(MV_FRAME_OUT* pFrame)

{

return MV_CC_FreeImageBuffer(m_hDevHandle, pFrame);

}

int CMvCamera::GetOneFrameTimeout(unsigned char* pData, unsigned int* pnDataLen, unsigned int nDataSize, MV_FRAME_OUT_INFO_EX* pFrameInfo, int nMsec)

{

if (NULL == pnDataLen)

{

return MV_E_PARAMETER;

}

int nRet = MV_OK;

*pnDataLen = 0;

nRet = MV_CC_GetOneFrameTimeout(m_hDevHandle, pData, nDataSize, pFrameInfo, nMsec);

if (MV_OK != nRet)

{

return nRet;

}

*pnDataLen = pFrameInfo->nFrameLen;

return nRet;

}

int CMvCamera::DisplayOneFrame(MV_DISPLAY_FRAME_INFO* pDisplayInfo)

{

return MV_CC_DisplayOneFrame(m_hDevHandle, pDisplayInfo);

}

int CMvCamera::SetImageNodeNum(unsigned int nNum)

{

return MV_CC_SetImageNodeNum(m_hDevHandle, nNum);

}

int CMvCamera::GetDeviceInfo(MV_CC_DEVICE_INFO* pstDevInfo)

{

return MV_CC_GetDeviceInfo(m_hDevHandle, pstDevInfo);

}

int CMvCamera::GetGevAllMatchInfo(MV_MATCH_INFO_NET_DETECT* pMatchInfoNetDetect)

{

if (MV_NULL == pMatchInfoNetDetect)

{

return MV_E_PARAMETER;

}

MV_CC_DEVICE_INFO stDevInfo = { 0 };

GetDeviceInfo(&stDevInfo);

if (stDevInfo.nTLayerType != MV_GIGE_DEVICE)

{

return MV_E_SUPPORT;

}

MV_ALL_MATCH_INFO struMatchInfo = { 0 };

struMatchInfo.nType = MV_MATCH_TYPE_NET_DETECT;

struMatchInfo.pInfo = pMatchInfoNetDetect;

struMatchInfo.nInfoSize = sizeof(MV_MATCH_INFO_NET_DETECT);

memset(struMatchInfo.pInfo, 0, sizeof(MV_MATCH_INFO_NET_DETECT));

return MV_CC_GetAllMatchInfo(m_hDevHandle, &struMatchInfo);

}

int CMvCamera::GetU3VAllMatchInfo(MV_MATCH_INFO_USB_DETECT* pMatchInfoUSBDetect)

{

if (MV_NULL == pMatchInfoUSBDetect)

{

return MV_E_PARAMETER;

}

MV_CC_DEVICE_INFO stDevInfo = { 0 };

GetDeviceInfo(&stDevInfo);

if (stDevInfo.nTLayerType != MV_USB_DEVICE)

{

return MV_E_SUPPORT;

}

MV_ALL_MATCH_INFO struMatchInfo = { 0 };

struMatchInfo.nType = MV_MATCH_TYPE_USB_DETECT;

struMatchInfo.pInfo = pMatchInfoUSBDetect;

struMatchInfo.nInfoSize = sizeof(MV_MATCH_INFO_USB_DETECT);

memset(struMatchInfo.pInfo, 0, sizeof(MV_MATCH_INFO_USB_DETECT));

return MV_CC_GetAllMatchInfo(m_hDevHandle, &struMatchInfo);

}

int CMvCamera::GetIntValue(IN const char* strKey, OUT unsigned int* pnValue)

{

if (NULL == strKey || NULL == pnValue)

{

return MV_E_PARAMETER;

}

MVCC_INTVALUE stParam;

memset(&stParam, 0, sizeof(MVCC_INTVALUE));

int nRet = MV_CC_GetIntValue(m_hDevHandle, strKey, &stParam);

if (MV_OK != nRet)

{

return nRet;

}

*pnValue = stParam.nCurValue;

return MV_OK;

}

int CMvCamera::SetIntValue(IN const char* strKey, IN int64_t nValue)

{

return MV_CC_SetIntValueEx(m_hDevHandle, strKey, nValue);

}

int CMvCamera::GetEnumValue(IN const char* strKey, OUT MVCC_ENUMVALUE* pEnumValue)

{

return MV_CC_GetEnumValue(m_hDevHandle, strKey, pEnumValue);

}

int CMvCamera::SetEnumValue(IN const char* strKey, IN unsigned int nValue)

{

return MV_CC_SetEnumValue(m_hDevHandle, strKey, nValue);

}

int CMvCamera::SetEnumValueByString(IN const char* strKey, IN const char* sValue)

{

return MV_CC_SetEnumValueByString(m_hDevHandle, strKey, sValue);

}

int CMvCamera::GetFloatValue(IN const char* strKey, OUT MVCC_FLOATVALUE* pFloatValue)

{

return MV_CC_GetFloatValue(m_hDevHandle, strKey, pFloatValue);

}

int CMvCamera::SetFloatValue(IN const char* strKey, IN float fValue)

{

return MV_CC_SetFloatValue(m_hDevHandle, strKey, fValue);

}

int CMvCamera::GetBoolValue(IN const char* strKey, OUT bool* pbValue)

{

return MV_CC_GetBoolValue(m_hDevHandle, strKey, pbValue);

}

int CMvCamera::SetBoolValue(IN const char* strKey, IN bool bValue)

{

return MV_CC_SetBoolValue(m_hDevHandle, strKey, bValue);

}

int CMvCamera::GetStringValue(IN const char* strKey, MVCC_STRINGVALUE* pStringValue)

{

return MV_CC_GetStringValue(m_hDevHandle, strKey, pStringValue);

}

int CMvCamera::SetStringValue(IN const char* strKey, IN const char* strValue)

{

return MV_CC_SetStringValue(m_hDevHandle, strKey, strValue);

}

int CMvCamera::CommandExecute(IN const char* strKey)

{

return MV_CC_SetCommandValue(m_hDevHandle, strKey);

}

int CMvCamera::GetOptimalPacketSize(unsigned int* pOptimalPacketSize)

{

if (MV_NULL == pOptimalPacketSize)

{

return MV_E_PARAMETER;

}

int nRet = MV_CC_GetOptimalPacketSize(m_hDevHandle);

if (nRet < MV_OK)

{

return nRet;

}

*pOptimalPacketSize = (unsigned int)nRet;

return MV_OK;

}

int CMvCamera::RegisterExceptionCallBack(void(__stdcall* cbException)(unsigned int nMsgType, void* pUser), void* pUser)

{

return MV_CC_RegisterExceptionCallBack(m_hDevHandle, cbException, pUser);

}

int CMvCamera::RegisterEventCallBack(const char* pEventName, void(__stdcall* cbEvent)(MV_EVENT_OUT_INFO* pEventInfo, void* pUser), void* pUser)

{

return MV_CC_RegisterEventCallBackEx(m_hDevHandle, pEventName, cbEvent, pUser);

}

int CMvCamera::ForceIp(unsigned int nIP, unsigned int nSubNetMask, unsigned int nDefaultGateWay)

{

return MV_GIGE_ForceIpEx(m_hDevHandle, nIP, nSubNetMask, nDefaultGateWay);

}

int CMvCamera::SetIpConfig(unsigned int nType)

{

return MV_GIGE_SetIpConfig(m_hDevHandle, nType);

}

int CMvCamera::SetNetTransMode(unsigned int nType)

{

return MV_GIGE_SetNetTransMode(m_hDevHandle, nType);

}

int CMvCamera::ConvertPixelType(MV_CC_PIXEL_CONVERT_PARAM* pstCvtParam)

{

return MV_CC_ConvertPixelType(m_hDevHandle, pstCvtParam);

}

int CMvCamera::SaveImage(MV_SAVE_IMAGE_PARAM_EX* pstParam)

{

return MV_CC_SaveImageEx2(m_hDevHandle, pstParam);

}

int CMvCamera::SaveImageToFile(MV_SAVE_IMG_TO_FILE_PARAM* pstSaveFileParam)

{

return MV_CC_SaveImageToFile(m_hDevHandle, pstSaveFileParam);

}

int CMvCamera::setTriggerMode(unsigned int TriggerModeNum)

{

int tempValue = MV_CC_SetEnumValue(m_hDevHandle, "TriggerMode", TriggerModeNum);

if (tempValue != 0){

return -1;

}

else {

return 0;

}

}

int CMvCamera::setTriggerSource(unsigned int TriggerSourceNum)

{

int tempValue = MV_CC_SetEnumValue(m_hDevHandle, "TriggerSource", TriggerSourceNum);

if (tempValue != 0) {

return -1;

}

else {

return 0;

}

}

int CMvCamera::softTrigger()

{

int tempValue = MV_CC_SetCommandValue(m_hDevHandle, "TriggerSoftware");

if (tempValue != 0)

{

return -1;

}

else

{

return 0;

}

}

int CMvCamera::ReadBuffer(cv::Mat& image)

{

cv::Mat* getImage = new cv::Mat();

unsigned int nRecvBufSize = 0;

MVCC_INTVALUE stParam;

memset(&stParam, 0, sizeof(MVCC_INTVALUE));

int tempValue = MV_CC_GetIntValue(m_hDevHandle, "PayloadSize", &stParam);

if (tempValue != 0)

{

delete getImage; // free the Mat header allocated above before bailing out

return -1;

}

nRecvBufSize = stParam.nCurValue;

unsigned char* pDate;

pDate = (unsigned char*)malloc(nRecvBufSize);

MV_FRAME_OUT_INFO_EX stImageInfo = { 0 };

tempValue = MV_CC_GetOneFrameTimeout(m_hDevHandle, pDate, nRecvBufSize, &stImageInfo, 500);

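if (tempValue != 0)

{

free(pDate); // release the frame buffer; otherwise every timeout leaks one payload-sized block

delete getImage;

return -1;

}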

m_nBufSizeForSaveImage = stImageInfo.nWidth * stImageInfo.nHeight * 3 + 2048;

unsigned char* m_pBufForSaveImage;

m_pBufForSaveImage = (unsigned char*)malloc(m_nBufSizeForSaveImage);

bool isMono;

switch (stImageInfo.enPixelType)

{

case PixelType_Gvsp_Mono8:

case PixelType_Gvsp_Mono10:

case PixelType_Gvsp_Mono10_Packed:

case PixelType_Gvsp_Mono12:

case PixelType_Gvsp_Mono12_Packed:

isMono = true;

break;

default:

isMono = false;

break;

}

if (isMono)

{

*getImage = cv::Mat(stImageInfo.nHeight, stImageInfo.nWidth, CV_8UC1, pDate);

}

else

{

MV_CC_PIXEL_CONVERT_PARAM stConvertParam = { 0 };

memset(&stConvertParam, 0, sizeof(MV_CC_PIXEL_CONVERT_PARAM));

stConvertParam.nWidth = stImageInfo.nWidth;

stConvertParam.nHeight = stImageInfo.nHeight;

stConvertParam.pSrcData = pDate;

stConvertParam.nSrcDataLen = stImageInfo.nFrameLen;

stConvertParam.enSrcPixelType = stImageInfo.enPixelType;

stConvertParam.enDstPixelType = PixelType_Gvsp_BGR8_Packed;

stConvertParam.pDstBuffer = m_pBufForSaveImage;

stConvertParam.nDstBufferSize = m_nBufSizeForSaveImage;

MV_CC_ConvertPixelType(m_hDevHandle, &stConvertParam);

*getImage = cv::Mat(stImageInfo.nHeight, stImageInfo.nWidth, CV_8UC3, m_pBufForSaveImage);

}

(*getImage).copyTo(image);

(*getImage).release();

delete getImage; // the Mat header was allocated with new above; free it to avoid a per-frame leak

free(pDate);

free(m_pBufForSaveImage);

return 0;

}
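`ReadBuffer` manages its two buffers with `malloc` and `free`, so every exit path has to release them by hand. Below is a minimal sketch of an alternative, assuming the same size formula as the listing (`width * height * 3 + 2048`). The helper names `bgrBufferSize` and `makeBgrBuffer` are hypothetical and not part of the project.

```cpp
#include <cstddef>
#include <vector>

// Size used in ReadBuffer for the converted BGR image:
// three bytes per pixel plus 2048 bytes of headroom.
inline std::size_t bgrBufferSize(unsigned int width, unsigned int height) {
    return static_cast<std::size_t>(width) * height * 3 + 2048;
}

// Hypothetical helper: the vector owns the memory, so an early `return`
// cannot leak it the way a forgotten free() can.
inline std::vector<unsigned char> makeBgrBuffer(unsigned int width,
                                                unsigned int height) {
    return std::vector<unsigned char>(bgrBufferSize(width, height));
}
```

With such a buffer, `data()` and `size()` would take the place of the raw pointer and size passed to the pixel-conversion parameters, and no explicit `free` would be needed.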

mythread.cpp

#include "mythread.h"

MyThread::MyThread()

{

}

MyThread::~MyThread()

{

terminate();

if (cameraPtr != NULL)

{

delete cameraPtr;

}

if (imagePtr != NULL)

{

delete imagePtr;

}

}

void MyThread::getCameraPtr(CMvCamera* camera)

{

cameraPtr = camera;

}

void MyThread::getImagePtr(Mat* image)

{

imagePtr = image;

}

void MyThread::getCameraIndex(int index)

{

cameraIndex = index;

}

void MyThread::run()

{

if (cameraPtr == NULL){

return;

}

if (imagePtr == NULL){

return;

}

while (!isInterruptionRequested())

{

std::cout << "Thread_Trigger:" << cameraPtr->softTrigger() << std::endl;

std::cout << "Thread_Readbuffer:" << cameraPtr->ReadBuffer(*imagePtr) << std::endl;

emit Display(imagePtr, cameraIndex);

msleep(30);

}

}
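The `run()` loop above keeps grabbing frames until `requestInterruption()` is called from the GUI thread. The same cooperative-stop pattern can be sketched in standard C++ without Qt. Everything here is an assumption made for illustration: `runGrabLoop` is a hypothetical function, the atomic flag plays the role of `isInterruptionRequested()`, and the callback stands in for `softTrigger()` followed by `ReadBuffer()`.

```cpp
#include <atomic>
#include <functional>

// Hypothetical stand-in for the QThread loop. Runs grabOnce repeatedly
// until `stop` becomes true and returns how many iterations ran.
inline int runGrabLoop(const std::atomic<bool>& stop,
                       const std::function<void()>& grabOnce) {
    int iterations = 0;
    while (!stop.load()) {
        grabOnce();    // one trigger-and-read cycle
        ++iterations;
    }
    return iterations;
}
```

In a real program the loop would run on its own thread, and the controlling thread would set the flag and then join, mirroring the `requestInterruption()` followed by `wait()` calls in `OnBnClickedStopGrabbingButton`. The flag is only checked between iterations, so a stop request takes effect after the current grab finishes.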

Result

(I went home for the holidays without the camera, so only the interface is shown here.)
