框架中有一个非常重要且好用的包:torchvision,顾名思义这个包主要是关于计算机视觉cv的。这个包主要由3个子包组成,分别是:torchvision.datasets、torchvision.models、torchvision.transforms。

具体介绍可以参考官网:https://pytorch.org/docs/master/torchvision

具体代码可以参考github:https://github.com/pytorch/vision

承接上一篇,今天来看看inception V3的pytorch实现。

关于inception系列的论文笔记可以查看https://blog.csdn.net/sinat_33487968/article/details/83588372

首先因为有很多卷积的操作是重复的,所以定义了一个BasicConv2d的类,

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)

self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

def forward(self, x):

x = self.conv(x)

x = self.bn(x)

return F.relu(x, inplace=True)

这个类实现了最基本的卷积加上BN的操作,因为in_channels和out_channels是我们可以自己定义的,而且**kwargs的意思是能接收多个赋值,这也意味着我们我可以定义卷积的stride大小,padding的大小等等。我们将会在下面的inception模块中不断复用这个类。

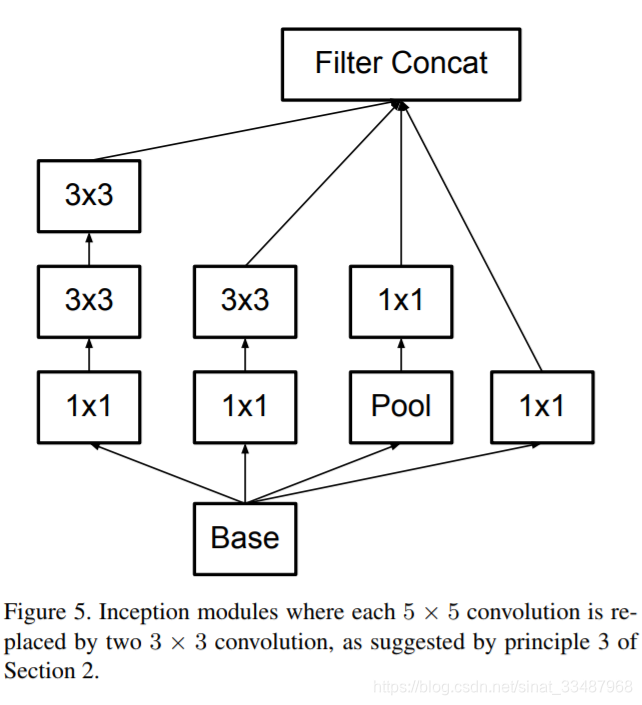

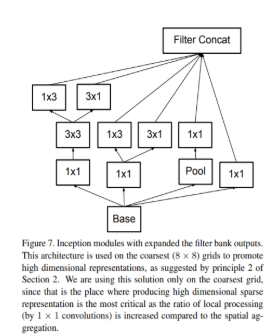

然后inception系列的网络架构最最重点对的当然是module的构建,这里实现了inceptionA~E五种不同结构的inception module,但是我发现并没有在原论文里面完全一样,可能是实现的时候修改了吧。不管怎么样,module的样子大概就是下图这样:

来看看这个inceptionA。这里的结构大致是一个module里面有四个分支,__init__里面就是结构的定义。第一个分支是branch1,只有一个1*1的卷积;第二个分支是两个5*5的卷积;第三个分支是三个3*3的卷积;而第四个分支没有卷积,是一个简单的pooling。你可能会有疑问为什么不同的卷积核的输出大小是一样大,因为这里特别的针对每个分支有不同的padding(零填充),然后每个分支stride的步数都为1,最后就回输出大小相同的卷积结果。

值得我们注意的是最后outputs = [branch1x1, branch5x5, branch3x3dbl, branch_pool]这个操作就是将不同的分支都concaternation相结合在一起。

class InceptionA(nn.Module):

def __init__(self, in_channels, pool_features):

super(InceptionA, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch5x5_1 = BasicConv2d(in_channels, 48, kernel_size=1)

self.branch5x5_2 = BasicConv2d(48, 64, kernel_size=5, padding=2)

self.branch3x3dbl_1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)

self.branch3x3dbl_3 = BasicConv2d(96, 96, kernel_size=3, padding=1)

self.branch_pool = BasicConv2d(in_channels, pool_features, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = self.branch3x3dbl_3(branch3x3dbl)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)

同理其他的module也是大同小异,这里就不多说了。我们来看一下特别的network in network in network结构,这里的意思是有一个特殊的module它里面有两重分支。

在这里这个分支叫InceptionE。下面完整的代码可以看到在第二个分支self.branch3x3_1后面有两个层self.branch3x3_2a和self.branch3x3_2b,他们就是在第一层传递之后第二层分叉了,最后又在重点结合在一起。怎么做到的呢?

branch3x3 = [

self.branch3x3_2a(branch3x3),

self.branch3x3_2b(branch3x3),

]

这里就是将两个结果合并在一起,最后再做一次最后的合并:

outputs = [branch1x1, branch3x3, branch3x3dbl, branch_pool]

branch3x3 = torch.cat(branch3x3, 1)

class InceptionE(nn.Module):

def __init__(self, in_channels):

super(InceptionE, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 320, kernel_size=1)

self.branch3x3_1 = BasicConv2d(in_channels, 384, kernel_size=1)

self.branch3x3_2a = BasicConv2d(384, 384, kernel_size=(1, 3), padding=(0, 1))

self.branch3x3_2b = BasicConv2d(384, 384, kernel_size=(3, 1), padding=(1, 0))

self.branch3x3dbl_1 = BasicConv2d(in_channels, 448, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(448, 384, kernel_size=3, padding=1)

self.branch3x3dbl_3a = BasicConv2d(384, 384, kernel_size=(1, 3), padding=(0, 1))

self.branch3x3dbl_3b = BasicConv2d(384, 384, kernel_size=(3, 1), padding=(1, 0))

self.branch_pool = BasicConv2d(in_channels, 192, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch3x3 = self.branch3x3_1(x)

branch3x3 = [

self.branch3x3_2a(branch3x3),

self.branch3x3_2b(branch3x3),

]

branch3x3 = torch.cat(branch3x3, 1)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = [

self.branch3x3dbl_3a(branch3x3dbl),

self.branch3x3dbl_3b(branch3x3dbl),

]

branch3x3dbl = torch.cat(branch3x3dbl, 1)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch3x3, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)

此外还有一个比较特殊的结构是辅助分类结构,这就是在完整网络中间某层输出结果以一定的比例添加到最终结果分类的意思。他跟网络最后的分类是类似的,只是他是在中间分支出来的辅助结果。结构是卷积到一层线性分类,没有之前VGG版本Alexnet版本的全连接,参数大大减少。

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.conv0 = BasicConv2d(in_channels, 128, kernel_size=1)

self.conv1 = BasicConv2d(128, 768, kernel_size=5)

self.conv1.stddev = 0.01

self.fc = nn.Linear(768, num_classes)

self.fc.stddev = 0.001

def forward(self, x):

# 17 x 17 x 768

x = F.avg_pool2d(x, kernel_size=5, stride=3)

# 5 x 5 x 768

x = self.conv0(x)

# 5 x 5 x 128

x = self.conv1(x)

# 1 x 1 x 768

x = x.view(x.size(0), -1)

# 768

x = self.fc(x)

# 1000

return x

最后来看看Inception V3的完整结构吧。__init__函数里定义网络的结构,有哪些基本模块,并且对权重初始化。foward函数定义了输入数据的流动方向,基本上就是前面的只有卷积层,后面开始使用不同的inception module,最后一层linear线性输出结果。而如果使用aux_logits就会添加辅助分类结构,最后返回的结果也会包括辅助分类的结果。

class Inception3(nn.Module):

def __init__(self, num_classes=1000, aux_logits=True, transform_input=False):

super(Inception3, self).__init__()

self.aux_logits = aux_logits

self.transform_input = transform_input

self.Conv2d_1a_3x3 = BasicConv2d(3, 32, kernel_size=3, stride=2)

self.Conv2d_2a_3x3 = BasicConv2d(32, 32, kernel_size=3)

self.Conv2d_2b_3x3 = BasicConv2d(32, 64, kernel_size=3, padding=1)

self.Conv2d_3b_1x1 = BasicConv2d(64, 80, kernel_size=1)

self.Conv2d_4a_3x3 = BasicConv2d(80, 192, kernel_size=3)

self.Mixed_5b = InceptionA(192, pool_features=32)

self.Mixed_5c = InceptionA(256, pool_features=64)

self.Mixed_5d = InceptionA(288, pool_features=64)

self.Mixed_6a = InceptionB(288)

self.Mixed_6b = InceptionC(768, channels_7x7=128)

self.Mixed_6c = InceptionC(768, channels_7x7=160)

self.Mixed_6d = InceptionC(768, channels_7x7=160)

self.Mixed_6e = InceptionC(768, channels_7x7=192)

if aux_logits:

self.AuxLogits = InceptionAux(768, num_classes)

self.Mixed_7a = InceptionD(768)

self.Mixed_7b = InceptionE(1280)

self.Mixed_7c = InceptionE(2048)

self.fc = nn.Linear(2048, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d) or isinstance(m, nn.Linear):

import scipy.stats as stats

stddev = m.stddev if hasattr(m, 'stddev') else 0.1

X = stats.truncnorm(-2, 2, scale=stddev)

values = torch.Tensor(X.rvs(m.weight.numel()))

values = values.view(m.weight.size())

m.weight.data.copy_(values)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

if self.transform_input:

x_ch0 = torch.unsqueeze(x[:, 0], 1) * (0.229 / 0.5) + (0.485 - 0.5) / 0.5

x_ch1 = torch.unsqueeze(x[:, 1], 1) * (0.224 / 0.5) + (0.456 - 0.5) / 0.5

x_ch2 = torch.unsqueeze(x[:, 2], 1) * (0.225 / 0.5) + (0.406 - 0.5) / 0.5

x = torch.cat((x_ch0, x_ch1, x_ch2), 1)

# 299 x 299 x 3

x = self.Conv2d_1a_3x3(x)

# 149 x 149 x 32

x = self.Conv2d_2a_3x3(x)

# 147 x 147 x 32

x = self.Conv2d_2b_3x3(x)

# 147 x 147 x 64

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 73 x 73 x 64

x = self.Conv2d_3b_1x1(x)

# 73 x 73 x 80

x = self.Conv2d_4a_3x3(x)

# 71 x 71 x 192

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 35 x 35 x 192

x = self.Mixed_5b(x)

# 35 x 35 x 256

x = self.Mixed_5c(x)

# 35 x 35 x 288

x = self.Mixed_5d(x)

# 35 x 35 x 288

x = self.Mixed_6a(x)

# 17 x 17 x 768

x = self.Mixed_6b(x)

# 17 x 17 x 768

x = self.Mixed_6c(x)

# 17 x 17 x 768

x = self.Mixed_6d(x)

# 17 x 17 x 768

x = self.Mixed_6e(x)

# 17 x 17 x 768

if self.training and self.aux_logits:

aux = self.AuxLogits(x)

# 17 x 17 x 768

x = self.Mixed_7a(x)

# 8 x 8 x 1280

x = self.Mixed_7b(x)

# 8 x 8 x 2048

x = self.Mixed_7c(x)

# 8 x 8 x 2048

x = F.avg_pool2d(x, kernel_size=8)

# 1 x 1 x 2048

x = F.dropout(x, training=self.training)

# 1 x 1 x 2048

x = x.view(x.size(0), -1)

# 2048

x = self.fc(x)

# 1000 (num_classes)

if self.training and self.aux_logits:

return x, aux

return x

最后贴上完整的代码:

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.utils.model_zoo as model_zoo

__all__ = ['Inception3', 'inception_v3']

model_urls = {

# Inception v3 ported from TensorFlow

'inception_v3_google': 'https://download.pytorch.org/models/inception_v3_google-1a9a5a14.pth',

}

def inception_v3(pretrained=False, **kwargs):

r"""Inception v3 model architecture from

`"Rethinking the Inception Architecture for Computer Vision" <http://arxiv.org/abs/1512.00567>`_.

Args:

pretrained (bool): If True, returns a model pre-trained on ImageNet

"""

if pretrained:

if 'transform_input' not in kwargs:

kwargs['transform_input'] = True

model = Inception3(**kwargs)

model.load_state_dict(model_zoo.load_url(model_urls['inception_v3_google']))

return model

return Inception3(**kwargs)

class Inception3(nn.Module):

def __init__(self, num_classes=1000, aux_logits=True, transform_input=False):

super(Inception3, self).__init__()

self.aux_logits = aux_logits

self.transform_input = transform_input

self.Conv2d_1a_3x3 = BasicConv2d(3, 32, kernel_size=3, stride=2)

self.Conv2d_2a_3x3 = BasicConv2d(32, 32, kernel_size=3)

self.Conv2d_2b_3x3 = BasicConv2d(32, 64, kernel_size=3, padding=1)

self.Conv2d_3b_1x1 = BasicConv2d(64, 80, kernel_size=1)

self.Conv2d_4a_3x3 = BasicConv2d(80, 192, kernel_size=3)

self.Mixed_5b = InceptionA(192, pool_features=32)

self.Mixed_5c = InceptionA(256, pool_features=64)

self.Mixed_5d = InceptionA(288, pool_features=64)

self.Mixed_6a = InceptionB(288)

self.Mixed_6b = InceptionC(768, channels_7x7=128)

self.Mixed_6c = InceptionC(768, channels_7x7=160)

self.Mixed_6d = InceptionC(768, channels_7x7=160)

self.Mixed_6e = InceptionC(768, channels_7x7=192)

if aux_logits:

self.AuxLogits = InceptionAux(768, num_classes)

self.Mixed_7a = InceptionD(768)

self.Mixed_7b = InceptionE(1280)

self.Mixed_7c = InceptionE(2048)

self.fc = nn.Linear(2048, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d) or isinstance(m, nn.Linear):

import scipy.stats as stats

stddev = m.stddev if hasattr(m, 'stddev') else 0.1

X = stats.truncnorm(-2, 2, scale=stddev)

values = torch.Tensor(X.rvs(m.weight.numel()))

values = values.view(m.weight.size())

m.weight.data.copy_(values)

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

if self.transform_input:

x_ch0 = torch.unsqueeze(x[:, 0], 1) * (0.229 / 0.5) + (0.485 - 0.5) / 0.5

x_ch1 = torch.unsqueeze(x[:, 1], 1) * (0.224 / 0.5) + (0.456 - 0.5) / 0.5

x_ch2 = torch.unsqueeze(x[:, 2], 1) * (0.225 / 0.5) + (0.406 - 0.5) / 0.5

x = torch.cat((x_ch0, x_ch1, x_ch2), 1)

# 299 x 299 x 3

x = self.Conv2d_1a_3x3(x)

# 149 x 149 x 32

x = self.Conv2d_2a_3x3(x)

# 147 x 147 x 32

x = self.Conv2d_2b_3x3(x)

# 147 x 147 x 64

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 73 x 73 x 64

x = self.Conv2d_3b_1x1(x)

# 73 x 73 x 80

x = self.Conv2d_4a_3x3(x)

# 71 x 71 x 192

x = F.max_pool2d(x, kernel_size=3, stride=2)

# 35 x 35 x 192

x = self.Mixed_5b(x)

# 35 x 35 x 256

x = self.Mixed_5c(x)

# 35 x 35 x 288

x = self.Mixed_5d(x)

# 35 x 35 x 288

x = self.Mixed_6a(x)

# 17 x 17 x 768

x = self.Mixed_6b(x)

# 17 x 17 x 768

x = self.Mixed_6c(x)

# 17 x 17 x 768

x = self.Mixed_6d(x)

# 17 x 17 x 768

x = self.Mixed_6e(x)

# 17 x 17 x 768

if self.training and self.aux_logits:

aux = self.AuxLogits(x)

# 17 x 17 x 768

x = self.Mixed_7a(x)

# 8 x 8 x 1280

x = self.Mixed_7b(x)

# 8 x 8 x 2048

x = self.Mixed_7c(x)

# 8 x 8 x 2048

x = F.avg_pool2d(x, kernel_size=8)

# 1 x 1 x 2048

x = F.dropout(x, training=self.training)

# 1 x 1 x 2048

x = x.view(x.size(0), -1)

# 2048

x = self.fc(x)

# 1000 (num_classes)

if self.training and self.aux_logits:

return x, aux

return x

class InceptionA(nn.Module):

def __init__(self, in_channels, pool_features):

super(InceptionA, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch5x5_1 = BasicConv2d(in_channels, 48, kernel_size=1)

self.branch5x5_2 = BasicConv2d(48, 64, kernel_size=5, padding=2)

self.branch3x3dbl_1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)

self.branch3x3dbl_3 = BasicConv2d(96, 96, kernel_size=3, padding=1)

self.branch_pool = BasicConv2d(in_channels, pool_features, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch5x5 = self.branch5x5_1(x)

branch5x5 = self.branch5x5_2(branch5x5)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = self.branch3x3dbl_3(branch3x3dbl)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch5x5, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)

class InceptionB(nn.Module):

def __init__(self, in_channels):

super(InceptionB, self).__init__()

self.branch3x3 = BasicConv2d(in_channels, 384, kernel_size=3, stride=2)

self.branch3x3dbl_1 = BasicConv2d(in_channels, 64, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)

self.branch3x3dbl_3 = BasicConv2d(96, 96, kernel_size=3, stride=2)

def forward(self, x):

branch3x3 = self.branch3x3(x)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = self.branch3x3dbl_3(branch3x3dbl)

branch_pool = F.max_pool2d(x, kernel_size=3, stride=2)

outputs = [branch3x3, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)

class InceptionC(nn.Module):

def __init__(self, in_channels, channels_7x7):

super(InceptionC, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 192, kernel_size=1)

c7 = channels_7x7

self.branch7x7_1 = BasicConv2d(in_channels, c7, kernel_size=1)

self.branch7x7_2 = BasicConv2d(c7, c7, kernel_size=(1, 7), padding=(0, 3))

self.branch7x7_3 = BasicConv2d(c7, 192, kernel_size=(7, 1), padding=(3, 0))

self.branch7x7dbl_1 = BasicConv2d(in_channels, c7, kernel_size=1)

self.branch7x7dbl_2 = BasicConv2d(c7, c7, kernel_size=(7, 1), padding=(3, 0))

self.branch7x7dbl_3 = BasicConv2d(c7, c7, kernel_size=(1, 7), padding=(0, 3))

self.branch7x7dbl_4 = BasicConv2d(c7, c7, kernel_size=(7, 1), padding=(3, 0))

self.branch7x7dbl_5 = BasicConv2d(c7, 192, kernel_size=(1, 7), padding=(0, 3))

self.branch_pool = BasicConv2d(in_channels, 192, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch7x7 = self.branch7x7_1(x)

branch7x7 = self.branch7x7_2(branch7x7)

branch7x7 = self.branch7x7_3(branch7x7)

branch7x7dbl = self.branch7x7dbl_1(x)

branch7x7dbl = self.branch7x7dbl_2(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_3(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_4(branch7x7dbl)

branch7x7dbl = self.branch7x7dbl_5(branch7x7dbl)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch7x7, branch7x7dbl, branch_pool]

return torch.cat(outputs, 1)

class InceptionD(nn.Module):

def __init__(self, in_channels):

super(InceptionD, self).__init__()

self.branch3x3_1 = BasicConv2d(in_channels, 192, kernel_size=1)

self.branch3x3_2 = BasicConv2d(192, 320, kernel_size=3, stride=2)

self.branch7x7x3_1 = BasicConv2d(in_channels, 192, kernel_size=1)

self.branch7x7x3_2 = BasicConv2d(192, 192, kernel_size=(1, 7), padding=(0, 3))

self.branch7x7x3_3 = BasicConv2d(192, 192, kernel_size=(7, 1), padding=(3, 0))

self.branch7x7x3_4 = BasicConv2d(192, 192, kernel_size=3, stride=2)

def forward(self, x):

branch3x3 = self.branch3x3_1(x)

branch3x3 = self.branch3x3_2(branch3x3)

branch7x7x3 = self.branch7x7x3_1(x)

branch7x7x3 = self.branch7x7x3_2(branch7x7x3)

branch7x7x3 = self.branch7x7x3_3(branch7x7x3)

branch7x7x3 = self.branch7x7x3_4(branch7x7x3)

branch_pool = F.max_pool2d(x, kernel_size=3, stride=2)

outputs = [branch3x3, branch7x7x3, branch_pool]

return torch.cat(outputs, 1)

class InceptionE(nn.Module):

def __init__(self, in_channels):

super(InceptionE, self).__init__()

self.branch1x1 = BasicConv2d(in_channels, 320, kernel_size=1)

self.branch3x3_1 = BasicConv2d(in_channels, 384, kernel_size=1)

self.branch3x3_2a = BasicConv2d(384, 384, kernel_size=(1, 3), padding=(0, 1))

self.branch3x3_2b = BasicConv2d(384, 384, kernel_size=(3, 1), padding=(1, 0))

self.branch3x3dbl_1 = BasicConv2d(in_channels, 448, kernel_size=1)

self.branch3x3dbl_2 = BasicConv2d(448, 384, kernel_size=3, padding=1)

self.branch3x3dbl_3a = BasicConv2d(384, 384, kernel_size=(1, 3), padding=(0, 1))

self.branch3x3dbl_3b = BasicConv2d(384, 384, kernel_size=(3, 1), padding=(1, 0))

self.branch_pool = BasicConv2d(in_channels, 192, kernel_size=1)

def forward(self, x):

branch1x1 = self.branch1x1(x)

branch3x3 = self.branch3x3_1(x)

branch3x3 = [

self.branch3x3_2a(branch3x3),

self.branch3x3_2b(branch3x3),

]

branch3x3 = torch.cat(branch3x3, 1)

branch3x3dbl = self.branch3x3dbl_1(x)

branch3x3dbl = self.branch3x3dbl_2(branch3x3dbl)

branch3x3dbl = [

self.branch3x3dbl_3a(branch3x3dbl),

self.branch3x3dbl_3b(branch3x3dbl),

]

branch3x3dbl = torch.cat(branch3x3dbl, 1)

branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)

branch_pool = self.branch_pool(branch_pool)

outputs = [branch1x1, branch3x3, branch3x3dbl, branch_pool]

return torch.cat(outputs, 1)

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.conv0 = BasicConv2d(in_channels, 128, kernel_size=1)

self.conv1 = BasicConv2d(128, 768, kernel_size=5)

self.conv1.stddev = 0.01

self.fc = nn.Linear(768, num_classes)

self.fc.stddev = 0.001

def forward(self, x):

# 17 x 17 x 768

x = F.avg_pool2d(x, kernel_size=5, stride=3)

# 5 x 5 x 768

x = self.conv0(x)

# 5 x 5 x 128

x = self.conv1(x)

# 1 x 1 x 768

x = x.view(x.size(0), -1)

# 768

x = self.fc(x)

# 1000

return x

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)

self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

def forward(self, x):

x = self.conv(x)

x = self.bn(x)

return F.relu(x, inplace=True)

本文内容由网友自发贡献,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系:hwhale#tublm.com(使用前将#替换为@)