环境介绍

准备四台服务器搭建clickhouse集群,(创建两个分片,每个分片一个副本)

在任意三台服务器上安装zookeeper 服务,clickhouse集群依赖zookeeper进行服务器之间的数据同步。

192.168.1.1 ch1.test.com ch1

192.168.1.2 ch2.test.com ch2

192.168.1.3 ch3.test.com ch3

192.168.1.4 ch4.test.com ch4

目录结构设计

1、配置文件的默认目录为/etc/clickhouse-server/

2、数据库数据目录设置为/data/clickhouse/

3、数据库日志的目录设置为/data/clickhouse/

#zookeeper 集群的安装

1、安装zookeeper 集群

1、安装jdk

https://www.oracle.com/java/technologies/downloads/

wget https://dlcdn.apache.org/zookeeper/zookeeper-3.6.3/apache-zookeeper-3.6.3-bin.tar.gz

2、优化jdk

3、安装zookeeper

4、使用systemctl 管理zookeeper服务

[Unit]

Description=Zookeeper service

After=network.target

[Service]

Type=simple

User=root

Group=root

ExecStart=/usr/local/zookeeper363/bin/zkServer.sh start

ExecStop=/usr/local/zookeeper363/bin/zkServer.sh stop

Restart=on-failure

[Install]

WantedBy=multi-user.target

下载最新的稳定版

https://packages.clickhouse.com/rpm/stable

wget https://packages.clickhouse.com/rpm/stable/clickhouse-client-21.8.13.6-2.noarch.rpm

wget https://packages.clickhouse.com/rpm/stable/clickhouse-common-static-21.8.13.6-2.x86_64.rpm

wget https://packages.clickhouse.com/rpm/stable/clickhouse-common-static-dbg-21.8.13.6-2.x86_64.rpm

wget https://packages.clickhouse.com/rpm/stable/clickhouse-server-21.8.13.6-2.noarch.rpm

安装clickhouse

yum localinstall *.prm

或则

rpm -ivh *.rpm

关闭防火墙并检查环境依赖

关闭防火墙

systemctl stop firewalld.service

关闭开机启动防火墙

systemctl disable firewalld.service

设置主机名

hostnamectl --static set-hostname ch1.test.com

验证主机名设置是否生效

hostname -f

ch1.test.com

最后需要配置hosts 文件,配置后的效果如下:

#cat /etc/hosts

192.168.1.1 ch1.test.com ch1

192.168.1.2 ch2.test.com ch2

192.168.1.3 ch3.test.com ch3

192.168.1.4 ch4.test.com ch4

修改配置文件 文件句柄数量

vim /etc/security/limits.d/clickhouse.conf 文件句柄数量的配置

clickhouse soft nofile 262144

clickhouse hard nofile 262144

改配置也可以通过config.xml 的max_open_files参数指定。

设置计划任务 用于恢复因异常原因中断的clickhouse 服务进程,其默认的配置如下

more /etc/cron.d/clickhouse-server

#*/10 * * * * root ((which service > /dev/null 2>&1 && (service clickhouse-server condstart ||:)) || /etc/init.d/clickhouse-server condstart) > /dev/null

2>&1

设置目录权限

chown -R clikchouse.clickhouse /data/clickhouse

修改配置文件xml格式(关键参数说明)

主配置文件config.xml

用户级别的配置文件user.xml

文件注释

说明: 用户及请求相关的设置需要配置在user.xml文件中,如果配置在config.xml,用户相关的配置如果配置到config.xml文件中,服务就无法启动。

NOTE: User and query level settings are set up in “users.xml” file.

If you have accidentally specified user-level settings here, server won’t start.

You can either move the settings to the right place inside “users.xml” file

or add <skip_check_for_incorrect_settings>1</skip_check_for_incorrect_settings> here

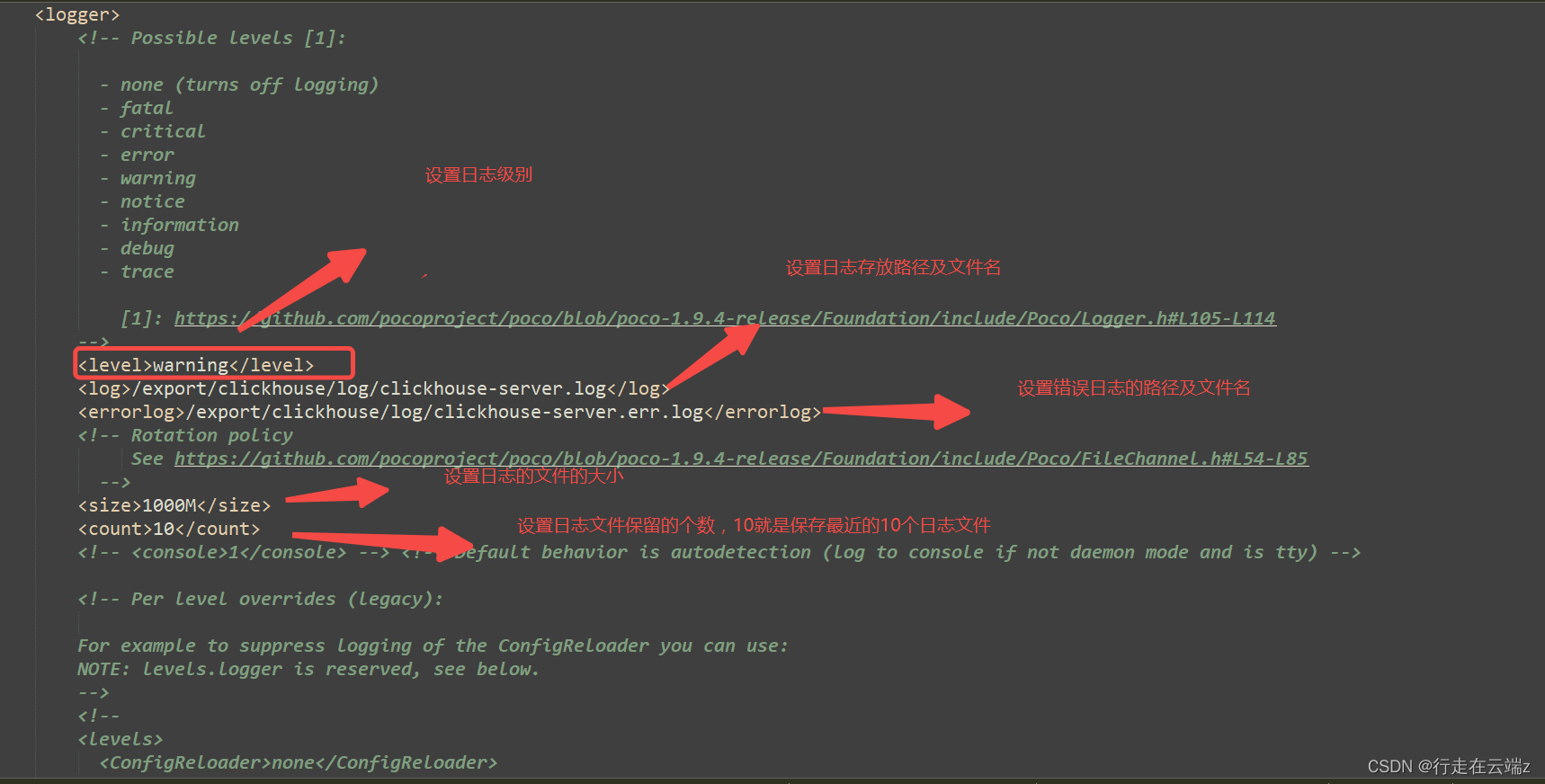

日志设置

端口说明:

-

HTTP:8123端口:使用ODBC、JDBC(DataGrip、Dbeaver) 等驱动和一些web接口如embedded UI 、grafana、redash等都需要链接http 8123端口。

-

TCP 9000 : clickhouse的原生工具需要链接9000端口。

ClickHouse -client和其他本地ClickHouse工具(ClickHouse -benchmark, ClickHouse - copy);

clickhouse-server与其他clickhouse-server进行分布式查询处理;

ClickHouse驱动程序和应用程序支持本机协议

(该协议也被非正式地称为“TCP协议”);

-

TCP 9004 : clickhouse 使用此端口把自己伪装成mysql;

-

TCP 9005 : clickhouse 使用此端口把自己伪装成postgresSQL;

-

https 8443 : 支持使用https

-

<listen_host>::</listen_host> – 监听本机上的所有ipv6 地址

<listen_host>0.0.0.0</listen_host> – 监听本机上的所有ipv4地址

监听指定的ip

<listen_host>127.0.0.1</listen_host>

<listen_host>10.205.110.37</listen_host>

并发量比较大时,需要优化此参数,等待链接的数量。

<listen_backlog>128</listen_backlog>

最大链接数量

<max_connections>4096</max_connections>

** http 1.1 保持链接时间**

<keep_alive_timeout>3</keep_alive_timeout>

最大并发链接数

<max_concurrent_queries>400</max_concurrent_queries>

内存设置

<max_server_memory_usage>0</max_server_memory_usage>

如果此次设置为0,可用内存的大小由参数"max_server_memory_usage_to_ram_ratio" * 可用物理内存的大小。

Default is “max_server_memory_usage_to_ram_ratio” of available physical RAM.

If the value is larger than “max_server_memory_usage_to_ram_ratio” of available physical RAM, it will be cut down。如果使用的内存量大于可用内存时,clickhouse-server服务进程就会杀掉。

<max_thread_pool_size>10000</max_thread_pool_size>

最大线程池的大小。

设置最大使用可用物理内存的比例:

<max_server_memory_usage_to_ram_ratio>0.7</max_server_memory_usage_to_ram_ratio>

设置数据存放目录

/export/clickhouse/

设置临时数据目录

<tmp_path>/export/clickhouse/tmp/</tmp_path>

由’file’引擎表函数可访问的用户提供文件的目录。

<user_files_path>/var/lib/clickhouse/user_files/</user_files_path>

<remote_servers>

集群的名字test_shard_localhost

<test_shard_localhost>

<shard>

<!-- Optional. Whether to write data to just one of the replicas. Default: false (write data to all replicas). -->

**可选的。是否只向一个副本写入数据。默认值:false(写入所有副本)。**

<internal_replication>true</internal_replication> -->

<!-- **数据写入改分片的权重** -->

<!-- <weight>1</weight> -->

<replica>

<host>localhost</host>

<port>9000</port>

<!-- Optional. Priority of the replica for load_balancing. Default: 1 (less value has more priority)可选的。load_balancing副本的优先级。缺省值:1(值越小优先级越高). -->

<!-- <priority>1</priority> -->

</replica>

</shard>

</test_shard_localhost>

<test_cluster_two_shards_localhost>

<shard>

<replica>

<host>localhost</host>

<port>9000</port>

</replica>

</shard>

<shard>

<replica>

<host>localhost</host>

<port>9000</port>

</replica>

</shard>

</test_cluster_two_shards_localhost>

<test_cluster_two_shards>

<shard>

<replica>

<host>127.0.0.1</host>

<port>9000</port>

</replica>

</shard>

<shard>

<replica>

<host>127.0.0.2</host>

<port>9000</port>

</replica>

</shard>

</test_cluster_two_shards>

<test_cluster_two_shards_internal_replication>

<shard>

<internal_replication>true</internal_replication>

<replica>

<host>127.0.0.1</host>

<port>9000</port>

</replica>

</shard>

<shard>

<internal_replication>true</internal_replication>

<replica>

<host>127.0.0.2</host>

<port>9000</port>

</replica>

</shard>

</test_cluster_two_shards_internal_replication>

<open_service_cluster>

<shard>

<internal_replication>true</internal_replication>

<replica>

<host>ch1.test.com</host>

<port>9000</port>

</replica>

<replica>

<host>ch2.test.com</host>

<port>9000</port>

</replica>

</shard>

<shard>

<internal_replication>true</internal_replication>

<replica>

<host>ch3.test.com</host>

<port>9000</port>

</replica>

<replica>

<host>ch4.test.com</host>

<port>9000</port>

</replica>

</shard>

</haigeek_open_service_cluster>

</remote_servers>

zookeeper设置

<zookeeper>

<node>

<host>ch1.test.com</host>

<port>2181</port>

</node>

<node>

<host>ch1.test.com</host>

<port>2181</port>

</node>

<node>

<host>ch1.test.com</host>

<port>2181</port>

</node>

</zookeeper>

给服务器设置一个集群内的喂一次标识

第一台服务器

<macros>

<shard>01</shard>

<replica>app-01-1</replica>

</macros>

第二台服务器

<macros>

<shard>01</shard>

<replica>app-01-2</replica>

</macros>

第三台服务器

<macros>

<shard>02</shard>

<replica>app-02-1</replica>

</macros>

第四台服务器

<macros>

<shard>02</shard>

<replica>replica-02-2</replica>

</macros>