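Author: Zhang Hua (张华)  Published: 2023-07-12

Copyright notice: this article may be freely reproduced, provided the reproduction credits the original source, the author, and this copyright notice with a hyperlink.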

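IPVS is an L4 load balancer (LB) that runs in the Linux kernel (KTCPVS, Kernel TCP Virtual Server, is the L7 counterpart). IPVS captures packets by registering its own handler functions at several points in the Netfilter framework, looks them up in its IPVS tables, and then forwards them according to the packet-forwarding mode defined in the matching IPVS rule.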

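IPVS has three packet-forwarding modes: NAT, IP tunneling and Direct Routing. The experiment in this article is about Direct Routing (client -> LB -> backend -> client): packets returned by the backend go straight to the client instead of passing back through the LB. In NAT mode the replies are still forwarded by the LB. In tunneling mode the LB encapsulates the original packet in IP-in-IP and leaves the transport-layer ports untouched.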
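
As a rough illustration of how the three modes map onto ipvsadm, the sketch below prints the `ipvsadm -a` command for a given mode. The function name `ipvs_add_real_server` and the choice to print rather than run the command are assumptions made for illustration. The flags `-m`, `-i` and `-g` are the ipvsadm options for masquerading (NAT), IP-in-IP tunneling and gatewaying (direct routing).

```shell
#!/bin/sh
# Hypothetical helper (not part of ipvsadm): print the command that would add
# a real server to a virtual service using the requested forwarding mode.
ipvs_add_real_server() {
    vip_port=$1; rip=$2; mode=$3
    case "$mode" in
        nat)    flag=-m ;;  # masquerading: replies return through the LB
        tunnel) flag=-i ;;  # IP-in-IP encapsulation towards the real server
        dr)     flag=-g ;;  # gatewaying (direct routing), the ipvsadm default
        *) echo "unknown mode: $mode" >&2; return 1 ;;
    esac
    echo "ipvsadm -a -t $vip_port -r $rip $flag"
}

# Example addresses only; prints the direct-routing variant.
ipvs_add_real_server 172.16.0.177:80 172.16.0.75 dr
```

Printing the command keeps the sketch safe to run on any machine. Copy the printed line into a root shell on the LB to apply it.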

Experiment

./generate-bundle.sh --name ussuri-ovn-2203 --replay --run

juju config neutron-api-plugin-ovn dns-servers=10.5.0.15

./tools/vault-unseal-and-authorise.sh

./configure

openstack router remove subnet provider-router private_subnet

openstack subnet delete private_subnet

openstack network delete private

source ./novarc

network=172.16.0.0/24

net_start=`ipcalc $network| awk '$1=="HostMin:" {print $2}'`

net_end=`ipcalc $network| awk '$1=="HostMax:" {print $2}'`

d=${net_start##*.}

net_start=${net_start%.*}.$((++d))

n_id="`openstack network create --enable nosec --disable-port-security -c id -f value`"

sn_id="`openstack subnet create --allocation-pool start=$net_start,end=$net_end \
--subnet-range $network --dhcp --ip-version 4 --network $n_id nosec_subnet -c id -f value`"

openstack router add subnet provider-router $sn_id

openstack keypair create --public-key ~/.ssh/id_rsa.pub mykey

openstack server create --wait --image jammy --flavor m1.small --key-name mykey --nic net-id=$n_id nosecvm1

openstack server create --wait --image jammy --flavor m1.small --key-name mykey --nic net-id=$n_id nosecvm2

openstack server create --wait --image jammy --flavor m1.small --key-name mykey --nic net-id=$n_id nosecvm3

./tools/float_all.sh
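
The allocation-pool arithmetic in the setup above (strip the last octet, increment it, glue it back) can be checked in isolation. The sketch below is a minimal version of that step. `next_host` is a hypothetical helper name, and the sample input assumes ipcalc reports HostMin 172.16.0.1 for 172.16.0.0/24. It does not handle a last octet of 255.

```shell
#!/bin/sh
# Sketch: bump the last octet of a dotted-quad address by one, as the setup
# does to derive the allocation-pool start from ipcalc's HostMin value.
next_host() {
    addr=$1
    last=${addr##*.}          # text after the final dot, e.g. "1"
    prefix=${addr%.*}         # text before the final dot, e.g. "172.16.0"
    echo "$prefix.$((last + 1))"
}

next_host 172.16.0.1          # prints 172.16.0.2
```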

IPVS server: nosecvm1=172.16.0.223/fa:16:3e:1e:fe:eb

real server: nosecvm2=172.16.0.75/fa:16:3e:06:8e:ab eth-vip=172.16.0.177/b2:65:dc:e1:a8:e5

client server: nosecvm3=172.16.0.24/fa:16:3e:5f:46:c0

#on nosecvm1

apt install ipvsadm -y

echo 1 > /proc/sys/net/ipv4/ip_forward

ipvsadm -C

ipvsadm -A -t 172.16.0.177:80 -s rr

ipvsadm -a -t 172.16.0.177:80 -r 172.16.0.75 -g

ipvsadm -l -n

#on nosecvm2

sudo apt install nginx net-tools -y

VIP=172.16.0.177

RIP=172.16.0.75

sudo arptables -F

sudo arptables -D INPUT -d $VIP -j DROP

sudo arptables -D OUTPUT -s $VIP -j mangle --mangle-ip-s $RIP

sudo arptables -L -n -v

sudo modprobe -v dummy numdummies=1

sudo ip addr add $VIP/32 dev dummy0

ip link add eth-vip type dummy

ip link set eth-vip up

ip a add $VIP/32 dev eth-vip

arptables -F

arptables -A INPUT -d $VIP -j DROP

arptables -A OUTPUT -s $VIP -j DROP

arptables -L -n -v

#on nosecvm3

curl 172.16.0.177

With this setup I successfully reproduced the problem.

root@nosecvm3:~# curl 172.16.0.177

curl: (7) Failed to connect to 172.16.0.177 port 80 after 3069 ms: No route to host

Why was my test result (the comment '2023-08-02 08:49 UTC') random last time? Because I had not run the arptables commands above to suppress the ARP replies from the real server.

After running the arptables commands above and then running 'arp -d 172.16.0.177' on the client server, I can reproduce the problem again, even without creating p1 (openstack port create --enable-port-security --network nosec p1).

One caveat for this experiment: the arptables commands above must be run so that the client cannot reach the real server directly. The correct path is for the client to reach the real server through IPVS.
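
One way to see which machine actually answered ARP for the VIP is to look at the MAC address the client has cached for it. The sketch below classifies that MAC using the addresses listed for this experiment. `who_answers_vip` is a hypothetical helper, and the interface name `ens3` in the sample line is an assumption. It expects `ip neigh show <VIP>` output on stdin.

```shell
#!/bin/sh
# Sketch: report who owns the MAC address the client resolved for the VIP.
LB_MAC=fa:16:3e:1e:fe:eb      # nosecvm1 (IPVS server)
RS_MAC=fa:16:3e:06:8e:ab      # nosecvm2 (real server)

who_answers_vip() {
    # Pick the word following "lladdr" from the neighbour entry.
    mac=$(awk '{ for (i = 1; i < NF; i++) if ($i == "lladdr") print $(i + 1) }')
    case "$mac" in
        "$LB_MAC") echo "IPVS server" ;;
        "$RS_MAC") echo "real server" ;;
        "")        echo "unresolved" ;;
        *)         echo "unknown ($mac)" ;;
    esac
}

# Sample input; on the client this would be:
#   ip neigh show 172.16.0.177 | who_answers_vip
echo "172.16.0.177 dev ens3 lladdr fa:16:3e:06:8e:ab REACHABLE" | who_answers_vip
```

If this prints "real server", the client is bypassing IPVS, which would explain results that vary from run to run.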

Analysis

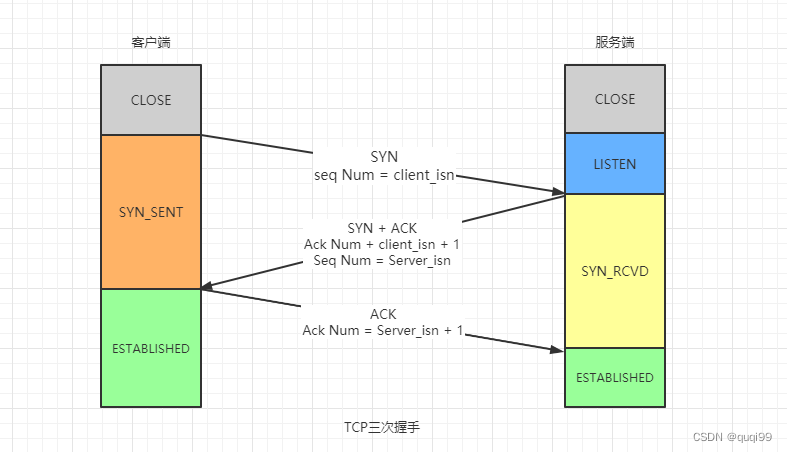

A quick review of TCP:

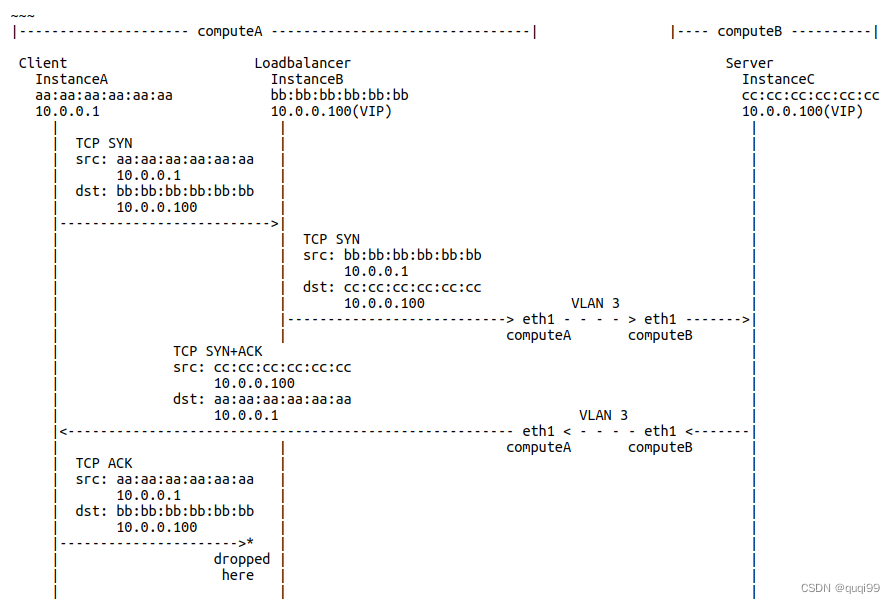

The TCP topology with IPVS DR is as follows (https://bugzilla.redhat.com/show_bug.cgi?id=2175037):

The packet drop shown above occurs only with OVN, not with OVS.

Both "ovn-trace" and "ovs-appctl ofproto/trace" confirm that the ACK packet is not dropped, so the ACK should have reached the LB.

So the problem may be related to OVS conntrack, yet when it occurs 'ovs-appctl dpctl/dump-conntrack' shows the connection as ESTABLISHED.

The FDB is not a suspect, because the ACK packets are dropped in br-int on the compute node and br-int has no NORMAL rules.

One commit looks suspicious - https://github.com/ovn-org/ovn/commit/d17ece7f3706bd1257e181d69705019c0abb7e51

Notes on conntrack - https://blog.motitan.com/2017/09/02/ovn%E5%AD%A6%E4%B9%A0-5-conntrack/

Misc - checking conntrack

With the security group disabled on the port there should be no conntrack entries any more. To confirm:

while true; do curl 192.168.21.238; sleep 3; done

conntrack -L |grep '192.168.21' |grep -vw 'sport=22'

ovs-appctl dpctl/dump-conntrack |grep '192.168.21' |grep -vw 'sport=22'

ovs-dpctl dump-conntrack |grep '192.168.21' |grep -vw 'sport=22'

ovs-dpctl dump-flows |grep '192.168.21' |grep -v 'sport=22'

Misc - viewing the flows for a port

ovn-nbctl lsp-get-ls neutron_port_id

ovn-sbctl find Port_Binding logical_port=neutron_port_id

ovn-sbctl dump-flows <datapath_id_from_previous_command>   # or: neutron-<net-id>
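
The datapath id can be passed from the second command to the third without copying it by hand. The sketch below extracts the `datapath` column from `ovn-sbctl find Port_Binding ...` output, which prints one "column : value" pair per line. `pb_datapath` is a hypothetical helper and the UUIDs in the sample input are made up.

```shell
#!/bin/sh
# Sketch: pull the datapath UUID out of Port_Binding record output on stdin.
pb_datapath() {
    # Split each line on the " : " separator and keep the datapath value.
    awk -F' *: *' '$1 == "datapath" { print $2 }'
}

# Made-up sample record, for illustration only.
pb_datapath <<'EOF'
_uuid               : 11111111-2222-3333-4444-555555555555
datapath            : aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee
logical_port        : "neutron_port_id"
EOF
```

On a real system this could be combined as `ovn-sbctl dump-flows "$(ovn-sbctl find Port_Binding logical_port=neutron_port_id | pb_datapath)"`. Asking ovn-sbctl for only that column with `--bare --columns=datapath` should work as well and avoids the parsing.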

Update 2023-08-29

VIP: 192.168.22.222

Client: 192.168.22.219

Frontend: 192.168.22.40

Backend: 192.168.22.192

In the working case there are entries only in zones 7 and 10:

tcp 6 431998 ESTABLISHED src=192.168.22.219 dst=192.168.22.192 sport=34850 dport=80 src=192.168.22.192 dst=192.168.22.219 sport=80 dport=34850 [ASSURED] mark=0 zone=7 use=1

tcp 6 431998 ESTABLISHED src=192.168.22.219 dst=192.168.22.192 sport=34850 dport=80 src=192.168.22.192 dst=192.168.22.219 sport=80 dport=34850 [ASSURED] mark=0 zone=10 use=1

In the failing case there are entries in zones 7, 10 and 8:

tcp 6 55 SYN_RECV src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 mark=0 zone=10 use=1

tcp 6 115 SYN_SENT src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 [UNREPLIED] src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 mark=0 zone=8 use=1

tcp 6 298 ESTABLISHED src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 [ASSURED] mark=0 zone=7 use=1

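In IPVS Direct Routing mode the forward and return paths are asymmetric, which easily causes trouble with conntrack. OVN runs conntrack twice (ingress and egress), and the zone is derived from the port id. The ingress packet is therefore tracked in the frontend's zone while the egress packet is tracked in the backend's zone, so the two do not match and the connection breaks.

Packets from the Client to the Backend first pass through the LB VM. Because this is IPVS Direct Routing, the SYN-ACK from the backend does not pass through the LB VM and goes straight to the Client. Connection tracking on the LB side therefore stays in the SYN_SENT state. The Client's reply to the SYN-ACK has to pass through the LB VM again, where it is classified as invalid (inv) and dropped.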

Ingress: Client -> Frontend (IPVS Load Balancer) -> Backend (Web Server)

Egress: Backend (Web Server) -> Client
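
To spot this situation quickly in a conntrack dump, it helps to reduce each entry to its zone and TCP state. The sketch below does that for `conntrack -L` style lines, using the three entries from the failing case as sample input. `ct_zone_states` is a hypothetical helper, and it assumes field 4 holds the TCP state as in the dumps shown here.

```shell
#!/bin/sh
# Sketch: summarise conntrack entries as "zone state" pairs so that a
# connection tracked in several zones with different states stands out.
ct_zone_states() {
    awk '$1 == "tcp" {
        zone = "-"
        for (i = 5; i <= NF; i++)
            if ($i ~ /^zone=/) zone = substr($i, 6)   # text after "zone="
        print zone, $4
    }' | sort -n
}

ct_zone_states <<'EOF'
tcp 6 55 SYN_RECV src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 mark=0 zone=10 use=1
tcp 6 115 SYN_SENT src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 [UNREPLIED] src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 mark=0 zone=8 use=1
tcp 6 298 ESTABLISHED src=192.168.22.219 dst=192.168.22.222 sport=57756 dport=80 src=192.168.22.222 dst=192.168.22.219 sport=80 dport=57756 [ASSURED] mark=0 zone=7 use=1
EOF
```

For the sample input this prints `7 ESTABLISHED`, `8 SYN_SENT` and `10 SYN_RECV`, one pair per line. A zone stuck in SYN_SENT while another zone shows the same connection as ESTABLISHED is the signature of the asymmetric path described above.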

OVN has since been optimised here. For example, stateless SG rules now skip conntrack:

https://github.com/ovn-org/ovn/commit/a0f82efdd9dfd3ef2d9606c1890e353df1097a51

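Therefore:

- The LB should have its SG disabled, because it forwards packets from many different client IPs to the backend. allowed_address_pairs cannot be used in OpenStack here, because the sources are arbitrary IPs from across the Internet.

- The LB should also have connection tracking disabled.

However, OVN currently disables connection tracking only in stateless mode, and Neutron does not support using a stateless SG on a port whose SG is disabled.

One workaround is to stop the Client's reply to the SYN-ACK from being dropped as inv when it passes through the LB VM again (sudo ovn-nbctl set NB_Global . options:use_ct_inv_match=false). This option is global, though. There is some discussion at https://mail.openvswitch.org/pipermail/ovs-dev/2021-March/380859.html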
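
Because the option affects the whole deployment, it is worth being able to inspect it and put it back. The sketch below wraps the relevant commands in a dry-run helper. `ct_inv_match` and the `APPLY` variable are hypothetical names. The `set`, `get` and `remove` database commands are the generic ones ovn-nbctl provides, and removing the key is assumed to restore the default behaviour.

```shell
#!/bin/sh
# Sketch: print (or, with APPLY=1, run) the commands that toggle the global
# use_ct_inv_match option in the OVN northbound database.
ct_inv_match() {
    case "$1" in
        off)     set -- ovn-nbctl set NB_Global . options:use_ct_inv_match=false ;;
        show)    set -- ovn-nbctl get NB_Global . options:use_ct_inv_match ;;
        default) set -- ovn-nbctl remove NB_Global . options use_ct_inv_match ;;
        *) echo "usage: ct_inv_match off|show|default" >&2; return 1 ;;
    esac
    # Dry run unless APPLY=1 is set in the environment.
    if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

ct_inv_match off
```

Run as shown, this only prints the command. `APPLY=1 ct_inv_match off` would execute it, and `ct_inv_match default` prints the command that removes the key again.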
