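Note: this project is based on material found online, and most of the code follows the original article. It is intended only for learning and for practicing image-processing operations.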

Original article: Bubble sheet multiple choice scanner and test grader using OMR, Python and OpenCV

Problem description:

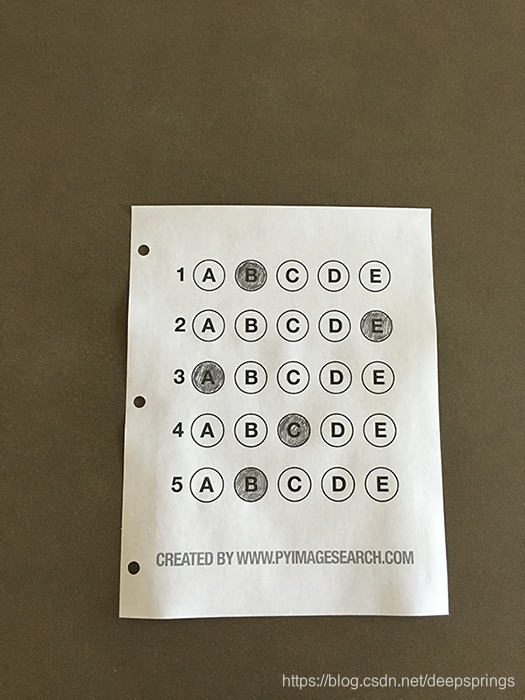

Given the answer sheet in the image, identify the filled-in bubbles and print their letters in order: BEACB.

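Approach / steps:

- 1# Detect the answer sheet in the image and straighten it with a perspective transform.

- 2# Detect and extract the circular bubbles on the sheet.

- 3# Determine which bubbles are filled in and print the corresponding letters.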
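The grouping and selection logic behind steps 2 and 3 can be sketched without OpenCV. The snippet below is a minimal illustration, assuming each detected bubble has already been reduced to a hypothetical `(x, y, filled_pixels)` tuple and that every question has exactly five choices. The function name `read_answers` and the sample values are made up for this example.

```python
def read_answers(bubbles, choices=5):
    """Return the marked letter for each row of bubbles.

    bubbles: list of (x, y, filled_pixels) tuples, one per detected bubble.
    Assumes rows are vertically well separated and each row has `choices` bubbles.
    """
    if len(bubbles) % choices != 0:
        raise ValueError("expected a multiple of %d bubbles" % choices)

    # Sort top-to-bottom so each consecutive group of `choices` is one question.
    by_row = sorted(bubbles, key=lambda b: b[1])

    letters = []
    for start in range(0, len(by_row), choices):
        # Within a question, sort left-to-right so index 0 corresponds to 'A'.
        row = sorted(by_row[start:start + choices], key=lambda b: b[0])
        # The marked bubble is taken to be the one with the most filled pixels.
        marked = max(range(choices), key=lambda j: row[j][2])
        letters.append(chr(ord("A") + marked))
    return "".join(letters)


# Made-up data: two questions, bubbles listed in arbitrary order.
sample = [
    (200, 52, 90), (50, 50, 100), (100, 51, 620), (150, 49, 95), (250, 50, 80),
    (250, 121, 640), (50, 120, 85), (150, 119, 90), (100, 122, 100), (200, 120, 75),
]
print(read_answers(sample))  # BE
```

Picking the maximum always yields an answer, even for a row where nothing is filled in, so a real grader would probably also want a minimum fill threshold.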

Code implementation:

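#include <opencv2/opencv.hpp>
#include <algorithm>
#include <cmath>
#include <iostream>
#include <vector>

using namespace cv;
using namespace std;

// Comparators passed to std::sort must be strict (<, not <=);
// a non-strict comparator is undefined behavior.
bool cmp1(const Rect& a, const Rect& b) {
    return a.y < b.y;   // top to bottom
}

bool cmp2(const Rect& a, const Rect& b) {
    return a.x < b.x;   // left to right
}

int main(int argc, char** argv) {
    if (argc < 2) {
        cout << "Usage: ProgramName PictureFile" << endl;
        return -1;
    }

    Mat imgSrc = imread(argv[1]);
    if (imgSrc.empty()) {
        cout << "Failed to load image!" << endl;
        return -1;
    }

    // Step 1: grayscale -> blur -> edges, then take the largest outer contour as the sheet.
    Mat img;
    cvtColor(imgSrc, img, COLOR_BGR2GRAY);
    Mat gray = img.clone();
    GaussianBlur(img, img, Size(5, 5), 0);
    Canny(img, img, 75, 200);

    vector<vector<Point>> contours;
    vector<Vec4i> hierarchy;
    findContours(img, contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);
    if (contours.empty()) {
        cout << "No contours found!" << endl;
        return -1;
    }

    // Mat test = Mat::zeros(img.size(), img.type());
    // drawContours(test, contours, -1, Scalar(255), 2);

    size_t k = 0;
    double area = 0;
    for (size_t i = 0; i < contours.size(); i++) {
        double a = contourArea(contours[i]);
        if (area <= a) {
            area = a;
            k = i;
        }
    }

    // Approximate the sheet outline with a polygon; it should have four corners.
    vector<Point2f> p;
    double len = arcLength(contours[k], true);
    approxPolyDP(contours[k], p, len * 0.02, true);
    if (p.size() != 4) {
        cout << "Could not find a four-corner answer sheet!" << endl;
        return -1;
    }

    // Assumes the corners are ordered top-left, bottom-left, bottom-right, top-right.
    int height = max((int)fabs(p[0].y - p[1].y), (int)fabs(p[2].y - p[3].y));
    int width = max((int)fabs(p[0].x - p[3].x), (int)fabs(p[2].x - p[1].x));

    // Point2f is (x, y) and Size is (width, height), so the sheet keeps its aspect ratio.
    vector<Point2f> pdst = {Point2f(0, 0), Point2f(0, height - 1),
                            Point2f(width - 1, height - 1), Point2f(width - 1, 0)};
    Mat m = getPerspectiveTransform(p, pdst);
    warpPerspective(gray, img, m, Size(width, height));

    // Step 2: binarize (filled bubbles become white) and collect bubble-sized boxes.
    threshold(img, img, 0, 255, THRESH_BINARY_INV | THRESH_OTSU);
    contours.clear();
    // Work on a copy, since older OpenCV versions modify the input of findContours.
    findContours(img.clone(), contours, hierarchy, RETR_EXTERNAL, CHAIN_APPROX_SIMPLE);

    vector<Rect> rec;
    for (size_t i = 0; i < contours.size(); i++) {
        Rect rect = boundingRect(contours[i]);
        float ar = rect.width / (float)rect.height;
        // A bubble is large enough and roughly square, as in the Python script.
        if (rect.width >= 20 && rect.height >= 20 && ar >= 0.9f && ar <= 1.1f) {
            rec.push_back(rect);
        }
    }

    // The code below expects 5 questions with 5 choices each.
    if (rec.size() != 25) {
        cout << "Expected 25 bubbles, found " << rec.size() << endl;
        return -1;
    }

    // Sort top to bottom, then sort each row of 5 left to right.
    sort(rec.begin(), rec.end(), cmp1);
    for (vector<Rect>::iterator it = rec.begin(); it != rec.end(); it += 5) {
        sort(it, it + 5, cmp2);
    }

    // Step 3: in each row, the bubble with the most white pixels is the marked one.
    vector<int> ans(5, 0);
    for (int i = 0; i < 5; i++) {
        int maxcount = 0;
        for (int j = 0; j < 5; j++) {
            Mat mask = Mat::zeros(img.size(), img.type());
            rectangle(mask, rec[i * 5 + j], Scalar(255), -1);
            bitwise_and(mask, img, mask);
            int count = countNonZero(mask);
            if (count >= maxcount) {
                maxcount = count;
                ans[i] = j;
            }
        }
    }

    imshow("img", img);
    waitKey(0);

    // Print the marked letters, e.g. BEACB.
    for (size_t i = 0; i < ans.size(); i++) {
        char out = 'A' + ans[i];
        cout << out;
    }
    cout << endl;

    return 0;
}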
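The C++ code above depends on `approxPolyDP` returning the four corners in a particular order. One common way to remove that dependency is to order the corners explicitly before building the destination rectangle. The sketch below is plain Python and only illustrates the idea: `order_corners` and `target_size` are hypothetical helper names, and the rule used (smallest and largest `x + y` for top-left and bottom-right, smallest and largest `y - x` for top-right and bottom-left) assumes the sheet is not rotated by much more than about 45 degrees.

```python
import math


def order_corners(pts):
    """Order four (x, y) points as top-left, top-right, bottom-right, bottom-left."""
    if len(pts) != 4:
        raise ValueError("expected exactly four corner points")
    top_left = min(pts, key=lambda p: p[0] + p[1])      # smallest x + y
    bottom_right = max(pts, key=lambda p: p[0] + p[1])  # largest x + y
    top_right = min(pts, key=lambda p: p[1] - p[0])     # smallest y - x
    bottom_left = max(pts, key=lambda p: p[1] - p[0])   # largest y - x
    return [top_left, top_right, bottom_right, bottom_left]


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def target_size(corners):
    """Width and height of the straightened sheet, from ordered corners."""
    tl, tr, br, bl = corners
    width = max(distance(tl, tr), distance(bl, br))
    height = max(distance(tl, bl), distance(tr, br))
    return int(width), int(height)


# Made-up corners of a slightly tilted sheet, given in arbitrary order.
corners = order_corners([(310, 40), (20, 400), (330, 420), (10, 30)])
print(corners)               # [(10, 30), (310, 40), (330, 420), (20, 400)]
print(target_size(corners))  # (310, 380)
```

With the corners in this order, the matching destination points for `getPerspectiveTransform` would be `(0, 0)`, `(width - 1, 0)`, `(width - 1, height - 1)`, `(0, height - 1)`, and the output size passed to `warpPerspective` would be `Size(width, height)`.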

# import the necessary packages
from imutils.perspective import four_point_transform
from imutils import contours
import numpy as np
import argparse
import imutils
import cv2

# construct the argument parser and parse the arguments
ap = argparse.ArgumentParser()
ap.add_argument("-i", "--image", required=True,
    help="path to the input image")
args = vars(ap.parse_args())

# define the answer key which maps the question number
# to the correct answer
ANSWER_KEY = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}

# load the image, convert it to grayscale, blur it
# slightly, then find edges
image = cv2.imread(args["image"])
if image is None:
    raise SystemExit("Failed to load image: {}".format(args["image"]))
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
blurred = cv2.GaussianBlur(gray, (5, 5), 0)
edged = cv2.Canny(blurred, 75, 200)

# find contours in the edge map, then initialize
# the contour that corresponds to the document
cnts = cv2.findContours(edged.copy(), cv2.RETR_EXTERNAL,
    cv2.CHAIN_APPROX_SIMPLE)
cnts = imutils.grab_contours(cnts)
docCnt = None

# ensure that at least one contour was found
if len(cnts) > 0:
    # sort the contours according to their size in
    # descending order
    cnts = sorted(cnts, key=cv2.contourArea, reverse=True)

    # loop over the sorted contours
    for c in cnts:
        # approximate the contour
        peri = cv2.arcLength(c, True)
        approx = cv2.approxPolyDP(c, 0.02 * peri, True)

        # if our approximated contour has four points,
        # then we can assume we have found the paper
        if len(approx) == 4:
            docCnt = approx
            break

# stop early if no four-point contour was found
if docCnt is None:
    raise SystemExit("Could not find the answer sheet in the image")

# apply a four point perspective transform to both the
# original image and grayscale image to obtain a top-down
# birds eye view of the paper
paper = four_point_transform(image, docCnt.reshape(4, 2))
warped = four_point_transform(gray, docCnt.reshape(4, 2))

# apply Otsu's thresholding method to binarize the warped
# piece of paper
thresh = cv2.threshold(warped, 0, 255,
    cv2.THRESH_BINARY_INV | cv2.THRESH_OTSU)[1]

# find contours in the thresholded image, then initialize
# the list of contours that correspond to questions
cnts = cv2.findContours(thresh.copy(), cv2.RETR_EXTERNAL,
    cv2.CHAIN_APPROX_SIMPLE)
cnts = imutils.grab_contours(cnts)
questionCnts = []

# loop over the contours
for c in cnts:
    # compute the bounding box of the contour, then use the
    # bounding box to derive the aspect ratio
    (x, y, w, h) = cv2.boundingRect(c)
    ar = w / float(h)

    # in order to label the contour as a question, region
    # should be sufficiently wide, sufficiently tall, and
    # have an aspect ratio approximately equal to 1
    if w >= 20 and h >= 20 and ar >= 0.9 and ar <= 1.1:
        questionCnts.append(c)

# sort the question contours top-to-bottom, then initialize
# the total number of correct answers
questionCnts = contours.sort_contours(questionCnts,
    method="top-to-bottom")[0]
correct = 0

# each question has 5 possible answers, so loop over the
# questions in batches of 5
for (q, i) in enumerate(np.arange(0, len(questionCnts), 5)):
    # sort the contours for the current question from
    # left to right, then initialize the index of the
    # bubbled answer
    cnts = contours.sort_contours(questionCnts[i:i + 5])[0]
    bubbled = None

    # loop over the sorted contours
    for (j, c) in enumerate(cnts):
        # construct a mask that reveals only the current
        # "bubble" for the question
        mask = np.zeros(thresh.shape, dtype="uint8")
        cv2.drawContours(mask, [c], -1, 255, -1)

        # apply the mask to the thresholded image, then
        # count the number of non-zero pixels in the
        # bubble area
        mask = cv2.bitwise_and(thresh, thresh, mask=mask)
        total = cv2.countNonZero(mask)

        # if the current total has a larger number of total
        # non-zero pixels, then we are examining the currently
        # bubbled-in answer
        if bubbled is None or total > bubbled[0]:
            bubbled = (total, j)

    # initialize the contour color and the index of the
    # *correct* answer
    color = (0, 0, 255)
    k = ANSWER_KEY[q]

    # check to see if the bubbled answer is correct
    if k == bubbled[1]:
        color = (0, 255, 0)
        correct += 1

    # draw the outline of the correct answer on the test
    cv2.drawContours(paper, [cnts[k]], -1, color, 3)

# compute the test taker's score as a percentage
score = (correct / 5.0) * 100
print("[INFO] score: {:.2f}%".format(score))
cv2.putText(paper, "{:.2f}%".format(score), (10, 30),
    cv2.FONT_HERSHEY_SIMPLEX, 0.9, (0, 0, 255), 2)
cv2.imshow("Original", image)
cv2.imshow("Exam", paper)
cv2.waitKey(0)
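The scoring step at the end of the script can be separated from the image processing. The sketch below assumes the detected answers are already available as a string of letters, such as the BEACB from the problem description. `grade` is a hypothetical helper, while the answer key is the one used in the script above.

```python
# Question index -> index of the correct choice (0 = A, 1 = B, ...).
ANSWER_KEY = {0: 1, 1: 4, 2: 0, 3: 3, 4: 1}


def grade(detected, answer_key):
    """Return (number_correct, percentage) for a string of detected letters."""
    if len(detected) != len(answer_key):
        raise ValueError("number of detected answers does not match the key")
    correct = sum(
        1 for q, letter in enumerate(detected)
        if ord(letter) - ord("A") == answer_key[q]
    )
    return correct, 100.0 * correct / len(answer_key)


correct, score = grade("BEACB", ANSWER_KEY)
print("{} of {} correct, score: {:.2f}%".format(correct, len(ANSWER_KEY), score))
# 4 of 5 correct, score: 80.00%
```

Here the fourth question is the only mismatch: the key expects D (index 3), while the sheet has C marked.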