Java-layer code for audio streaming:

package com.example.push.channel;

import static android.media.AudioFormat.CHANNEL_IN_STEREO;

import android.media.AudioFormat;

import android.media.AudioRecord;

import android.media.MediaRecorder;

import com.example.push.LivePusher;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

public class AudioChannel {

private int inputSamples;

private ExecutorService executor;

private AudioRecord audioRecord;

private LivePusher mLivePusher;

private int channels=2;

// volatile so the recording thread sees the change made by stopLive()

private volatile boolean isLiving;

public AudioChannel(LivePusher livePusher){

mLivePusher=livePusher;

// Single-thread executor that runs the capture loop

executor = Executors.newSingleThreadExecutor();

// Prepare the recorder: it captures PCM data and hands it to the native layer

int channelConfig;

if (channels==2){

channelConfig= AudioFormat.CHANNEL_IN_STEREO;

}else {

channelConfig= AudioFormat.CHANNEL_IN_MONO;

}

mLivePusher.native_setAudioEncInfo( 44100, channels) ;

// 16-bit samples take two bytes each, so the byte count is samples * 2

inputSamples= mLivePusher.getInputSamples()*2;

// Minimum buffer size the recorder needs (doubled here for headroom)

int minBufferSize= AudioRecord.getMinBufferSize( 44100,channelConfig,AudioFormat.ENCODING_PCM_16BIT )*2;

// Arguments: 1. microphone source 2. sample rate 3. channel config 4. sample format 5. buffer size in bytes

audioRecord=new AudioRecord( MediaRecorder.AudioSource.MIC,44100,channelConfig,AudioFormat.ENCODING_PCM_16BIT ,minBufferSize>inputSamples?minBufferSize:inputSamples);

}

public void startLive() {

isLiving=true;

executor.submit( new AudioTeask() );

}

public void stopLive() {

isLiving=false;

}

public void release(){

audioRecord.release();

}

class AudioTeask implements Runnable{

@Override

public void run() {

// Start the recorder

audioRecord.startRecording();

byte[] bytes=new byte[inputSamples];

while (isLiving){

int len=audioRecord.read( bytes,0,bytes.length);

if (len>0){

// Hand the PCM data to the native encoder

mLivePusher.native_pushAudio( bytes );

}

}

// Stop the recorder

audioRecord.stop();

}

}

}
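
The buffer arithmetic in the constructor can be sketched in a few lines of C++. The helper names are hypothetical, and the figure of 1024 samples per channel per AAC-LC frame is an assumption about what `getInputSamples()` reports (FAAC normally returns 1024 × channels), so treat the numbers as illustrative rather than as values taken from this project.

```cpp
#include <cstddef>

// Hypothetical helper: bytes of 16-bit PCM needed to feed the encoder once.
// inputSamples counts samples across all channels, as the encoder reports it.
constexpr std::size_t pcmBytesPerEncode(std::size_t inputSamples,
                                        std::size_t bytesPerSample = 2) {
    return inputSamples * bytesPerSample;
}

// Hypothetical helper: mirrors the Java expression
// minBufferSize > inputSamples ? minBufferSize : inputSamples.
constexpr std::size_t recordBufferSize(std::size_t minBufferSize,
                                       std::size_t encodeBytes) {
    return minBufferSize > encodeBytes ? minBufferSize : encodeBytes;
}

// Duration of one encoder frame in milliseconds.
constexpr double frameDurationMs(std::size_t samplesPerChannel, int sampleRate) {
    return 1000.0 * static_cast<double>(samplesPerChannel) / sampleRate;
}
```

Under those assumptions a stereo stream needs 2048 samples, or 4096 bytes, per encode call, and each call covers roughly 23 ms of audio at 44100 Hz. Reading exactly that many bytes per loop iteration is what lets the Java side pass the array straight to the encoder.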

The JNI functions follow the same pattern as the video streaming ones:

extern "C"

JNIEXPORT void JNICALL

Java_com_example_push_LivePusher_native_1setAudioEncInfo(JNIEnv *env, jobject instance, jint sampleRateInHz,

jint channels) {

// Forward the sample rate and channel count to the native AudioChannel

if (audioChannel){

audioChannel->setAudioEncInfo(sampleRateInHz,channels);

}

}

extern "C"

JNIEXPORT jint JNICALL

Java_com_example_push_LivePusher_getInputSamples(JNIEnv *env, jobject instance) {

if (audioChannel){

return audioChannel->getInputSamples();

}

return -1;

}

extern "C"

JNIEXPORT void JNICALL

Java_com_example_push_LivePusher_native_1pushAudio(JNIEnv *env, jobject instance, jbyteArray data_) {

if (!audioChannel || !readyPushing) {

return;

}

jbyte *data = env->GetByteArrayElements(data_, NULL);

audioChannel->encodeData(data);

// The buffer is only read, so JNI_ABORT releases it without copying back

env->ReleaseByteArrayElements(data_, data, JNI_ABORT);

}
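
The `_1` in names such as `native_1setAudioEncInfo` comes from the JNI naming rules: an underscore in a Java method name is escaped so it cannot be confused with a package separator. The sketch below is a simplified illustration with hypothetical helper names. It handles only ASCII letters, digits, dots, and underscores, and it ignores overloaded methods, which need a signature suffix.

```cpp
#include <string>

// Escape one component of a JNI symbol name (simplified: ASCII only).
// '.' or '/' becomes '_', and a literal '_' becomes "_1".
std::string jniEscape(const std::string &name) {
    std::string out;
    for (char c : name) {
        if (c == '.' || c == '/') {
            out += '_';
        } else if (c == '_') {
            out += "_1";
        } else {
            out += c;  // letters and digits pass through unchanged
        }
    }
    return out;
}

// Build the exported symbol name for a non-overloaded native method.
std::string jniSymbol(const std::string &className, const std::string &method) {
    return "Java_" + jniEscape(className) + "_" + jniEscape(method);
}
```

Calling `jniSymbol("com.example.push.LivePusher", "native_setAudioEncInfo")` reproduces the function name used above, while `getInputSamples` has no underscore and therefore needs no escaping.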

Initializing FAAC

void AudioChannel::setAudioEncInfo(int samplesInHZ, int channels) {

// Open the encoder

mChannels=channels;

// Argument 3: the maximum number of samples the encoder accepts per call

// Argument 4: the maximum number of bytes one encoded frame can occupy

audioCodec=faacEncOpen(samplesInHZ,channels,&inputSamples,&maxOutputBytes);

// Configure the encoder

faacEncConfigurationPtr config= faacEncGetCurrentConfiguration(audioCodec);

// Use the MPEG-4 standard

config->mpegVersion=MPEG4;

// AAC-LC (Low Complexity) profile

config->aacObjectType=LOW;

// 16-bit input samples

config->inputFormat=FAAC_INPUT_16BIT;

// Output the raw AAC stream: neither ADTS nor ADIF

config->outputFormat=0;

faacEncSetConfiguration(audioCodec,config);

// Output buffer that holds the encoded data

buffer=new u_char[maxOutputBytes];

}

Sending the audio header for FAAC-encoded audio

RTMPPacket *AudioChannel::getAudioTag() {

u_char *buf;

u_long len;

faacEncGetDecoderSpecificInfo(audioCodec, &buf, &len);

int bodySize = 2 + len;

RTMPPacket *packet = new RTMPPacket;

RTMPPacket_Alloc(packet, bodySize);

// Stereo: 0xAF = AAC, 44 kHz, 16-bit, stereo (0xAE is the mono variant)

packet->m_body[0] = 0xAF;

if (mChannels == 1) {

packet->m_body[0] = 0xAE;

}

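// AACPacketType 0x00: AAC sequence header

packet->m_body[1] = 0x00;

// AudioSpecificConfig bytes returned by FAAC

memcpy(&packet->m_body[2], buf, len);

// FAAC allocates buf with malloc, so release it once it has been copied

free(buf);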

packet->m_hasAbsTimestamp = 0;

packet->m_nBodySize = bodySize;

packet->m_packetType = RTMP_PACKET_TYPE_AUDIO;

packet->m_nChannel = 0x11;

packet->m_headerType = RTMP_PACKET_SIZE_LARGE;

return packet;

}
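
The bytes that `faacEncGetDecoderSpecificInfo` hands back are an MPEG-4 AudioSpecificConfig. As a sketch of what that header contains, the code below packs the two-byte form by hand. The function names are hypothetical, and it assumes the basic layout without extensions (5 bits object type, 4 bits sampling frequency index, 4 bits channel configuration, 3 zero bits). FAAC's actual output should be used in the real packet.

```cpp
#include <array>
#include <cstdint>
#include <stdexcept>

// Map a sample rate to the MPEG-4 samplingFrequencyIndex; -1 if not in the table.
int samplingFrequencyIndex(int sampleRate) {
    static const int kRates[] = {96000, 88200, 64000, 48000, 44100, 32000,
                                 24000, 22050, 16000, 12000, 11025, 8000, 7350};
    for (int i = 0; i < 13; ++i) {
        if (kRates[i] == sampleRate) return i;
    }
    return -1;
}

// Pack the two-byte AudioSpecificConfig:
// 5 bits audioObjectType | 4 bits samplingFrequencyIndex |
// 4 bits channelConfiguration | 3 zero bits.
std::array<uint8_t, 2> makeAudioSpecificConfig(int objectType, int sampleRate,
                                               int channels) {
    const int freqIndex = samplingFrequencyIndex(sampleRate);
    if (freqIndex < 0) {
        throw std::invalid_argument("sample rate not in the MPEG-4 table");
    }
    const unsigned bits = (static_cast<unsigned>(objectType) << 11) |
                          (static_cast<unsigned>(freqIndex) << 7) |
                          (static_cast<unsigned>(channels) << 3);
    return {static_cast<uint8_t>(bits >> 8), static_cast<uint8_t>(bits & 0xFF)};
}
```

For AAC-LC (object type 2) at 44100 Hz stereo this yields `0x12 0x10`, so the sequence header body would be the four bytes `AF 00 12 10`. Comparing that against what the encoder returns is a quick sanity check when a player refuses to decode the stream.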

Sending audio data

void AudioChannel::encodeData(int8_t *data) {

// Encode the audio; returns the number of encoded bytes

int bytelen=faacEncEncode(audioCodec, reinterpret_cast<int32_t *>(data), inputSamples, buffer, maxOutputBytes);

if (bytelen > 0) {

// Body layout follows the FLV audio tag table: 2 header bytes plus the AAC data

int bodySize = 2 + bytelen;

RTMPPacket *packet = new RTMPPacket;

RTMPPacket_Alloc(packet, bodySize);

// Stereo: 0xAF = AAC, 44 kHz, 16-bit, stereo (0xAE is the mono variant)

packet->m_body[0] = 0xAF;

if (mChannels == 1) {

packet->m_body[0] = 0xAE;

}

// Every encoded audio frame uses AACPacketType 0x01

packet->m_body[1] = 0x01;

// Encoded AAC data

memcpy(&packet->m_body[2], buffer, bytelen);

packet->m_hasAbsTimestamp = 0;

packet->m_nBodySize = bodySize;

packet->m_packetType = RTMP_PACKET_TYPE_AUDIO;

packet->m_nChannel = 0x11;

packet->m_headerType = RTMP_PACKET_SIZE_LARGE;

audioCallback(packet);

}

}
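
The magic numbers `0xAF` and `0xAE` are bit fields from the FLV audio tag format. The sketch below rebuilds them and lays out the body the same way both functions above do. The helper names are hypothetical and the code is independent of librtmp; it only illustrates the byte layout.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// First byte of an FLV audio tag body:
// SoundFormat (4 bits) | SoundRate (2 bits) | SoundSize (1 bit) | SoundType (1 bit).
uint8_t flvAudioHeaderByte(int channels) {
    const unsigned soundFormat = 10;  // AAC
    const unsigned soundRate = 3;     // 44 kHz (always 3 for AAC)
    const unsigned soundSize = 1;     // 16-bit samples
    const unsigned soundType = (channels == 1) ? 0 : 1;  // mono or stereo
    return static_cast<uint8_t>((soundFormat << 4) | (soundRate << 2) |
                                (soundSize << 1) | soundType);
}

// Build the body: header byte, AACPacketType, then the payload.
// packetType 0x00 = sequence header (AudioSpecificConfig), 0x01 = raw AAC frame.
std::vector<uint8_t> makeAacTagBody(int channels, uint8_t packetType,
                                    const uint8_t *payload, std::size_t len) {
    std::vector<uint8_t> body;
    body.reserve(2 + len);
    body.push_back(flvAudioHeaderByte(channels));
    body.push_back(packetType);
    body.insert(body.end(), payload, payload + len);
    return body;
}
```

The only difference between the header packet and a data packet is the second byte and the payload, which is why `getAudioTag` and `encodeData` look nearly identical.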

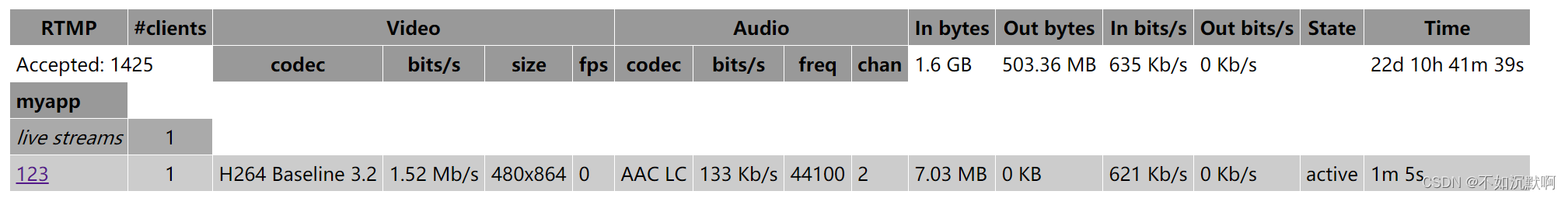

Result:

Link: https://pan.baidu.com/s/1T6wqTbJweKEeanTz9gR1ow

Extraction code: 9jgr
