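Contents

- Preface

- Crawl target

- Preparation

- Code walkthrough

- 1. Setting up pagination

- 2. Getting a proxy IP

- 3. Sending the request

- 4. Getting the detail-page URLs

- 5. Extracting the detail information

- 6. Saving to the database

- 7. Looping over the pages

- 8. Running it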

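Preface

- 🔥🔥 This article is part of the Python crawler column 《Python爬虫实战100例》 (100 Practical Python Crawler Examples).

- 📝📝 The column covers hands-on Python crawler cases, from basic to advanced crawlers. Free subscriptions are welcome.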

Crawl target

The page we are going to crawl is:

http://www.mp.cc/search/1?category=25

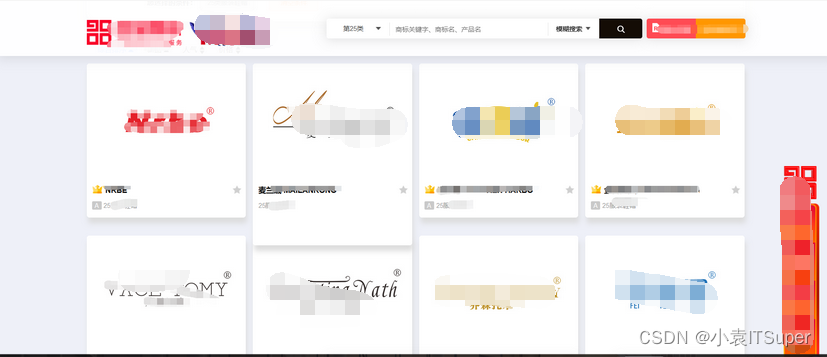

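The site's listing page looks like this:

1) The first page shows 39 trademarks, and each one has to be opened to get its details (the screenshot does not show all of them).

The content inside the red boxes is what we want to crawl.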

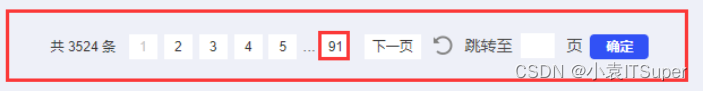

2) There are 91 pages in total.

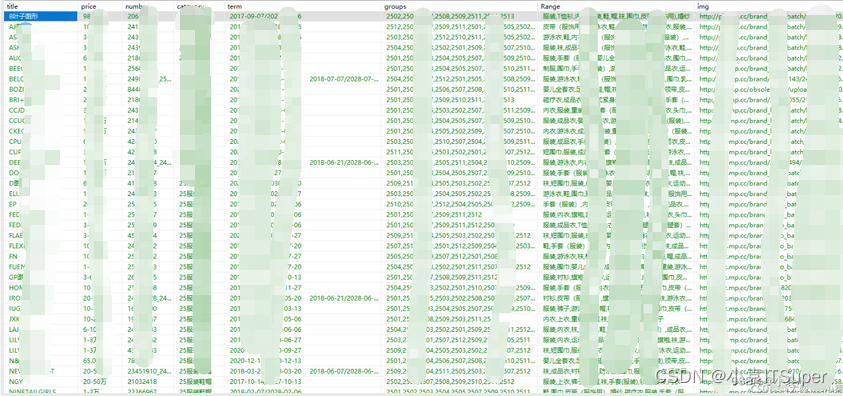

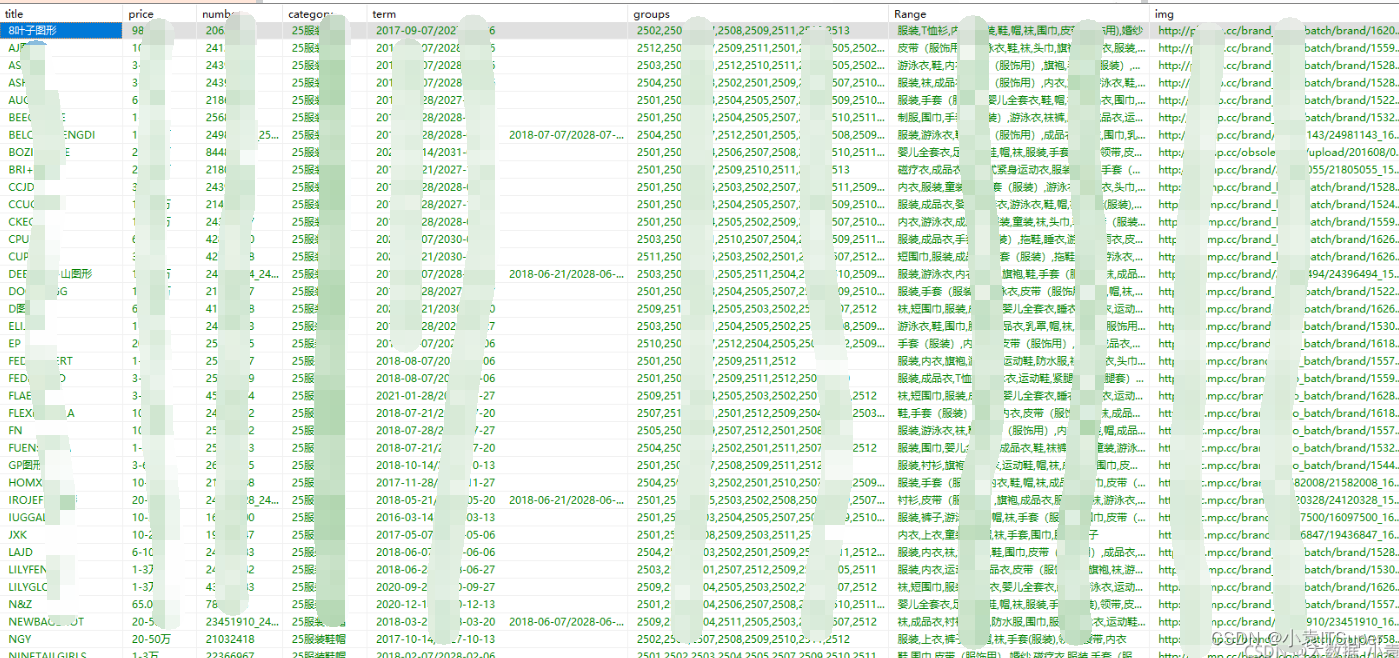

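Here is the result after crawling, saved in SQL Server.

The fields crawled are: trademark name, trademark price, trademark number, category, term of exclusive use, similar groups, registration scope, and trademark image URL.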

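Preparation

I used Python 3.8 and the VS Code editor. The required libraries are requests, lxml (for its etree module), and pymssql.

Import the libraries at the top of the script: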

import requests

from lxml import etree

import pymssql

Create the table:

CREATE TABLE "MPSB" (
    "title" NVARCHAR(MAX),
    "price" NVARCHAR(MAX),
    "number" NVARCHAR(MAX),
    "category" NVARCHAR(MAX),
    "term" NVARCHAR(MAX),
    "groups" NVARCHAR(MAX),
    "Range" NVARCHAR(MAX),
    "img_url" NVARCHAR(MAX)
)

To avoid columns that turn out to be too short, every field is simply declared as MAX.

Code walkthrough

Here is the complete code first, followed by a step-by-step explanation.

import requests
from lxml import etree
import pymssql
import time


class TrademarkSpider:
    def __init__(self):
        # Placeholders: page number first, then category number
        self.baseurl = "http://www.mp.cc/search/%s?category=%s"
        self.headers = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/95.0.4638.69 Safari/537.36"}

    def parse_url(self, url):
        # Fetch a page and return its HTML source as text
        response = requests.get(url, headers=self.headers)
        time.sleep(2)  # pause between requests
        return response.content.decode()

    def get_page_num(self, html_str):
        # Read the last page number from the pagination bar
        html = etree.HTML(html_str)
        div_list = html.xpath("//div[@class='pagination']")
        page = 1  # default when there is no pagination bar
        try:
            for div in div_list:
                a = div.xpath("//a[@class='number']/text()")
                index = len(a) - 1
                page = int(a[index])
        except (IndexError, ValueError):
            page = 1
        print(page, 'pages')
        return page

    def get_content_list(self, html_str):
        # Collect the detail-page URLs found on one listing page
        html = etree.HTML(html_str)
        div_list = html.xpath("//div[@class='item_wrapper']")
        item = {}
        for div in div_list:
            # "//" searches the whole document, so each pass returns every href
            item["href"] = div.xpath("//a[@class='img-container']/@href")
        detailed_num = len(div_list)
        print(detailed_num)
        for i in range(0, detailed_num):
            # The hrefs are site-relative, so prepend the domain
            item["href"][i] = 'http://www.mp.cc%s' % item["href"][i]
        content_list = []  # stays empty when the page has no items
        for value in item.values():
            content_list = value
        return content_list

    def get_information(self, content_list):
        # Visit every detail page and build one tuple per trademark
        information = []
        for i in range(len(content_list)):
            details_url = content_list[i]
            html_str = self.parse_url(details_url)
            html = etree.HTML(html_str)
            div_list = html.xpath("//div[@class='d_top']")
            item = []
            for div in div_list:
                title = div.xpath("//div[@class='d_top_rt_right']/text()")
                price = div.xpath("//div[@class='text price2box']/span[2]/text()")
                number = div.xpath("//div[@class='text'][1]/text()")
                category = div.xpath("//div[@class='text'][2]/span[@class='cate']/text()")
                term = div.xpath("//div[@class='text'][3]/text()")
                groups = div.xpath("//div[@class='text'][4]/text()")
                Range = div.xpath("//div[@class='text'][5]/text()")
                img_url = div.xpath("//img[@class='img_logo']/@src")
                # Join the text nodes and strip spaces and line breaks
                title = ' '.join(title).replace('\n', '').replace('\r', '').replace(' ', '')
                price = ' '.join(price)
                number = ' '.join(number).replace('\n', '').replace('\r', '').replace(' ', '')
                category = ' '.join(category)
                term = ' '.join(term).replace('\n', '').replace('\r', '').replace(' ', '')
                groups = ' '.join(groups).replace('\n', '').replace('\r', '').replace(' ', '')
                Range = ' '.join(Range).replace('\n', '').replace('\r', '').replace(' ', '')
                img_url = ' '.join(img_url)
                item.append(title)
                item.append(price)
                item.append(number)
                item.append(category)
                item.append(term)
                item.append(groups)
                item.append(Range)
                item.append(img_url)
            item = tuple(item)
            information.append(item)
        return information

    def insert_sqlserver(self, information):
        # Arguments: server, user, password, database
        db = pymssql.connect('.', 'sa', 'yuan427', 'test')
        if db:
            print("Connected!")
        cursor = db.cursor()
        try:
            sql = "insert into MPSB (title,price,number,category,term,groups,Range,img_url) values (%s,%s,%s,%s,%s,%s,%s,%s)"
            cursor.executemany(sql, information)
            db.commit()
            print('Loaded successfully......')
        except Exception as e:
            db.rollback()
            print(str(e))
        cursor.close()
        db.close()

    def run(self):
        for type_num in range(1, 46):  # categories 1-45
            url = self.baseurl % (1, type_num)
            html_str = self.parse_url(url)
            page = self.get_page_num(html_str) + 1
            for i in range(1, page):
                url = self.baseurl % (i, type_num)
                x = 0
                while x < 1:
                    html_str = self.parse_url(url)
                    content_list = self.get_content_list(html_str)
                    information = self.get_information(content_list)
                    self.insert_sqlserver(information)
                    x += 1


if __name__ == "__main__":
    trademarkSpider = TrademarkSpider()
    trademarkSpider.run()

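First the overall approach, then the step-by-step explanation:

- Step 1: build the URL of the listing page

- Step 2: send the request and get the response

- Step 3: get the 39 detail-page URLs on the first page

- Step 4: get the information from the 39 detail pages

- Step 5: save it to the SQL Server database

- Step 6: page through the listing (pages 1-91)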

1. Setting up pagination

First, page through pages 1-3 by hand:

http://www.mp.cc/search/1?category=25

http://www.mp.cc/search/2?category=25

http://www.mp.cc/search/3?category=25

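Only the number in the middle of the URL increases from page to page, so the listing-page URLs can be built like this:

for i in range(1, 92):
    url = self.baseurl % i

This uses string formatting. baseurl is defined in __init__(self); in this walkthrough the category is fixed at 25, whereas the complete code above also loops over the category:

self.baseurl = "http://www.mp.cc/search/%s?category=25"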

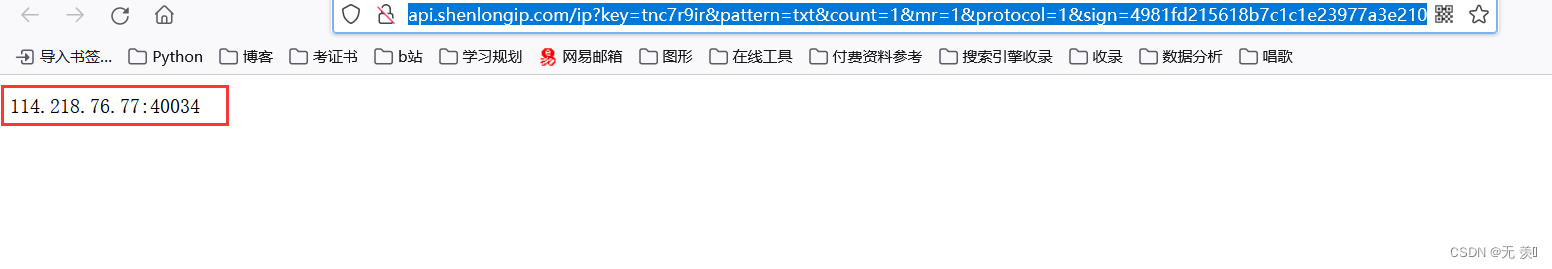
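As a small illustration of the URL pattern described in this step, the sketch below builds the full list of listing-page URLs up front. The helper name build_page_urls is made up for this example and is not part of the original spider.

```python
BASE_URL = "http://www.mp.cc/search/%s?category=25"


def build_page_urls(first_page, last_page, base_url=BASE_URL):
    """Return the listing-page URLs for first_page..last_page, inclusive."""
    # range() excludes its end value, hence the + 1
    return [base_url % page for page in range(first_page, last_page + 1)]


urls = build_page_urls(1, 91)
print(urls[0])
print(urls[-1])
print(len(urls))
```

Building the list first makes it easy to check the first and last URL by eye before any request is sent.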

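2. Getting a proxy IP

Crawler code usually goes through a proxy IP so that the site is less likely to identify it as a crawler. I use Shenlong's high-anonymity proxy IPs (new accounts get a free plan, and the monthly plans are fairly cheap). Shenlong proxy IP official site: https://h.shenlongip.com/index?from=seller&did=DrhhLD

(1) After logging in to the site, complete the personal verification:

(2) Add your own computer to the IP whitelist:

(3) Generate the API link:

(4) Check that the copied API link returns a proxy IP by opening it in a browser. It does: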

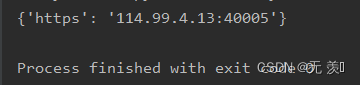

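(5) Extract the proxy IP in code:

import requests
import time

def get_ip():
    url = "put your own API link here"
    while 1:
        try:
            r = requests.get(url, timeout=10)
        except requests.RequestException:
            time.sleep(1)  # wait briefly before retrying
            continue
        ip = r.text.strip()
        # requests picks the proxy by URL scheme; mp.cc is served over
        # http, so the 'http' key is needed in addition to 'https'
        proxies = {
            'http': '%s' % ip,
            'https': '%s' % ip
        }
        return proxies

print(get_ip())

Running it returns a proxy IP without problems.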
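The step from the API's text response to the proxies dictionary can be isolated and tested without any network access. The sketch below assumes the API returns a single plain-text "ip:port" value; the helper name build_proxies and the sample address are made up for illustration. Because requests chooses the proxy by the scheme of the target URL, the dictionary carries both an http and an https entry.

```python
def build_proxies(raw):
    """Turn 'ip:port' text (assumed API response format) into a proxies dict."""
    address = raw.strip()
    if not address:
        raise ValueError("empty proxy address")
    # Add an explicit scheme when the API returns a bare ip:port
    if "://" not in address:
        address = "http://" + address
    return {"http": address, "https": address}


print(build_proxies(" 203.0.113.5:8080\n"))
```

Raising on an empty response keeps a failed API call from silently turning into a direct, unproxied request.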

3. Sending the request

Send the request and get the response:

def __init__(self):
    self.baseurl = "http://www.mp.cc/search/%s?category=25"
    self.headers = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/95.0.4638.69 Safari/537.36"}

def parse_url(self, url):
    proxies = get_ip()
    response = requests.get(url, headers=self.headers, proxies=proxies)
    return response.content.decode()

Some readers may wonder what headers is for.

- It imitates a browser's request headers, so that the server is less likely to recognise us as a crawler.

- Playing the part of a browser is a must here!

4. Getting the detail-page URLs

Get the 39 detail-page URLs on the first page:

def get_content_list(self, html_str):
    html = etree.HTML(html_str)
    div_list = html.xpath("//div[@class='item_wrapper']")
    item = {}
    for div in div_list:
        item["href"] = div.xpath("//a[@class='img-container']/@href")
    for i in range(39):
        item["href"][i] = 'http://www.mp.cc%s' % item["href"][i]
    for value in item.values():
        content_list = value
    print(content_list)
    return content_list

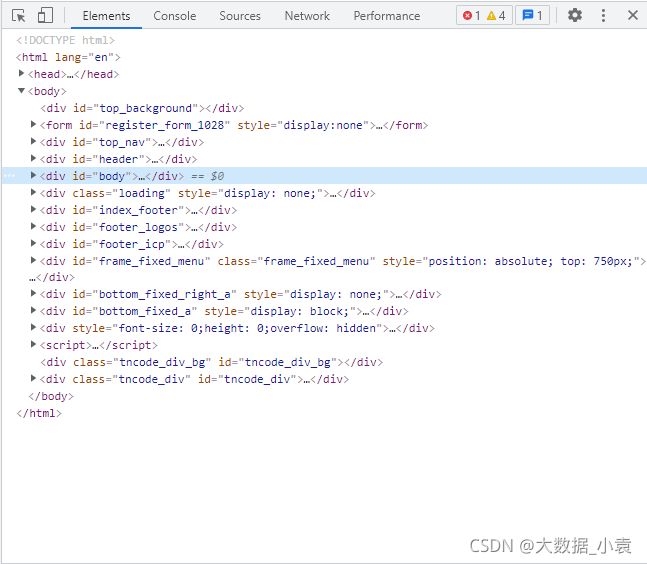

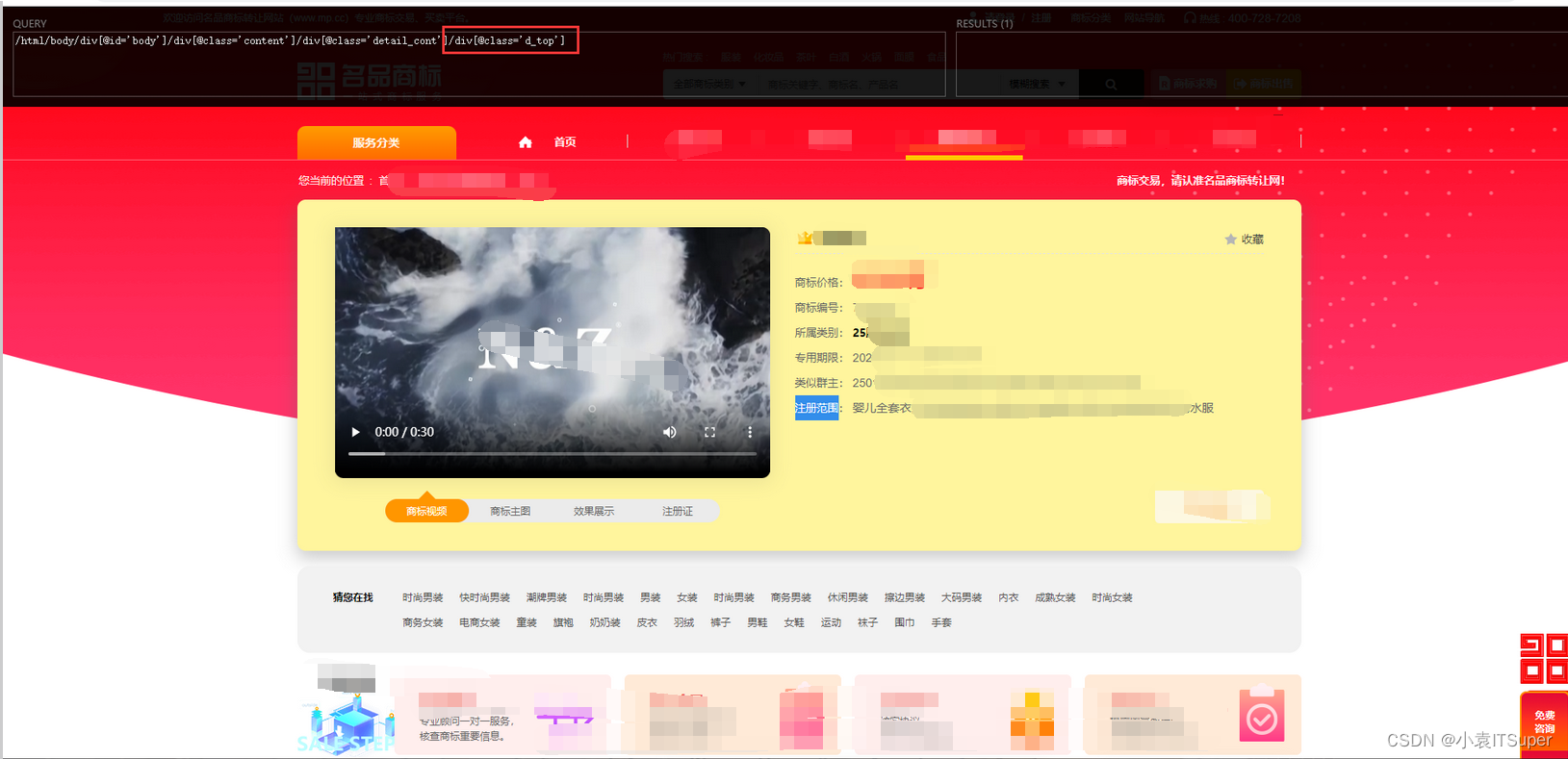

1) First convert the fetched listing-page source into an Element object, which mirrors the Elements panel in the browser, as shown below: html = etree.HTML(html_str)

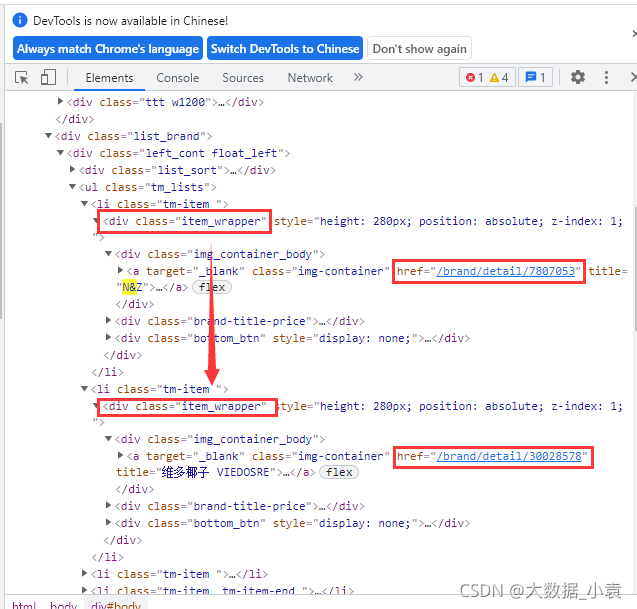

2) Write an XPath that groups the page by div: div_list = html.xpath("//div[@class='item_wrapper']")

As the figure shows, each trademark's detail URL sits inside a <div class="item_wrapper">.

3) Extract the URLs:

item = {}
for div in div_list:
    item["href"] = div.xpath("//a[@class='img-container']/@href")
for i in range(39):
    item["href"][i] = 'http://www.mp.cc%s' % item["href"][i]
for value in item.values():
    content_list = value

4) The result:

['http://www.mp.cc/brand/detail/36449901', 'http://www.mp.cc/brand/detail/26298802', 'http://www.mp.cc/brand/detail/22048146', 'http://www.mp.cc/brand/detail/4159836', 'http://www.mp.cc/brand/detail/9603914', 'http://www.mp.cc/brand/detail/4156243', 'http://www.mp.cc/brand/detail/36575014', 'http://www.mp.cc/brand/detail/39965756', 'http://www.mp.cc/brand/detail/36594259', 'http://www.mp.cc/brand/detail/37941094', 'http://www.mp.cc/brand/detail/38162960', 'http://www.mp.cc/brand/detail/38500643', 'http://www.mp.cc/brand/detail/38025192', 'http://www.mp.cc/brand/detail/37755982', 'http://www.mp.cc/brand/detail/37153272', 'http://www.mp.cc/brand/detail/35335841', 'http://www.mp.cc/brand/detail/36003501', 'http://www.mp.cc/brand/detail/27794101', 'http://www.mp.cc/brand/detail/26400645', 'http://www.mp.cc/brand/detail/25687631', 'http://www.mp.cc/brand/detail/25592319',

'http://www.mp.cc/brand/detail/25593974', 'http://www.mp.cc/brand/detail/24397124', 'http://www.mp.cc/brand/detail/23793395', 'http://www.mp.cc/brand/detail/38517219', 'http://www.mp.cc/brand/detail/36921312', 'http://www.mp.cc/brand/detail/6545326_6545324', 'http://www.mp.cc/brand/detail/8281719', 'http://www.mp.cc/brand/detail/4040639', 'http://www.mp.cc/brand/detail/42819737', 'http://www.mp.cc/brand/detail/40922772', 'http://www.mp.cc/brand/detail/41085317', 'http://www.mp.cc/brand/detail/40122971', 'http://www.mp.cc/brand/detail/39200273', 'http://www.mp.cc/brand/detail/38870472', 'http://www.mp.cc/brand/detail/38037836', 'http://www.mp.cc/brand/detail/37387087', 'http://www.mp.cc/brand/detail/36656221', 'http://www.mp.cc/brand/detail/25858042']

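The detail-page URLs we extract are only site-relative paths, so they are concatenated with the domain.

Some readers may ask why the result goes into a dict first and only then into a list.

The reason is that the XPath starts with //, so every pass of the loop returns all the hrefs on the page, not just the one inside the current div. Appending each of those results to a list would pile up nested lists whose entries cannot be concatenated directly, whereas assigning to the same dict key simply overwrites the previous copy.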
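An alternative to the dict detour is to look up the link relative to each item, using a path that starts with .// instead of //, and append one URL per item. The sketch below shows the idea on a tiny hand-written snippet. To stay runnable without third-party packages it uses the standard library's xml.etree.ElementTree as a stand-in for lxml, and the sample markup and detail IDs are invented for illustration.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

# Made-up, well-formed sample that mimics the listing-page structure
SAMPLE = """
<div class="list">
  <div class="item_wrapper"><a class="img-container" href="/brand/detail/111">A</a></div>
  <div class="item_wrapper"><a class="img-container" href="/brand/detail/222">B</a></div>
</div>
"""


def detail_urls(markup, base="http://www.mp.cc"):
    """Return one absolute detail URL per item_wrapper div."""
    root = ET.fromstring(markup.strip())
    urls = []
    for div in root.findall(".//div[@class='item_wrapper']"):
        # ".//" keeps the search inside the current div
        link = div.find(".//a[@class='img-container']")
        if link is not None:
            urls.append(urljoin(base, link.get("href")))
    return urls


print(detail_urls(SAMPLE))
```

With lxml the same relative form would be div.xpath(".//a[@class='img-container']/@href"). urljoin also removes the need to hard-code the number of items per page.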

5. Extracting the detail information

Visit each of the 39 detail pages and extract the information:

def get_information(self, content_list):
    information = []
    for i in range(len(content_list)):
        details_url = content_list[i]
        html_str = self.parse_url(details_url)
        html = etree.HTML(html_str)
        div_list = html.xpath("//div[@class='d_top']")
        item = []
        for div in div_list:
            title = div.xpath("//div[@class='d_top_rt_right']/text()")
            price = div.xpath("//div[@class='text price2box']/span[2]/text()")
            number = div.xpath("//div[@class='text'][1]/text()")
            category = div.xpath("//div[@class='text'][2]/span[@class='cate']/text()")
            term = div.xpath("//div[@class='text'][3]/text()")
            groups = div.xpath("//div[@class='text'][4]/text()")
            Range = div.xpath("//div[@class='text'][5]/text()")
            img = div.xpath("//img[@class='img_logo']/@src")
            title = ' '.join(title).replace('\n', '').replace('\r', '').replace(' ', '')
            price = ' '.join(price)
            number = ' '.join(number).replace('\n', '').replace('\r', '').replace(' ', '')
            category = ' '.join(category)
            term = ' '.join(term).replace('\n', '').replace('\r', '').replace(' ', '')
            groups = ' '.join(groups).replace('\n', '').replace('\r', '').replace(' ', '')
            Range = ' '.join(Range).replace('\n', '').replace('\r', '').replace(' ', '')
            img = ' '.join(img)
            item.append(title)
            item.append(price)
            item.append(number)
            item.append(category)
            item.append(term)
            item.append(groups)
            item.append(Range)
            item.append(img)
        item = tuple(item)
        information.append(item)
    return information

1) Iterate over content_list to take each detail-page URL in turn, and call parse_url to fetch the page source:

for i in range(len(content_list)):
    details_url = content_list[i]
    html_str = self.parse_url(details_url)

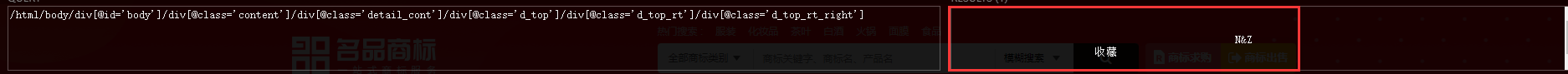

2) Group by div and extract the data:

html = etree.HTML(html_str)
div_list = html.xpath("//div[@class='d_top']")
item = []
for div in div_list:
    title = div.xpath("//div[@class='d_top_rt_right']/text()")
    price = div.xpath("//div[@class='text price2box']/span[2]/text()")
    number = div.xpath("//div[@class='text'][1]/text()")
    category = div.xpath("//div[@class='text'][2]/span[@class='cate']/text()")
    term = div.xpath("//div[@class='text'][3]/text()")
    groups = div.xpath("//div[@class='text'][4]/text()")
    Range = div.xpath("//div[@class='text'][5]/text()")
    img = div.xpath("//img[@class='img_logo']/@src")

- Some readers will ask how to write the XPath expressions. I used the XPath Helper tool (the black box in the screenshot), which helps write XPaths that locate elements. I can write an install-and-usage tutorial if there is interest.

3) Some values contain spaces and line breaks, so strip those out first:

title = ' '.join(title).replace('\n', '').replace('\r', '').replace(' ', '')
price = ' '.join(price)
number = ' '.join(number).replace('\n', '').replace('\r', '').replace(' ', '')
category = ' '.join(category)
term = ' '.join(term).replace('\n', '').replace('\r', '').replace(' ', '')
groups = ' '.join(groups).replace('\n', '').replace('\r', '').replace(' ', '')
Range = ' '.join(Range).replace('\n', '').replace('\r', '').replace(' ', '')
img = ' '.join(img)
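The repeated join-and-replace chain can be folded into one small helper. The sketch below is one possible version; the name clean_text is made up for this example. Note one difference from the chain above: str.split() with no arguments also removes tabs and other Unicode whitespace, not only spaces, \n and \r.

```python
def clean_text(parts):
    """Join the text() results of an XPath query and drop all whitespace."""
    # split() with no arguments splits on any run of whitespace,
    # so joining the pieces back together removes it entirely
    return "".join("".join(parts).split())


print(clean_text(["\n  ab c\r\n", " d "]))
```

Each cleaned field then becomes a one-liner such as title = clean_text(title).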

6. Saving to the database

Save the data to the database. Pay attention to the server name, account, password, and database name, and to the table name in the SQL statement, which must match the table created earlier.

def insert_sqlserver(self, information):
    db = pymssql.connect('.', 'sa', 'yuan427', 'test')
    if db:
        print("Connected!")
    cursor = db.cursor()
    try:
        sql = "insert into MPSB (title,price,number,category,term,groups,Range,img_url) values (%s,%s,%s,%s,%s,%s,%s,%s)"
        cursor.executemany(sql, information)
        db.commit()
        print('Loaded successfully......')
    except Exception as e:
        db.rollback()
        print(str(e))
    cursor.close()
    db.close()
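The insert-then-commit-or-rollback pattern can be tried out without a SQL Server instance. The sketch below swaps in Python's built-in sqlite3 module with an in-memory database purely for demonstration; the table demo, its three columns, and the sample rows are made up. sqlite3 uses ? placeholders where pymssql uses %s, but the flow of executemany, commit, and rollback is the same.

```python
import sqlite3


def insert_rows(conn, rows):
    """Insert all rows in one transaction; roll back if any row fails."""
    sql = "INSERT INTO demo (title, price, img_url) VALUES (?, ?, ?)"
    try:
        conn.executemany(sql, rows)
        conn.commit()
        return True
    except sqlite3.Error as exc:
        conn.rollback()
        print("insert failed:", exc)
        return False


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE demo (title TEXT, price TEXT, img_url TEXT)")

ok = insert_rows(conn, [
    ("Mark A", "100", "http://example.com/a.png"),
    ("Mark B", "200", "http://example.com/b.png"),
])
# A row with too few values makes the whole batch fail and roll back
bad = insert_rows(conn, [("too", "few")])
count = conn.execute("SELECT COUNT(*) FROM demo").fetchone()[0]
print(ok, bad, count)
```

Returning a flag instead of only printing lets the caller decide whether to retry or skip a page whose rows could not be stored.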

7. Looping over the pages

Repeat the five steps above for every page number:

def run(self):
    for i in range(1, 92):
        url = self.baseurl % i
        x = 0
        while x < 1:
            html_str = self.parse_url(url)
            content_list = self.get_content_list(html_str)
            information = self.get_information(content_list)
            self.insert_sqlserver(information)
            x += 1

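8. Running it

if __name__ == "__main__":
    trademarkSpider = TrademarkSpider()
    trademarkSpider.run()

Open the database and check that the result is what we wanted:

All done!

This is my first hands-on crawler write-up, so if anything here is wrong, corrections are welcome. If something is unclear, leave a comment. Give it a like, and I will post more crawler examples when I have time!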
