
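TensorFlow is a Python neural-network framework that is widely used when learning deep learning. This post explains how to install some of its versions with pip on Linux.

(Note: pip is the installation method officially recommended by TensorFlow.)

I use Anaconda to manage virtual environments, and everything below is done inside a virtual environment. Installing Python and Anaconda and managing virtual environments are not covered here; I may write separate posts about them later.

First, go to the official website: TensorFlow

The main TensorFlow installation page: Install TensorFlow 2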

Contents

- 1. Installing the latest TensorFlow 2 (2.9.0 at the time of writing)

- 2. TensorFlow 1.14 + Keras 2.3.1 (installed on 2022.8.17)

- 3. Other references used while writing this post

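1. Installing the latest TensorFlow 2 (2.9.0 at the time of writing)

Official installation guide: Install TensorFlow 2

Guide to installing with pip: Install TensorFlow with pip

The basic system requirements for TensorFlow are listed on that page. It is 2022, so most machines should meet them.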

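Create a new Anaconda virtual environment: conda create -n envtf2 python=3.8 (Python must be 3.7-3.10; I use 3.8 here mainly because I need it to install another package)

Activate the virtual environment: conda activate envtf2

To use CUDA, first confirm that the NVIDIA GPU driver is installed on the machine: nvidia-smi (it usually is; without it you would hardly have got this far)

Install the required cudatoolkit and cudnn versions: conda install -c conda-forge cudatoolkit=11.2 cudnn=8.1.0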

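There are two ways to configure the library path:

① Temporary: in every session, activate the virtual environment and then run: export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/

② Automatic: run the export every time the virtual environment is activated (I have not tried this; I have always used the first method):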

mkdir -p $CONDA_PREFIX/etc/conda/activate.d

echo 'export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/' > $CONDA_PREFIX/etc/conda/activate.d/env_vars.sh
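Whichever of the two methods is used, it can be handy to check from Python whether the setting actually took effect in the current session. The following is only a small sketch: the function name conda_lib_on_path is made up for this post, and it assumes a conda environment is active so that CONDA_PREFIX is set.

```python
import os


def conda_lib_on_path(environ=None):
    """Return True if $CONDA_PREFIX/lib is listed in LD_LIBRARY_PATH.

    `environ` defaults to os.environ; a plain dict can be passed for testing.
    """
    env = os.environ if environ is None else environ
    prefix = env.get("CONDA_PREFIX")
    if not prefix:
        return False  # no conda environment is active
    wanted = os.path.normpath(os.path.join(prefix, "lib"))
    entries = env.get("LD_LIBRARY_PATH", "").split(":")
    # normpath removes the trailing slash used in the export command
    return any(os.path.normpath(entry) == wanted for entry in entries if entry)


if __name__ == "__main__":
    print("conda lib dir on LD_LIBRARY_PATH:", conda_lib_on_path())
```

If this prints False after activating the environment, the export step was skipped or the activate.d script did not run.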

Upgrade pip: pip install --upgrade pip

Install TensorFlow: pip install tensorflow

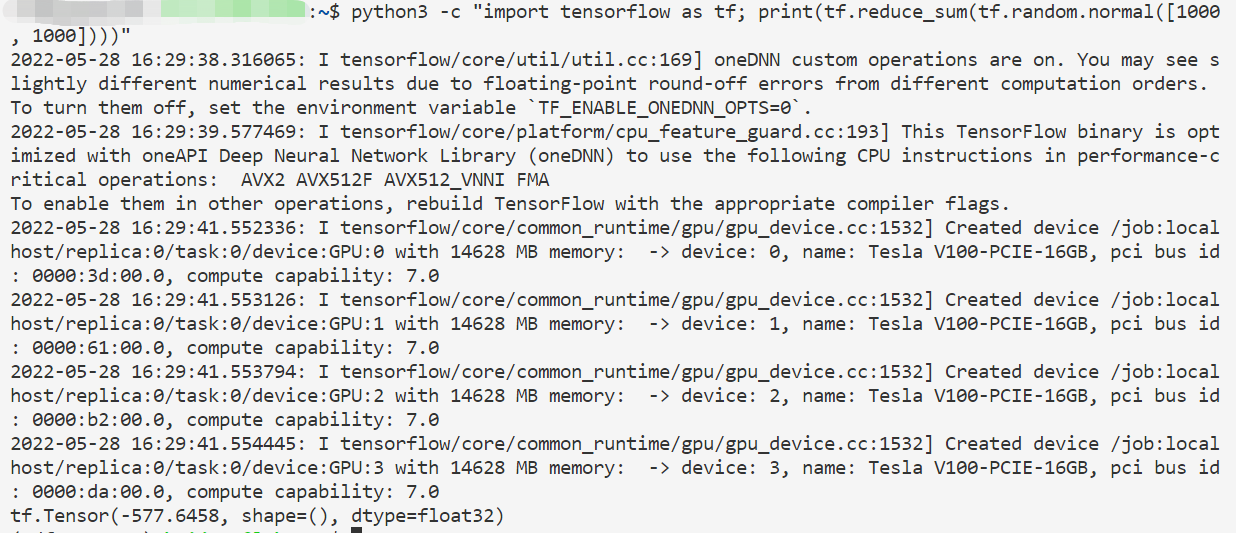

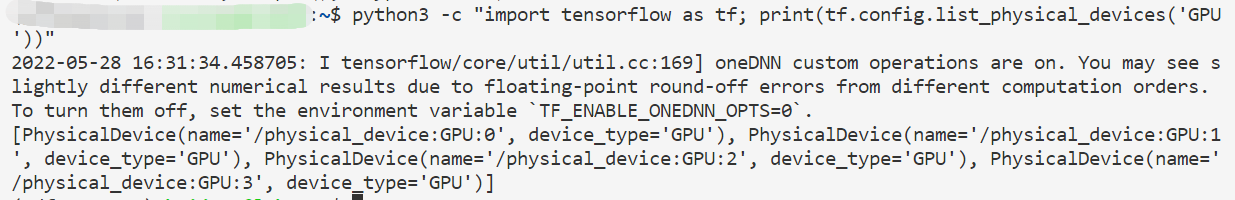

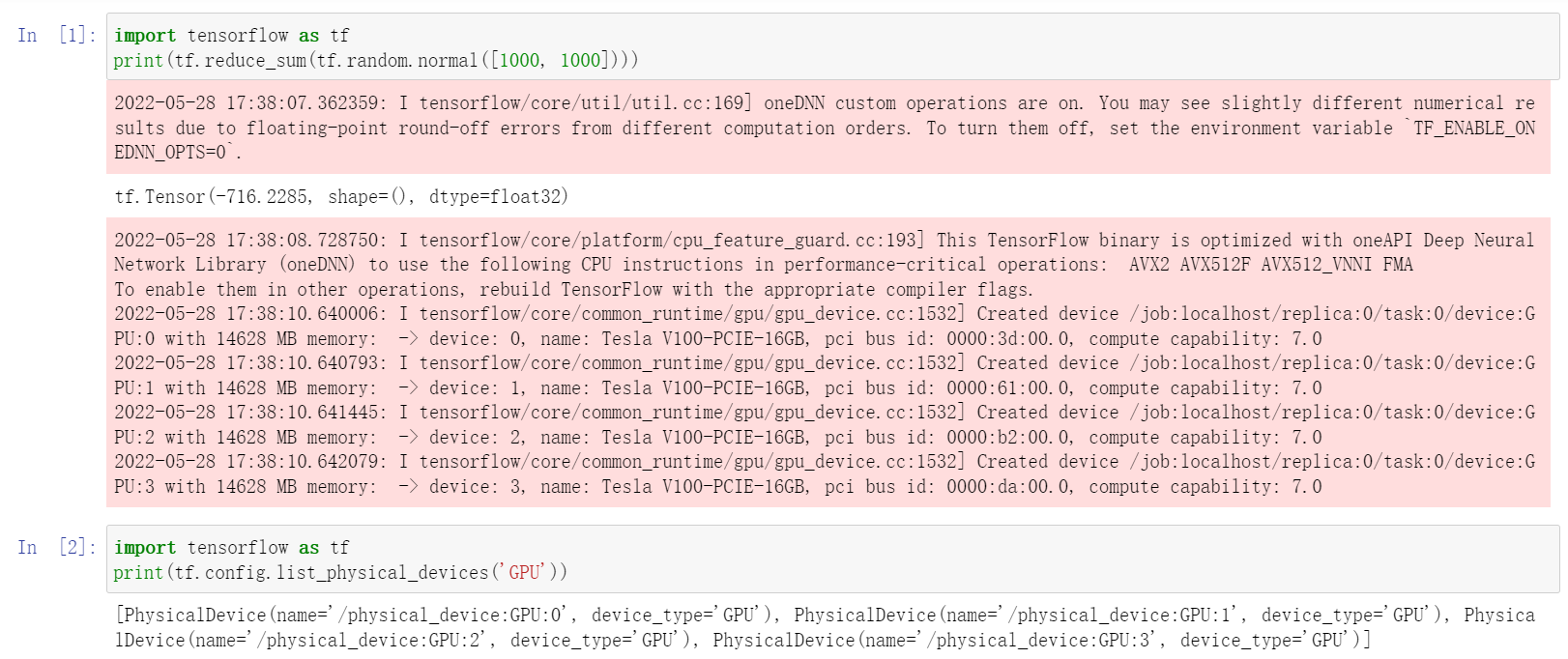

Check that TensorFlow works on the CPU: python3 -c "import tensorflow as tf; print(tf.reduce_sum(tf.random.normal([1000, 1000])))"

(My server has 4 GPUs)

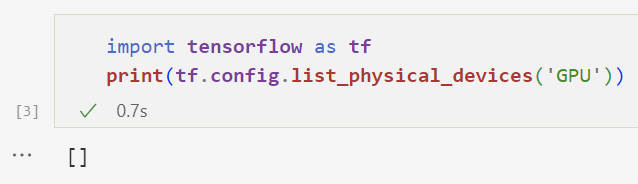

Check that TensorFlow can see the GPUs: python3 -c "import tensorflow as tf; print(tf.config.list_physical_devices('GPU'))"

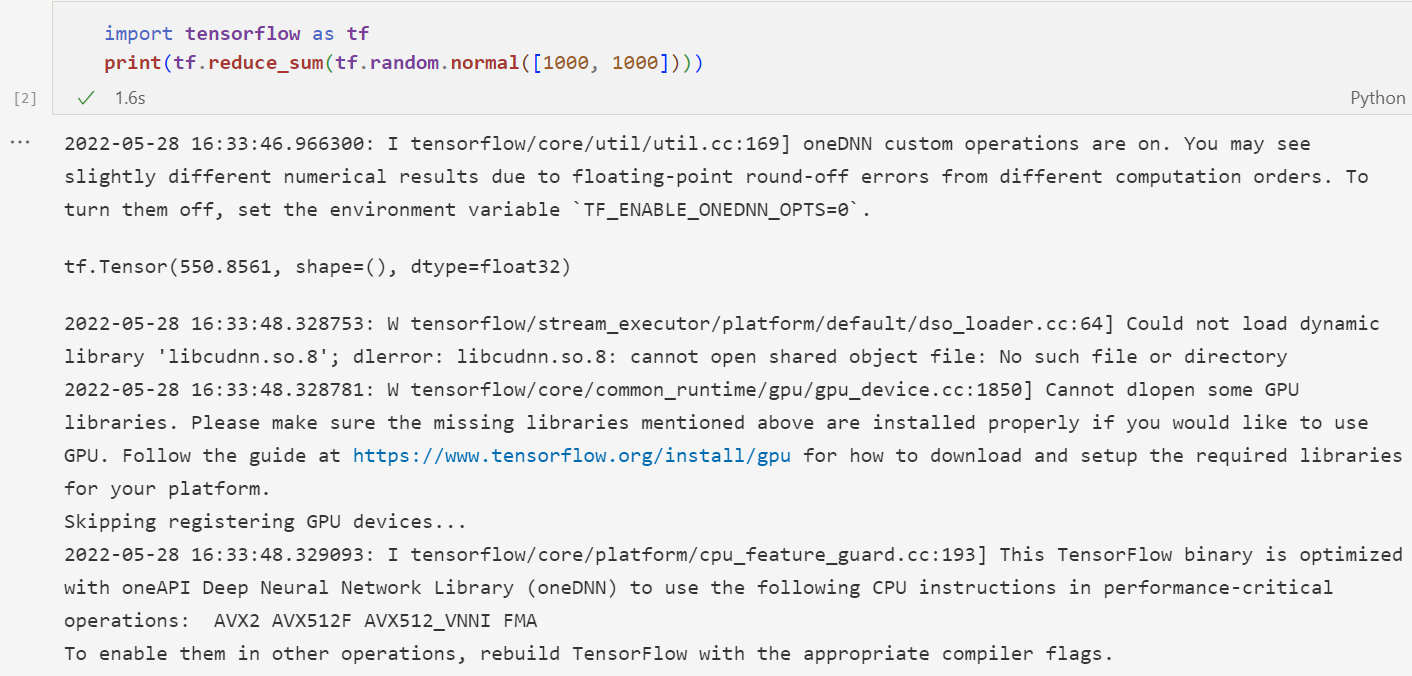
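The same GPU check can also be kept as a small script instead of a one-liner. This is just a sketch for TensorFlow 2: list_tf_gpus is a name I made up, and the function returns None when TensorFlow cannot be imported, so that it can tell "not installed" apart from "installed but no GPU visible".

```python
def list_tf_gpus():
    """Return the names of the GPUs TensorFlow 2 can see.

    Returns None if TensorFlow is not importable in this environment.
    """
    try:
        import tensorflow as tf
    except ImportError:
        return None
    return [gpu.name for gpu in tf.config.list_physical_devices("GPU")]


if __name__ == "__main__":
    gpus = list_tf_gpus()
    if gpus is None:
        print("TensorFlow is not installed in this environment")
    elif not gpus:
        print("TensorFlow imported, but no GPU is visible (check LD_LIBRARY_PATH)")
    else:
        print("Visible GPUs:", gpus)
```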

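Note that the steps above were run in a terminal and cannot simply be moved into a Jupyter notebook. A failed attempt:

In a Jupyter notebook, calling !export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/ directly did not work (the ! form runs in a throwaway subshell, so it cannot change the kernel's own environment), setting os.environ['LD_LIBRARY_PATH'] did not work, and %env did not work either, which left me quite confused. It seems that with Jupyter notebook the kernel itself has to be modified, but it still failed after I edited the kernel.json under the path shown by jupyter kernelspec list. (Based on python - How to set env variable in Jupyter notebook - Stack Overflow)

I also found some approaches online that solve this with a global configuration, but this server also has to run other projects on other versions, so that is not convenient for me. My usual solution is simply not to run TF projects in Jupyter notebook. The material I read, for reference: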

解决TensorFlow在terminal中正常但在jupyter notebook中报错的方案 - stardsd - 博客园

Add CUDA Library Path to Jupyterhub Notebook - AIML - wiki.ucar.edu

install pytorch with jupyter - 知乎

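So, to use TensorFlow's GPU support in Jupyter notebook, you must first activate the virtual environment on the command line, then run export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$CONDA_PREFIX/lib/, and only then start Jupyter with the jupyter notebook command. After that it works normally.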
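The order of these steps can also be scripted. Below is a rough sketch in Python rather than something I have run on the server: env_with_conda_libs and launch_notebook are hypothetical names, and the sketch assumes the jupyter command is on PATH and that kernels inherit the environment of the notebook server that starts them.

```python
import os
import subprocess


def env_with_conda_libs(environ):
    """Copy `environ` and append $CONDA_PREFIX/lib to LD_LIBRARY_PATH."""
    env = dict(environ)
    prefix = env.get("CONDA_PREFIX")
    if prefix:
        lib_dir = prefix.rstrip("/") + "/lib"
        current = env.get("LD_LIBRARY_PATH", "")
        env["LD_LIBRARY_PATH"] = current + ":" + lib_dir if current else lib_dir
    return env


def launch_notebook():
    """Start Jupyter with the library path already set in its environment."""
    # The variable is set before the Jupyter process starts, which is the
    # difference from changing os.environ inside an already running kernel.
    return subprocess.run(["jupyter", "notebook"], env=env_with_conda_libs(os.environ))
```

Calling launch_notebook() from a script run inside the activated environment should be equivalent to typing the export and jupyter notebook commands by hand.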

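(Note: if only the ipykernel package is installed, VSCode can open notebook files, but the jupyter notebook command cannot serve the page that opens in a browser, so jupyterlab has to be installed: pip install jupyterlab (see Project Jupyter | Installing Jupyter). Even on a remote server, VSCode can forward the port so that the page opens in a local browser under localhost, which is quite convenient.)

What a successful run looks like: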

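2. TensorFlow 1.14 + Keras 2.3.1 (installed on 2022.8.17)

This is the configuration required by Su Jianlin's bert4keras (https://github.com/bojone/bert4keras).

According to the TensorFlow website (Install TensorFlow with pip), only TensorFlow 2.2 and later support Python 3.8 and later, so I need a Python 3.7 environment.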
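That rule is easy to get wrong when several environments are around, so here is a tiny sketch of it as code. It only encodes the single constraint quoted above (Python 3.8+ needs TensorFlow 2.2+); the function name needs_older_python is hypothetical, and the official installation page remains the reference for everything else.

```python
import sys


def needs_older_python(tf_version, py_version=None):
    """Return True if `tf_version` predates 2.2 while Python is 3.8 or newer.

    tf_version: a string such as "1.14" or "2.9.0".
    py_version: a (major, minor) tuple; defaults to the running interpreter.
    """
    py = tuple(py_version) if py_version is not None else tuple(sys.version_info[:2])
    tf = tuple(int(part) for part in tf_version.split(".")[:2])
    return tf < (2, 2) and py >= (3, 8)


if __name__ == "__main__":
    if needs_older_python("1.14"):
        print("TensorFlow 1.14 needs Python 3.7 or older; create a separate environment")
```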

Create a new Anaconda virtual environment: conda create -n envtf114 python=3.7 pip

Install the GPU build of TensorFlow: pip install tensorflow-gpu==1.14

Try the following code (from tensorflow-gpu1.14代码测试_爱听许嵩歌的博客-CSDN博客_tensorflow-gpu测试代码):

import tensorflow as tf

with tf.device('/cpu:0'):

    a = tf.constant([1.0, 2.0, 3.0], shape=[3], name='a')

    b = tf.constant([1.0, 2.0, 3.0], shape=[3], name='b')

with tf.device('/gpu:2'):

    c = a + b

sess = tf.Session(config=tf.ConfigProto(allow_soft_placement=True, log_device_placement=True))

sess.run(tf.global_variables_initializer())

print(sess.run(c))

It fails with:

Traceback (most recent call last):

File "trytf1.py", line 1, in <module>

import tensorflow as tf

File "env_path/lib/python3.7/site-packages/tensorflow/__init__.py", line 28, in <module>

from tensorflow.python import pywrap_tensorflow # pylint: disable=unused-import

File "env_path/lib/python3.7/site-packages/tensorflow/python/__init__.py", line 52, in <module>

from tensorflow.core.framework.graph_pb2 import *

File "env_path/lib/python3.7/site-packages/tensorflow/core/framework/graph_pb2.py", line 16, in <module>

from tensorflow.core.framework import node_def_pb2 as tensorflow_dot_core_dot_framework_dot_node__def__pb2

File "env_path/lib/python3.7/site-packages/tensorflow/core/framework/node_def_pb2.py", line 16, in <module>

from tensorflow.core.framework import attr_value_pb2 as tensorflow_dot_core_dot_framework_dot_attr__value__pb2

File "env_path/lib/python3.7/site-packages/tensorflow/core/framework/attr_value_pb2.py", line 16, in <module>

from tensorflow.core.framework import tensor_pb2 as tensorflow_dot_core_dot_framework_dot_tensor__pb2

File "env_path/lib/python3.7/site-packages/tensorflow/core/framework/tensor_pb2.py", line 16, in <module>

from tensorflow.core.framework import resource_handle_pb2 as tensorflow_dot_core_dot_framework_dot_resource__handle__pb2

File "env_path/lib/python3.7/site-packages/tensorflow/core/framework/resource_handle_pb2.py", line 42, in <module>

serialized_options=None, file=DESCRIPTOR),

File "/home/wanghuijuan/anaconda3/envs/envtf114/lib/python3.7/site-packages/google/protobuf/descriptor.py", line 560, in __new__

_message.Message._CheckCalledFromGeneratedFile()

TypeError: Descriptors cannot not be created directly.

If this call came from a _pb2.py file, your generated code is out of date and must be regenerated with protoc >= 3.19.0.

If you cannot immediately regenerate your protos, some other possible workarounds are:

1. Downgrade the protobuf package to 3.20.x or lower.

2. Set PROTOCOL_BUFFERS_PYTHON_IMPLEMENTATION=python (but this will use pure-Python parsing and will be much slower).

More information: https://developers.google.com/protocol-buffers/docs/news/2022-05-06#python-updates

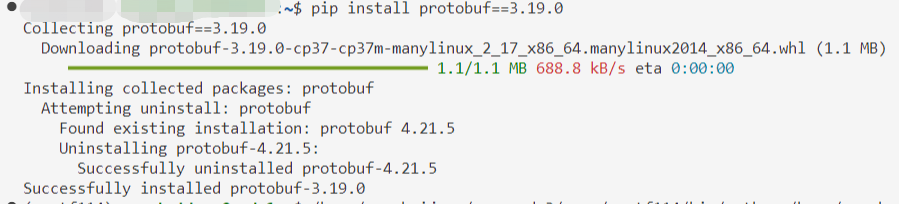

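I did not really know what had happened, but the message says that the installed protobuf is newer than the generated _pb2.py files bundled with TensorFlow 1.14 can handle, so I simply followed its first suggestion (see 1. Downgrade the protobuf package to 3.20.x or lower._weixin_44834086的博客-CSDN博客):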

pip install protobuf==3.19.0
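To see in advance whether an environment will hit this error, the installed protobuf version can be compared with the 3.20.x limit named in the message. This is a minimal sketch; protobuf_too_new and installed_protobuf_version are names invented here, and only the major and minor numbers are compared.

```python
def protobuf_too_new(version):
    """Return True if `version` is newer than the 3.20.x line from the error text."""
    major, minor = (int(part) for part in version.split(".")[:2])
    return (major, minor) > (3, 20)


def installed_protobuf_version():
    """Return the installed protobuf version string, or None if it is missing."""
    try:
        import google.protobuf
    except ImportError:
        return None
    return google.protobuf.__version__


if __name__ == "__main__":
    version = installed_protobuf_version()
    if version is None:
        print("protobuf is not installed")
    elif protobuf_too_new(version):
        print("protobuf", version, "is newer than 3.20.x; TensorFlow 1.14 will not import")
    else:
        print("protobuf", version, "is within the supported range")
```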

Then I ran the code again, and this time the output became:

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:516: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:517: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint8 = np.dtype([("quint8", np.uint8, 1)])

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:518: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint16 = np.dtype([("qint16", np.int16, 1)])

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:519: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint16 = np.dtype([("quint16", np.uint16, 1)])

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:520: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint32 = np.dtype([("qint32", np.int32, 1)])

env_path/lib/python3.7/site-packages/tensorflow/python/framework/dtypes.py:525: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

np_resource = np.dtype([("resource", np.ubyte, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:541: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:542: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint8 = np.dtype([("quint8", np.uint8, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:543: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint16 = np.dtype([("qint16", np.int16, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:544: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint16 = np.dtype([("quint16", np.uint16, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:545: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint32 = np.dtype([("qint32", np.int32, 1)])

env_path/lib/python3.7/site-packages/tensorboard/compat/tensorflow_stub/dtypes.py:550: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

np_resource = np.dtype([("resource", np.ubyte, 1)])

WARNING:tensorflow:From trytf1.py:11: The name tf.Session is deprecated. Please use tf.compat.v1.Session instead.

WARNING:tensorflow:From trytf1.py:11: The name tf.ConfigProto is deprecated. Please use tf.compat.v1.ConfigProto instead.

2022-08-17 15:27:08.829308: I tensorflow/core/platform/cpu_feature_guard.cc:142] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2 AVX512F FMA

2022-08-17 15:27:08.865801: I tensorflow/core/platform/profile_utils/cpu_utils.cc:94] CPU Frequency: 1800000000 Hz

2022-08-17 15:27:08.867967: I tensorflow/compiler/xla/service/service.cc:168] XLA service 0x55f031fff480 executing computations on platform Host. Devices:

2022-08-17 15:27:08.868039: I tensorflow/compiler/xla/service/service.cc:175] StreamExecutor device (0): <undefined>, <undefined>

2022-08-17 15:27:08.871550: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcuda.so.1

2022-08-17 15:27:09.628470: I tensorflow/compiler/xla/service/service.cc:168] XLA service 0x55f0332f1040 executing computations on platform CUDA. Devices:

2022-08-17 15:27:09.628540: I tensorflow/compiler/xla/service/service.cc:175] StreamExecutor device (0): Tesla T4, Compute Capability 7.5

2022-08-17 15:27:09.628560: I tensorflow/compiler/xla/service/service.cc:175] StreamExecutor device (1): Tesla T4, Compute Capability 7.5

2022-08-17 15:27:09.628580: I tensorflow/compiler/xla/service/service.cc:175] StreamExecutor device (2): Tesla T4, Compute Capability 7.5

2022-08-17 15:27:09.628597: I tensorflow/compiler/xla/service/service.cc:175] StreamExecutor device (3): Tesla T4, Compute Capability 7.5

2022-08-17 15:27:09.645921: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1640] Found device 0 with properties:

name: Tesla T4 major: 7 minor: 5 memoryClockRate(GHz): 1.59

pciBusID: 0000:3b:00.0

2022-08-17 15:27:09.650885: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1640] Found device 1 with properties:

name: Tesla T4 major: 7 minor: 5 memoryClockRate(GHz): 1.59

pciBusID: 0000:5e:00.0

2022-08-17 15:27:09.652426: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1640] Found device 2 with properties:

name: Tesla T4 major: 7 minor: 5 memoryClockRate(GHz): 1.59

pciBusID: 0000:b1:00.0

2022-08-17 15:27:09.653863: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1640] Found device 3 with properties:

name: Tesla T4 major: 7 minor: 5 memoryClockRate(GHz): 1.59

pciBusID: 0000:d9:00.0

2022-08-17 15:27:09.654104: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcudart.so.10.0'; dlerror: libcudart.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.654223: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcublas.so.10.0'; dlerror: libcublas.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.654332: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcufft.so.10.0'; dlerror: libcufft.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.654437: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcurand.so.10.0'; dlerror: libcurand.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.654540: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcusolver.so.10.0'; dlerror: libcusolver.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.654661: I tensorflow/stream_executor/platform/default/dso_loader.cc:53] Could not dlopen library 'libcusparse.so.10.0'; dlerror: libcusparse.so.10.0: cannot open shared object file: No such file or directory

2022-08-17 15:27:09.740681: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcudnn.so.7

2022-08-17 15:27:09.740741: W tensorflow/core/common_runtime/gpu/gpu_device.cc:1663] Cannot dlopen some GPU libraries. Skipping registering GPU devices...

2022-08-17 15:27:09.740824: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1181] Device interconnect StreamExecutor with strength 1 edge matrix:

2022-08-17 15:27:09.740848: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1187] 0 1 2 3

2022-08-17 15:27:09.740868: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 0: N Y Y Y

2022-08-17 15:27:09.740886: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 1: Y N Y Y

2022-08-17 15:27:09.740904: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 2: Y Y N Y

2022-08-17 15:27:09.740921: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 3: Y Y Y N

Device mapping:

/job:localhost/replica:0/task:0/device:XLA_CPU:0 -> device: XLA_CPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:0 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:1 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:2 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:3 -> device: XLA_GPU device

2022-08-17 15:27:09.742912: I tensorflow/core/common_runtime/direct_session.cc:296] Device mapping:

/job:localhost/replica:0/task:0/device:XLA_CPU:0 -> device: XLA_CPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:0 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:1 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:2 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:3 -> device: XLA_GPU device

WARNING:tensorflow:From trytf1.py:13: The name tf.global_variables_initializer is deprecated. Please use tf.compat.v1.global_variables_initializer instead.

add: (Add): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 15:27:09.746145: I tensorflow/core/common_runtime/placer.cc:54] add: (Add)/job:localhost/replica:0/task:0/device:CPU:0

init: (NoOp): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 15:27:09.746214: I tensorflow/core/common_runtime/placer.cc:54] init: (NoOp)/job:localhost/replica:0/task:0/device:CPU:0

a: (Const): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 15:27:09.746257: I tensorflow/core/common_runtime/placer.cc:54] a: (Const)/job:localhost/replica:0/task:0/device:CPU:0

b: (Const): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 15:27:09.746294: I tensorflow/core/common_runtime/placer.cc:54] b: (Const)/job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 15:27:09.748161: W tensorflow/compiler/jit/mark_for_compilation_pass.cc:1412] (One-time warning): Not using XLA:CPU for cluster because envvar TF_XLA_FLAGS=--tf_xla_cpu_global_jit was not set. If you want XLA:CPU, either set that envvar, or use experimental_jit_scope to enable XLA:CPU. To confirm that XLA is active, pass --vmodule=xla_compilation_cache=1 (as a proper command-line flag, not via TF_XLA_FLAGS) or set the envvar XLA_FLAGS=--xla_hlo_profile.

[2. 4. 6.]

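The rest is omitted. The key point is that the run of "Could not dlopen library" messages means the CUDA 10.0 libraries cannot be found, so TensorFlow skips registering the GPUs and every op ends up on the CPU.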
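In a log this long the relevant lines are easy to miss. As a sketch, the missing libraries can be pulled out of the saved log text with a regular expression; the function name missing_cuda_libraries is my own, and the pattern is based only on the wording of the messages shown above.

```python
import re

# Matches lines such as:
#   Could not dlopen library 'libcudart.so.10.0'; dlerror: ...
_DLOPEN_FAILED = re.compile(r"Could not dlopen library '([^']+)'")


def missing_cuda_libraries(log_text):
    """Return the libraries TensorFlow failed to load, in order, without repeats."""
    missing = []
    for name in _DLOPEN_FAILED.findall(log_text):
        if name not in missing:
            missing.append(name)
    return missing
```

The version suffix in the names (.so.10.0) also shows which CUDA release this TensorFlow build expects.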

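The CUDA and cuDNN versions that match each TensorFlow version (image source: https://www.tensorflow.org/install/source#gpu):

For TensorFlow 1.14 that table lists CUDA 10.0 and cuDNN 7.4, so first install the required cudnn and cuda: conda install -c conda-forge cudatoolkit=10.0 cudnn=7.4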
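Since this post now uses two different TensorFlow versions, the pairing can be written down as a small lookup. This sketch contains only the two combinations that appear in the conda commands of this post; CUDA_FOR_TF and conda_install_command are hypothetical names, and other versions should be looked up in the official table.

```python
# Version pins copied from the two conda commands used in this post.
CUDA_FOR_TF = {
    "2.9": {"cudatoolkit": "11.2", "cudnn": "8.1.0"},
    "1.14": {"cudatoolkit": "10.0", "cudnn": "7.4"},
}


def conda_install_command(tf_version):
    """Build the conda command for the CUDA libraries matching `tf_version`.

    Raises KeyError for versions that are not in CUDA_FOR_TF.
    """
    key = ".".join(tf_version.split(".")[:2])  # "2.9.0" -> "2.9"
    pins = CUDA_FOR_TF[key]
    return "conda install -c conda-forge cudatoolkit={cudatoolkit} cudnn={cudnn}".format(**pins)


if __name__ == "__main__":
    print(conda_install_command("1.14"))
```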

The conda install command failed with a very strange error:

Collecting package metadata (current_repodata.json): done

Solving environment: failed with initial frozen solve. Retrying with flexible solve.

Collecting package metadata (repodata.json): failed

# >>>>>>>>>>>>>>>>>>>>>> ERROR REPORT <<<<<<<<<<<<<<<<<<<<<<

Traceback (most recent call last):

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 700, in _update_chunk_length

self.chunk_left = int(line, 16)

ValueError: invalid literal for int() with base 16: b''

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 441, in _error_catcher

yield

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 767, in read_chunked

self._update_chunk_length()

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 704, in _update_chunk_length

raise InvalidChunkLength(self, line)

urllib3.exceptions.InvalidChunkLength: InvalidChunkLength(got length b'', 0 bytes read)

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "anaconda3/lib/python3.9/site-packages/requests/models.py", line 760, in generate

for chunk in self.raw.stream(chunk_size, decode_content=True):

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 575, in stream

for line in self.read_chunked(amt, decode_content=decode_content):

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 796, in read_chunked

self._original_response.close()

File "anaconda3/lib/python3.9/contextlib.py", line 137, in __exit__

self.gen.throw(typ, value, traceback)

File "anaconda3/lib/python3.9/site-packages/urllib3/response.py", line 458, in _error_catcher

raise ProtocolError("Connection broken: %r" % e, e)

urllib3.exceptions.ProtocolError: ("Connection broken: InvalidChunkLength(got length b'', 0 bytes read)", InvalidChunkLength(got length b'', 0 bytes read))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "anaconda3/lib/python3.9/site-packages/conda/exceptions.py", line 1114, in __call__

return func(*args, **kwargs)

File "anaconda3/lib/python3.9/site-packages/conda/cli/main.py", line 86, in main_subshell

exit_code = do_call(args, p)

File "anaconda3/lib/python3.9/site-packages/conda/cli/conda_argparse.py", line 90, in do_call

return getattr(module, func_name)(args, parser)

File "anaconda3/lib/python3.9/site-packages/conda/cli/main_install.py", line 20, in execute

install(args, parser, 'install')

File "anaconda3/lib/python3.9/site-packages/conda/cli/install.py", line 259, in install

unlink_link_transaction = solver.solve_for_transaction(

File "anaconda3/lib/python3.9/site-packages/conda/core/solve.py", line 152, in solve_for_transaction

unlink_precs, link_precs = self.solve_for_diff(update_modifier, deps_modifier,

File "anaconda3/lib/python3.9/site-packages/conda/core/solve.py", line 195, in solve_for_diff

final_precs = self.solve_final_state(update_modifier, deps_modifier, prune, ignore_pinned,

File "anaconda3/lib/python3.9/site-packages/conda/core/solve.py", line 300, in solve_final_state

ssc = self._collect_all_metadata(ssc)

File "anaconda3/lib/python3.9/site-packages/conda/common/io.py", line 86, in decorated

return f(*args, **kwds)

File "anaconda3/lib/python3.9/site-packages/conda/core/solve.py", line 463, in _collect_all_metadata

index, r = self._prepare(prepared_specs)

File "anaconda3/lib/python3.9/site-packages/conda/core/solve.py", line 1058, in _prepare

reduced_index = get_reduced_index(self.prefix, self.channels,

File "anaconda3/lib/python3.9/site-packages/conda/core/index.py", line 287, in get_reduced_index

new_records = SubdirData.query_all(spec, channels=channels, subdirs=subdirs,

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 139, in query_all

result = tuple(concat(executor.map(subdir_query, channel_urls)))

File "anaconda3/lib/python3.9/concurrent/futures/_base.py", line 609, in result_iterator

yield fs.pop().result()

File "anaconda3/lib/python3.9/concurrent/futures/_base.py", line 446, in result

return self.__get_result()

File "anaconda3/lib/python3.9/concurrent/futures/_base.py", line 391, in __get_result

raise self._exception

File "anaconda3/lib/python3.9/concurrent/futures/thread.py", line 58, in run

result = self.fn(*self.args, **self.kwargs)

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 131, in <lambda>

subdir_query = lambda url: tuple(SubdirData(Channel(url), repodata_fn=repodata_fn).query(

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 144, in query

self.load()

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 209, in load

_internal_state = self._load()

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 374, in _load

raw_repodata_str = fetch_repodata_remote_request(

File "anaconda3/lib/python3.9/site-packages/conda/core/subdir_data.py", line 700, in fetch_repodata_remote_request

resp = session.get(join_url(url, filename), headers=headers, proxies=session.proxies,

File "anaconda3/lib/python3.9/site-packages/requests/sessions.py", line 542, in get

return self.request('GET', url, **kwargs)

File "anaconda3/lib/python3.9/site-packages/requests/sessions.py", line 529, in request

resp = self.send(prep, **send_kwargs)

File "anaconda3/lib/python3.9/site-packages/requests/sessions.py", line 687, in send

r.content

File "anaconda3/lib/python3.9/site-packages/requests/models.py", line 838, in content

self._content = b''.join(self.iter_content(CONTENT_CHUNK_SIZE)) or b''

File "anaconda3/lib/python3.9/site-packages/requests/models.py", line 763, in generate

raise ChunkedEncodingError(e)

requests.exceptions.ChunkedEncodingError: ("Connection broken: InvalidChunkLength(got length b'', 0 bytes read)", InvalidChunkLength(got length b'', 0 bytes read))

`$ /home/wanghuijuan/anaconda3/bin/conda install -c conda-forge cudatoolkit=10.0 cudnn=7.4`

environment variables:

CIO_TEST=<not set>

CONDA_DEFAULT_ENV=

CONDA_EXE=anaconda3/bin/conda

CONDA_PREFIX=

CONDA_PREFIX_1=anaconda3

CONDA_PREFIX_2=

CONDA_PROMPT_MODIFIER=()

CONDA_PYTHON_EXE=anaconda3/bin/python

CONDA_ROOT=anaconda3

CONDA_SHLVL=3

CURL_CA_BUNDLE=<not set>

PATH=/anaconda3/condabin:/home/wanghuijuan/.vscode

-server/bin/6d9b74a70ca9c7733b29f0456fd8195364076dda/bin/remote-cli:/u

sr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:

/usr/local/games:/snap/bin

REQUESTS_CA_BUNDLE=<not set>

SSL_CERT_FILE=<not set>

active environment :

active env location :

shell level : 3

user config file : .condarc

populated config files :

conda version : 4.13.0

conda-build version : 3.21.8

python version : 3.9.12.final.0

virtual packages : __cuda=11.4=0

__linux=4.15.0=0

__glibc=2.27=0

__unix=0=0

__archspec=1=x86_64

base environment :anaconda3 (writable)

conda av data dir :anaconda3/etc/conda

conda av metadata url : None

channel URLs : https://conda.anaconda.org/conda-forge/linux-64

https://conda.anaconda.org/conda-forge/noarch

https://repo.anaconda.com/pkgs/main/linux-64

https://repo.anaconda.com/pkgs/main/noarch

https://repo.anaconda.com/pkgs/r/linux-64

https://repo.anaconda.com/pkgs/r/noarch

package cache : /home/wanghuijuan/anaconda3/pkgs

/home/wanghuijuan/.conda/pkgs

envs directories : /home/wanghuijuan/anaconda3/envs

/home/wanghuijuan/.conda/envs

platform : linux-64

user-agent : conda/4.13.0 requests/2.27.1 CPython/3.9.12 Linux/4.15.0-136-generic ubuntu/18.04.4 glibc/2.27

UID:GID : 1018:1014

netrc file : None

offline mode : False

An unexpected error has occurred. Conda has prepared the above report.

If submitted, this report will be used by core maintainers to improve

future releases of conda.

Would you like conda to send this report to the core maintainers?

[y/N]: y

Upload did not complete.

Thank you for helping to improve conda.

Opt-in to always sending reports (and not see this message again)

by running

$ conda config --set report_errors true

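I did not know what had gone wrong (the traceback ends in a ChunkedEncodingError with "Connection broken", which suggests a network failure while conda was downloading repodata.json rather than a problem with the packages themselves), so I switched to another way of installing (see TensorFlow-gpu安装和测试(TensorFlow-gpu1.14+Cuda10)_爱学习的小龙的博客-CSDN博客_tensorflowgpu测试):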

wget -P files/install_packages https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/linux-64/cudatoolkit-10.0.130-0.conda

conda install files/install_packages/cudatoolkit-10.0.130-0.conda

Run the Python code again. I omit the part of the output that is the same as before and start from where it differs:

2022-08-17 16:15:43.407219: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcudart.so.10.0

2022-08-17 16:15:43.409338: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcublas.so.10.0

2022-08-17 16:15:43.411111: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcufft.so.10.0

2022-08-17 16:15:43.411878: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcurand.so.10.0

2022-08-17 16:15:43.415478: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcusolver.so.10.0

2022-08-17 16:15:43.418072: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcusparse.so.10.0

2022-08-17 16:15:43.424901: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcudnn.so.7

2022-08-17 16:15:43.435064: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1763] Adding visible gpu devices: 0, 1, 2, 3

2022-08-17 16:15:43.435492: I tensorflow/stream_executor/platform/default/dso_loader.cc:42] Successfully opened dynamic library libcudart.so.10.0

2022-08-17 16:15:43.441476: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1181] Device interconnect StreamExecutor with strength 1 edge matrix:

2022-08-17 16:15:43.442070: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1187] 0 1 2 3

2022-08-17 16:15:43.442448: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 0: N Y Y Y

2022-08-17 16:15:43.443431: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 1: Y N Y Y

2022-08-17 16:15:43.444206: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 2: Y Y N Y

2022-08-17 16:15:43.444586: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1200] 3: Y Y Y N

2022-08-17 16:15:43.452440: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1326] Created TensorFlow device (/job:localhost/replica:0/task:0/device:GPU:0 with 2446 MB memory) -> physical GPU (device: 0, name: Tesla T4, pci bus id: 0000:3b:00.0, compute capability: 7.5)

2022-08-17 16:15:43.462938: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1326] Created TensorFlow device (/job:localhost/replica:0/task:0/device:GPU:1 with 5244 MB memory) -> physical GPU (device: 1, name: Tesla T4, pci bus id: 0000:5e:00.0, compute capability: 7.5)

2022-08-17 16:15:43.469831: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1326] Created TensorFlow device (/job:localhost/replica:0/task:0/device:GPU:2 with 14259 MB memory) -> physical GPU (device: 2, name: Tesla T4, pci bus id: 0000:b1:00.0, compute capability: 7.5)

2022-08-17 16:15:43.483509: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1326] Created TensorFlow device (/job:localhost/replica:0/task:0/device:GPU:3 with 14259 MB memory) -> physical GPU (device: 3, name: Tesla T4, pci bus id: 0000:d9:00.0, compute capability: 7.5)

Device mapping:

/job:localhost/replica:0/task:0/device:XLA_CPU:0 -> device: XLA_CPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:0 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:1 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:2 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:3 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:GPU:0 -> device: 0, name: Tesla T4, pci bus id: 0000:3b:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:1 -> device: 1, name: Tesla T4, pci bus id: 0000:5e:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:2 -> device: 2, name: Tesla T4, pci bus id: 0000:b1:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:3 -> device: 3, name: Tesla T4, pci bus id: 0000:d9:00.0, compute capability: 7.5

2022-08-17 16:15:43.490300: I tensorflow/core/common_runtime/direct_session.cc:296] Device mapping:

/job:localhost/replica:0/task:0/device:XLA_CPU:0 -> device: XLA_CPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:0 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:1 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:2 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:XLA_GPU:3 -> device: XLA_GPU device

/job:localhost/replica:0/task:0/device:GPU:0 -> device: 0, name: Tesla T4, pci bus id: 0000:3b:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:1 -> device: 1, name: Tesla T4, pci bus id: 0000:5e:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:2 -> device: 2, name: Tesla T4, pci bus id: 0000:b1:00.0, compute capability: 7.5

/job:localhost/replica:0/task:0/device:GPU:3 -> device: 3, name: Tesla T4, pci bus id: 0000:d9:00.0, compute capability: 7.5

WARNING:tensorflow:From /home/wanghuijuan/whj_code1/trytf1.py:13: The name tf.global_variables_initializer is deprecated. Please use tf.compat.v1.global_variables_initializer instead.

add: (Add): /job:localhost/replica:0/task:0/device:GPU:2

2022-08-17 16:15:43.495600: I tensorflow/core/common_runtime/placer.cc:54] add: (Add)/job:localhost/replica:0/task:0/device:GPU:2

init: (NoOp): /job:localhost/replica:0/task:0/device:GPU:0

2022-08-17 16:15:43.495642: I tensorflow/core/common_runtime/placer.cc:54] init: (NoOp)/job:localhost/replica:0/task:0/device:GPU:0

a: (Const): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 16:15:43.495664: I tensorflow/core/common_runtime/placer.cc:54] a: (Const)/job:localhost/replica:0/task:0/device:CPU:0

b: (Const): /job:localhost/replica:0/task:0/device:CPU:0

2022-08-17 16:15:43.495682: I tensorflow/core/common_runtime/placer.cc:54] b: (Const)/job:localhost/replica:0/task:0/device:CPU:0

[2. 4. 6.]

When running TensorFlow code, put the following at the top of the script:

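import os

# Set these before TensorFlow initializes CUDA

os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"  # number the GPUs the same way nvidia-smi does

os.environ["CUDA_VISIBLE_DEVICES"] = "2"  # expose only GPU 2 to this process

import tensorflow as tf

config = tf.ConfigProto()

config.gpu_options.allow_growth = True  # allocate GPU memory on demand; pass config to tf.Session(config=config)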
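The snippet only takes effect once the config object is actually handed to a session. As a sketch of how the pieces fit together, the two helpers below wrap the same settings; select_gpu and make_session are hypothetical names, and the tf.compat.v1 aliases are used because the deprecation warnings in the log recommend them.

```python
import os


def select_gpu(index):
    """Expose a single GPU to this process; call before TensorFlow touches CUDA."""
    os.environ["CUDA_DEVICE_ORDER"] = "PCI_BUS_ID"
    os.environ["CUDA_VISIBLE_DEVICES"] = str(index)


def make_session():
    """Create a TF1-style session that allocates GPU memory on demand."""
    import tensorflow as tf  # imported lazily so that select_gpu() can run first

    config = tf.compat.v1.ConfigProto()
    config.gpu_options.allow_growth = True
    return tf.compat.v1.Session(config=config)
```

A script would call select_gpu(2) first and then use the session returned by make_session() for its sess.run(...) calls.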

Keras gets installed automatically.

Here is a real example using bert4keras code:

pip install bert4keras

wget -P /data/pretrained_model/chinese_L-12_H-768_A-12 https://storage.googleapis.com/bert_models/2018_11_03/chinese_L-12_H-768_A-12.zip

unzip /data/pretrained_model/chinese_L-12_H-768_A-12/chinese_L-12_H-768_A-12.zip -d /data/pretrained_model/chinese_L-12_H-768_A-12

Code (adapted from https://github.com/bojone/bert4keras/blob/master/examples/basic_extract_features.py):

import os

os.environ["CUDA_VISIBLE_DEVICES"] = "2"

import time

import numpy as np

from bert4keras.backend import keras

from bert4keras.models import build_transformer_model

from bert4keras.tokenizers import Tokenizer

from bert4keras.snippets import to_array

config_path = '/data/pretrained_model/chinese_L-12_H-768_A-12/chinese_L-12_H-768_A-12/bert_config.json'

checkpoint_path = '/data/pretrained_model/chinese_L-12_H-768_A-12/chinese_L-12_H-768_A-12/bert_model.ckpt'

dict_path = '/data/pretrained_model/chinese_L-12_H-768_A-12/chinese_L-12_H-768_A-12/vocab.txt'

tokenizer = Tokenizer(dict_path, do_lower_case=True)

model = build_transformer_model(config_path, checkpoint_path)

token_ids, segment_ids = tokenizer.encode(u'语言模型')

token_ids, segment_ids = to_array([token_ids], [segment_ids])

print('\n ===== predicting =====\n')

print(model.predict([token_ids, segment_ids]))

"""

Output:

[[[-0.63251007 0.2030236 0.07936534 ... 0.49122632 -0.20493352

0.2575253 ]

[-0.7588351 0.09651865 1.0718756 ... -0.6109694 0.04312154

0.03881441]

[ 0.5477043 -0.792117 0.44435206 ... 0.42449304 0.41105673

0.08222899]

[-0.2924238 0.6052722 0.49968526 ... 0.8604137 -0.6533166

0.5369075 ]

[-0.7473459 0.49431565 0.7185162 ... 0.3848612 -0.74090636

0.39056838]

[-0.8741375 -0.21650358 1.338839 ... 0.5816864 -0.4373226

0.56181806]]]

"""

time.sleep(100)

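The time.sleep() call keeps the process alive so that nvidia-smi can be used to confirm that the program only occupies memory on GPU 2 and to see how much GPU memory it takes (about 14 GB, which is a lot). TensorFlow 1.x reserves nearly all the memory of every visible GPU by default, and this script does not set allow_growth, which explains the size.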
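Instead of reading the nvidia-smi table by eye, the per-GPU memory use can be queried in CSV form and parsed. This is a sketch under the assumption that nvidia-smi is on PATH; parse_gpu_memory and query_gpu_memory are names I made up, while --query-gpu and --format are regular nvidia-smi options.

```python
import shutil
import subprocess


def parse_gpu_memory(csv_text):
    """Parse 'index, memory.used' CSV rows into {gpu_index: used_MiB}."""
    usage = {}
    for line in csv_text.strip().splitlines():
        index, used = (field.strip() for field in line.split(","))
        usage[int(index)] = int(used)
    return usage


def query_gpu_memory():
    """Ask nvidia-smi for per-GPU memory use; returns None if it is not installed."""
    if shutil.which("nvidia-smi") is None:
        return None
    completed = subprocess.run(
        ["nvidia-smi", "--query-gpu=index,memory.used", "--format=csv,noheader,nounits"],
        stdout=subprocess.PIPE,
        universal_newlines=True,
        check=True,
    )
    return parse_gpu_memory(completed.stdout)


if __name__ == "__main__":
    print(query_gpu_memory())
```

Run from a second terminal while the script above is sleeping, this should show a large value only for the GPU the script was pinned to.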

3. Other references used while writing this post

- tensorflow 1.14指定gpu运行设置_愚昧之山绝望之谷开悟之坡的博客-CSDN博客_tensorflow指定gpu
