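The PyTorch framework has a very important and handy package: torchvision. As the name suggests, it is mainly about computer vision (CV). It consists of three main subpackages: torchvision.datasets, torchvision.models, and torchvision.transforms.

For details, see the official docs: https://pytorch.org/docs/master/torchvision

The code is on GitHub: https://github.com/pytorch/vision

The torchvision.models package contains commonly used classic network architectures such as alexnet, densenet, inception, resnet, squeezenet, and vgg. It also provides pretrained models, so both the architecture and the pretrained weights can be loaded with a simple call.

Today we will walk through the source code of the DenseNet implementation. If you are not very familiar with DenseNet, see my paper notes here: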

https://blog.csdn.net/sinat_33487968/article/details/83684453

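DenseNet is made up of Dense Blocks, and each Dense Block is made up of DenseLayers. Below is the _DenseLayer class. Every convolution is preceded by batchnorm and relu, and the two convolutions are a 1x1 convolution followed by a 3x3 convolution. The bn_size parameter is the multiplicative factor of the bottleneck layer: the bottleneck outputs bn_size * growth_rate feature maps. The bottleneck layer is the 1x1 convolution in front of the 3x3 convolution; it reduces the number of feature maps fed into the 3x3 convolution. drop_rate is the dropout probability.

Note that the return value is torch.cat([x, new_features], 1), i.e. the input itself and the newly extracted features stacked together along the channel dimension.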
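To get a feel for what the bottleneck buys, here is a rough sketch that counts convolution weights with and without the 1x1 layer. The helper names are hypothetical (they are not part of torchvision), BatchNorm parameters are ignored, and the "plain" layer is only an imagined alternative for comparison.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Number of weights in a bias-free 2D convolution."""
    return in_channels * out_channels * kernel_size * kernel_size


def dense_layer_weights(num_input_features, growth_rate=32, bn_size=4):
    """Weights of conv1 (1x1 bottleneck) plus conv2 (3x3) in one _DenseLayer."""
    bottleneck = bn_size * growth_rate
    return (conv_weights(num_input_features, bottleneck, 1)
            + conv_weights(bottleneck, growth_rate, 3))


def plain_layer_weights(num_input_features, growth_rate=32):
    """Hypothetical layer without a bottleneck: a single 3x3 conv to growth_rate."""
    return conv_weights(num_input_features, growth_rate, 3)


for channels in (64, 256, 1024):
    print(channels, dense_layer_weights(channels), plain_layer_weights(channels))
# 64 45056 18432
# 256 69632 73728
# 1024 167936 294912
```

With these defaults the bottleneck costs more for the first few layers but pays off once the concatenated input has grown to a few hundred feature maps, which is the usual case deeper in the network.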

    class _DenseLayer(nn.Sequential):
        def __init__(self, num_input_features, growth_rate, bn_size, drop_rate):
            super(_DenseLayer, self).__init__()
            # BN -> ReLU -> 1x1 conv (bottleneck): outputs bn_size * growth_rate feature maps
            self.add_module('norm1', nn.BatchNorm2d(num_input_features)),
            self.add_module('relu1', nn.ReLU(inplace=True)),
            self.add_module('conv1', nn.Conv2d(num_input_features, bn_size *
                            growth_rate, kernel_size=1, stride=1, bias=False)),
            # BN -> ReLU -> 3x3 conv: outputs growth_rate new feature maps
            self.add_module('norm2', nn.BatchNorm2d(bn_size * growth_rate)),
            self.add_module('relu2', nn.ReLU(inplace=True)),
            self.add_module('conv2', nn.Conv2d(bn_size * growth_rate, growth_rate,
                            kernel_size=3, stride=1, padding=1, bias=False)),
            self.drop_rate = drop_rate

        def forward(self, x):
            new_features = super(_DenseLayer, self).forward(x)
            if self.drop_rate > 0:
                new_features = F.dropout(new_features, p=self.drop_rate, training=self.training)
            # Concatenate the input and the new features along the channel dimension
            return torch.cat([x, new_features], 1)
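The effect of the final torch.cat can be checked on its own. The snippet below is a minimal sketch with random tensors standing in for the layer input and the convolution output; the shapes (64 input feature maps, growth_rate of 32, 8x8 spatial size) are assumptions chosen for illustration.

```python
import torch

x = torch.randn(2, 64, 8, 8)             # layer input: 64 feature maps
new_features = torch.randn(2, 32, 8, 8)  # stand-in for the conv output (growth_rate = 32)

# Concatenate along dim 1, the channel dimension of an (N, C, H, W) tensor
out = torch.cat([x, new_features], 1)

print(out.shape)                      # torch.Size([2, 96, 8, 8])
print(torch.equal(out[:, :64], x))    # True: the input is carried through unchanged
```

The input occupies the first 64 channels of the result untouched, so each later layer sees the original features as well as everything learned in between.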

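With DenseLayer in hand, we can use it to build a Dense Block. The parameters mean the following: growth_rate (int) is how many new feature maps each layer adds (k in the paper), num_input_features (int) is the number of input feature maps, and num_layers (int) is how many DenseLayers the Dense Block contains.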

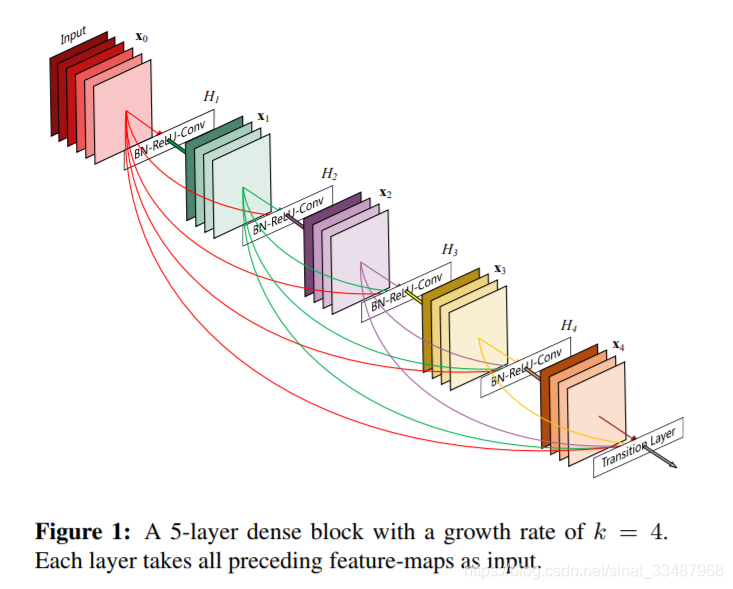

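The for loop adds DenseLayers one at a time according to num_layers. Note that num_input_features differs for each layer, because the number of feature maps grows by growth_rate after every DenseLayer. More intuitively, each DenseLayer passes both its own input and the features it has learned on to the next DenseLayer, so the output keeps growing. The figure below does not show this data flow, because it depicts the convolution layers rather than the data itself.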

    class _DenseBlock(nn.Sequential):
        def __init__(self, num_layers, num_input_features, bn_size, growth_rate, drop_rate):
            super(_DenseBlock, self).__init__()
            for i in range(num_layers):
                layer = _DenseLayer(num_input_features + i * growth_rate, growth_rate, bn_size, drop_rate)
                self.add_module('denselayer%d' % (i + 1), layer)
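The channel bookkeeping inside one block can be reproduced without any tensors. This is a small sketch; dense_block_channels is a hypothetical helper, and the numbers are those of the first block of DenseNet121 (6 layers, 64 input feature maps, growth_rate of 32).

```python
def dense_block_channels(num_layers, num_input_features, growth_rate):
    """Return the input channel count of every DenseLayer and the block's output count."""
    layer_inputs = [num_input_features + i * growth_rate for i in range(num_layers)]
    block_output = num_input_features + num_layers * growth_rate
    return layer_inputs, block_output


layer_inputs, block_output = dense_block_channels(6, 64, 32)
print(layer_inputs)  # [64, 96, 128, 160, 192, 224]
print(block_output)  # 256
```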

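See the figure below for the structure of a DenseBlock.

Between Dense Blocks, the paper adds a Transition layer. Its purpose is to make the model more compact by reducing the number of feature maps at the transition. Here num_input_features is compressed directly to num_output_features with a single 1x1 convolution, and the 2x2 average pooling that follows halves the spatial size.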

    class _Transition(nn.Sequential):
        def __init__(self, num_input_features, num_output_features):
            super(_Transition, self).__init__()
            self.add_module('norm', nn.BatchNorm2d(num_input_features))
            self.add_module('relu', nn.ReLU(inplace=True))
            self.add_module('conv', nn.Conv2d(num_input_features, num_output_features,
                                              kernel_size=1, stride=1, bias=False))
            self.add_module('pool', nn.AvgPool2d(kernel_size=2, stride=2))

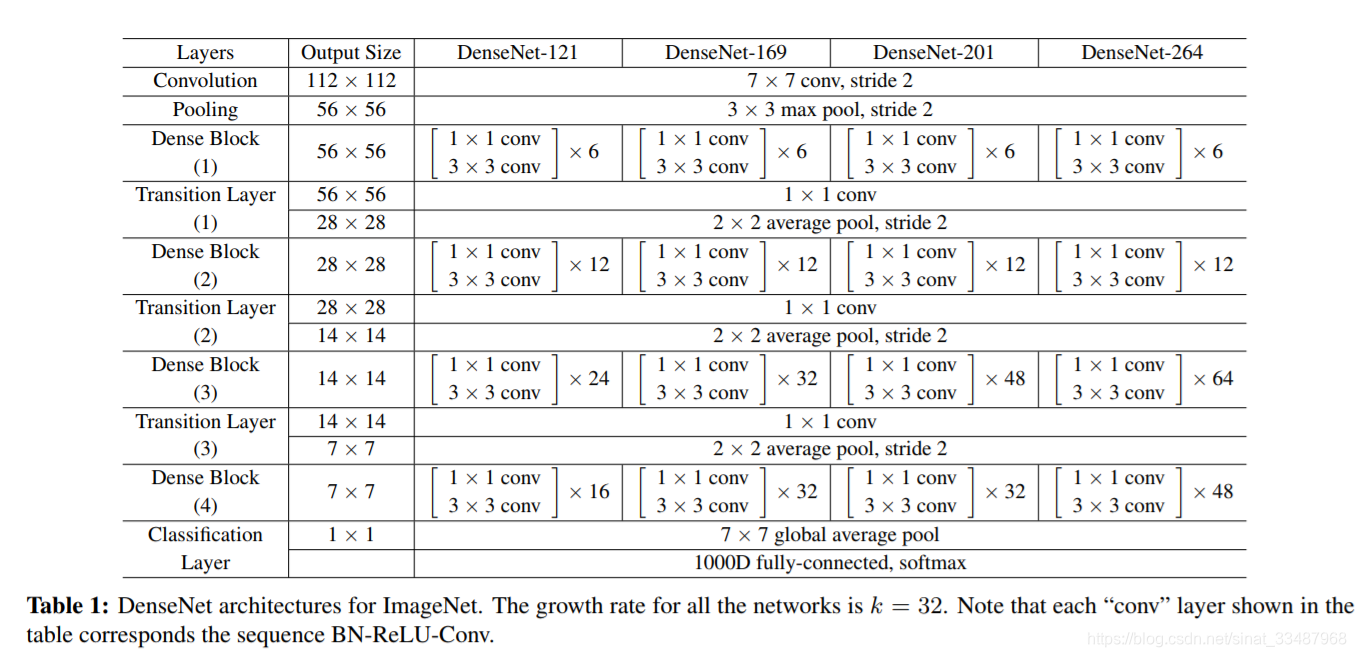

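Now we can assemble a DenseNet. DenseNet comes in several versions; see the architectures given in the paper for how they differ:

What all versions share is the start: a 7x7 convolution and a 3x3 max pooling, with batchnorm and relu in between, all written into an OrderedDict. Then the code loops to build the DenseBlocks and Transition layers. How many DenseLayers each Dense Block has in a given version is determined by block_config (a list of 4 integers).

For the Transition, the code first checks whether this is the last DenseBlock (the last DenseBlock does not need one; every earlier DenseBlock is followed by a Transition layer) and then adds a Transition layer whose output has half as many feature maps as its input. This matches the compression factor θ = 0.5 of DenseNet-BC in the paper and was presumably chosen based on experimental results. Intuitively, it means that no matter how many DenseBlocks we stack, the output stays compact and its depth does not grow without bound.

The rest is routine: add a final batchnorm and a linear classifier. After that, the weights of each layer are initialized; convolution kernels use kaiming_normal, see the PyTorch website for details.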
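The growth and halving of the feature count described above can be traced with plain arithmetic. This is a sketch only: trace_channels is a hypothetical helper that mirrors the num_features updates in DenseNet.__init__, shown here with the DenseNet121 settings.

```python
def trace_channels(block_config, num_init_features, growth_rate):
    """Follow num_features through every dense block and transition."""
    num_features = num_init_features
    trace = [('stem', num_features)]
    for i, num_layers in enumerate(block_config):
        # Each DenseLayer in the block adds growth_rate feature maps
        num_features = num_features + num_layers * growth_rate
        trace.append(('denseblock%d' % (i + 1), num_features))
        if i != len(block_config) - 1:
            # Every block except the last is followed by a halving transition
            num_features = num_features // 2
            trace.append(('transition%d' % (i + 1), num_features))
    return trace


for name, channels in trace_channels((6, 12, 24, 16), 64, 32):
    print(name, channels)
# stem 64
# denseblock1 256
# transition1 128
# denseblock2 512
# transition2 256
# denseblock3 1024
# transition3 512
# denseblock4 1024
```

The last value, 1024, is the number of features that reaches the final batchnorm and the linear classifier in this configuration.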

    class DenseNet(nn.Module):
        r"""Densenet-BC model class, based on
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            growth_rate (int) - how many filters to add each layer (`k` in paper)
            block_config (list of 4 ints) - how many layers in each pooling block
            num_init_features (int) - the number of filters to learn in the first convolution layer
            bn_size (int) - multiplicative factor for number of bottle neck layers
              (i.e. bn_size * k features in the bottleneck layer)
            drop_rate (float) - dropout rate after each dense layer
            num_classes (int) - number of classification classes
        """

        def __init__(self, growth_rate=32, block_config=(6, 12, 24, 16),
                     num_init_features=64, bn_size=4, drop_rate=0, num_classes=1000):

            super(DenseNet, self).__init__()

            # First convolution
            self.features = nn.Sequential(OrderedDict([
                ('conv0', nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),
                ('norm0', nn.BatchNorm2d(num_init_features)),
                ('relu0', nn.ReLU(inplace=True)),
                ('pool0', nn.MaxPool2d(kernel_size=3, stride=2, padding=1)),
            ]))

            # Each denseblock
            num_features = num_init_features
            for i, num_layers in enumerate(block_config):
                block = _DenseBlock(num_layers=num_layers, num_input_features=num_features,
                                    bn_size=bn_size, growth_rate=growth_rate, drop_rate=drop_rate)
                self.features.add_module('denseblock%d' % (i + 1), block)
                num_features = num_features + num_layers * growth_rate
                if i != len(block_config) - 1:
                    trans = _Transition(num_input_features=num_features, num_output_features=num_features // 2)
                    self.features.add_module('transition%d' % (i + 1), trans)
                    num_features = num_features // 2

            # Final batch norm
            self.features.add_module('norm5', nn.BatchNorm2d(num_features))

            # Linear layer
            self.classifier = nn.Linear(num_features, num_classes)

            # Official init from torch repo.
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    nn.init.kaiming_normal_(m.weight)
                elif isinstance(m, nn.BatchNorm2d):
                    nn.init.constant_(m.weight, 1)
                    nn.init.constant_(m.bias, 0)
                elif isinstance(m, nn.Linear):
                    nn.init.constant_(m.bias, 0)

        def forward(self, x):
            features = self.features(x)
            out = F.relu(features, inplace=True)
            out = F.avg_pool2d(out, kernel_size=7, stride=1).view(features.size(0), -1)
            out = self.classifier(out)
            return out
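The kernel_size=7 in forward is tied to the input resolution. The sketch below traces the spatial size through the stem and the three transitions using the standard output-size formula, assuming the default floor rounding of PyTorch's convolution and pooling layers; out_size and final_feature_size are hypothetical helper names.

```python
def out_size(size, kernel_size, stride, padding=0):
    """Output length of a conv/pool layer along one spatial dimension (floor mode)."""
    return (size + 2 * padding - kernel_size) // stride + 1


def final_feature_size(input_size, num_blocks=4):
    """Spatial size of the feature map that reaches F.avg_pool2d in forward."""
    size = out_size(input_size, kernel_size=7, stride=2, padding=3)  # conv0
    size = out_size(size, kernel_size=3, stride=2, padding=1)        # pool0
    for _ in range(num_blocks - 1):
        size = out_size(size, kernel_size=2, stride=2)               # AvgPool2d in each _Transition
    return size


print(final_feature_size(224))                  # 7
print(out_size(final_feature_size(224), 7, 1))  # 1
print(final_feature_size(256))                  # 8
print(out_size(final_feature_size(256), 7, 1))  # 2
```

For a 224x224 input the 7x7 average pooling reduces the map to 1x1, so the flattened vector has exactly num_features entries. For a 256x256 input the pooled map would be 2x2, and the flattened vector would no longer match the in_features of the classifier in this version of the code.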

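Now let's see how a concrete version is built, taking DenseNet121 as an example. block_config=(6, 12, 24, 16) is the set of parameters from the paper shown in the figure above. pretrained specifies whether to load pretrained weights. There is no need to worry about how those weights were obtained; just know that if pretrained is True, an already trained model is returned.

The other versions of DenseNet are much the same and differ mainly in block_config (densenet161 also uses a different growth_rate and num_init_features), so they are not listed separately here.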

    def densenet121(pretrained=False, **kwargs):
        r"""Densenet-121 model from
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            pretrained (bool): If True, returns a model pre-trained on ImageNet
        """
        model = DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 24, 16),
                         **kwargs)
        if pretrained:
            # '.'s are no longer allowed in module names, but previous _DenseLayer
            # has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.
            # They are also in the checkpoints in model_urls. This pattern is used
            # to find such keys.
            pattern = re.compile(
                r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')
            state_dict = model_zoo.load_url(model_urls['densenet121'])
            for key in list(state_dict.keys()):
                res = pattern.match(key)
                if res:
                    new_key = res.group(1) + res.group(2)
                    state_dict[new_key] = state_dict[key]
                    del state_dict[key]
            model.load_state_dict(state_dict)
        return model
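The key renaming in the pretrained branch can be tried without downloading a checkpoint. The sketch below applies the same regular expression to a toy dictionary; the keys are made-up examples in the old naming style, the string values are placeholders for tensors, and rename_keys is a hypothetical helper.

```python
import re

# Same pattern as in densenet121: group 1 ends at 'norm'/'relu'/'conv',
# group 2 starts at the layer index, and the dot between them is dropped.
pattern = re.compile(
    r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')


def rename_keys(state_dict):
    """Return a copy of state_dict with old-style keys such as 'norm.1' renamed to 'norm1'."""
    renamed = {}
    for key, value in state_dict.items():
        res = pattern.match(key)
        new_key = res.group(1) + res.group(2) if res else key
        renamed[new_key] = value
    return renamed


old_style = {
    'features.denseblock1.denselayer1.norm.1.weight': 'placeholder-a',
    'features.denseblock1.denselayer1.conv.2.weight': 'placeholder-b',
    'features.conv0.weight': 'placeholder-c',  # does not match, stays as it is
}
for key in rename_keys(old_style):
    print(key)
# features.denseblock1.denselayer1.norm1.weight
# features.denseblock1.denselayer1.conv2.weight
# features.conv0.weight
```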

Finally, here is the full source:

    import re
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.utils.model_zoo as model_zoo
    from collections import OrderedDict

    __all__ = ['DenseNet', 'densenet121', 'densenet169', 'densenet201', 'densenet161']

    model_urls = {
        'densenet121': 'https://download.pytorch.org/models/densenet121-a639ec97.pth',
        'densenet169': 'https://download.pytorch.org/models/densenet169-b2777c0a.pth',
        'densenet201': 'https://download.pytorch.org/models/densenet201-c1103571.pth',
        'densenet161': 'https://download.pytorch.org/models/densenet161-8d451a50.pth',
    }

    def densenet121(pretrained=False, **kwargs):
        r"""Densenet-121 model from
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            pretrained (bool): If True, returns a model pre-trained on ImageNet
        """
        model = DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 24, 16),
                         **kwargs)
        if pretrained:
            # '.'s are no longer allowed in module names, but previous _DenseLayer
            # has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.
            # They are also in the checkpoints in model_urls. This pattern is used
            # to find such keys.
            pattern = re.compile(
                r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')
            state_dict = model_zoo.load_url(model_urls['densenet121'])
            for key in list(state_dict.keys()):
                res = pattern.match(key)
                if res:
                    new_key = res.group(1) + res.group(2)
                    state_dict[new_key] = state_dict[key]
                    del state_dict[key]
            model.load_state_dict(state_dict)
        return model

    def densenet169(pretrained=False, **kwargs):
        r"""Densenet-169 model from
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            pretrained (bool): If True, returns a model pre-trained on ImageNet
        """
        model = DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 32, 32),
                         **kwargs)
        if pretrained:
            # '.'s are no longer allowed in module names, but previous _DenseLayer
            # has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.
            # They are also in the checkpoints in model_urls. This pattern is used
            # to find such keys.
            pattern = re.compile(
                r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')
            state_dict = model_zoo.load_url(model_urls['densenet169'])
            for key in list(state_dict.keys()):
                res = pattern.match(key)
                if res:
                    new_key = res.group(1) + res.group(2)
                    state_dict[new_key] = state_dict[key]
                    del state_dict[key]
            model.load_state_dict(state_dict)
        return model

    def densenet201(pretrained=False, **kwargs):
        r"""Densenet-201 model from
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            pretrained (bool): If True, returns a model pre-trained on ImageNet
        """
        model = DenseNet(num_init_features=64, growth_rate=32, block_config=(6, 12, 48, 32),
                         **kwargs)
        if pretrained:
            # '.'s are no longer allowed in module names, but previous _DenseLayer
            # has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.
            # They are also in the checkpoints in model_urls. This pattern is used
            # to find such keys.
            pattern = re.compile(
                r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')
            state_dict = model_zoo.load_url(model_urls['densenet201'])
            for key in list(state_dict.keys()):
                res = pattern.match(key)
                if res:
                    new_key = res.group(1) + res.group(2)
                    state_dict[new_key] = state_dict[key]
                    del state_dict[key]
            model.load_state_dict(state_dict)
        return model

    def densenet161(pretrained=False, **kwargs):
        r"""Densenet-161 model from
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            pretrained (bool): If True, returns a model pre-trained on ImageNet
        """
        model = DenseNet(num_init_features=96, growth_rate=48, block_config=(6, 12, 36, 24),
                         **kwargs)
        if pretrained:
            # '.'s are no longer allowed in module names, but previous _DenseLayer
            # has keys 'norm.1', 'relu.1', 'conv.1', 'norm.2', 'relu.2', 'conv.2'.
            # They are also in the checkpoints in model_urls. This pattern is used
            # to find such keys.
            pattern = re.compile(
                r'^(.*denselayer\d+\.(?:norm|relu|conv))\.((?:[12])\.(?:weight|bias|running_mean|running_var))$')
            state_dict = model_zoo.load_url(model_urls['densenet161'])
            for key in list(state_dict.keys()):
                res = pattern.match(key)
                if res:
                    new_key = res.group(1) + res.group(2)
                    state_dict[new_key] = state_dict[key]
                    del state_dict[key]
            model.load_state_dict(state_dict)
        return model

    class _DenseLayer(nn.Sequential):
        def __init__(self, num_input_features, growth_rate, bn_size, drop_rate):
            super(_DenseLayer, self).__init__()
            self.add_module('norm1', nn.BatchNorm2d(num_input_features)),
            self.add_module('relu1', nn.ReLU(inplace=True)),
            self.add_module('conv1', nn.Conv2d(num_input_features, bn_size *
                            growth_rate, kernel_size=1, stride=1, bias=False)),
            self.add_module('norm2', nn.BatchNorm2d(bn_size * growth_rate)),
            self.add_module('relu2', nn.ReLU(inplace=True)),
            self.add_module('conv2', nn.Conv2d(bn_size * growth_rate, growth_rate,
                            kernel_size=3, stride=1, padding=1, bias=False)),
            self.drop_rate = drop_rate

        def forward(self, x):
            new_features = super(_DenseLayer, self).forward(x)
            if self.drop_rate > 0:
                new_features = F.dropout(new_features, p=self.drop_rate, training=self.training)
            return torch.cat([x, new_features], 1)

    class _DenseBlock(nn.Sequential):
        def __init__(self, num_layers, num_input_features, bn_size, growth_rate, drop_rate):
            super(_DenseBlock, self).__init__()
            for i in range(num_layers):
                layer = _DenseLayer(num_input_features + i * growth_rate, growth_rate, bn_size, drop_rate)
                self.add_module('denselayer%d' % (i + 1), layer)

    class _Transition(nn.Sequential):
        def __init__(self, num_input_features, num_output_features):
            super(_Transition, self).__init__()
            self.add_module('norm', nn.BatchNorm2d(num_input_features))
            self.add_module('relu', nn.ReLU(inplace=True))
            self.add_module('conv', nn.Conv2d(num_input_features, num_output_features,
                                              kernel_size=1, stride=1, bias=False))
            self.add_module('pool', nn.AvgPool2d(kernel_size=2, stride=2))

    class DenseNet(nn.Module):
        r"""Densenet-BC model class, based on
        `"Densely Connected Convolutional Networks" <https://arxiv.org/pdf/1608.06993.pdf>`_

        Args:
            growth_rate (int) - how many filters to add each layer (`k` in paper)
            block_config (list of 4 ints) - how many layers in each pooling block
            num_init_features (int) - the number of filters to learn in the first convolution layer
            bn_size (int) - multiplicative factor for number of bottle neck layers
              (i.e. bn_size * k features in the bottleneck layer)
            drop_rate (float) - dropout rate after each dense layer
            num_classes (int) - number of classification classes
        """

        def __init__(self, growth_rate=32, block_config=(6, 12, 24, 16),
                     num_init_features=64, bn_size=4, drop_rate=0, num_classes=1000):

            super(DenseNet, self).__init__()

            # First convolution
            self.features = nn.Sequential(OrderedDict([
                ('conv0', nn.Conv2d(3, num_init_features, kernel_size=7, stride=2, padding=3, bias=False)),
                ('norm0', nn.BatchNorm2d(num_init_features)),
                ('relu0', nn.ReLU(inplace=True)),
                ('pool0', nn.MaxPool2d(kernel_size=3, stride=2, padding=1)),
            ]))

            # Each denseblock
            num_features = num_init_features
            for i, num_layers in enumerate(block_config):
                block = _DenseBlock(num_layers=num_layers, num_input_features=num_features,
                                    bn_size=bn_size, growth_rate=growth_rate, drop_rate=drop_rate)
                self.features.add_module('denseblock%d' % (i + 1), block)
                num_features = num_features + num_layers * growth_rate
                if i != len(block_config) - 1:
                    trans = _Transition(num_input_features=num_features, num_output_features=num_features // 2)
                    self.features.add_module('transition%d' % (i + 1), trans)
                    num_features = num_features // 2

            # Final batch norm
            self.features.add_module('norm5', nn.BatchNorm2d(num_features))

            # Linear layer
            self.classifier = nn.Linear(num_features, num_classes)

            # Official init from torch repo.
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    nn.init.kaiming_normal_(m.weight)
                elif isinstance(m, nn.BatchNorm2d):
                    nn.init.constant_(m.weight, 1)
                    nn.init.constant_(m.bias, 0)
                elif isinstance(m, nn.Linear):
                    nn.init.constant_(m.bias, 0)

        def forward(self, x):
            features = self.features(x)
            out = F.relu(features, inplace=True)
            out = F.avg_pool2d(out, kernel_size=7, stride=1).view(features.size(0), -1)
            out = self.classifier(out)
            return out
