This post covers the technical report from the Northwest Security team at CAAD 2018. The team placed third in the targeted attack track and fourth in the non-targeted attack track.

Title: Leverage Open-Source Information To Make An Effective Adversarial Attack Against Deep Learning Model

Link: CAAD_technical_report_team_NWSec

- Why studying adversarial examples matters:

- Since then, tremendous efforts have been made to explore this vulnerability and to improve the robustness of neural networks.

- On the other hand, competition has proven to be an effective way to boost learning on security-related topics.

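The team used the same method and concept in both tracks to take third place in targeted attack and fourth place in non-targeted attack at CAAD 2018.

Both the non-targeted and the targeted attack are based on multi-model BIM. The team also took second place in the offline competition held in Las Vegas.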

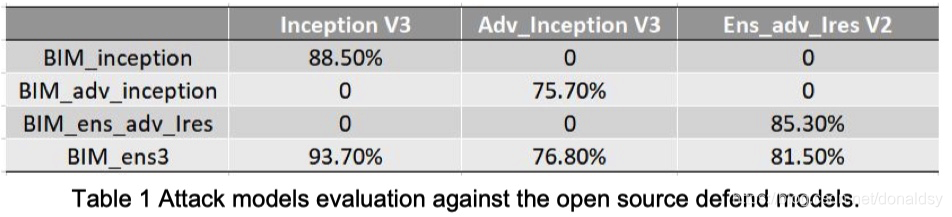

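- Test results of 4 attack methods against 3 defense methods:

All four attacks use BIM; they differ only in how the gradient is computed.

The first three attacks each compute the gradient against the corresponding defense model and attack that model. These are white-box attacks, but each one is effective only against its own model.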

But the white-box attack is only effective against the corresponding defense model, which means poor transferability. Fortunately, researchers have demonstrated that good transferability can be achieved by multi-model ensembling.

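After evaluating the publicly available adversarial attack methods, the team adopted the approach of the toshi_k team (5th place at NIPS 2017) and, building on it, optimized the code for model selection and hyperparameter tuning.

The key idea comes from the fused method for adversarial attacks proposed by sangxia.

The non-targeted attack defines the objective function as the distance to the original label, and the optimization goal is to increase that distance.

The targeted attack defines the objective function as the distance to the target label, and the optimization goal is to reduce that distance.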
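
To make the two objectives concrete, here is a minimal sketch of a single signed-gradient step in each mode. It assumes cross-entropy as the "distance to a label" and a toy linear classifier; the report summary above does not name the loss, and `cross_entropy_grad`, `step`, and `prob` are hypothetical helper names used only for illustration.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def logits_of(weights, x):
    # Toy linear classifier (an assumption): one weight row per class.
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def prob(weights, x, label):
    return softmax(logits_of(weights, x))[label]

def cross_entropy_grad(weights, x, label):
    """Gradient of -log softmax(W x)[label] with respect to x."""
    probs = softmax(logits_of(weights, x))
    # d loss / d logit_k = p_k - 1[k == label], then chain through W.
    dlogits = [p - (1.0 if k == label else 0.0) for k, p in enumerate(probs)]
    return [sum(dlogits[k] * weights[k][i] for k in range(len(weights)))
            for i in range(len(x))]

def sign(v):
    return (v > 0) - (v < 0)

def step(weights, x, label, alpha, targeted):
    """One signed-gradient step.

    targeted=False: label is the original label, so move up the loss.
    targeted=True:  label is the target label, so move down the loss.
    """
    grad = cross_entropy_grad(weights, x, label)
    direction = -1.0 if targeted else 1.0
    return [xi + direction * alpha * sign(g) for xi, g in zip(x, grad)]

W = [[1.0, 0.0], [0.0, 1.0]]   # two classes, two input features
x0 = [0.6, 0.4]                # currently classified as class 0
x_nontargeted = step(W, x0, label=0, alpha=0.1, targeted=False)
x_targeted = step(W, x0, label=1, alpha=0.1, targeted=True)
```

The only difference between the two modes is which label feeds the loss and which way the step goes, which is why a single code base can serve both tracks.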

Adversarially trained defense models usually have unsmooth gradients, which means there are many local minima acting like gradient traps. The toshi_k method applies a 2D Gaussian smoothing to the gradient in each iteration, which can effectively remove the local minima. The sangxia method, on the other hand, adds a random perturbation to the computed adversarial image, which increases the chance of the computation "jumping" out of the local gradient traps.
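
As a rough illustration of the smoothing step, the sketch below blurs a 2D gradient map with a separable Gaussian kernel in pure Python. The kernel width (`sigma`, `radius`) and the clamped border handling are assumptions; the summary above does not give the values toshi_k used.

```python
import math

def gaussian_kernel_1d(sigma, radius):
    """Normalized 1D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def smooth_rows(grid, kernel):
    """Convolve each row with the kernel, clamping indices at the border."""
    radius = len(kernel) // 2
    width = len(grid[0])
    return [
        [sum(kernel[k + radius] * row[min(max(j + k, 0), width - 1)]
             for k in range(-radius, radius + 1))
         for j in range(width)]
        for row in grid
    ]

def transpose(grid):
    return [list(col) for col in zip(*grid)]

def gaussian_smooth_2d(grid, sigma=1.0, radius=2):
    """Separable 2D Gaussian blur: smooth the rows, then the columns."""
    kernel = gaussian_kernel_1d(sigma, radius)
    rows_done = smooth_rows(grid, kernel)
    return transpose(smooth_rows(transpose(rows_done), kernel))

# A gradient map with one isolated spike, standing in for a "gradient trap".
gradient = [[0.0] * 5 for _ in range(5)]
gradient[2][2] = 1.0
smoothed = gaussian_smooth_2d(gradient)
```

The spike is spread over its neighbours instead of dominating the update, while a constant gradient passes through unchanged because the kernel is normalized.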

The team's pseudocode:

    x_adv = original_image
    For each iteration:
        loss = calculate the loss through loss function
        gradient = calculate the gradient of loss w.r.t. x_adv
        # 2D Gaussian smoothing
        gradient = 2D_Gaussian_smoothing(gradient)
        # calculate adversarial image x_adv
        x_adv = x_adv - alpha * sign(gradient)
        # random perturbation
        x_adv = x_adv + random_number
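
Since no source code was released, the following is only a runnable sketch of how the loop above might look, not the team's implementation. Several pieces are assumptions added for illustration: the toy linear loss, the 3x3 binomial kernel standing in for the Gaussian smoothing, uniform noise for the random perturbation, and the clipping to an `eps` ball and to `[0, 1]`, which the pseudocode does not show. As in the pseudocode, the update descends the loss, which matches the targeted case.

```python
import random

def sign(v):
    return (v > 0) - (v < 0)

def smooth3x3(grid):
    """Blur with the binomial kernel [1,2,1] x [1,2,1] / 16, borders clamped."""
    h, w = len(grid), len(grid[0])
    k = (1, 2, 1)
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in (-1, 0, 1):
                for dj in (-1, 0, 1):
                    ii = min(max(i + di, 0), h - 1)
                    jj = min(max(j + dj, 0), w - 1)
                    acc += k[di + 1] * k[dj + 1] * grid[ii][jj]
            out[i][j] = acc / 16.0
    return out

def attack(image, grad_fn, alpha=0.02, eps=0.1, noise=0.005, steps=10, seed=0):
    """Iterative signed-gradient descent with smoothing and random jitter."""
    rng = random.Random(seed)
    h, w = len(image), len(image[0])
    x_adv = [row[:] for row in image]
    for _ in range(steps):
        grad = smooth3x3(grad_fn(x_adv))          # smooth the raw gradient
        for i in range(h):
            for j in range(w):
                v = x_adv[i][j] - alpha * sign(grad[i][j])   # signed step
                v += rng.uniform(-noise, noise)              # random perturbation
                # Assumption: keep the result within eps of the original pixel
                # and inside the valid pixel range [0, 1].
                v = min(max(v, image[i][j] - eps), image[i][j] + eps)
                x_adv[i][j] = min(max(v, 0.0), 1.0)
    return x_adv

# Toy loss (hypothetical): a weighted sum of pixels, so its gradient is the weights.
WEIGHTS = [[1.0 + 0.1 * (i + j) for j in range(4)] for i in range(4)]

def toy_loss(x):
    return sum(WEIGHTS[i][j] * x[i][j] for i in range(4) for j in range(4))

def toy_grad(x):
    return WEIGHTS

original = [[0.5] * 4 for _ in range(4)]
adversarial = attack(original, toy_grad)
```

With a real model, `toy_grad` would be replaced by the gradient of the (ensemble) loss with respect to the input image.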

To improve transferability, toshi_k ensembles the following three models (by averaging):

- inception_v3

- adv_inception_v3

- ens_adv_inception_resnet_v2
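
The averaging can be sketched as follows. What exactly gets averaged (logits, losses, or gradients) is not stated above, so this sketch assumes the per-model loss gradients are averaged with equal weights; the linear stand-ins for the three networks and their numbers are purely hypothetical.

```python
def ensemble_gradient(grad_fns, x, weights=None):
    """Weighted mean of per-model gradients at x (equal weights by default)."""
    if weights is None:
        weights = [1.0 / len(grad_fns)] * len(grad_fns)
    grads = [fn(x) for fn in grad_fns]
    return [sum(wt * g[i] for wt, g in zip(weights, grads))
            for i in range(len(x))]

def linear_model(w):
    """Stand-in for a network: loss w . x, whose gradient w.r.t. x is w."""
    return lambda x: list(w)

# Hypothetical gradients; real ones would come from the three networks.
models = {
    "inception_v3": linear_model([1.0, -2.0, 0.5]),
    "adv_inception_v3": linear_model([0.5, -1.0, -1.5]),
    "ens_adv_inception_resnet_v2": linear_model([1.5, -3.0, 0.4]),
}

x = [0.2, 0.4, 0.6]
avg_grad = ensemble_gradient(list(models.values()), x)
```

On the third coordinate the stand-in models disagree on the sign; the averaged gradient settles the direction, which is the intuition behind ensembles transferring better than any single white-box gradient.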

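The optimal step size was determined on the 1000 provided images against the publicly available defenses, including the state-of-the-art defense developed by NWSec. The optimal step size could be different based on the pictures and the defender selection.

With this hyperparameter selection, the team's attack achieves a high score against all the publicly available adversarial defenders. The evaluation also brought in the most powerful defender of 2017, from Fangzhou Liao's team.

Apart from the champion's, this team's technical report is the most detailed one shared. Unfortunately, no source code could be found; the report only includes the pseudocode.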