Deep Learning

Data Cleaning

The objective of this assignment is to learn some simple data curation practices and to become familiar with data that we’ll be reusing later.

This notebook uses the notMNIST dataset for Python experiments. The dataset is designed to resemble the classic MNIST dataset while looking a little more like real data: the task is harder, and the data is a lot less ‘clean’ than MNIST.

from __future__ import print_function

import matplotlib.pyplot as plt

import numpy as np

import os

import sys

import tarfile

from IPython.display import display, Image

from scipy import ndimage

from sklearn.linear_model import LogisticRegression

from six.moves.urllib.request import urlretrieve

from six.moves import cPickle as pickle

%matplotlib inline

First, we’ll download the dataset to our local machine. The data consists of characters rendered in a variety of fonts on 28x28 images. The labels are limited to ‘A’ through ‘J’ (10 classes). The training set has about 500k labelled examples and the test set about 19,000. Given these sizes, it should be possible to train models quickly on any machine.

url = 'http://cn-static.udacity.com/mlnd/'
last_percent_reported = None

def download_progress_hook(count, blockSize, totalSize):
    """A hook to report the progress of a download. This is mostly intended for users with
    slow internet connections. Reports every 1% change in download progress.
    """
    global last_percent_reported
    percent = int(count * blockSize * 100 / totalSize)

    if last_percent_reported != percent:
        if percent % 5 == 0:
            sys.stdout.write("%s%%" % percent)
            sys.stdout.flush()
        else:
            sys.stdout.write(".")
            sys.stdout.flush()

        last_percent_reported = percent

def maybe_download(filename, expected_bytes, force=False):
    """Download a file if not present, and make sure it's the right size."""
    if force or not os.path.exists(filename):
        print('Attempting to download:', filename)
        filename, _ = urlretrieve(url + filename, filename, reporthook=download_progress_hook)
        print('\nDownload Complete!')
    statinfo = os.stat(filename)
    if statinfo.st_size == expected_bytes:
        print('Found and verified', filename)
    else:
        raise Exception(
            'Failed to verify ' + filename + '. Can you get to it with a browser?')
    return filename

train_filename = maybe_download('notMNIST_large.tar.gz', 247336696)
test_filename = maybe_download('notMNIST_small.tar.gz', 8458043)

Attempting to download: notMNIST_large.tar.gz

0%....5%....10%....15%....20%....25%....30%....35%....40%....45%....50%....55%....60%....65%....70%....75%....80%....85%....90%....95%....100%

Download Complete!

Found and verified notMNIST_large.tar.gz

Attempting to download: notMNIST_small.tar.gz

0%....5%....10%....15%....20%....25%....30%....35%....40%....45%....50%....55%....60%....65%....70%....75%....80%....85%....90%....95%....100%

Download Complete!

Found and verified notMNIST_small.tar.gz

Extract the dataset from the compressed .tar.gz file. This should give you a set of ten directories, labelled A through J.

num_classes = 10
np.random.seed(133)

def maybe_extract(filename, force=False):
    root = os.path.splitext(os.path.splitext(filename)[0])[0]  # remove .tar.gz
    if os.path.isdir(root) and not force:
        print('%s already present - Skipping extraction of %s.' % (root, filename))
    else:
        print('Extracting data for %s. This may take a while. Please wait.' % root)
        tar = tarfile.open(filename)
        sys.stdout.flush()
        tar.extractall()
        tar.close()
    data_folders = [
        os.path.join(root, d) for d in sorted(os.listdir(root))
        if os.path.isdir(os.path.join(root, d))]
    if len(data_folders) != num_classes:
        raise Exception(
            'Expected %d folders, one per class. Found %d instead.' % (
                num_classes, len(data_folders)))
    print(data_folders)
    return data_folders

train_folders = maybe_extract(train_filename)
test_folders = maybe_extract(test_filename)

Extracting data for notMNIST_large. This may take a while. Please wait.

['notMNIST_large/A', 'notMNIST_large/B', 'notMNIST_large/C', 'notMNIST_large/D', 'notMNIST_large/E', 'notMNIST_large/F', 'notMNIST_large/G', 'notMNIST_large/H', 'notMNIST_large/I', 'notMNIST_large/J']

Extracting data for notMNIST_small. This may take a while. Please wait.

['notMNIST_small/A', 'notMNIST_small/B', 'notMNIST_small/C', 'notMNIST_small/D', 'notMNIST_small/E', 'notMNIST_small/F', 'notMNIST_small/G', 'notMNIST_small/H', 'notMNIST_small/I', 'notMNIST_small/J']

Section 1

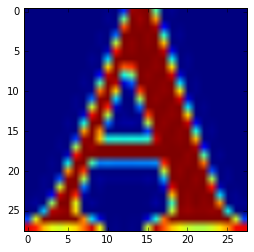

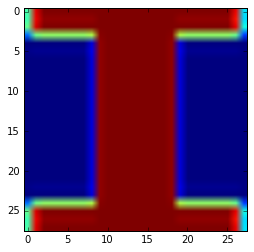

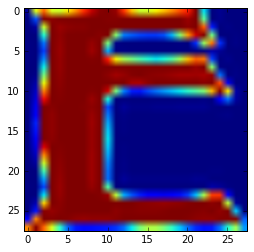

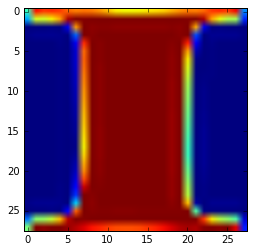

Let’s take a peek at some of the data to make sure it looks sensible. Each exemplar should be an image of a character A through J rendered in a different font. The files in each class folder are PNG images, and the glyph size varies from font to font. Display a sample of the images that we just downloaded. Hint: you can use the package IPython.display.
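One possible way to do this, as a minimal sketch: pick a few random PNG files from one class folder and hand them to IPython.display. The helper names `sample_image_paths` and `show_samples`, and the default sample count, are assumptions made for illustration; the folder layout is the one produced by the extraction step.

```python
import os
import random


def sample_image_paths(folder, k=3, seed=None):
    """Return up to k randomly chosen .png paths from folder (hypothetical helper)."""
    rng = random.Random(seed)
    names = sorted(n for n in os.listdir(folder) if n.lower().endswith('.png'))
    chosen = rng.sample(names, min(k, len(names)))
    return [os.path.join(folder, n) for n in chosen]


def show_samples(folder, k=3):
    """Display k sample images inline; only meaningful inside a notebook."""
    # Imported lazily so the path helper above also works outside IPython.
    from IPython.display import display, Image
    for path in sample_image_paths(folder, k):
        display(Image(filename=path))
```

In a notebook, a call such as `show_samples('notMNIST_small/A')` should render a handful of letter images from that class.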

Now let’s load the data in a more manageable format. Depending on your computer setup, you might not be able to fit it all in memory, so we’ll load each class into a separate dataset, store them on disk and curate them independently. Later we’ll merge them into a single dataset of manageable size.

We’ll convert the entire dataset into a 3D array (image index, x, y) of floating point values, normalized to have approximately zero mean and a standard deviation of about 0.5, to make training easier down the road.
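To make the normalization concrete, here is a small sketch on a toy 2x2 "image". It applies the same formula used by the loading code, (pixel - pixel_depth / 2) / pixel_depth, which maps 8-bit values in [0, 255] to floats in [-0.5, 0.5]. The function name `normalize` and the toy values are assumptions for illustration only.

```python
import numpy as np

pixel_depth = 255.0  # maximum value of an 8-bit grayscale pixel


def normalize(raw):
    """Map raw pixel values in [0, 255] to floats in [-0.5, 0.5]."""
    return (np.asarray(raw, dtype=np.float32) - pixel_depth / 2) / pixel_depth


raw = np.array([[0, 255], [51, 204]], dtype=np.uint8)  # toy 2x2 "image"
scaled = normalize(raw)
print(scaled.min(), scaled.max())  # -0.5 0.5
print(scaled.mean())
```

Because every value ends up inside [-0.5, 0.5], the standard deviation can never exceed 0.5, which is consistent with the values of roughly 0.44 to 0.47 reported in the loading log.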

A few images might not be readable; we’ll just skip them, which has little effect on the results.

image_size = 28

pixel_depth = 255.0

train_folders = ['notMNIST_large/A', 'notMNIST_large/B', 'notMNIST_large/C', 'notMNIST_large/D', 'notMNIST_large/E', 'notMNIST_large/F', 'notMNIST_large/G', 'notMNIST_large/H', 'notMNIST_large/I', 'notMNIST_large/J']

test_folders = ['notMNIST_small/A', 'notMNIST_small/B', 'notMNIST_small/C', 'notMNIST_small/D', 'notMNIST_small/E', 'notMNIST_small/F', 'notMNIST_small/G', 'notMNIST_small/H', 'notMNIST_small/I', 'notMNIST_small/J']

def load_letter(folder, min_num_images):
    """Load the data for a single letter label."""
    image_files = os.listdir(folder)
    dataset = np.ndarray(shape=(len(image_files), image_size, image_size),
                         dtype=np.float32)
    print(folder)
    num_images = 0
    for image in image_files:
        image_file = os.path.join(folder, image)
        try:
            # Note: ndimage.imread was removed in SciPy 1.2; on newer installs
            # another reader (e.g. imageio.imread) is needed here.
            image_data = (ndimage.imread(image_file).astype(float) - pixel_depth / 2) / pixel_depth
            if image_data.shape != (image_size, image_size):
                raise Exception('Unexpected image shape: %s' % str(image_data.shape))
            dataset[num_images, :, :] = image_data
            num_images = num_images + 1
        except IOError as e:
            print('Could not read:', image_file, ':', e, '- it\'s ok, skipping.')

    # Drop the unused rows left behind by unreadable images.
    dataset = dataset[0:num_images, :, :]
    if num_images < min_num_images:
        raise Exception('Many fewer images than expected: %d < %d' % (num_images, min_num_images))

    print('Full dataset tensor:', dataset.shape)
    print('Mean:', np.mean(dataset))
    print('Standard deviation:', np.std(dataset))
    return dataset

def maybe_pickle(data_folders, min_num_images_per_class, force=False):
    dataset_names = []
    for folder in data_folders:
        set_filename = folder + '.pickle'
        dataset_names.append(set_filename)
        if os.path.exists(set_filename) and not force:
            print('%s already present - Skipping pickling.' % set_filename)
        else:
            print('Pickling %s.' % set_filename)
            dataset = load_letter(folder, min_num_images_per_class)
            try:
                with open(set_filename, 'wb') as f:
                    pickle.dump(dataset, f, pickle.HIGHEST_PROTOCOL)
            except Exception as e:
                print('Unable to save data to', set_filename, ':', e)

    return dataset_names

train_datasets = maybe_pickle(train_folders, 45000)
test_datasets = maybe_pickle(test_folders, 1800)

Pickling notMNIST_large/A.pickle.

notMNIST_large/A

Could not read: notMNIST_large/A/SG90IE11c3RhcmQgQlROIFBvc3Rlci50dGY=.png : cannot identify image file 'notMNIST_large/A/SG90IE11c3RhcmQgQlROIFBvc3Rlci50dGY=.png' - it's ok, skipping.

Could not read: notMNIST_large/A/RnJlaWdodERpc3BCb29rSXRhbGljLnR0Zg==.png : cannot identify image file 'notMNIST_large/A/RnJlaWdodERpc3BCb29rSXRhbGljLnR0Zg==.png' - it's ok, skipping.

Could not read: notMNIST_large/A/Um9tYW5hIEJvbGQucGZi.png : cannot identify image file 'notMNIST_large/A/Um9tYW5hIEJvbGQucGZi.png' - it's ok, skipping.

Full dataset tensor: (52909, 28, 28)

Mean: -0.12825

Standard deviation: 0.443121

Pickling notMNIST_large/B.pickle.

notMNIST_large/B

Could not read: notMNIST_large/B/TmlraXNFRi1TZW1pQm9sZEl0YWxpYy5vdGY=.png : cannot identify image file 'notMNIST_large/B/TmlraXNFRi1TZW1pQm9sZEl0YWxpYy5vdGY=.png' - it's ok, skipping.

Full dataset tensor: (52911, 28, 28)

Mean: -0.00756304

Standard deviation: 0.454492

Pickling notMNIST_large/C.pickle.

notMNIST_large/C

Full dataset tensor: (52912, 28, 28)

Mean: -0.142258

Standard deviation: 0.439806

Pickling notMNIST_large/D.pickle.

notMNIST_large/D

Could not read: notMNIST_large/D/VHJhbnNpdCBCb2xkLnR0Zg==.png : cannot identify image file 'notMNIST_large/D/VHJhbnNpdCBCb2xkLnR0Zg==.png' - it's ok, skipping.

Full dataset tensor: (52911, 28, 28)

Mean: -0.0573678

Standard deviation: 0.455647

Pickling notMNIST_large/E.pickle.

notMNIST_large/E

Full dataset tensor: (52912, 28, 28)

Mean: -0.0698989

Standard deviation: 0.452942

Pickling notMNIST_large/F.pickle.

notMNIST_large/F

Full dataset tensor: (52912, 28, 28)

Mean: -0.125583

Standard deviation: 0.44709

Pickling notMNIST_large/G.pickle.

notMNIST_large/G

Full dataset tensor: (52912, 28, 28)

Mean: -0.0945815

Standard deviation: 0.44624

Pickling notMNIST_large/H.pickle.

notMNIST_large/H

Full dataset tensor: (52912, 28, 28)

Mean: -0.0685222

Standard deviation: 0.454231

Pickling notMNIST_large/I.pickle.

notMNIST_large/I

Full dataset tensor: (52912, 28, 28)

Mean: 0.0307863

Standard deviation: 0.468899

Pickling notMNIST_large/J.pickle.

notMNIST_large/J

Full dataset tensor: (52911, 28, 28)

Mean: -0.153359

Standard deviation: 0.443656

Pickling notMNIST_small/A.pickle.

notMNIST_small/A

Could not read: notMNIST_small/A/RGVtb2NyYXRpY2FCb2xkT2xkc3R5bGUgQm9sZC50dGY=.png : cannot identify image file 'notMNIST_small/A/RGVtb2NyYXRpY2FCb2xkT2xkc3R5bGUgQm9sZC50dGY=.png' - it's ok, skipping.

Full dataset tensor: (1872, 28, 28)

Mean: -0.132626

Standard deviation: 0.445128

Pickling notMNIST_small/B.pickle.

notMNIST_small/B

Full dataset tensor: (1873, 28, 28)

Mean: 0.00535608

Standard deviation: 0.457115

Pickling notMNIST_small/C.pickle.

notMNIST_small/C

Full dataset tensor: (1873, 28, 28)

Mean: -0.141521

Standard deviation: 0.44269

Pickling notMNIST_small/D.pickle.

notMNIST_small/D

Full dataset tensor: (1873, 28, 28)

Mean: -0.0492167

Standard deviation: 0.459759

Pickling notMNIST_small/E.pickle.

notMNIST_small/E

Full dataset tensor: (1873, 28, 28)

Mean: -0.0599148

Standard deviation: 0.45735

Pickling notMNIST_small/F.pickle.

notMNIST_small/F

Could not read: notMNIST_small/F/Q3Jvc3NvdmVyIEJvbGRPYmxpcXVlLnR0Zg==.png : cannot identify image file 'notMNIST_small/F/Q3Jvc3NvdmVyIEJvbGRPYmxpcXVlLnR0Zg==.png' - it's ok, skipping.

Full dataset tensor: (1872, 28, 28)

Mean: -0.118185

Standard deviation: 0.452279

Pickling notMNIST_small/G.pickle.

notMNIST_small/G

Full dataset tensor: (1872, 28, 28)

Mean: -0.0925503

Standard deviation: 0.449006

Pickling notMNIST_small/H.pickle.

notMNIST_small/H

Full dataset tensor: (1872, 28, 28)

Mean: -0.0586893

Standard deviation: 0.458759

Pickling notMNIST_small/I.pickle.

notMNIST_small/I

Full dataset tensor: (1872, 28, 28)

Mean: 0.0526451

Standard deviation: 0.471894

Pickling notMNIST_small/J.pickle.

notMNIST_small/J

Full dataset tensor: (1872, 28, 28)

Mean: -0.151689

Standard deviation: 0.448014

Section 2

Let’s verify that the data still looks good. Display a sample of the labels and images from the ndarray. Hint: you can use matplotlib.pyplot.

with open('notMNIST_large/A.pickle', 'rb') as pk_f:
    data4show = pickle.load(pk_f)

print(data4show.shape)
pic = data4show[0,:,:]
plt.imshow(pic)
plt.show()

(52909, 28, 28)

Section 3

Check that the classes are balanced, i.e. that each class contains roughly the same number of samples.

file_path = 'notMNIST_large/{0}.pickle'
for ele in 'ABCDEFGHIJ':
    with open(file_path.format(ele), 'rb') as pk_f:
        dat = pickle.load(pk_f)
    print('number of pictures in {}.pickle = '.format(ele), dat.shape[0])

number of pictures in A.pickle = 52909

number of pictures in B.pickle = 52911

number of pictures in C.pickle = 52912

number of pictures in D.pickle = 52911

number of pictures in E.pickle = 52912

number of pictures in F.pickle = 52912

number of pictures in G.pickle = 52912

number of pictures in H.pickle = 52912

number of pictures in I.pickle = 52912

number of pictures in J.pickle = 52911
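To turn "roughly the same number" into a figure, one option is to compare the smallest and largest class. The sketch below is an illustration only: the helper name `balance_report` is an assumption, and the three counts are copied from the loading log earlier in this post.

```python
def balance_report(counts):
    """Return (smallest, largest, relative spread) for a dict of class -> count."""
    smallest, largest = min(counts.values()), max(counts.values())
    return smallest, largest, (largest - smallest) / float(largest)


# Counts for the first three classes, as reported by the loading log.
counts = {'A': 52909, 'B': 52911, 'C': 52912}
smallest, largest, spread = balance_report(counts)
print(smallest, largest)
print('relative spread: %.6f' % spread)
```

A relative spread this far below one percent suggests no rebalancing is needed before merging the classes.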

Merge and prune the training data as needed. Depending on your computer setup, you might not be able to fit it all in memory, and you can tune train_size as needed. The labels will be stored in a separate array of integers 0 through 9, corresponding to the letters A through J.

Also create a validation dataset for hyperparameter tuning.

def make_arrays(nb_rows, img_size):
    if nb_rows:
        dataset = np.ndarray((nb_rows, img_size, img_size), dtype=np.float32)
        labels = np.ndarray(nb_rows, dtype=np.int32)
    else:
        dataset, labels = None, None
    return dataset, labels

def merge_datasets(pickle_files, train_size, valid_size=0):
    num_classes = len(pickle_files)
    valid_dataset, valid_labels = make_arrays(valid_size, image_size)
    train_dataset, train_labels = make_arrays(train_size, image_size)
    vsize_per_class = valid_size // num_classes
    tsize_per_class = train_size // num_classes

    start_v, start_t = 0, 0
    end_v, end_t = vsize_per_class, tsize_per_class
    end_l = vsize_per_class+tsize_per_class
    for label, pickle_file in enumerate(pickle_files):
        try:
            with open(pickle_file, 'rb') as f:
                letter_set = pickle.load(f)
                # Shuffle within the class so validation and training samples are random.
                np.random.shuffle(letter_set)
                if valid_dataset is not None:
                    valid_letter = letter_set[:vsize_per_class, :, :]
                    valid_dataset[start_v:end_v, :, :] = valid_letter
                    valid_labels[start_v:end_v] = label
                    start_v += vsize_per_class
                    end_v += vsize_per_class

                train_letter = letter_set[vsize_per_class:end_l, :, :]
                train_dataset[start_t:end_t, :, :] = train_letter
                train_labels[start_t:end_t] = label
                start_t += tsize_per_class
                end_t += tsize_per_class
        except Exception as e:
            print('Unable to process data from', pickle_file, ':', e)
            raise

    return valid_dataset, valid_labels, train_dataset, train_labels

train_size = 200000

valid_size = 10000

test_size = 10000

image_size = 28

train_datasets = [ele.join(['notMNIST_large/','.pickle'] ) for ele in list('ABCDEFGHIJ') ]

test_datasets = [ele.join(['notMNIST_small/','.pickle'] ) for ele in list('ABCDEFGHIJ') ]

valid_dataset, valid_labels, train_dataset, train_labels = merge_datasets(
    train_datasets, train_size, valid_size)

_, _, test_dataset, test_labels = merge_datasets(test_datasets, test_size)

print('Training:', train_dataset.shape, train_labels.shape)

print('Validation:', valid_dataset.shape, valid_labels.shape)

print('Testing:', test_dataset.shape, test_labels.shape)

Training: (200000, 28, 28) (200000,)

Validation: (10000, 28, 28) (10000,)

Testing: (10000, 28, 28) (10000,)

Next, we’ll randomize the data. It’s important to have the labels well shuffled for the training and test distributions to match.

def randomize(dataset, labels):
    permutation = np.random.permutation(labels.shape[0])
    shuffled_dataset = dataset[permutation,:,:]
    shuffled_labels = labels[permutation]
    return shuffled_dataset, shuffled_labels

train_dataset, train_labels = randomize(train_dataset, train_labels)

test_dataset, test_labels = randomize(test_dataset, test_labels)

valid_dataset, valid_labels = randomize(valid_dataset, valid_labels)
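The key point of this shuffle is that images and labels are indexed with one shared permutation, so every image keeps its own label. The sketch below checks that on toy data in which image i is filled with the value i. The function name `randomize_pair` and the fixed seed are assumptions for illustration; the logic mirrors the randomize function above.

```python
import numpy as np


def randomize_pair(dataset, labels, seed=0):
    """Shuffle images and labels with one shared permutation (illustrative copy)."""
    rng = np.random.RandomState(seed)
    permutation = rng.permutation(labels.shape[0])
    return dataset[permutation, :, :], labels[permutation]


# Toy data: image i is filled with the value i, and its label is also i,
# so any misalignment after shuffling is easy to detect.
labels = np.arange(6, dtype=np.int32)
dataset = np.ones((6, 2, 2), dtype=np.float32) * labels[:, None, None]

shuffled_data, shuffled_labels = randomize_pair(dataset, labels)
aligned = all((shuffled_data[i] == shuffled_labels[i]).all() for i in range(6))
print(aligned)  # True
```

Shuffling the two arrays with separate, independent permutations would break this pairing, which is why a single permutation is reused for both.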

Section 4

Convince yourself that the data is still good after shuffling!

mapping = {key:val for key, val in enumerate('ABCDEFGHIJ')}

def plot_check(matrix, key):
    plt.imshow(matrix)
    plt.show()
    print('the picture should be ', mapping[key])
    return None

length = train_dataset.shape[0]
# range works on both Python 2 and 3 (xrange exists only on Python 2).
for _ in range(5):
    index = np.random.randint(length)
    plot_check(train_dataset[index,:,:], train_labels[index])

the picture should be F

the picture should be I

the picture should be E

the picture should be I

the picture should be C

Finally, let’s save the data for later reuse:

pickle_file = 'notMNIST.pickle'

try:
    f = open(pickle_file, 'wb')
    save = {
        'train_dataset': train_dataset,
        'train_labels': train_labels,
        'valid_dataset': valid_dataset,
        'valid_labels': valid_labels,
        'test_dataset': test_dataset,
        'test_labels': test_labels,
    }
    pickle.dump(save, f, pickle.HIGHEST_PROTOCOL)
    f.close()
except Exception as e:
    print('Unable to save data to', pickle_file, ':', e)
    raise

statinfo = os.stat(pickle_file)
print('Compressed pickle size:', statinfo.st_size)

Compressed pickle size: 690800441
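Before relying on the saved file, it can be worth checking that it loads back intact. The following round-trip sketch uses a tiny stand-in dictionary written to a temporary directory rather than the real 690 MB file; the helper names `save_splits` and `load_splits` are assumptions for illustration.

```python
import os
import pickle
import tempfile


def save_splits(path, splits):
    """Write a dict of named splits to disk; the with-block closes the file."""
    with open(path, 'wb') as f:
        pickle.dump(splits, f, pickle.HIGHEST_PROTOCOL)


def load_splits(path):
    """Read the dict of named splits back from disk."""
    with open(path, 'rb') as f:
        return pickle.load(f)


# Tiny stand-in for the real arrays, written to a temporary directory.
demo = {'train_labels': [0, 1, 2], 'test_labels': [9]}
demo_path = os.path.join(tempfile.mkdtemp(), 'demo.pickle')
save_splits(demo_path, demo)
restored = load_splits(demo_path)
print(sorted(restored.keys()))
```

The same pattern applies to notMNIST.pickle, where the restored dictionary should expose the six keys written above.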

Section 5

By construction, this dataset might contain a lot of overlapping samples, including training data that’s also contained in the validation and test sets! Overlap between training and test can skew the results if you expect to use your model in an environment where there is never an overlap, but is actually fine if you expect to see training samples recur when you use it.

Measure how much overlap there is between training, validation and test samples.
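For exact duplicates, one alternative to comparing every pair of images is to fingerprint each image with a hash and look the fingerprints up in a set. The sketch below is an assumption-laden illustration, not the method used in this post: the names `image_hashes` and `count_overlap` are hypothetical, SHA-1 is just one reasonable choice of hash, and both arrays must share the same dtype for byte-level equality to make sense.

```python
import hashlib

import numpy as np


def image_hashes(dataset):
    """Return a set with one SHA-1 digest per image (exact-duplicate fingerprint)."""
    return set(hashlib.sha1(np.ascontiguousarray(img).tobytes()).hexdigest()
               for img in dataset)


def count_overlap(dataset1, dataset2):
    """Count images in dataset1 whose exact bytes also occur in dataset2."""
    seen = image_hashes(dataset2)
    return sum(
        hashlib.sha1(np.ascontiguousarray(img).tobytes()).hexdigest() in seen
        for img in dataset1)


# Toy check: two of the three "validation" images also appear in "training".
train = np.arange(4 * 2 * 2, dtype=np.float32).reshape(4, 2, 2)
valid = np.stack([train[0], train[3], np.full((2, 2), -1, dtype=np.float32)])
print(count_overlap(valid, train))  # 2
```

This runs in time roughly linear in the number of images, but it only catches byte-identical copies; near duplicates still need a tolerance-based comparison of pixel differences.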

Optional questions:

What about near duplicates between datasets? (images that are almost identical)

Create a sanitized validation and test set, and compare your accuracy on those in subsequent assignments

import multiprocessing as mp
import numpy as np
from six.moves import cPickle as pk  # works on both Python 2 and 3

def check_overlap(dataset1, start, end, dataset2, q):
    '''
    Find the images of dataset1 that also occur in dataset2[start:end]
    and put the matching (i, j) index pairs on the queue q.
    '''
    overlappingindex = []
    length1 = dataset1.shape[0]
    for i in range(length1):
        for j in range(start, end):
            # Sum of absolute pixel differences; near zero means (almost) identical.
            error = np.sum(np.abs(dataset1[i,:,:]-dataset2[j,:,:]))
            if error < 0.2:
                overlappingindex.append((i,j))
                break
    q.put(tuple(overlappingindex))
    return None

print('start loading notMNIST.pickle')
with open('notMNIST.pickle', 'rb') as f:
    data = pk.load(f)
print('loading finished.')

q = mp.Queue()
train_dataset = data['train_dataset']
valid_dataset = data['valid_dataset']
length = train_dataset.shape[0]
# Split the training set into three index ranges, one per worker process.
idx = (0, int(length*1.0/3), int(length*2.0/3), length)
processes = []
for i in range(3):
    processes.append(mp.Process(target=check_overlap, args=(valid_dataset, idx[i], idx[i+1], train_dataset, q)))

for p in processes:
    p.start()

# Drain the queue before joining: a process that has put data on a queue
# may not exit until that data has been consumed.
results = [q.get() for _ in processes]

for p in processes:
    p.join()

print(results)

# Flatten the nested tuples of (i, j) pairs into one flat list of indices.
func = lambda x: [i for xs in x for i in func(xs)] if isinstance(x, (list, tuple)) else [x]
print(func(results))

Section 6

Let’s get an idea of what an off-the-shelf classifier can give you on this data. It’s always good to check that there is something to learn, and that it’s a problem that is not so trivial that a canned solution solves it.

Train a simple model on this data using 50, 100, 1000 and 5000 training samples, and see how accuracy changes with the training set size. Hint: you can use the LogisticRegression model from sklearn.linear_model.

Optional question: train an off-the-shelf model on all the data!

from sklearn.linear_model import LogisticRegression
from six.moves import cPickle as pickle  # works on both Python 2 and 3
import numpy as np

image_size = 28

print('loading data')
with open('notMNIST.pickle', 'rb') as f:
    data = pickle.load(f)
print('finish loading')

# Flatten each 28x28 image into a 784-element feature vector.
train_dt = data['train_dataset']
length = train_dt.shape[0]
train_dt = train_dt.reshape(length, image_size*image_size)
train_lb = data['train_labels']

test_dt = data['test_dataset']
length = test_dt.shape[0]
test_lb = data['test_labels']
test_dt = test_dt.reshape(length, image_size*image_size)

def train_linear_logistic(tdata, tlabel, size):
    # liblinear supports the L1 penalty; newer scikit-learn defaults to a solver that does not.
    model = LogisticRegression(C=1.0, penalty='l1', solver='liblinear')
    print('initializing model size is = {}'.format(size))
    model.fit(tdata[:size,:], tlabel[:size])
    print('testing model')
    y_out = model.predict(test_dt)
    print('the accuracy of the model of size = {} is {}'.format(size, np.sum(y_out == test_lb)*1.0/len(y_out)))
    return None

# Train on the first `size` samples for each training set size.
for size in (50, 100, 1000, 5000):
    train_linear_logistic(train_dt, train_lb, size)

loading data

finish loading

initializing model size is = 50

testing model

the accuracy of the model of size = 50 is 0.5976

initializing model size is = 100

testing model

the accuracy of the model of size = 100 is 0.6973

initializing model size is = 1000

testing model

the accuracy of the model of size = 1000 is 0.8371

initializing model size is = 5000

testing model

the accuracy of the model of size = 5000 is 0.8609

Next step: implementing a simple neural network and stochastic gradient descent.
