内容

简短描述:ViT 的简短描述。

编码部分:使用 ViT 对自定义数据集进行二分类。

附录:ViT hypermeters 解释。

简短描述

视觉转换器是深度学习领域中流行的转换器之一。在视觉转换器出现之前,我们不得不在计算机视觉中使用卷积神经网络来完成复杂的任务。随着视觉转换器的引入,我们获得了一个更强大的计算机视觉任务模型。在本文中,我们将学习如何将视觉转换器用于图像分类任务。

下图总结了 Vision Transformer 的分类过程:

编码部分

第 1 步:创建 anaconda 环境并设置所需的库。

下载requirements.txt(链接如下),放在你VIT相关的工程文件夹下,激活anaconda环境:

https://drive.google.com/uc?export=download&id=14xiSObMiBNRPSbwyevZ_hRRk7V3R-txF

conda create --name vit_project python=3.8

conda activate vit_project

pip install -r requirements.txt

第 2 步:自定义数据集的文件夹结构。

确保分类数据集的文件夹结构与下图中的相同:

第 3 步:编码

用到的库:

from __future__ import print_function

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import torch

import torch.nn as nn

import torch.optim as optim

from linformer import Linformer

from PIL import Image

from torch.optim.lr_scheduler import StepLR

from tqdm.notebook import tqdm

from vit_pytorch.efficient import ViT

from sklearn.metrics import roc_curve, roc_auc_score

from sklearn.metrics import confusion_matrix

import torch.utils.data as data

import torchvision

from torchvision.transforms import ToTensor

torch.cuda.is_available()

超参数:

# Hyperparameters:

batch_size = 64

epochs = 20

lr = 3e-5

gamma = 0.7

seed = 142

IMG_SIZE = 128

patch_size = 16

num_classes = 2

数据加载器:

train_ds = torchvision.datasets.ImageFolder("dataset_new_split/train", transform=ToTensor())

valid_ds = torchvision.datasets.ImageFolder("dataset_new_split/val", transform=ToTensor())

test_ds = torchvision.datasets.ImageFolder("dataset_new_split/test", transform=ToTensor())

# Data Loaders:

train_loader = data.DataLoader(train_ds, batch_size=batch_size, shuffle=True, num_workers=4)

valid_loader = data.DataLoader(valid_ds, batch_size=batch_size, shuffle=True, num_workers=4)

test_loader = data.DataLoader(test_ds, batch_size=batch_size, shuffle=True, num_workers=4)

构建模型:

# Training device:

device = 'cuda'

# Linear Transformer:

efficient_transformer = Linformer(dim=128, seq_len=64+1, depth=12, heads=8, k=64)

# Vision Transformer Model:

model = ViT(dim=128, image_size=128, patch_size=patch_size, num_classes=num_classes, transformer=efficient_transformer, channels=3).to(device)

# loss function

criterion = nn.CrossEntropyLoss()

# Optimizer

optimizer = optim.Adam(model.parameters(), lr=lr)

# Learning Rate Scheduler for Optimizer:

scheduler = StepLR(optimizer, step_size=1, gamma=gamma)

自定义模型训练:

# Training:

for epoch in range(epochs):

epoch_loss = 0

epoch_accuracy = 0

for data, label in tqdm(train_loader):

data = data.to(device)

label = label.to(device)

output = model(data)

loss = criterion(output, label)

optimizer.zero_grad()

loss.backward()

optimizer.step()

acc = (output.argmax(dim=1) == label).float().mean()

epoch_accuracy += acc / len(train_loader)

epoch_loss += loss / len(train_loader)

with torch.no_grad():

epoch_val_accuracy = 0

epoch_val_loss = 0

for data, label in valid_loader:

data = data.to(device)

label = label.to(device)

val_output = model(data)

val_loss = criterion(val_output, label)

acc = (val_output.argmax(dim=1) == label).float().mean()

epoch_val_accuracy += acc / len(valid_loader)

epoch_val_loss += val_loss / len(valid_loader)

print(

f"Epoch : {epoch+1} - loss : {epoch_loss:.4f} - acc: {epoch_accuracy:.4f} - val_loss : {epoch_val_loss:.4f} - val_acc: {epoch_val_accuracy:.4f}\n"

)

模型保存和加载以备将来使用:

# Save Model:

PATH = "epochs"+"_"+str(epochs)+"_"+"img"+"_"+str(IMG_SIZE)+"_"+"patch"+"_"+str(patch_size)+"_"+"lr"+"_"+str(lr)+".pt"

torch.save(model.state_dict(), PATH)

模型评估——准确性:

# Performance on Valid/Test Data

def overall_accuracy(model, test_loader, criterion):

'''

Model testing

Args:

model: model used during training and validation

test_loader: data loader object containing testing data

criterion: loss function used

Returns:

test_loss: calculated loss during testing

accuracy: calculated accuracy during testing

y_proba: predicted class probabilities

y_truth: ground truth of testing data

'''

y_proba = []

y_truth = []

test_loss = 0

total = 0

correct = 0

for data in tqdm(test_loader):

X, y = data[0].to('cpu'), data[1].to('cpu')

output = model(X)

test_loss += criterion(output, y.long()).item()

for index, i in enumerate(output):

y_proba.append(i[1])

y_truth.append(y[index])

if torch.argmax(i) == y[index]:

correct+=1

total+=1

accuracy = correct/total

y_proba_out = np.array([float(y_proba[i]) for i in range(len(y_proba))])

y_truth_out = np.array([float(y_truth[i]) for i in range(len(y_truth))])

return test_loss, accuracy, y_proba_out, y_truth_out

loss, acc, y_proba, y_truth = overall_accuracy(model, test_loader, criterion = nn.CrossEntropyLoss())

print(f"Accuracy: {acc}")

print(pd.value_counts(y_truth))

模型评估——ROC 曲线:

# Plot ROC curve:

def plot_ROCAUC_curve(y_truth, y_proba, fig_size):

'''

Plots the Receiver Operating Characteristic Curve (ROC) and displays Area Under the Curve (AUC) score.

Args:

y_truth: ground truth for testing data output

y_proba: class probabilties predicted from model

fig_size: size of the output pyplot figure

Returns: void

'''

fpr, tpr, threshold = roc_curve(y_truth, y_proba)

auc_score = roc_auc_score(y_truth, y_proba)

txt_box = "AUC Score: " + str(round(auc_score, 4))

plt.figure(figsize=fig_size)

plt.plot(fpr, tpr)

plt.plot([0, 1], [0, 1],'--')

plt.annotate(txt_box, xy=(0.65, 0.05), xycoords='axes fraction')

plt.title("Receiver Operating Characteristic (ROC) Curve")

plt.xlabel("False Positive Rate (FPR)")

plt.ylabel("True Positive Rate (TPR)")

# plt.savefig('ROC.png')

plot_ROCAUC_curve(y_truth, y_proba, (8, 8))

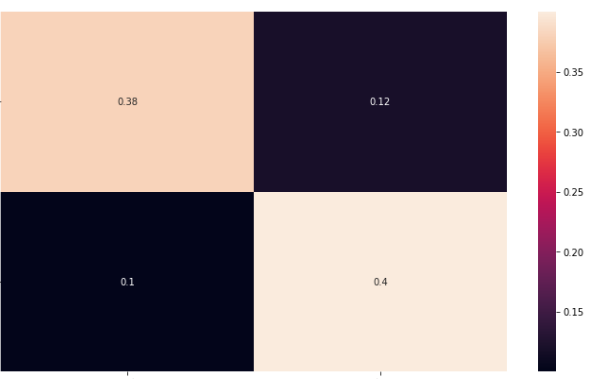

模型评估混淆矩阵

from sklearn.metrics import confusion_matrix

import seaborn as sn

import pandas as pd

y_pred = []

y_true = []

net = model

# iterate over test data

for inputs, labels in test_loader:

output = net(inputs) # Feed Network

output = (torch.max(torch.exp(output), 1)[1]).data.cpu().numpy()

y_pred.extend(output) # Save Prediction

labels = labels.data.cpu().numpy()

y_true.extend(labels) # Save Truth

# constant for classes

classes = ('cats', 'dogs')

# Build confusion matrix

cf_matrix = confusion_matrix(y_true, y_pred)

df_cm = pd.DataFrame(cf_matrix/np.sum(cf_matrix), index = [i for i in classes],

columns = [i for i in classes])

plt.figure(figsize = (12,7))

sn.heatmap(df_cm, annot=True)

# plt.savefig('cm.png')

新图像的模型推理:

# Inference on Single Images (cats-dogs):

test_image = "new_cat_image.jpg"

test_image_null = "new_dog_image.png"

image = Image.open(test_image)

image_null = Image.open(test_image_null)

# Define tensor transform and apply it:

data_transform = transforms.Compose([transforms.Resize((224, 224)), transforms.ToTensor()])

image_t = data_transform(image).unsqueeze(0)

image_null_t = data_transform(image_null).unsqueeze(0)

# Labels:

for inputs, labels in test_loader:

labels = labels.data.cpu().numpy()

# Prediction:

out_cat = model(image_t)

out_dog= model(image_null_t)

print("predicted cat tensor:", out_cat)

print("predicted dog tensor:", out_dog)

print("")

# Print:

if(labels[out_cat.argmax()]== 0):

print("smoke")

else:

print("else")

# Show Image:

plt.figure(figsize=(2, 2))

plt.imshow(image)

plt.show()

# Print:

if(labels[out_dog.argmax()]== 0):

print("cat")

else:

print("dog")

# Show Image Null:

plt.figure(figsize=(2, 2))

plt.imshow(image_null)

plt.show()

附录 :

1. image_size: int (w 或 h 的最大尺寸)

2. patch_size: int (# of patches, image_size 必须能被 patch_size 整除,必须大于 16)

3. num_classes: int (# of classes)

4. dim: int(线性变换后输出张量最后一维nn.Linear(..,dim))

5. depth: int (# of transformer blocks)

6. heads: int (# of heads in Multi-head Attention layer)

7. mlp_dim: int(MLP-前馈层的维度)

8. channels: int (图像通道 = 3)

9. dropout:float(在[0,1]之间——神经元的dropout率)

10. emb_dropout(在[0,1]之间——嵌入的dropout率——通常为0)

ViT 学习率和损失函数:

Optimizer: ADAM 优化器:ADAM

学习率:StepLR(每 #(step_size) 个纪元通过 gamma 衰减 LR)

损失函数:CrossEntropy(记得也试试 BinaryCrossEntropy:nn.BCELoss())