References

About the COCO format:

https://detectron2.readthedocs.io/en/latest/tutorials/datasets.html#register-a-dataset

Registering and training on your own dataset: https://blog.csdn.net/qq_29750461/article/details/106761382

https://cloud.tencent.com/developer/article/1960793

COCO API: https://blog.csdn.net/qq_41709370/article/details/108471072

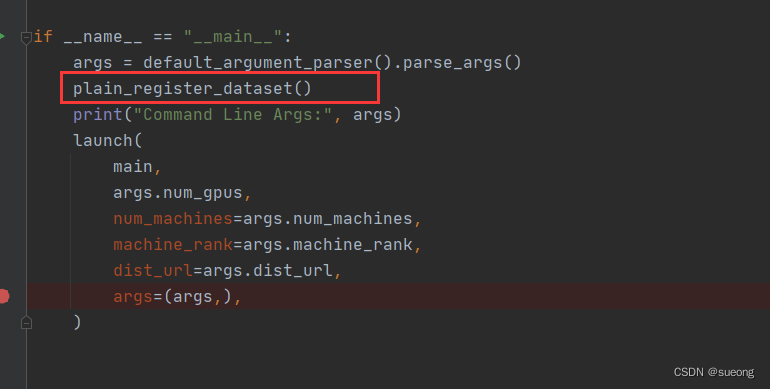

Note: if you get an error saying that your dataset is not registered, call plain_register_dataset() in main to register it.

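Converting the data to COCO format

Some pitfalls:

- In COCO format, bbox is [x, y, width, height], where x, y is the top-left corner.

- file_name should be the image file name including its extension (xxx.jpg). load_coco_json joins it with image_root to build the full image path.

- Some fields that COCO descriptions call optional still raise a KeyError when they are missing. In annotations, fields such as area and iscrowd can be given default values; area can be the bbox w*h.

- When reading an image, shape[0] is the height and shape[1] is the width:

  height = img.shape[0]

  width = img.shape[1]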
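The pitfalls above can be sketched as a small conversion helper. This is only a minimal illustration, not the original conversion script: the function names `make_annotation`, `make_image` and `missing_keys`, the corner-format input (x1, y1, x2, y2), the sample file name and the category id 1 for "military" are all assumptions made for the example.

```python
import json

# Keys that the notes above report as causing KeyError when absent.
REQUIRED_ANN_KEYS = ("id", "image_id", "category_id", "bbox", "area", "iscrowd")


def make_annotation(ann_id, image_id, category_id, x1, y1, x2, y2):
    """Build one COCO annotation from corner coordinates (x1, y1, x2, y2)."""
    w = x2 - x1
    h = y2 - y1
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "bbox": [x1, y1, w, h],  # COCO order: top-left x, top-left y, width, height
        "area": w * h,           # default value: area of the box
        "iscrowd": 0,            # default value: not a crowd region
    }


def make_image(image_id, file_name, shape):
    """Build one COCO image entry; shape is (height, width, ...) as in img.shape."""
    return {
        "id": image_id,
        "file_name": file_name,  # file name with extension, e.g. xxx.jpg
        "height": shape[0],
        "width": shape[1],
    }


def missing_keys(coco):
    """Return (annotation id, key) pairs for required keys that are absent."""
    return [
        (ann.get("id"), key)
        for ann in coco["annotations"]
        for key in REQUIRED_ANN_KEYS
        if key not in ann
    ]


# Hypothetical sample: one 640x480 image with a single box.
coco = {
    "images": [make_image(1, "xxx.jpg", (480, 640, 3))],
    "annotations": [make_annotation(1, 1, 1, 10, 20, 110, 70)],
    "categories": [{"id": 1, "name": "military"}],
}
coco_json = json.dumps(coco)
```

Running `missing_keys` on a loaded annotation file before training is a cheap way to catch the KeyError case early.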

Registering the dataset

"""
A main training script.

This script reads a given config file and runs the training or evaluation.
It is an entry point that is made to train standard models in detectron2.

In order to let one script support training of many models,
this script contains logic that is specific to these built-in models and therefore
may not be suitable for your own project.
For example, your research project perhaps only needs a single "evaluator".

Therefore, we recommend you to use detectron2 as a library and take
this file as an example of how to use the library.
You may want to write your own script with your datasets and other customizations.
"""

import logging
import os
from collections import OrderedDict

import cv2
import pycocotools  # load_coco_json depends on it; importing here fails early if it is missing

import detectron2.utils.comm as comm
from detectron2.checkpoint import DetectionCheckpointer
from detectron2.config import get_cfg
from detectron2.data import DatasetCatalog, MetadataCatalog
from detectron2.data.datasets.coco import load_coco_json
from detectron2.engine import DefaultTrainer, default_argument_parser, default_setup, hooks, launch
from detectron2.evaluation import (
    CityscapesInstanceEvaluator,
    CityscapesSemSegEvaluator,
    COCOEvaluator,
    COCOPanopticEvaluator,
    DatasetEvaluators,
    LVISEvaluator,
    PascalVOCDetectionEvaluator,
    SemSegEvaluator,
    verify_results,
)
from detectron2.modeling import GeneralizedRCNNWithTTA
from detectron2.utils.visualizer import Visualizer

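# Class names passed to the metadata as thing_classes.
CLASS_NAMES = ["background", "military"]

# Dataset root directory.
DATASET_ROOT = '/home/szr/new_dete/detectron2/datasets'
# Directory holding the COCO-format annotation files.
ANN_ROOT = os.path.join(DATASET_ROOT, 'COCOformat')

# Training and validation images share the same directory.
TRAIN_PATH = os.path.join(DATASET_ROOT, 'JPEGImages')
VAL_PATH = os.path.join(DATASET_ROOT, 'JPEGImages')

TRAIN_JSON = os.path.join(ANN_ROOT, 'train.json')
VAL_JSON = os.path.join(ANN_ROOT, 'val.json')

# Dataset name -> (image root, annotation file).
PREDEFINED_SPLITS_DATASET = {
    "coco_my_train": (TRAIN_PATH, TRAIN_JSON),
    "coco_my_val": (VAL_PATH, VAL_JSON),
}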

def plain_register_dataset():
    """Register the training and validation sets together with their metadata."""
    # Training set.
    DatasetCatalog.register("coco_my_train", lambda: load_coco_json(TRAIN_JSON, TRAIN_PATH))
    MetadataCatalog.get("coco_my_train").set(thing_classes=CLASS_NAMES,
                                             evaluator_type='coco',
                                             json_file=TRAIN_JSON,
                                             image_root=TRAIN_PATH)

    # Validation set.
    DatasetCatalog.register("coco_my_val", lambda: load_coco_json(VAL_JSON, VAL_PATH))
    MetadataCatalog.get("coco_my_val").set(thing_classes=CLASS_NAMES,
                                           evaluator_type='coco',
                                           json_file=VAL_JSON,
                                           image_root=VAL_PATH)

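def checkout_dataset_annotation(name="coco_my_train"):
    """Draw the annotations of the first images into out/ to check them visually."""
    # The training annotations are loaded here, so the default name is the training set.
    dataset_dicts = load_coco_json(TRAIN_JSON, TRAIN_PATH)
    print(len(dataset_dicts))
    # cv2.imwrite does not create missing directories.
    os.makedirs('out', exist_ok=True)
    for i, d in enumerate(dataset_dicts, 0):
        img = cv2.imread(d["file_name"])
        # OpenCV reads BGR; the Visualizer expects RGB.
        visualizer = Visualizer(img[:, :, ::-1], metadata=MetadataCatalog.get(name), scale=1.5)
        vis = visualizer.draw_dataset_dict(d)
        cv2.imwrite('out/' + str(i) + '.jpg', vis.get_image()[:, :, ::-1])
        if i == 200:
            break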

def build_evaluator(cfg, dataset_name, output_folder=None):
    """
    Create evaluator(s) for a given dataset.
    This uses the special metadata "evaluator_type" associated with each builtin dataset.
    For your own dataset, you can simply create an evaluator manually in your
    script and do not have to worry about the hacky if-else logic here.
    """
    if output_folder is None:
        output_folder = os.path.join(cfg.OUTPUT_DIR, "inference")
    evaluator_list = []
    evaluator_type = MetadataCatalog.get(dataset_name).evaluator_type
    if evaluator_type in ["sem_seg", "coco_panoptic_seg"]:
        evaluator_list.append(
            SemSegEvaluator(
                dataset_name,
                distributed=True,
                output_dir=output_folder,
            )
        )
    if evaluator_type in ["coco", "coco_panoptic_seg"]:
        evaluator_list.append(COCOEvaluator(dataset_name, output_dir=output_folder))
    if evaluator_type == "coco_panoptic_seg":
        evaluator_list.append(COCOPanopticEvaluator(dataset_name, output_folder))
    if evaluator_type == "cityscapes_instance":
        return CityscapesInstanceEvaluator(dataset_name)
    if evaluator_type == "cityscapes_sem_seg":
        return CityscapesSemSegEvaluator(dataset_name)
    elif evaluator_type == "pascal_voc":
        return PascalVOCDetectionEvaluator(dataset_name)
    elif evaluator_type == "lvis":
        return LVISEvaluator(dataset_name, output_dir=output_folder)
    if len(evaluator_list) == 0:
        raise NotImplementedError(
            "no Evaluator for the dataset {} with the type {}".format(dataset_name, evaluator_type)
        )
    elif len(evaluator_list) == 1:
        return evaluator_list[0]
    return DatasetEvaluators(evaluator_list)

class Trainer(DefaultTrainer):
    """
    We use the "DefaultTrainer" which contains pre-defined default logic for
    standard training workflow. They may not work for you, especially if you
    are working on a new research project. In that case you can write your
    own training loop. You can use "tools/plain_train_net.py" as an example.
    """

    @classmethod
    def build_evaluator(cls, cfg, dataset_name, output_folder=None):
        return build_evaluator(cfg, dataset_name, output_folder)

    @classmethod
    def test_with_TTA(cls, cfg, model):
        logger = logging.getLogger("detectron2.trainer")
        logger.info("Running inference with test-time augmentation ...")
        model = GeneralizedRCNNWithTTA(cfg, model)
        evaluators = [
            cls.build_evaluator(
                cfg, name, output_folder=os.path.join(cfg.OUTPUT_DIR, "inference_TTA")
            )
            for name in cfg.DATASETS.TEST
        ]
        res = cls.test(cfg, model, evaluators)
        res = OrderedDict({k + "_TTA": v for k, v in res.items()})
        return res

def setup(args):
    """
    Create configs and perform basic setups.
    """
    cfg = get_cfg()
    # Hard-coded config path; it overrides any --config-file given on the command line.
    args.config_file = "/home/szr/new_dete/detectron2/configs/COCO-Detection/retinanet_R_50_FPN_3x.yaml"
    cfg.merge_from_file(args.config_file)
    cfg.merge_from_list(args.opts)

    # Datasets registered in plain_register_dataset().
    cfg.DATASETS.TRAIN = ("coco_my_train",)
    cfg.DATASETS.TEST = ("coco_my_val",)
    cfg.DATALOADER.NUM_WORKERS = 4

    # Input size and augmentation.
    cfg.INPUT.CROP.ENABLED = True
    cfg.INPUT.MAX_SIZE_TRAIN = 640
    cfg.INPUT.MAX_SIZE_TEST = 640
    cfg.INPUT.MIN_SIZE_TRAIN = (512, 768)
    cfg.INPUT.MIN_SIZE_TEST = 640
    cfg.INPUT.MIN_SIZE_TRAIN_SAMPLING = 'range'

    # Model: number of classes and pretrained weights.
    cfg.MODEL.RETINANET.NUM_CLASSES = 2  # equals len(CLASS_NAMES)
    cfg.MODEL.WEIGHTS = "detectron2://COCO-Detection/retinanet_R_50_FPN_3x/190397829/model_final_5bd44e.pkl"

    # Solver. 1120 is presumably the number of training images.
    cfg.SOLVER.IMS_PER_BATCH = 4
    ITERS_IN_ONE_EPOCH = int(1120 / cfg.SOLVER.IMS_PER_BATCH)
    cfg.SOLVER.MAX_ITER = (ITERS_IN_ONE_EPOCH * 12) - 1  # 12 epochs
    cfg.SOLVER.BASE_LR = 0.002
    cfg.SOLVER.MOMENTUM = 0.9
    cfg.SOLVER.WEIGHT_DECAY = 0.0001
    cfg.SOLVER.WEIGHT_DECAY_NORM = 0.0
    cfg.SOLVER.GAMMA = 0.1
    cfg.SOLVER.STEPS = (7000,)
    cfg.SOLVER.WARMUP_FACTOR = 1.0 / 1000
    cfg.SOLVER.WARMUP_ITERS = 1000
    cfg.SOLVER.WARMUP_METHOD = "linear"

    # Save a checkpoint and run evaluation roughly once per epoch.
    cfg.SOLVER.CHECKPOINT_PERIOD = ITERS_IN_ONE_EPOCH - 1
    cfg.TEST.EVAL_PERIOD = ITERS_IN_ONE_EPOCH

    cfg.freeze()
    default_setup(cfg, args)
    return cfg

def main(args):
    cfg = setup(args)

    if args.eval_only:
        model = Trainer.build_model(cfg)
        DetectionCheckpointer(model, save_dir=cfg.OUTPUT_DIR).resume_or_load(
            cfg.MODEL.WEIGHTS, resume=args.resume
        )
        res = Trainer.test(cfg, model)
        if cfg.TEST.AUG.ENABLED:
            res.update(Trainer.test_with_TTA(cfg, model))
        if comm.is_main_process():
            verify_results(cfg, res)
        return res

    """
    If you'd like to do anything fancier than the standard training logic,
    consider writing your own training loop (see plain_train_net.py) or
    subclassing the trainer.
    """
    trainer = Trainer(cfg)
    trainer.resume_or_load(resume=args.resume)
    if cfg.TEST.AUG.ENABLED:
        trainer.register_hooks(
            [hooks.EvalHook(0, lambda: trainer.test_with_TTA(cfg, trainer.model))]
        )
    return trainer.train()

if __name__ == "__main__":
    args = default_argument_parser().parse_args()
    # Register the custom datasets before training starts.
    plain_register_dataset()
    print("Command Line Args:", args)
    launch(
        main,
        args.num_gpus,
        num_machines=args.num_machines,
        machine_rank=args.machine_rank,
        dist_url=args.dist_url,
        args=(args,),
    )
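
The schedule values in setup() follow from simple arithmetic on the dataset size, and it can help to print them before starting a long run. The sketch below only reproduces that arithmetic in plain Python; the helper name `schedule` is hypothetical, and 1120 is assumed to be the number of training images, as the constant in setup() suggests.

```python
def schedule(num_train_images, ims_per_batch, epochs, steps):
    """Derive iteration-based settings from an epoch-based plan."""
    iters_per_epoch = num_train_images // ims_per_batch
    max_iter = iters_per_epoch * epochs - 1
    return {
        "iters_per_epoch": iters_per_epoch,
        "max_iter": max_iter,
        "checkpoint_period": iters_per_epoch - 1,
        "eval_period": iters_per_epoch,
        # Learning-rate decay steps that fall inside the training run.
        "steps_reached": [s for s in steps if s <= max_iter],
    }


# Same numbers as in setup(): 1120 images, batch size 4, 12 epochs, one decay step at 7000.
plan = schedule(1120, 4, 12, steps=(7000,))
for key, value in plan.items():
    print(key, value)
```

With these numbers, training ends at iteration 3359, so the decay step at 7000 is never reached, and the 1000 warmup iterations cover close to a third of the run. If a learning-rate drop is intended, SOLVER.STEPS would need a value below MAX_ITER.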
