V4L2接口解析

操作步骤

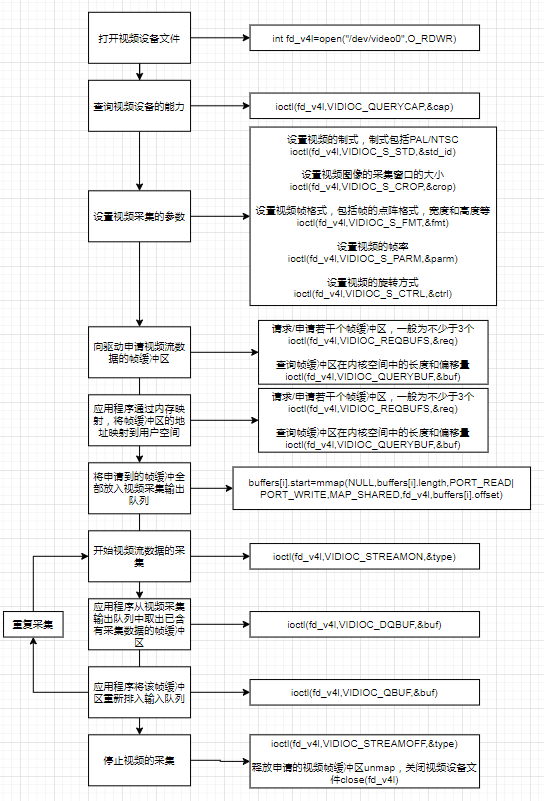

应用程序通过V4L2接口采集视频数据步骤

打开视频设备文件,通过视频采集的参数初始化,通过V4L2接口设置视频图像属性。

申请若干视频采集的帧缓存区,并将这些帧缓冲区从内核空间映射到用户空间,便于应用程序读取/处理视频数据。

将申请到的帧缓冲区在视频采集输入队列排队,并启动视频采集。

驱动开始视频数据的采集,应用程序从视频采集输出队列中取出帧缓冲区,处理后,将帧缓冲区重新放入视频采集输入队列,循环往复采集连续的视频数据。

停止视频采集。

具体实现如下图

图中文字

打开视频设备文件

int fd_v4l=open("/dev/video0",O_RDWR)

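Query the capabilities of the video device

ioctl(fd_v4l,VIDIOC_QUERYCAP,&cap)

Set the video capture parameters

Set the video standard, such as PAL or NTSC

ioctl(fd_v4l,VIDIOC_S_STD,&std_id)

Set the size of the capture window

ioctl(fd_v4l,VIDIOC_S_CROP,&crop)

Set the video frame format, including the pixel format, width, and height

ioctl(fd_v4l,VIDIOC_S_FMT,&fmt)

Set the video frame rate

ioctl(fd_v4l,VIDIOC_S_PARM,&parm)

Set the video rotation mode

ioctl(fd_v4l,VIDIOC_S_CTRL,&ctrl)

Request frame buffers for the video stream from the driver

Request several frame buffers, usually at least 3

ioctl(fd_v4l,VIDIOC_REQBUFS,&req)

Query the length and offset of each frame buffer in kernel space

ioctl(fd_v4l,VIDIOC_QUERYBUF,&buf)

Map the frame buffers into user space with mmap

buffers[i].start=mmap(NULL,buffers[i].length,PROT_READ|PROT_WRITE,MAP_SHARED,fd_v4l,buffers[i].offset)

Put all requested frame buffers on the capture input queue

ioctl(fd_v4l,VIDIOC_QBUF,&buf)

Start capturing the video stream

ioctl(fd_v4l,VIDIOC_STREAMON,&type)

Dequeue a frame buffer that already holds captured data from the capture output queue

ioctl(fd_v4l,VIDIOC_DQBUF,&buf)

Put that frame buffer back on the input queue

ioctl(fd_v4l,VIDIOC_QBUF,&buf)

Stop video capture

ioctl(fd_v4l,VIDIOC_STREAMOFF,&type)

Release the frame buffers with munmap and close the video device file with close(fd_v4l)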

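ioctl commands

VIDIOC_QUERYCAP Query the capabilities of the device

VIDIOC_ENUM_FMT Enumerate the frame formats the device supports

VIDIOC_S_FMT Set the video frame format; uses struct v4l2_format

VIDIOC_G_FMT Get the current video frame format

VIDIOC_REQBUFS Request several frame buffers, usually at least 3

VIDIOC_QUERYBUF Query the length and offset of a frame buffer in kernel space

VIDIOC_QBUF Put a frame buffer on the capture input queue

VIDIOC_STREAMON Start capturing the video stream

VIDIOC_DQBUF Dequeue a frame buffer that already holds captured data from the capture output queue

VIDIOC_STREAMOFF Stop capturing the video stream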

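Related structures

1.VIDIOC_QUERYCAP------>struct v4l2_capability

struct v4l2_capability {

__u8 driver[16]; // name of the driver module

__u8 card[32]; // name of the device

__u8 bus_info[32]; // bus information

__u32 version; // driver version (KERNEL_VERSION encoding)

__u32 capabilities; // capabilities of the physical device as a whole

__u32 device_caps; // capabilities accessed through this particular device node

__u32 reserved[3];

};

The capabilities field (0x04000001 in this example) can be ANDed with the capability macros to find out what the physical device supports. For example:

//#define V4L2_CAP_VIDEO_CAPTURE 0x00000001 /* Is a video capture device */ // whether video capture is supported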

if ((cap.capabilities & V4L2_CAP_VIDEO_CAPTURE) == V4L2_CAP_VIDEO_CAPTURE)

{

printf("Device %s: supports capture.\n", FILE_VIDEO);

}

//#define V4L2_CAP_STREAMING 0x04000000 /* streaming I/O ioctls */ // whether streaming I/O is supported

if ((cap.capabilities & V4L2_CAP_STREAMING) == V4L2_CAP_STREAMING)

{

printf("Device %s: supports streaming.\n", FILE_VIDEO);

}

2.VIDIOC_ENUM_FMT------>struct v4l2_fmtdesc

struct v4l2_fmtdesc {

__u32 index; /* Format number */

__u32 type; /* enum v4l2_buf_type */

__u32 flags;

__u8 description[32]; /* Description string */

__u32 pixelformat; /* Format fourcc */

__u32 reserved[4];

};

This structure can be used to list the video frame formats that the camera supports.

struct v4l2_fmtdesc fmtdesc;

fmtdesc.index = 0;

fmtdesc.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

printf("Support format:\n");

while(ioctl(fd, VIDIOC_ENUM_FMT, &fmtdesc) != -1)

{

printf("\t%d.%s\n",fmtdesc.index+1,fmtdesc.description);

fmtdesc.index++;

}

3.VIDIOC_S_FMT&VIDIOC_G_FMT------>struct v4l2_format

Get or set the video frame format

struct v4l2_format {

__u32 type; // buffer type (enum v4l2_buf_type)

union {

/* V4L2_BUF_TYPE_VIDEO_CAPTURE */

struct v4l2_pix_format pix; // pixel format

/* V4L2_BUF_TYPE_VIDEO_CAPTURE_MPLANE */

struct v4l2_pix_format_mplane pix_mp;

/* V4L2_BUF_TYPE_VIDEO_OVERLAY */

struct v4l2_window win;

/* V4L2_BUF_TYPE_VBI_CAPTURE */

struct v4l2_vbi_format vbi;

/* V4L2_BUF_TYPE_SLICED_VBI_CAPTURE */

struct v4l2_sliced_vbi_format sliced;

/* V4L2_BUF_TYPE_SDR_CAPTURE */

struct v4l2_sdr_format sdr;

/* user-defined */

__u8 raw_data[200];

} fmt;

};

struct v4l2_pix_format {

__u32 width; // image width in pixels

__u32 height; // image height in pixels

__u32 pixelformat; // pixel format (FourCC code)

__u32 field; /* enum v4l2_field */

__u32 bytesperline; /* for padding, zero if unused */

__u32 sizeimage;

__u32 colorspace; /* enum v4l2_colorspace */

__u32 priv; /* private data, depends on pixelformat */

__u32 flags; /* format flags (V4L2_PIX_FMT_FLAG_*) */

__u32 ycbcr_enc; /* enum v4l2_ycbcr_encoding */

__u32 quantization; /* enum v4l2_quantization */

__u32 xfer_func; /* enum v4l2_xfer_func */

};

4.VIDIOC_CROPCAP------>struct v4l2_cropcap

5.VIDIOC_G_PARM&VIDIOC_S_PARM------>struct v4l2_streamparm

Sets the stream parameters, mainly the frame rate.

struct v4l2_streamparm {

__u32 type; /* enum v4l2_buf_type */

union {

struct v4l2_captureparm capture;

struct v4l2_outputparm output;

__u8 raw_data[200]; /* user-defined */

} parm;

};

struct v4l2_captureparm {

/* Supported modes */

__u32 capability;

/* Current mode */

__u32 capturemode;

/* Time per frame in seconds */

struct v4l2_fract timeperframe;

/* Driver-specific extensions */

__u32 extendedmode;

/* # of buffers for read */

__u32 readbuffers;

__u32 reserved[4];

};

// timeperframe

// the time per frame is numerator/denominator seconds, i.e. denominator frames every numerator seconds

struct v4l2_fract {

__u32 numerator; // numerator

__u32 denominator; // denominator

};

6.VIDIOC_REQBUFS------>struct v4l2_requestbuffers

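Requests and manages buffers. An application and a device can exchange data in three ways: direct read/write, memory mapping (mmap), and user pointers. Memory mapping is the method normally used and is the one used in the examples here.

struct v4l2_requestbuffers {

__u32 count; // number of frame buffers requested

__u32 type; // buffer type (enum v4l2_buf_type)

__u32 memory; // I/O method (enum v4l2_memory)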

__u32 reserved[2];

};

enum v4l2_memory {

V4L2_MEMORY_MMAP = 1, // memory mapping

V4L2_MEMORY_USERPTR = 2, // user pointer

V4L2_MEMORY_OVERLAY = 3,

V4L2_MEMORY_DMABUF = 4,

};

7.VIDIOC_QUERYBUF------>struct v4l2_buffer

struct v4l2_buffer {

__u32 index; // index of the buffer

__u32 type; // enum v4l2_buf_type

__u32 bytesused; // number of bytes of data in the buffer

__u32 flags; // buffer flags (V4L2_BUF_FLAG_*)

__u32 field;

struct timeval timestamp; // frame timestamp

struct v4l2_timecode timecode;

__u32 sequence; // frame sequence number

/* memory location */

__u32 memory;

union {

__u32 offset; // offset of the buffer from the start of device memory (mmap)

unsigned long userptr; // pointer into user space (user pointer I/O)

struct v4l2_plane *planes;

__s32 fd;

} m;

__u32 length; // size of the buffer in bytes

__u32 reserved2;

__u32 reserved;

};

8.VIDIOC_DQBUF

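The application dequeues a frame buffer that already holds captured data from the capture output queue.

9.VIDIOC_STREAMON&VIDIOC_STREAMOFF

Start and stop video capture.

10.VIDIOC_QBUF

The application puts the frame buffer back on the input queue.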

Capturing an image with the V4L2 interface

YUYV-format camera

v4l2grab.c

#include <unistd.h>

#include <sys/types.h>

#include <sys/stat.h>

#include <fcntl.h>

#include <stdio.h>

#include <sys/ioctl.h>

#include <stdlib.h>

#include <sys/mman.h>

#include <linux/types.h>

#include <linux/videodev2.h>

#include "v4l2grab.h"

#define TRUE 1

#define FALSE 0

#define FILE_VIDEO "/dev/video0"

#if 1

#define BMP "/usr/image_bmp.bmp"

#define YUV "/usr/image_yuv.yuv"

#else

#define BMP "//home/book/100ask_roc-rk3399-pc/USBvideo/lab_v4l2_yuyv/image_bmp.bmp"

#define YUV "//home/book/100ask_roc-rk3399-pc/USBvideo/lab_v4l2_yuyv/image_yuv.yuv"

#endif

#if 1

#define IMAGEWIDTH 640

#define IMAGEHEIGHT 480

#else

#define IMAGEWIDTH 1920

#define IMAGEHEIGHT 1080

#endif

static int fd;

static struct v4l2_capability cap;

struct v4l2_fmtdesc fmtdesc;

struct v4l2_format fmt,fmtack;

struct v4l2_streamparm setfps;

struct v4l2_requestbuffers req;

struct v4l2_buffer buf;

enum v4l2_buf_type type;

/* Buffer for the converted RGB data */

unsigned char frame_buffer[IMAGEWIDTH*IMAGEHEIGHT*3];

struct buffer

{

void * start;

unsigned int length;

} * buffers;

int init_v4l2(void)

{

int i;

int ret = 0;

//opendev

if ((fd = open(FILE_VIDEO, O_RDWR)) == -1)

{

printf("Error opening V4L interface\n");

return (FALSE);

}

//query cap

/*

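* Read the device capabilities. Calling ioctl with VIDIOC_QUERYCAP queries information about the camera.

* struct v4l2_capability contains the driver name (driver), card, bus_info, version, and the capabilities bit field.

* Here we check that the device is a video capture device (V4L2_CAP_VIDEO_CAPTURE) and that it supports streaming I/O (V4L2_CAP_STREAMING).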

*/

if (ioctl(fd, VIDIOC_QUERYCAP, &cap) == -1)

{

printf("Error opening device %s: unable to query device.\n",FILE_VIDEO);

return (FALSE);

}

else

{

printf("driver:\t\t%s\n",cap.driver);

printf("card:\t\t%s\n",cap.card);

printf("bus_info:\t%s\n",cap.bus_info);

printf("version:\t%d\n",cap.version);

printf("capabilities:\t%x\n",cap.capabilities);

if ((cap.capabilities & V4L2_CAP_VIDEO_CAPTURE) == V4L2_CAP_VIDEO_CAPTURE)

{

printf("Device %s: supports capture.\n",FILE_VIDEO);

}

if ((cap.capabilities & V4L2_CAP_STREAMING) == V4L2_CAP_STREAMING)

{

printf("Device %s: supports streaming.\n",FILE_VIDEO);

}

}

//emu all support fmt

/*

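* Enumerate the pixel formats the camera supports with VIDIOC_ENUM_FMT; the results are returned in struct v4l2_fmtdesc.

* This step matters because different cameras support different formats. V4L2 defines many formats; see /usr/include/linux/videodev2.h.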

*/

fmtdesc.index=0;

fmtdesc.type=V4L2_BUF_TYPE_VIDEO_CAPTURE;

printf("Support format:\n");

while(ioctl(fd,VIDIOC_ENUM_FMT,&fmtdesc)!=-1)

{

printf("\t%d.%s\n",fmtdesc.index+1,fmtdesc.description);

fmtdesc.index++;

}

//set fmt

/*

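* Set the pixel format. Most USB cameras support YUYV, and some support other formats as well. Having enumerated the supported formats in the previous step, we now select one.

* VIDIOC_S_FMT takes a struct v4l2_format; here the pixel format is set to V4L2_PIX_FMT_YUYV and the height and width to IMAGEHEIGHT and IMAGEWIDTH.

* A camera generally accepts only the formats it advertises: switching a camera that supports only YUYV and MJPEG to RGB24 or JPEG did not work in my tests.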

*/

fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;// V4L2_PIX_FMT_MJPEG is worth trying here too, wzp

fmt.fmt.pix.height = IMAGEHEIGHT;

fmt.fmt.pix.width = IMAGEWIDTH;

fmt.fmt.pix.field = V4L2_FIELD_INTERLACED;

if(ioctl(fd, VIDIOC_S_FMT, &fmt) == -1)

{

printf("Unable to set format\n");

return FALSE;

}

if(ioctl(fd, VIDIOC_G_FMT, &fmt) == -1)

{

printf("Unable to get format\n");

return FALSE;

}

/* To confirm that the format was actually applied to the camera, read the settings back with VIDIOC_G_FMT. */

{

printf("fmt.type:\t\t%d\n",fmt.type);

printf("pix.pixelformat:\t%c%c%c%c\n",fmt.fmt.pix.pixelformat & 0xFF, (fmt.fmt.pix.pixelformat >> 8) & 0xFF,(fmt.fmt.pix.pixelformat >> 16) & 0xFF, (fmt.fmt.pix.pixelformat >> 24) & 0xFF);

printf("pix.height:\t\t%d\n",fmt.fmt.pix.height);

printf("pix.width:\t\t%d\n",fmt.fmt.pix.width);

printf("pix.field:\t\t%d\n",fmt.fmt.pix.field);

}

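//set fps: timeperframe is numerator/denominator seconds per frame, so 1/30 requests 30 fps

setfps.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

setfps.parm.capture.timeperframe.numerator = 1;

setfps.parm.capture.timeperframe.denominator = 30;

if (ioctl(fd, VIDIOC_S_PARM, &setfps) == -1)

{

printf("Unable to set frame rate\n"); /* not fatal: the driver keeps its default rate */

}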

printf("init %s \t[OK]\n",FILE_VIDEO);

return TRUE;

}

int v4l2_grab(void)

{

unsigned int n_buffers;

printf("start grab yuyv\n");

//request for 4 buffers

/* Request buffers with VIDIOC_REQBUFS and struct v4l2_requestbuffers. The structure specifies how many buffers to allocate; the driver writes the number it actually granted back to req.count. */

req.count=4;

req.type=V4L2_BUF_TYPE_VIDEO_CAPTURE;

req.memory=V4L2_MEMORY_MMAP;

if(ioctl(fd,VIDIOC_REQBUFS,&req)==-1)

{

printf("request for buffers error\n");

return(FALSE);

}

//mmap for buffers

buffers = malloc(req.count*sizeof (*buffers));

if (!buffers)

{

printf ("Out of memory\n");

return(FALSE);

}

for (n_buffers = 0; n_buffers < req.count; n_buffers++)

{

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

buf.index = n_buffers;

//query buffers

if (ioctl (fd, VIDIOC_QUERYBUF, &buf) == -1)

{

printf("query buffer error\n");

return(FALSE);

}

buffers[n_buffers].length = buf.length;

//map

buffers[n_buffers].start = mmap(NULL,buf.length,PROT_READ |PROT_WRITE, MAP_SHARED, fd, buf.m.offset);

if (buffers[n_buffers].start == MAP_FAILED)

{

printf("buffer map error\n");

return(FALSE);

}

}

//queue

for (n_buffers = 0; n_buffers < req.count; n_buffers++)

{

buf.index = n_buffers;

ioctl(fd, VIDIOC_QBUF, &buf);

}

/* Start capturing with VIDIOC_STREAMON. */

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

printf("start grab yuyv 1\n");

ioctl (fd, VIDIOC_STREAMON, &type);

printf("start grab yuyv 2\n");

/* Dequeue a filled buffer with VIDIOC_DQBUF. The frame data is at buffers[buf.index].start. */

ioctl(fd, VIDIOC_DQBUF, &buf);

printf("start grab yuyv 3\n");

printf("grab yuyv OK\n");

return(TRUE);

}

/* The captured image is in YUYV format, so it needs a color-space conversion; this function does that. */

int yuyv_2_rgb888(void)

{

int i,j;

unsigned char y1,y2,u,v;

int r1,g1,b1,r2,g2,b2;

char * pointer;

pointer = buffers[buf.index].start; /* the buffer VIDIOC_DQBUF just returned */

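/* The RGB data is later stored in a BMP file, where pixel rows are stored bottom-up (left to right within a row). frame_buffer is therefore filled starting from the leftmost pixel of the last row, moving up one row each time a row is complete. */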

for(i=0;i<IMAGEHEIGHT;i++)

{

for(j=0;j<(IMAGEWIDTH/2);j++)

{

y1 = *( pointer + (i*(IMAGEWIDTH/2)+j)*4);

u = *( pointer + (i*(IMAGEWIDTH/2)+j)*4 + 1);

y2 = *( pointer + (i*(IMAGEWIDTH/2)+j)*4 + 2);

v = *( pointer + (i*(IMAGEWIDTH/2)+j)*4 + 3);

r1 = y1 + 1.402*(v-128);

g1 = y1 - 0.34414*(u-128) - 0.71414*(v-128);

b1 = y1 + 1.772*(u-128);

r2 = y2 + 1.402*(v-128);

g2 = y2 - 0.34414*(u-128) - 0.71414*(v-128);

b2 = y2 + 1.772*(u-128);

if(r1>255)

r1 = 255;

else if(r1<0)

r1 = 0;

if(b1>255)

b1 = 255;

else if(b1<0)

b1 = 0;

if(g1>255)

g1 = 255;

else if(g1<0)

g1 = 0;

if(r2>255)

r2 = 255;

else if(r2<0)

r2 = 0;

if(b2>255)

b2 = 255;

else if(b2<0)

b2 = 0;

if(g2>255)

g2 = 255;

else if(g2<0)

g2 = 0;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 ) = (unsigned char)b1;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 + 1) = (unsigned char)g1;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 + 2) = (unsigned char)r1;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 + 3) = (unsigned char)b2;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 + 4) = (unsigned char)g2;

*(frame_buffer + ((IMAGEHEIGHT-1-i)*(IMAGEWIDTH/2)+j)*6 + 5) = (unsigned char)r2;

}

}

printf("change to RGB OK \n");

return(TRUE);

}

int close_v4l2(void)

{

if(fd != -1)

{

close(fd);

return (TRUE);

}

return (FALSE);

}

/*

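* First, open the video device file, initialize the capture parameters, and set the capture window, frame size, and format through the V4L2 interface;

* Second, request several frame buffers and map them from kernel space into user space so the application can read and process the video data;

* Third, queue the requested frame buffers on the capture input queue and start video capture;

* Fourth, the driver starts capturing; the application dequeues a frame buffer from the capture output queue, processes it, and puts it back on the input queue, repeating to capture continuous video;

* Fifth, stop video capture.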

*/

int main(void)

{

FILE * fp1,* fp2;

int grab;

BITMAPFILEHEADER bf;

BITMAPINFOHEADER bi;

fp1 = fopen(BMP, "wb");

if(!fp1)

{

printf("open "BMP" error\n");

return(FALSE);

}

fp2 = fopen(YUV, "wb");

if(!fp2)

{

printf("open "YUV"error\n");

return(FALSE);

}

if(init_v4l2() == FALSE)

{

return(FALSE);

}

//Set BITMAPINFOHEADER

bi.biSize = 40;

bi.biWidth = IMAGEWIDTH;

bi.biHeight = IMAGEHEIGHT;

bi.biPlanes = 1;

bi.biBitCount = 24;

bi.biCompression = 0;

bi.biSizeImage = IMAGEWIDTH*IMAGEHEIGHT*3;

bi.biXPelsPerMeter = 0;

bi.biYPelsPerMeter = 0;

bi.biClrUsed = 0;

bi.biClrImportant = 0;

//Set BITMAPFILEHEADER

bf.bfType = 0x4d42;

bf.bfSize = 54 + bi.biSizeImage;

bf.bfReserved = 0;

bf.bfOffBits = 54;

grab=v4l2_grab();

printf("GRAB is %d\n",grab);

printf("grab ok\n");

fwrite(buffers[buf.index].start, IMAGEHEIGHT*IMAGEWIDTH*2,1, fp2);

printf("save "YUV"OK\n");

yuyv_2_rgb888();

fwrite(&bf, 14, 1, fp1);

fwrite(&bi, 40, 1, fp1);

fwrite(frame_buffer, bi.biSizeImage, 1, fp1);

printf("save "BMP"OK\n");

fclose(fp1);

fclose(fp2);

close_v4l2();

return(TRUE);

}
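The conversion in yuyv_2_rgb888 can also be written per pixel pair, which makes the YUYV layout (Y0 U Y1 V, two pixels sharing one U and one V) easier to see. This is a sketch using the same full-range BT.601 coefficients; the function names are hypothetical, and the output is in B, G, R byte order as BMP expects.

```c
/* Clamp an intermediate result into the 0..255 range of one color byte. */
static unsigned char clamp_u8(int v)
{
    return (unsigned char)(v < 0 ? 0 : (v > 255 ? 255 : v));
}

/* Convert one Y/U/V sample to a B, G, R triple. */
void yuv_to_bgr(unsigned char y, unsigned char u, unsigned char v,
                unsigned char bgr[3])
{
    int d = u - 128;
    int e = v - 128;

    bgr[0] = clamp_u8((int)(y + 1.772 * d));
    bgr[1] = clamp_u8((int)(y - 0.34414 * d - 0.71414 * e));
    bgr[2] = clamp_u8((int)(y + 1.402 * e));
}

/* Convert one row of packed YUYV (width pixels, width even) to BGR24. */
void yuyv_row_to_bgr(const unsigned char *src, unsigned char *dst, int width)
{
    for (int x = 0; x + 1 < width; x += 2) {
        const unsigned char *p = src + 2 * x;   /* Y0 U Y1 V */

        yuv_to_bgr(p[0], p[1], p[3], dst + 3 * x);
        yuv_to_bgr(p[2], p[1], p[3], dst + 3 * x + 3);
    }
}
```

To produce the bottom-up row order of a BMP file, a caller would pass the rows in reverse order.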

v4l2grab.h

/****************************************************/

/* */

/* v4lgrab.h */

/* */

/****************************************************/

#ifndef __V4LGRAB_H__

#define __V4LGRAB_H__

#include <stdio.h>

#define FREE(x) if((x)){free((x));(x)=NULL;}

typedef unsigned char BYTE;

typedef unsigned short WORD;

typedef unsigned int DWORD; // 32 bits; unsigned long is 64 bits on 64-bit Linux and would break the BMP header layout

/**/

#pragma pack(1)

typedef struct tagBITMAPFILEHEADER{

WORD bfType; // the flag of bmp, value is "BM"

DWORD bfSize; // size BMP file ,unit is bytes

DWORD bfReserved; // 0

DWORD bfOffBits; // must be 54

}BITMAPFILEHEADER;

typedef struct tagBITMAPINFOHEADER{

DWORD biSize; // must be 0x28

DWORD biWidth; //

DWORD biHeight; //

WORD biPlanes; // must be 1

WORD biBitCount; //

DWORD biCompression; //

DWORD biSizeImage; //

DWORD biXPelsPerMeter; //

DWORD biYPelsPerMeter; //

DWORD biClrUsed; //

DWORD biClrImportant; //

}BITMAPINFOHEADER;

typedef struct tagRGBQUAD{

BYTE rgbBlue;

BYTE rgbGreen;

BYTE rgbRed;

BYTE rgbReserved;

}RGBQUAD;

#if defined(__cplusplus)

extern "C" { /* Make sure we have C-declarations in C++ programs */

#endif

//if successful return 1,or else return 0

int openVideo();

int closeVideo();

//data: buffer that receives the data; size: size of that buffer

void getVideoData(unsigned char *data, int size);

#if defined(__cplusplus)

}

#endif

#endif //__V4LGRAB_H___
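The program writes the two headers with fwrite(&bf, 14, ...) and fwrite(&bi, 40, ...), so the structures must be exactly 14 and 40 bytes. A sketch with fixed-width types and compile-time size checks is shown below; the type and function names are hypothetical, and the row stride is rounded up to a multiple of 4 bytes as the BMP format requires.

```c
#include <stdint.h>

#pragma pack(push, 1)
typedef struct {
    uint16_t bfType;        /* 0x4d42, the characters "BM" */
    uint32_t bfSize;        /* total file size in bytes */
    uint32_t bfReserved;    /* two reserved 16-bit fields, both 0 */
    uint32_t bfOffBits;     /* offset of the pixel data: 54 */
} BmpFileHeader;

typedef struct {
    uint32_t biSize;        /* size of this header: 40 */
    int32_t  biWidth;
    int32_t  biHeight;      /* positive: rows stored bottom-up */
    uint16_t biPlanes;      /* always 1 */
    uint16_t biBitCount;    /* 24 for BGR24 */
    uint32_t biCompression; /* 0 = uncompressed */
    uint32_t biSizeImage;
    int32_t  biXPelsPerMeter;
    int32_t  biYPelsPerMeter;
    uint32_t biClrUsed;
    uint32_t biClrImportant;
} BmpInfoHeader;
#pragma pack(pop)

_Static_assert(sizeof(BmpFileHeader) == 14, "file header must be 14 bytes");
_Static_assert(sizeof(BmpInfoHeader) == 40, "info header must be 40 bytes");

/* Fill both headers for an uncompressed 24-bit image. */
void bmp_headers_init(BmpFileHeader *bf, BmpInfoHeader *bi,
                      int32_t width, int32_t height)
{
    uint32_t stride = ((uint32_t)width * 3u + 3u) & ~3u; /* rows padded to 4 bytes */

    bi->biSize = 40;
    bi->biWidth = width;
    bi->biHeight = height;
    bi->biPlanes = 1;
    bi->biBitCount = 24;
    bi->biCompression = 0;
    bi->biSizeImage = stride * (uint32_t)height;
    bi->biXPelsPerMeter = 0;
    bi->biYPelsPerMeter = 0;
    bi->biClrUsed = 0;
    bi->biClrImportant = 0;

    bf->bfType = 0x4d42;
    bf->bfSize = 54 + bi->biSizeImage;
    bf->bfReserved = 0;
    bf->bfOffBits = 54;
}
```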

MJPG-format camera

v4l2grab_mjpg.c

#include <unistd.h>

#include <sys/types.h>

#include <sys/stat.h>

#include <fcntl.h>

#include <stdio.h>

#include <sys/ioctl.h>

#include <stdlib.h>

#include <sys/mman.h>

#include <linux/types.h>

#include <linux/videodev2.h>

#include <string.h>

#include "v4l2grab.h"

#define TRUE 1

#define FALSE 0

#define FILE_VIDEO "/dev/video0"

#if 1

#define JPG "/usr/image_jpg.jpg"

#define BMP "/usr/image_bmp.bmp"

#define YUV "/usr/image_yuv.yuv"

#else

#define BMP "//home/book/100ask_roc-rk3399-pc/USBvideo/lab_v4l2_yuyv_fix/image_bmp.bmp"

#define YUV "//home/book/100ask_roc-rk3399-pc/USBvideo/lab_v4l2_yuyv_fix/image_yuv.yuv"

#endif

#if 1

#define IMAGEWIDTH 640

#define IMAGEHEIGHT 480

#else

//#define IMAGEWIDTH 1920

//#define IMAGEHEIGHT 1080

#define IMAGEWIDTH 3840

#define IMAGEHEIGHT 2160

#endif

static int fd;

static struct v4l2_capability cap;

struct v4l2_fmtdesc fmtdesc;

struct v4l2_format fmt,fmtack;

struct v4l2_streamparm setfps;

struct v4l2_requestbuffers req;

struct v4l2_buffer buf;

enum v4l2_buf_type type;

/* Buffer for the converted RGB data */

unsigned char frame_buffer[IMAGEWIDTH*IMAGEHEIGHT*3];

struct buffer

{

void * start;

unsigned int length;

} * buffers;

int init_v4l2(void)

{

int i;

int ret = 0;

//opendev

if ((fd = open(FILE_VIDEO, O_RDWR)) == -1)

{

printf("Error opening V4L interface\n");

return (FALSE);

}

//query cap

/*

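* Read the device capabilities. Calling ioctl with VIDIOC_QUERYCAP queries information about the camera.

* struct v4l2_capability contains the driver name (driver), card, bus_info, version, and the capabilities bit field.

* Here we check that the device is a video capture device (V4L2_CAP_VIDEO_CAPTURE) and that it supports streaming I/O (V4L2_CAP_STREAMING).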

*/

if (ioctl(fd, VIDIOC_QUERYCAP, &cap) == -1)

{

printf("Error opening device %s: unable to query device.\n",FILE_VIDEO);

return (FALSE);

}

else

{

printf("driver:\t\t%s\n",cap.driver);

printf("card:\t\t%s\n",cap.card);

printf("bus_info:\t%s\n",cap.bus_info);

printf("version:\t%d\n",cap.version);

printf("capabilities:\t%x\n",cap.capabilities);// test against V4L2_CAP_VIDEO_CAPTURE to see whether image capture is supported

printf("device_caps:\t%x\n",cap.device_caps);

if ((cap.capabilities & V4L2_CAP_VIDEO_CAPTURE) == V4L2_CAP_VIDEO_CAPTURE)

{

printf("Device %s: supports capture.\n",FILE_VIDEO);

}

if ((cap.capabilities & V4L2_CAP_STREAMING) == V4L2_CAP_STREAMING)

{

printf("Device %s: supports streaming.\n",FILE_VIDEO);

}

}

//emu all support fmt

/*

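* Enumerate the pixel formats the camera supports with VIDIOC_ENUM_FMT; the results are returned in struct v4l2_fmtdesc.

* This step matters because different cameras support different formats. V4L2 defines many formats; see /usr/include/linux/videodev2.h.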

*/

fmtdesc.index=0;

fmtdesc.type=V4L2_BUF_TYPE_VIDEO_CAPTURE;

printf("Support format:\n");

while(ioctl(fd,VIDIOC_ENUM_FMT,&fmtdesc)!=-1)

{

printf("\t%d.%s\n",fmtdesc.index+1,fmtdesc.description);

fmtdesc.index++;

}

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;// initialize

struct v4l2_fmtdesc fmt_1;

struct v4l2_frmsizeenum frmsize;

struct v4l2_frmivalenum frmival;

fmt_1.index = 0; // index

fmt_1.type = type;

while (ioctl(fd, VIDIOC_ENUM_FMT, &fmt_1) >= 0) {

frmsize.pixel_format = fmt_1.pixelformat;

frmsize.index = 0;

while (ioctl(fd, VIDIOC_ENUM_FRAMESIZES, &frmsize) >= 0){

if(frmsize.type == V4L2_FRMSIZE_TYPE_DISCRETE){

printf("line:%d %dx%d\n",__LINE__, frmsize.discrete.width, frmsize.discrete.height);

}else if(frmsize.type == V4L2_FRMSIZE_TYPE_STEPWISE){

printf("line:%d %dx%d .. %dx%d\n",__LINE__, frmsize.stepwise.min_width, frmsize.stepwise.min_height, frmsize.stepwise.max_width, frmsize.stepwise.max_height);

}

frmsize.index++;

}

fmt_1.index++;

}

//set fmt

/*

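* Set the pixel format. Most USB cameras support YUYV, and some support other formats as well. Having enumerated the supported formats in the previous step, we now select one.

* VIDIOC_S_FMT takes a struct v4l2_format; in this program the pixel format is set to V4L2_PIX_FMT_MJPEG and the height and width to IMAGEHEIGHT and IMAGEWIDTH.

* A camera generally accepts only the formats it advertises: switching a camera that supports only YUYV and MJPEG to RGB24 or JPEG did not work in my tests.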

*/

fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

// fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_MJPEG;//wzp

fmt.fmt.pix.height = IMAGEHEIGHT;

fmt.fmt.pix.width = IMAGEWIDTH;

fmt.fmt.pix.field = V4L2_FIELD_INTERLACED;

// fmt.fmt.pix.field = V4L2_FIELD_NONE;//wzp

if(ioctl(fd, VIDIOC_S_FMT, &fmt) == -1)

{

printf("Unable to set format\n");

return FALSE;

}

if(ioctl(fd, VIDIOC_G_FMT, &fmt) == -1)

{

printf("Unable to get format\n");

return FALSE;

}

/* To confirm that the format was actually applied to the camera, read the settings back with VIDIOC_G_FMT. */

{

printf("fmt.type:\t\t%d\n",fmt.type);

printf("pix.pixelformat:\t%c%c%c%c\n",fmt.fmt.pix.pixelformat & 0xFF, (fmt.fmt.pix.pixelformat >> 8) & 0xFF,(fmt.fmt.pix.pixelformat >> 16) & 0xFF, (fmt.fmt.pix.pixelformat >> 24) & 0xFF);

printf("pix.height:\t\t%d\n",fmt.fmt.pix.height);

printf("pix.width:\t\t%d\n",fmt.fmt.pix.width);

printf("pix.field:\t\t%d\n",fmt.fmt.pix.field);

}

//set fps

/*

setfps.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

setfps.parm.capture.timeperframe.numerator = 10;

setfps.parm.capture.timeperframe.denominator = 10;

*/

printf("init %s \t[OK]\n",FILE_VIDEO);

return TRUE;

}

int v4l2_grab(void)

{

unsigned int n_buffers;

printf("start grab yuyv\n");

//request for 4 buffers

/* Request buffers with VIDIOC_REQBUFS and struct v4l2_requestbuffers. The structure specifies how many buffers to allocate; the driver writes the number it actually granted back to req.count. */

req.count=4;

// req.count=1;//wzp

req.type=V4L2_BUF_TYPE_VIDEO_CAPTURE;

req.memory=V4L2_MEMORY_MMAP;

if(ioctl(fd,VIDIOC_REQBUFS,&req)==-1)

{

printf("request for buffers error\n");

}

//mmap for buffers

buffers = malloc(req.count*sizeof (*buffers));

// buffers = (uchar*)malloc(req.count*sizeof (*buffers));//wzp

if (!buffers)

{

printf ("Out of memory\n");

return(FALSE);

}

//Map the four requested frame buffers into the application and record them in the buffers array

for (n_buffers = 0; n_buffers < req.count; n_buffers++)

{

memset(&buf,0,sizeof(buf));

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

buf.index = n_buffers;

//query buffers

if (ioctl (fd, VIDIOC_QUERYBUF, &buf) == -1)

{

printf("query buffer error\n");

return(FALSE);

}

buffers[n_buffers].length = buf.length;

//map

buffers[n_buffers].start = mmap(NULL,buf.length,PROT_READ |PROT_WRITE, MAP_SHARED, fd, buf.m.offset);

if (buffers[n_buffers].start == MAP_FAILED)

{

printf("buffer map error\n");

return(FALSE);

}

}

//queue

for (n_buffers = 0; n_buffers < req.count; n_buffers++)

{

buf.index = n_buffers;

ioctl(fd, VIDIOC_QBUF, &buf);

}

/* Start capturing with VIDIOC_STREAMON. */

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

ioctl (fd, VIDIOC_STREAMON, &type);

/* Dequeue a filled buffer with VIDIOC_DQBUF. The frame data is at buffers[buf.index].start. */

ioctl(fd, VIDIOC_DQBUF, &buf);

printf("grab yuyv OK\n");

return(TRUE);

}

int close_v4l2(void)

{

if(fd != -1)

{

close(fd);

return (TRUE);

}

return (FALSE);

}

/*

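* First, open the video device file, initialize the capture parameters, and set the capture window, frame size, and format through the V4L2 interface;

* Second, request several frame buffers and map them from kernel space into user space so the application can read and process the video data;

* Third, queue the requested frame buffers on the capture input queue and start video capture;

* Fourth, the driver starts capturing; the application dequeues a frame buffer from the capture output queue, processes it, and puts it back on the input queue, repeating to capture continuous video;

* Fifth, stop video capture.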

*/

int main(void)

{

FILE * fp1;

int grab;

BITMAPFILEHEADER bf;

BITMAPINFOHEADER bi;

fp1 = fopen(JPG, "wb");

if(!fp1)

{

printf("open %s error\n", JPG);

return(FALSE);

}

if(init_v4l2() == FALSE)

{

return(FALSE);

}

grab=v4l2_grab();

printf("GRAB is %d\n",grab);

printf("grab ok\n");

fwrite(buffers[buf.index].start, buf.bytesused, 1, fp1); /* bytesused is the size of the JPEG data; length is the size of the whole buffer */

printf("save %s OK\n", JPG);

fclose(fp1);

close_v4l2();

return(TRUE);

}
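With MJPEG each dequeued buffer holds one complete JPEG image, so it can be written to a .jpg file as is, provided only the bytes actually used are written. The sketch below adds a cheap sanity check for the JPEG start-of-image marker (0xFF 0xD8) before saving; `looks_like_jpeg` and `save_frame` are hypothetical helpers, not part of the original program.

```c
#include <stddef.h>
#include <stdio.h>

/* Non-zero if the data begins with the JPEG start-of-image marker. */
int looks_like_jpeg(const unsigned char *data, size_t len)
{
    return len >= 2 && data[0] == 0xFF && data[1] == 0xD8;
}

/* Write exactly `used` bytes to `path`. Returns 0 on success, -1 on error. */
int save_frame(const char *path, const void *data, size_t used)
{
    FILE *fp = fopen(path, "wb");
    int ok;

    if (fp == NULL)
        return -1;
    ok = (fwrite(data, 1, used, fp) == used);
    if (fclose(fp) != 0)
        ok = 0;
    return ok ? 0 : -1;
}
```

A caller would pass the start address of the dequeued buffer together with `buf.bytesused` from the preceding VIDIOC_DQBUF call.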

使用v4l2接口采集视频

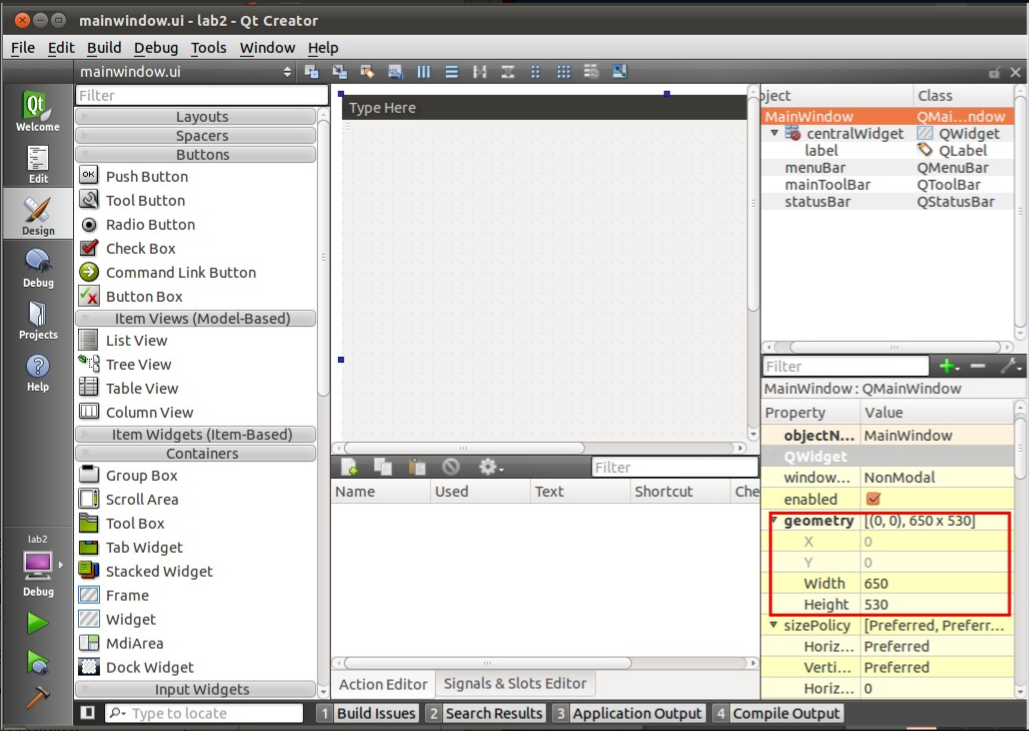

使用QT编程

首先创建一个widget工程,在里面创建一个label

widget.h

#ifndef WIDGET_H

#define WIDGET_H

#include <QWidget>

#include "videodevice.h"

namespace Ui {

class Widget;

}

#define BYTE unsigned char

#define SBYTE signed char

#define SWORD signed short int

#define WORD unsigned short int

#define DWORD unsigned long int

#define SDWORD signed long int

typedef struct tagBITMAPFILEHEADER{

WORD bfType; // the flag of bmp, value is "BM"

DWORD bfSize; // size BMP file ,unit is bytes

DWORD bfReserved; // 0

DWORD bfOffBits; // must be 54

}BITMAPFILEHEADER;

typedef struct tagBITMAPINFOHEADER{

DWORD biSize; // must be 0x28

DWORD biWidth; //

DWORD biHeight; //

WORD biPlanes; // must be 1

WORD biBitCount; //

DWORD biCompression; //

DWORD biSizeImage; //

DWORD biXPelsPerMeter; //

DWORD biYPelsPerMeter; //

DWORD biClrUsed; //

DWORD biClrImportant; //

}BITMAPINFOHEADER;

class Widget : public QWidget

{

Q_OBJECT

public:

explicit Widget(QWidget *parent = nullptr);

~Widget();

private:

Ui::Widget *ui;

void init_bmp_header();

VideoDevice *vd;

QImage *frame;

int rs;

size_t len;

BITMAPFILEHEADER bf;

BITMAPINFOHEADER bi;

#ifdef USE_YUYV

unsigned char *rgb_buffer;

#endif

unsigned char * yuv_buffer_pointer;

private slots:

void paintEvent(QPaintEvent *);

};

#endif // WIDGET_H

widget.cpp

#include "widget.h"

#include "ui_widget.h"

#include <QThread>

Widget::Widget(QWidget *parent) :

QWidget(parent),

ui(new Ui::Widget)

{

ui->setupUi(this);

vd = new VideoDevice(FILE_VIDEO);

frame = new QImage();

#ifdef USE_YUYV

rgb_buffer = (unsigned char *)malloc(IMAGEWIDTH*IMAGEHEIGHT*3*sizeof(unsigned char));

init_bmp_header();

#endif

}

Widget::~Widget()

{

delete vd; // stops capturing and closes the device

delete frame;

delete ui;

}

void Widget::paintEvent(QPaintEvent *)

{

#ifndef USE_YUYV

QByteArray aa;

QPixmap pic;

rs = vd->get_frame(&yuv_buffer_pointer, &len);

aa.append((char *)yuv_buffer_pointer, len);

frame->loadFromData(aa);

pic=QPixmap::fromImage(*frame,Qt::AutoColor);

pic=pic.scaled(SCALED_WIDTH, SCALED_HEIGHT, Qt::KeepAspectRatio, Qt::SmoothTransformation); // scale, keeping the aspect ratio

ui->label->setPixmap(pic);

rs = vd->unget_frame();

#else

QByteArray aa;

rs = vd->get_frame(&yuv_buffer_pointer, &len);

vd->convert_yuv_to_rgb_buffer(&yuv_buffer_pointer, rgb_buffer);

aa.append((char *)&bf.bfType, 2);

aa.append((char *)&bf.bfSize, 4);

aa.append((char *)&bf.bfReserved, 4);

aa.append((char *)&bf.bfOffBits, 4);

aa.append((char *)&bi.biSize, 4);

aa.append((char *)&bi.biWidth, 4);

aa.append((char *)&bi.biHeight, 4);

aa.append((char *)&bi.biPlanes, 2);

aa.append((char *)&bi.biBitCount, 2);

aa.append((char *)&bi.biCompression, 4);

aa.append((char *)&bi.biSizeImage, 4);

aa.append((char *)&bi.biXPelsPerMeter, 4);

aa.append((char *)&bi.biYPelsPerMeter, 4);

aa.append((char *)&bi.biClrUsed, 4);

aa.append((char *)&bi.biClrImportant, 4);

aa.append((char *)rgb_buffer, IMAGEWIDTH*IMAGEHEIGHT*3);

frame->loadFromData(aa);

ui->label->setPixmap(QPixmap::fromImage(*frame,Qt::AutoColor));

rs = vd->unget_frame();

#endif

}

void Widget::init_bmp_header()

{

//Set BITMAPINFOHEADER

bi.biSize = 40;

bi.biWidth = IMAGEWIDTH;

bi.biHeight = IMAGEHEIGHT;

bi.biPlanes = 1;

bi.biBitCount = 24;

bi.biCompression = 0;

bi.biSizeImage = IMAGEWIDTH*IMAGEHEIGHT*3;

bi.biXPelsPerMeter = 0;

bi.biYPelsPerMeter = 0;

bi.biClrUsed = 0;

bi.biClrImportant = 0;

//Set BITMAPFILEHEADER

bf.bfType = 0x4d42;

bf.bfSize = 54 + bi.biSizeImage;

bf.bfReserved = 0;

bf.bfOffBits = 54;

}

videodevice.h

#ifndef VIDEODEVICE_H

#define VIDEODEVICE_H

#include <QString>

#include <QObject>

#include <QTextCodec>

#define FILE_VIDEO "/dev/video0"

//#define IMAGEWIDTH 3840

//#define IMAGEHEIGHT 2160

#define IMAGEWIDTH 1920

#define IMAGEHEIGHT 1080

//#define IMAGEWIDTH 640

//#define IMAGEHEIGHT 480

#define SCALED_WIDTH 640

#define SCALED_HEIGHT 480

#define JPG "/usr/image_jpg.jpg"

#define BMP "/usr/image_bmp.bmp"

#define YUV "/usr/image_yuv.yuv"

//#define USE_YUYV

#define TRUE 1

#define FALSE 0

#define CLEAR(x) memset(&(x), 0, sizeof(x))

class VideoDevice : public QObject

{

Q_OBJECT

public:

VideoDevice(char* dev_name);

~VideoDevice();

int get_frame(unsigned char ** yuv_buffer_pointer, size_t* len);

int unget_frame();

int convert_yuv_to_rgb_buffer(unsigned char** yuv_buffer_pointer, unsigned char* rgb_buffer);

private:

int open_device();

int init_device();

int start_capturing();

int init_mmap();

int stop_capturing();

int uninit_device();

int close_device();

struct buffer

{

void * start;

size_t length;

};

char* dev_name;

int fd;//video0 file

buffer* buffers;

unsigned int n_buffers;

int index;

};

#endif // VIDEODEVICE_H

videodevice.cpp

#include <stdio.h>

#include <unistd.h>

#include <stdlib.h>

#include <sys/types.h>

#include <sys/stat.h>

#include <sys/ioctl.h>

#include <sys/mman.h>

#include <asm/types.h>

#include <fcntl.h>

#include <linux/fs.h>

#include <errno.h>

#include <string.h>

#include <linux/videodev2.h>

#include <QDebug>

#include "videodevice.h"

VideoDevice::VideoDevice(char* dev_name)

{

this->dev_name = dev_name;

this->fd = -1;

this->buffers = NULL;

this->n_buffers = 0;

this->index = -1;

if(open_device() == FALSE)

{

qDebug("open_device false");

close_device();

}

if(init_device() == FALSE)

{

qDebug("init_device false");

close_device();

}

if(start_capturing() == FALSE)

{

qDebug("start_capturing false");

stop_capturing();

close_device();

}

}

VideoDevice::~VideoDevice()

{

if(stop_capturing() == FALSE)

{

}

if(uninit_device() == FALSE)

{

}

if(close_device() == FALSE)

{

}

}

int VideoDevice::open_device()

{

fd = ::open(dev_name,O_RDWR);

if(fd == -1)

{

qDebug("Error opening V4L interface");

return FALSE;

}

return TRUE;

}

int VideoDevice::close_device()

{

if( ::close(fd) == -1) // close() returns 0 on success and -1 on error

{

qDebug("Error closing V4L interface");

return FALSE;

}

return TRUE;

}

int VideoDevice::init_device()

{

v4l2_capability cap;

v4l2_fmtdesc fmtdesc;

v4l2_format fmt;

v4l2_streamparm setfps;

enum v4l2_buf_type type;

qDebug("init_device()");

if(ioctl(fd, VIDIOC_QUERYCAP, &cap) == -1)

{

qDebug("Error opening device %s: unable to query device.",dev_name);

return FALSE;

}

else

{

qDebug("driver:\t\t%s",cap.driver);

qDebug("card:\t\t%s",cap.card);

qDebug("bus_info:\t\t%s",cap.bus_info);

qDebug("version:\t\t%d",cap.version);

qDebug("capabilities:\t%x",cap.capabilities);

qDebug("device_caps:\t%x\n",cap.device_caps);

if ((cap.capabilities & V4L2_CAP_VIDEO_CAPTURE) == V4L2_CAP_VIDEO_CAPTURE)

{

qDebug("Device %s: supports capture.",dev_name);

}

if ((cap.capabilities & V4L2_CAP_STREAMING) == V4L2_CAP_STREAMING)

{

qDebug("Device %s: supports streaming.",dev_name);

}

}

//emu all support fmt, list in /usr/include/linux/videodev2.h

fmtdesc.index=0;

fmtdesc.type=V4L2_BUF_TYPE_VIDEO_CAPTURE;

printf("Support format:\n");

while(ioctl(fd,VIDIOC_ENUM_FMT,&fmtdesc)!=-1)

{

printf("\t%d.%s\n",fmtdesc.index+1,fmtdesc.description);

fmtdesc.index++;

}

//emu all support pixel_fmt,

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

struct v4l2_fmtdesc fmt_1;

struct v4l2_frmsizeenum frmsize;

fmt_1.index = 0; // index

fmt_1.type = type;

while (ioctl(fd, VIDIOC_ENUM_FMT, &fmt_1) >= 0) {

frmsize.pixel_format = fmt_1.pixelformat;

frmsize.index = 0;

while (ioctl(fd, VIDIOC_ENUM_FRAMESIZES, &frmsize) >= 0){

if(frmsize.type == V4L2_FRMSIZE_TYPE_DISCRETE){

printf("support pix: %dx%d\n",frmsize.discrete.width, frmsize.discrete.height);

}else if(frmsize.type == V4L2_FRMSIZE_TYPE_STEPWISE){

printf("line:%d %dx%d .. %dx%d\n",__LINE__, frmsize.stepwise.min_width, frmsize.stepwise.min_height, frmsize.stepwise.max_width, frmsize.stepwise.max_height);

}

frmsize.index++;

}

fmt_1.index++;

}

//set fmt

fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

#ifndef USE_YUYV

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_MJPEG;//wzp

#else

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;

#endif

fmt.fmt.pix.height = IMAGEHEIGHT;

fmt.fmt.pix.width = IMAGEWIDTH;

fmt.fmt.pix.field = V4L2_FIELD_INTERLACED;

// fmt.fmt.pix.field = V4L2_FIELD_NONE;//wzp

if(ioctl(fd, VIDIOC_S_FMT, &fmt) == -1)

{

qDebug("Unable to set format");

return FALSE;

}

if(ioctl(fd, VIDIOC_G_FMT, &fmt) == -1)

{

qDebug("Unable to get format");

return FALSE;

}

/* Confirm that the setting is successful*/

{

printf("fmt.type:\t\t%d\n",fmt.type);

printf("pix.pixelformat:\t%c%c%c%c\n",fmt.fmt.pix.pixelformat & 0xFF, (fmt.fmt.pix.pixelformat >> 8) & 0xFF,(fmt.fmt.pix.pixelformat >> 16) & 0xFF, (fmt.fmt.pix.pixelformat >> 24) & 0xFF);

printf("pix.height:\t\t%d\n",fmt.fmt.pix.height);

printf("pix.width:\t\t%d\n",fmt.fmt.pix.width);

printf("pix.field:\t\t%d\n",fmt.fmt.pix.field);

}

/* get the fps*/

setfps.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

if(ioctl(fd, VIDIOC_G_PARM, &setfps) == -1)

{

qDebug("Unable to get frame rate");

return FALSE;

}

else

{

qDebug("get fps OK!");

qDebug("timeperframe.numerator:%d",setfps.parm.capture.timeperframe.numerator);

qDebug("timeperframe.denominator:%d",setfps.parm.capture.timeperframe.denominator);

}

//set fps

setfps.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

setfps.parm.capture.timeperframe.numerator = 1;

setfps.parm.capture.timeperframe.denominator = 30;

if(ioctl(fd, VIDIOC_S_PARM, &setfps) == -1)

{

qDebug("Unable to set frame rate");

return FALSE;

}

else

{

qDebug("set fps OK!");

}

/* Confirm that the setting is successful*/

if(ioctl(fd, VIDIOC_G_PARM, &setfps) == -1)

{

qDebug("Unable to get frame rate");

return FALSE;

}

else

{

qDebug("get fps OK!");

qDebug("timeperframe.numerator:\t%d",setfps.parm.capture.timeperframe.numerator);

qDebug("timeperframe.denominator:\t%d",setfps.parm.capture.timeperframe.denominator);

}

//mmap

if(init_mmap() == FALSE )

{

qDebug("cannot mmap!");

return FALSE;

}

return TRUE;

}
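The format dump in init_device() unpacks the FourCC pixel format one byte at a time and prints the raw timeperframe fraction. The sketch below isolates those two calculations as standalone helpers. The names fourcc_to_string and frames_per_second are hypothetical (they are not part of V4L2 or of the class above), and the code assumes the FourCC is packed with its first character in the least significant byte, as the printf above does.

```cpp
#include <cstdint>
#include <string>

// Hypothetical helper: unpack a V4L2 FourCC code into its four characters.
// The first character sits in the least significant byte.
std::string fourcc_to_string(std::uint32_t fourcc)
{
    std::string s(4, ' ');
    for (int i = 0; i < 4; ++i) {
        s[i] = static_cast<char>((fourcc >> (8 * i)) & 0xFF);
    }
    return s;
}

// Hypothetical helper: timeperframe is the duration of one frame in seconds
// (numerator / denominator), so the frame rate is its reciprocal.
double frames_per_second(std::uint32_t numerator, std::uint32_t denominator)
{
    if (numerator == 0) {
        return 0.0; // no usable interval reported
    }
    return static_cast<double>(denominator) / static_cast<double>(numerator);
}
```

With these helpers, fourcc_to_string(fmt.fmt.pix.pixelformat) would give "YUYV" or "MJPG" for the two formats selected above, and frames_per_second(1, 30) corresponds to the 30 fps requested through VIDIOC_S_PARM.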

int VideoDevice::init_mmap()

{

v4l2_requestbuffers req = {}; // zero-initialize so the reserved fields are not left as garbage

qDebug("init_mmap()");

/* Request buffers with VIDIOC_REQBUFS and struct v4l2_requestbuffers. The struct carries the desired buffer count; the driver allocates that many video buffers (it may adjust req.count). */

req.count = 2;

req.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

req.memory = V4L2_MEMORY_MMAP;

if(ioctl(fd, VIDIOC_REQBUFS, &req) == -1)

{

qDebug("request for buffers error");

return FALSE;

}

if(req.count < 2)

{

return FALSE;

}

//mmap for buffers

buffers = (buffer*)calloc(req.count, sizeof(*buffers));

if(!buffers)

{

qDebug ("Out of memory");

return FALSE;

}

// Map every buffer the driver granted into the application's address space and record it in the buffers array

for(n_buffers = 0; n_buffers < req.count; ++n_buffers)

{

v4l2_buffer buf = {}; // zero-initialize before filling in type, memory and index

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

buf.index = n_buffers;

//query buffers

if(ioctl(fd, VIDIOC_QUERYBUF, &buf) == -1)

{

qDebug("query buffer error");

return FALSE;

}

buffers[n_buffers].length = buf.length;

//map

buffers[n_buffers].start =

mmap(NULL, // let the kernel choose the mapping address

buf.length,

PROT_READ | PROT_WRITE,

MAP_SHARED,

fd, buf.m.offset);

if(MAP_FAILED == buffers[n_buffers].start)

{

qDebug("buffer map error\n");

return FALSE;

}

}

return TRUE;

}

int VideoDevice::start_capturing()

{

qDebug("start_capturing()");

unsigned int i;

for(i = 0; i < n_buffers; ++i)

{

v4l2_buffer buf = {}; // zero-initialize before filling in type, memory and index

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory =V4L2_MEMORY_MMAP;

buf.index = i;

if(-1 == ioctl(fd, VIDIOC_QBUF, &buf))

{

return FALSE;

}

}

v4l2_buf_type type;

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

/* Start capturing with VIDIOC_STREAMON. */

if(-1 == ioctl(fd, VIDIOC_STREAMON, &type))

{

return FALSE;

}

return TRUE;

}

int VideoDevice::stop_capturing()

{

qDebug("stop_capturing()");

v4l2_buf_type type;

type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

if(ioctl(fd, VIDIOC_STREAMOFF, &type) == -1)

{

return FALSE;

}

return TRUE;

}

int VideoDevice::uninit_device()

{

qDebug("uninit_device()");

unsigned int i;

for(i = 0; i < n_buffers; ++i)

{

if(-1 == munmap(buffers[i].start, buffers[i].length))

{

qDebug("munmap error");

return FALSE;

}

}

free(buffers); // allocated with calloc(), so release with free(), not delete

buffers = NULL;

return TRUE;

}

int VideoDevice::get_frame(unsigned char ** yuv_buffer_pointer, size_t* len)

{

// qDebug("get_frame()");

v4l2_buffer queue_buf = {}; // zero-initialize; the driver fills in index on dequeue

queue_buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

queue_buf.memory = V4L2_MEMORY_MMAP;

/* Dequeue a filled buffer with VIDIOC_DQBUF. The frame data is at buffers[queue_buf.index].start. */

if(ioctl(fd, VIDIOC_DQBUF, &queue_buf) == -1)

{

qDebug("VIDIOC_DQBUF false");

return FALSE;

}

*yuv_buffer_pointer = (unsigned char *)buffers[queue_buf.index].start;

*len = buffers[queue_buf.index].length;

index = queue_buf.index;

return TRUE;

}

int VideoDevice::unget_frame()

{

// qDebug("unget_frame()");

if(index != -1)

{

v4l2_buffer queue_buf = {}; // zero-initialize before filling in type, memory and index

queue_buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

queue_buf.memory = V4L2_MEMORY_MMAP;

queue_buf.index = index;

if(ioctl(fd, VIDIOC_QBUF, &queue_buf) == -1)

{

qDebug("VIDIOC_QBUF false");

return FALSE;

}

return TRUE;

}

return FALSE;

}
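get_frame() and unget_frame() treat every -1 from ioctl() as a hard failure. An ioctl() call may also fail with EINTR when a signal interrupts it, in which case retrying is usually the right response. A minimal sketch of that retry idiom follows; the wrapper name xioctl is a convention borrowed from common V4L2 sample code, not a function of this class or of the kernel API.

```cpp
#include <cerrno>
#include <sys/ioctl.h>

// Hypothetical wrapper: repeat the ioctl while it fails with EINTR
// (interrupted by a signal); otherwise return the result unchanged.
int xioctl(int fd, unsigned long request, void* arg)
{
    int r;
    do {
        r = ioctl(fd, request, arg);
    } while (r == -1 && errno == EINTR);
    return r;
}
```

Under that assumption, a call such as xioctl(fd, VIDIOC_DQBUF, &queue_buf) could stand in for the direct ioctl() calls above without changing how other errors are reported.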

/* Convert a YUYV frame to RGB */

int VideoDevice::convert_yuv_to_rgb_buffer(unsigned char** yuv_buffer_pointer, unsigned char* rgb_buffer)

{

unsigned long in, out = 0;

int y0, u, y1, v;

int r, g, b;

unsigned char* point = *yuv_buffer_pointer;

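/* Each 4-byte YUYV group (Y0 U Y1 V) encodes two pixels that share U and V. The RGB output is written in input order (top row first, 3 bytes per pixel). BMP files usually store rows bottom-up, so a caller saving to BMP has to reverse the row order itself. */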

for(in = 0; in < IMAGEWIDTH * IMAGEHEIGHT * 2; in += 4)

{

y0 = point[in + 0];

u = point[in + 1];

y1 = point[in + 2];

v = point[in + 3];

r = y0 + (1.370705 * (v-128));

g = y0 - (0.698001 * (v-128)) - (0.337633 * (u-128));

b = y0 + (1.732446 * (u-128));

if(r > 255) r = 255;

if(g > 255) g = 255;

if(b > 255) b = 255;

if(r < 0) r = 0;

if(g < 0) g = 0;

if(b < 0) b = 0;

rgb_buffer[out++] = r;

rgb_buffer[out++] = g;

rgb_buffer[out++] = b;

r = y1 + (1.370705 * (v-128));

g = y1 - (0.698001 * (v-128)) - (0.337633 * (u-128));

b = y1 + (1.732446 * (u-128));

if(r > 255) r = 255;

if(g > 255) g = 255;

if(b > 255) b = 255;

if(r < 0) r = 0;

if(g < 0) g = 0;

if(b < 0) b = 0;

rgb_buffer[out++] = r;

rgb_buffer[out++] = g;

rgb_buffer[out++] = b;

}

return 0;

}
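The conversion above repeats the same arithmetic and clamping for both pixels of each YUYV group and hard-codes the frame size. A compact sketch of the same calculation with the clamp factored out and the dimensions passed in is shown below. The names clamp_to_byte and yuyv_to_rgb24 are hypothetical, the coefficients are copied from the function above, and the width is assumed to be even.

```cpp
#include <cstddef>
#include <vector>

// Clamp an intermediate colour value into the 0..255 byte range.
static unsigned char clamp_to_byte(int value)
{
    if (value < 0) return 0;
    if (value > 255) return 255;
    return static_cast<unsigned char>(value);
}

// Hypothetical helper: convert packed YUYV (Y0 U Y1 V) to packed RGB24.
// Assumes an even width; output is 3 bytes per pixel in input order.
std::vector<unsigned char> yuyv_to_rgb24(const unsigned char* yuyv,
                                         std::size_t width, std::size_t height)
{
    std::vector<unsigned char> rgb;
    rgb.reserve(width * height * 3);
    const std::size_t in_bytes = width * height * 2; // YUYV uses 2 bytes per pixel
    for (std::size_t in = 0; in + 3 < in_bytes; in += 4) {
        const int u = yuyv[in + 1] - 128; // chroma shared by both pixels
        const int v = yuyv[in + 3] - 128;
        const int ys[2] = { yuyv[in + 0], yuyv[in + 2] };
        for (int k = 0; k < 2; ++k) {
            const int y = ys[k];
            rgb.push_back(clamp_to_byte(static_cast<int>(y + 1.370705 * v)));
            rgb.push_back(clamp_to_byte(static_cast<int>(y - 0.698001 * v - 0.337633 * u)));
            rgb.push_back(clamp_to_byte(static_cast<int>(y + 1.732446 * u)));
        }
    }
    return rgb;
}
```

Returning a std::vector avoids relying on the caller to size rgb_buffer correctly; whether that trade-off suits a per-frame hot path depends on the application.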

References:

Driving a USB camera through V4L2 on Linux https://blog.csdn.net/simonforfuture/article/details/78743800

Capturing images from a USB camera https://www.cnblogs.com/surpassal/archive/2012/12/19/zed_webcam_lab1.html

Video capture and live display https://www.cnblogs.com/surpassal/archive/2013/01/12/zed_webcam_lab3.html
