一、数据

官网下载太慢,然后我找到了一个整理好的数据集

链接: GTSRB-德国交通标志识别图像数据 .

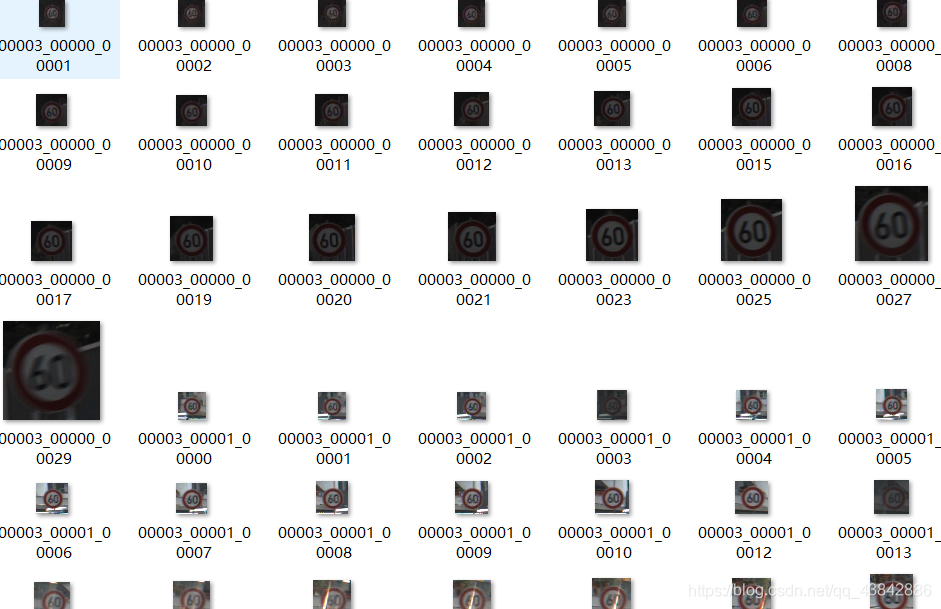

数据集很干净,直接用就好了,它把所有的数据信息单独列了一个csv文件。

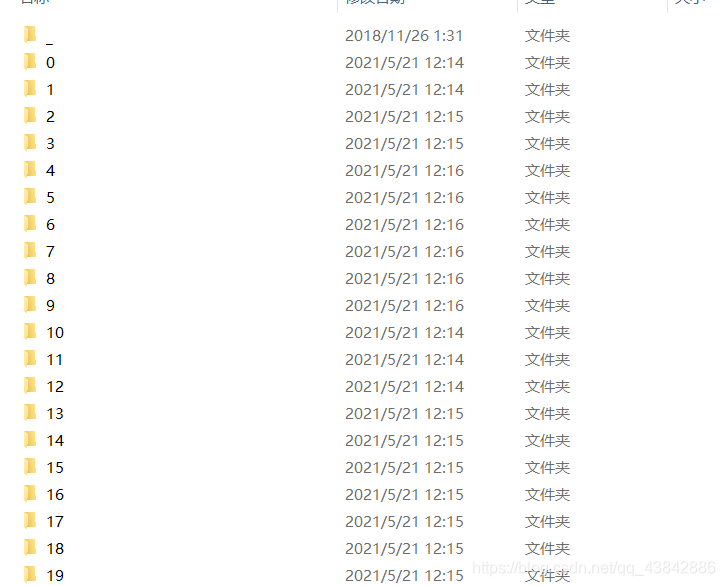

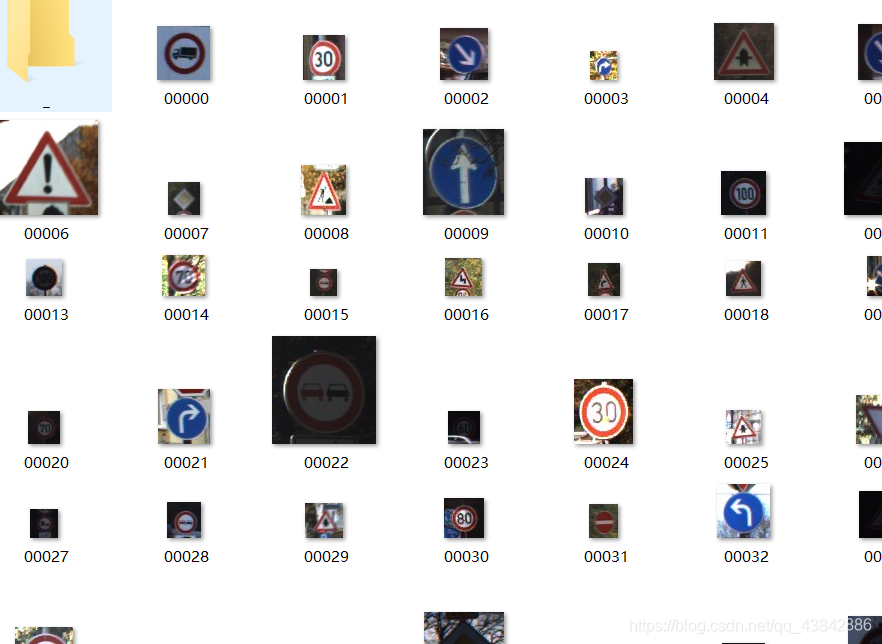

数据集有43个大类 。

每个类按规律排列,需要将其打乱顺序。

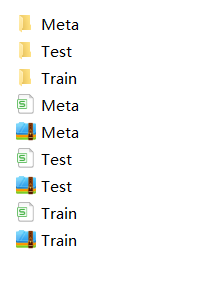

训练集:

测试集:

如图,虽然数据集要干净一些,但是读取测试集和训练集的方法不一样

数据读取

1、训练数据

因为训练集是按规律排列的,而且数据量很大,所以先打乱顺序,将其划分出一个训练集一个测试集。(原数据集里边的测试集不是按类别分的,读出来格式不太一样,就直接用这个了)

import os

import random

import shutil

path = 'F:\GTSRB-德国交通标志识别图像数据\Train'

dirs = []

split_percentage = 0.2

for dirpath, dirnames, filenames in os.walk(path, topdown=False):

for dirname in dirnames:

fullpath = os.path.join(dirpath, dirname)

fileCount = len([name for name in os.listdir(fullpath) if os.path.isfile(os.path.join(fullpath, name))])

files = os.listdir(fullpath)

for index in range((int)(split_percentage * fileCount)):

newIndex = random.randint(0, fileCount - 1)

fullFilePath = os.path.join(fullpath, files[newIndex])

newFullFilePath = fullFilePath.replace('Train', 'Final_Validation')

base_new_path = os.path.dirname(newFullFilePath)

if not os.path.exists(base_new_path):

os.makedirs(base_new_path)

try:

shutil.move(fullFilePath, newFullFilePath)

except IOError as error:

print('skip moving from %s => %s' % (fullFilePath, newFullFilePath))

然后就可以对数据开始处理准备训练:

import shutil

import os

import matplotlib.pyplot as plt

train_set_base_dir = 'F:\GTSRB-德国交通标志识别图像数据\Train'

validation_set_base_dir = 'F:\GTSRB-德国交通标志识别图像数据\Final_Validation'

from keras.preprocessing.image import ImageDataGenerator

train_datagen = ImageDataGenerator(

rescale=1. / 255

)

train_data_generator = train_datagen.flow_from_directory(

directory=train_set_base_dir,

target_size=(48, 48),

batch_size=32,

class_mode='categorical')

validation_datagen = ImageDataGenerator(

rescale=1. /255

)

validation_data_generator = validation_datagen.flow_from_directory(

directory=validation_set_base_dir,

target_size=(48, 48),

batch_size=32,

class_mode='categorical'

)

2、验证数据:

因为老是奇奇怪怪的报错,所以干脆用它给出来的Test文件夹里的测试集验证预测了。

path = 'F:\GTSRB-德国交通标志识别图像数据'

csv_files = []

for dirpath, dirnames, filenames in os.walk(path, topdown=False):

for filename in filenames:

if filename.endswith('.csv'):

csv_files.append(os.path.join(dirpath, filename))

import matplotlib.image as mpimg

test_image=[]

test_lable=[]

x=''

csv=csv_files[1]

base_path = os.path.dirname(csv)

trafficSigns = []

with open(csv,'r',newline='') as file:

header = file.readline()

header = header.strip()

header_list = header.split(',')

print(header_list)

for row in file.readlines():

row_data = row.split(',')

x=row_data[7]

x='F:/GTSRB-德国交通标志识别图像数据/'+x

x=x.strip('\n')

test_lable.append(row_data[6])

test = Image.open(x)

test = test.resize((48,48),Image.ANTIALIAS)

test = np.array(test)

test_image.append(test)

test_data = np.array(test_image)

注:关于Test文件夹里的数据,因为读出来不是generator格式,所以最开始使用训练集抽取出来的20%作为测试集的,最后用test文件预测时出现了这个问题:

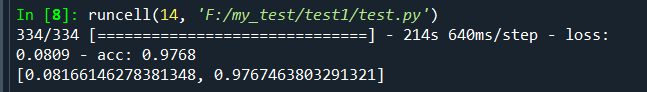

训练集:

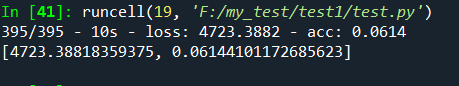

模型评估的分非常高,然后预测时就变成了这样

准确率直接掉到了6%

关于用test文件夹里的数据做评估时,最开始分别输入数据和标签老是报错,最后找到原因是,label数据不能自动进行one-hot编码,所以需要手动进行。

所以我重新做了一下数据处理,干脆将其转换成generator对象。

二、搭建网络

import tensorflow as tf

from keras.models import Sequential

from keras.layers import Conv2D, MaxPool2D, Flatten, Dense, Dropout

model = Sequential()

model.add(Conv2D(filters=32, kernel_size=(3, 3), activation='relu', input_shape=(48, 48, 3)))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=64, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=128, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=128, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Flatten())

model.add(Dropout(0.2))

model.add(Dense(units=512, activation='relu'))

model.add(Dense(units=43, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['acc'])

model.summary()

json_str = model.to_json()

print(json_str)

history = model.fit_generator(

generator=train_data_generator,

steps_per_epoch=100,

epochs=27,

validation_data=validation_data_generator,

validation_steps=50)

model.save('F:/MLCourse/model27epoch.h5')

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(1, len(acc) + 1)

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

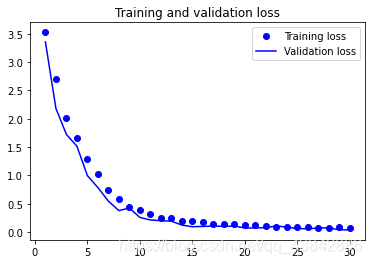

plt.plot(epochs, loss, 'bo', label='Training loss')

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

这里只是在测试写的程序,模型就用的普通的卷积层,验证效果并不好,建议下载一些预训练模型。

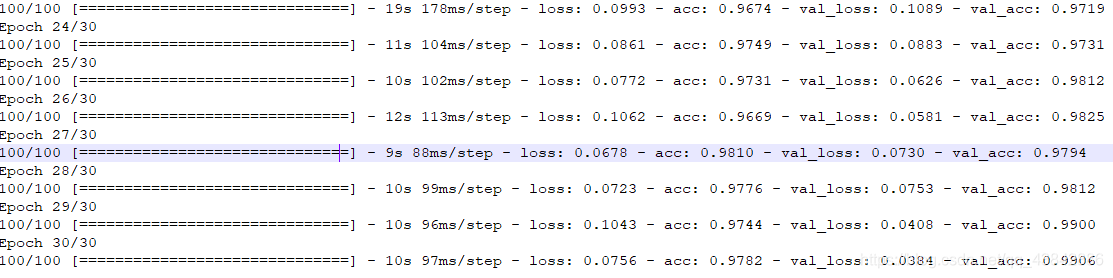

这里最开始训练了30次:

可以看出来27次就过拟合了,训练精度还是蛮高的,可能是数据比较规律。

三、模型预测

因为一直在整数据,不想太麻烦,就把模型单独训练的。在这里加了一层softmax层做概率输出。

new_model = keras.models.load_model('F:/MLCourse/model27epoch.h5')

probability_model = tf.keras.Sequential([new_model,

tf.keras.layers.Softmax()])

predictions = probability_model.predict(test_data)

然后,我就发现差不多只预测对了很少qaq|。所以过了一天我突然想改一改,所以上面重新处理了一下测试集数据,将其进行one-hot编码之后,转化成generator对象。然后重新训练了一次。

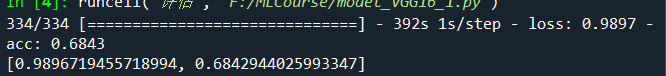

然后我又训练了一次,无论是将混乱的测试集作为训练输入,还是将比较规律的测试集作为输入,最后评估效果都很差,所以我决定重新设计网络。使用VGG16进行预训练。

然后训练完之后,如果用test里的文件和Train里的文件一个验证一个训练的话,验证精度依旧上不去。所以我就混合了一下,做了交叉验证。

然后验证精度就变成了:

四、附录

模块导入

因为怕麻烦,所以分开了很多文件搞的,搞了个大集合

import shutil

import os

import matplotlib.pyplot as plt

import keras

import tensorflow as tf

from PIL import Image

import numpy as np

from keras.preprocessing.image import ImageDataGenerator

from keras.preprocessing import image

import sys

import numpy as np

from tensorflow.keras import datasets, layers, models

from keras.models import Sequential

from keras.layers import Conv2D, MaxPool2D, Flatten, Dense, Dropout

Code

train_set_base_dir = 'F:\GTSRB-德国交通标志识别图像数据\Train'

validation_set_base_dir = 'F:\GTSRB-德国交通标志识别图像数据\Final_Validation'

train_datagen = ImageDataGenerator(

rescale=1. / 255

)

train_data_generator = train_datagen.flow_from_directory(

directory=train_set_base_dir,

target_size=(48, 48),

batch_size=32,

class_mode='categorical')

validation_datagen = ImageDataGenerator(

rescale=1. /255

)

validation_data_generator = validation_datagen.flow_from_directory(

directory=validation_set_base_dir,

target_size=(48, 48),

batch_size=32,

class_mode='categorical'

)

path = 'F:\GTSRB-德国交通标志识别图像数据'

csv_files = []

for dirpath, dirnames, filenames in os.walk(path, topdown=False):

for filename in filenames:

if filename.endswith('.csv'):

csv_files.append(os.path.join(dirpath, filename))

import matplotlib.image as mpimg

test_image=[]

test_lable=[]

x=''

csv=csv_files[1]

base_path = os.path.dirname(csv)

trafficSigns = []

with open(csv,'r',newline='') as file:

header = file.readline()

header = header.strip()

header_list = header.split(',')

print(header_list)

for row in file.readlines():

row_data = row.split(',')

x=row_data[7]

x='F:/GTSRB-德国交通标志识别图像数据/'+x

x=x.strip('\n')

m=row_data[6]

test_lable.append(int(row_data[6]))

test = Image.open(x)

test = test.resize((48,48),Image.ANTIALIAS)

test = np.array(test)

test_image.append(test)

test_data = np.array(test_image)

test_lable = np.array(test_lable)

labels = test_lable

one_hot_labels = tf.one_hot(indices=labels,depth=43, on_value=1, off_value=0, axis=-1, dtype=tf.int32, name="one-hot")

test_datagen = ImageDataGenerator(

rescale=1. /255

)

test_data_generator = test_datagen.flow(

x=test_data,

y=one_hot_labels,

batch_size=32

)

print(test_lable)

import tensorflow as tf

from keras.models import Sequential

from keras.layers import Conv2D, MaxPool2D, Flatten, Dense, Dropout

model = Sequential()

model.add(Conv2D(filters=32, kernel_size=(3, 3), activation='relu', input_shape=(48, 48, 3)))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=64, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=128, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Conv2D(filters=128, kernel_size=(3, 3), activation='relu'))

model.add(MaxPool2D(pool_size=(2, 2), padding='valid'))

model.add(Flatten())

model.add(Dropout(0.2))

model.add(Dense(units=512, activation='relu'))

model.add(Dense(units=43, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['acc'])

model.summary()

json_str = model.to_json()

print(json_str)

history = model.fit_generator(

generator=train_data_generator,

steps_per_epoch=100,

epochs=27,

validation_data=validation_data_generator,

validation_steps=50)

model.save('F:/MLCourse/model27epoch.h5')

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(1, len(acc) + 1)

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'bo', label='Training loss')

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

print(train_data_generator[0])

new_model = keras.models.load_model('F:/MLCourse/model27epoch.h5')

probability_model = tf.keras.Sequential([new_model,

tf.keras.layers.Softmax()])

new_model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['acc'])

new_model.summary()

scores = new_model.evaluate(validation_data_generator)

print(scores)

scores2 = new_model.evaluate(test_data,one_hot_labels, verbose=2)

print(scores2)

predictions = probability_model.predict(test_data)

结语

各种奇怪的报错姿势,小细节really重要。

PS.我去瞅了瞅测试集,有些图片都黑成一坨了,我都看不出来有东西。

PPS.本来考研好累,想搞搞这个放松放松,结果更自闭了.jpg

QAQ|、、、

本文内容由网友自发贡献,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系:hwhale#tublm.com(使用前将#替换为@)