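I have been debugging VINS-Fusion on and off recently, and the odometry kept drifting in all sorts of ways, which was a real headache. I am recording the debugging process here for future reference. First, pick a reliable visual-inertial sensor such as the Realsense D435i. In practice, sensor calibration turns out to be very important: if the IMU and the camera are not well calibrated, VINS will not run properly. The calibration procedure for the Realsense D435i is described below: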

1. IMU calibration

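The IMU of the Realsense D435i is not calibrated at the factory. While the Realsense is running, you can print the two topics /camera/gyro/imu_info and /camera/accel/imu_info; as shown in the figure below, noise_variances and bias_variances are all 0.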
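
The same check can be done programmatically by testing whether both variance arrays contain only zeros. The sketch below is a minimal, ROS-free illustration: `looks_uncalibrated` is a hypothetical helper, and the `SimpleNamespace` objects only stand in for a message carrying the `noise_variances` and `bias_variances` fields named above. In a real node the message would come from a subscriber on one of the two imu_info topics.

```python
from types import SimpleNamespace


def looks_uncalibrated(imu_info, tol=0.0):
    """Return True when every noise and bias variance is zero (within tol)."""
    values = list(imu_info.noise_variances) + list(imu_info.bias_variances)
    return all(abs(v) <= tol for v in values)


# Stand-in for the message described above: all variances are 0.
all_zero = SimpleNamespace(noise_variances=[0.0, 0.0, 0.0],
                           bias_variances=[0.0, 0.0, 0.0])

# Made-up non-zero values, used only to exercise the other branch.
non_zero = SimpleNamespace(noise_variances=[1e-4, 1e-4, 1e-4],
                           bias_variances=[1e-6, 1e-6, 1e-6])

print(looks_uncalibrated(all_zero))
print(looks_uncalibrated(non_zero))
```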

For the IMU calibration procedure, see the following three links:

https://github.com/HKUST-Aerial-Robotics/VINS-Fusion/issues/36

https://github.com/engcang/vins-application

https://www.intel.com/content/dam/support/us/en/documents/emerging-technologies/intel-realsense-technology/RealSense_Depth_D435i_IMU_Calib.pdf

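Following the steps in these links is enough to complete the calibration. The result is shown in the figure below:

2. IMU parameter calibration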

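To calibrate the IMU, see the blog post "D435i Camera and IMU Calibration Notes, Part 3 (IMU Calibration)" (original title: D435i标定摄像头和IMU笔记三(IMU标定篇), on Nankel Li's CSDN blog).

Joint calibration of an IMU and a stereo camera (original title: 技术分享 | 带你实现IMU和双目相机的联合标定)

Understanding the Kalibr and VINS calibration parameters (original title: 技术分享 | 带你解读Kalibr和VINS标定参数)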

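3. Joint IMU-camera calibration

Installation and usage notes for the Kalibr camera calibration tool (original title: Kalibr相机校正工具安装与使用笔记)

Camera calibration and camera-IMU joint calibration of the Intel RealSense D435i with Kalibr (original title: 利用Kalibr对Intel RealSense D435i进行相机及相机-IMU联合标定)

How to record the rosbag needed for calibration: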

https://github.com/ethz-asl/kalibr/wiki/camera-imu-calibration

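The calibration result shown in the video is as follows:

The reprojection error shown in the video is only about ±0.2 pixels, which is a very good result.

When I calibrate myself, the reprojection error is usually around ±2 pixels, which is not ideal.

The figure below shows my most recent calibration result. The reprojection error of the left and right cameras is about ±0.1 pixels, which is very good.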

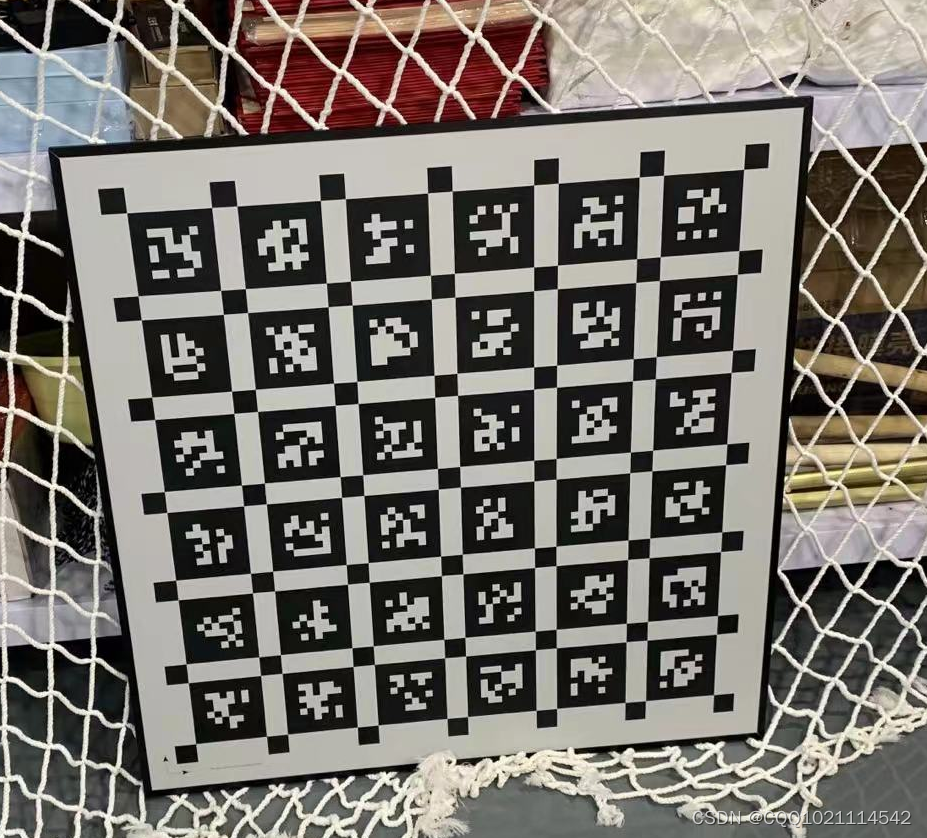
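
When carrying a Kalibr result over to VINS-Fusion, the direction of the transform matters. As far as the two tools' conventions go, Kalibr's camera-IMU output stores `T_cam_imu` (IMU frame to camera frame), while VINS-Fusion's `body_T_cam0` goes the other way (camera frame to IMU/body frame), so the matrix has to be inverted first. This is worth double-checking against your own Kalibr output file. A minimal plain-Python sketch of the inversion follows; `invert_rigid_transform` and `matmul4` are hypothetical helper names, and the matrix is a made-up example, not a value from my calibration.

```python
def invert_rigid_transform(T):
    """Invert a 4x4 rigid transform: R_inv = R^T, t_inv = -R^T * t."""
    R = [row[:3] for row in T[:3]]
    t = [row[3] for row in T[:3]]
    Rt = [[R[j][i] for j in range(3)] for i in range(3)]
    t_inv = [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [Rt[i] + [t_inv[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def matmul4(A, B):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


# Made-up T_cam_imu: a 90 degree rotation about z plus a small translation.
T_cam_imu = [
    [0.0, -1.0, 0.0, 0.01],
    [1.0,  0.0, 0.0, 0.02],
    [0.0,  0.0, 1.0, 0.03],
    [0.0,  0.0, 0.0, 1.0],
]

body_T_cam = invert_rigid_transform(T_cam_imu)
for row in body_T_cam:
    print(row)

# Sanity check: the product of a transform and its inverse is the identity.
product = matmul4(body_T_cam, T_cam_imu)
```

The closed-form inverse is used instead of a general matrix inverse because it keeps the bottom row exactly `[0, 0, 0, 1]`.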

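4. The calibration board I used is shown in the picture below; tagSize is 0.088 m and tagSpacing is 0.3. In theory, a larger calibration board should give a better calibration result.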
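
Kalibr reads the board geometry from a small target YAML file. In Kalibr's Aprilgrid convention, `tagSpacing` is the ratio of the gap between tags to `tagSize`, so with the values above the gap is 0.088 × 0.3 = 0.0264 m. The sketch below computes that gap and prints the text of a target file. `tagCols` and `tagRows` are not stated in this post, so the 6 × 6 used here is only an assumption to be replaced by the actual board layout; `aprilgrid_yaml` and `gap_between_tags` are hypothetical helper names.

```python
def gap_between_tags(tag_size, tag_spacing):
    """Physical gap between neighbouring tags in metres."""
    return tag_size * tag_spacing


def aprilgrid_yaml(tag_size, tag_spacing, tag_cols, tag_rows):
    """Build the text of a Kalibr Aprilgrid target file."""
    return (
        "target_type: 'aprilgrid'\n"
        f"tagCols: {tag_cols}\n"
        f"tagRows: {tag_rows}\n"
        f"tagSize: {tag_size}\n"
        f"tagSpacing: {tag_spacing}\n"
    )


TAG_SIZE = 0.088     # metres, from the text above
TAG_SPACING = 0.3    # ratio, from the text above
TAG_COLS = 6         # assumption: not given in the post
TAG_ROWS = 6         # assumption: not given in the post

gap = gap_between_tags(TAG_SIZE, TAG_SPACING)
target_text = aprilgrid_yaml(TAG_SIZE, TAG_SPACING, TAG_COLS, TAG_ROWS)

print(f"gap between tags: {gap:.4f} m")
print(target_text)
```

Measuring one printed tag and one gap with a ruler and comparing them with these numbers is a quick way to catch a board that was scaled during printing.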

5. Modify the configuration file according to the calibration results. The contents of my VINS-Fusion parameter file are posted below:

    %YAML:1.0

    #common parameters
    #support: 1 imu 1 cam; 1 imu 2 cam; 2 cam;
    imu: 1
    num_of_cam: 2

    imu_topic: "/camera/imu"
    image0_topic: "/camera/infra1/image_rect_raw"
    image1_topic: "/camera/infra2/image_rect_raw"
    output_path: "/home/cqq/catkin_ws/src/VINS-Fusion/output"

    cam0_calib: "left.yaml"
    cam1_calib: "right.yaml"
    image_width: 640
    image_height: 480

    # Extrinsic parameter between IMU and Camera.
    estimate_extrinsic: 1   # 0  Have accurate extrinsic parameters. We will trust the following imu^R_cam, imu^T_cam, don't change it.
                            # 1  Have an initial guess about extrinsic parameters. We will optimize around your initial guess.

    body_T_cam0: !!opencv-matrix
       rows: 4
       cols: 4
       dt: d
       data: [ 0.9999818, 0.00009544, -0.00603301, 0.00176738,
               -0.00008504, 0.99999851, 0.00172311, -0.00258411,
               0.00603317, -0.00172257, 0.99998032, -0.02727786,
               0.0, 0.0, 0.0, 1.0 ]

    body_T_cam1: !!opencv-matrix
       rows: 4
       cols: 4
       dt: d
       data: [ 0.99998366, 0.00008914, -0.00571582, -0.0482864,
               -0.0000794, 0.99999855, 0.00170365, -0.00266832,
               0.00571596, -0.00170317, 0.99998221, -0.02758065,
               0.0, 0.0, 0.0, 1.0 ]

    #Multiple thread support
    multiple_thread: 1

    #feature tracker parameters
    max_cnt: 150            # max feature number in feature tracking
    min_dist: 30            # min distance between two features
    freq: 10                # frequency (Hz) of publishing the tracking result. At least 10 Hz for good estimation. If set to 0, the frequency will be the same as the raw image
    F_threshold: 1.0        # ransac threshold (pixel)
    show_track: 1           # publish tracking image as topic
    flow_back: 1            # perform forward and backward optical flow to improve feature tracking accuracy

    #optimization parameters
    max_solver_time: 0.04   # max solver iteration time (s), to guarantee real time
    max_num_iterations: 8   # max solver iterations, to guarantee real time
    keyframe_parallax: 10.0 # keyframe selection threshold (pixel)

    #imu parameters       The more accurate parameters you provide, the better performance
    acc_n: 3.0285e-03       # accelerometer measurement noise standard deviation. #0.2   0.04
    gyr_n: 2.726e-02        # gyroscope measurement noise standard deviation.     #0.05  0.004
    acc_w: 3.003e-05        # accelerometer bias random walk noise standard deviation.  #0.002
    gyr_w: 5.798179e-04     # gyroscope bias random walk noise standard deviation.     #4.0e-5
    g_norm: 9.80597397      # gravity magnitude

    #unsynchronization parameters
    estimate_td: 0                  # online estimate time offset between camera and imu
    td: 0.007235362544991748        # initial value of time offset. unit: s. read image clock + td = real image clock (IMU clock)

    #loop closure parameters
    load_previous_pose_graph: 0     # load and reuse previous pose graph; load from 'pose_graph_save_path'
    pose_graph_save_path: "/home/cqq/catkin_ws/src/VINS-Fusion/output" # save and load path
    save_image: 0                   # save image in pose graph for visualization purpose; you can close this function by setting 0
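
Before launching VINS-Fusion with a file like this, it is cheap to sanity-check the two extrinsic matrices. The sketch below (plain Python, with `rotation_error` and `baseline` as hypothetical helper names) checks that each 3 × 3 rotation block is close to orthonormal and computes the distance between the two camera origins, which should be close to the stereo baseline of the sensor (nominally about 50 mm for the D435i). The matrices are copied from the `body_T_cam0` and `body_T_cam1` entries of the configuration; the 1e-6 tolerance is an arbitrary choice for values rounded to eight decimals.

```python
def rotation_error(T):
    """Largest absolute entry of R * R^T - I for the rotation block of T."""
    R = [row[:3] for row in T[:3]]
    worst = 0.0
    for i in range(3):
        for j in range(3):
            dot = sum(R[i][k] * R[j][k] for k in range(3))
            target = 1.0 if i == j else 0.0
            worst = max(worst, abs(dot - target))
    return worst


def baseline(T_a, T_b):
    """Distance between two camera origins expressed in the body frame."""
    return sum((T_a[i][3] - T_b[i][3]) ** 2 for i in range(3)) ** 0.5


body_T_cam0 = [
    [0.9999818, 0.00009544, -0.00603301, 0.00176738],
    [-0.00008504, 0.99999851, 0.00172311, -0.00258411],
    [0.00603317, -0.00172257, 0.99998032, -0.02727786],
    [0.0, 0.0, 0.0, 1.0],
]
body_T_cam1 = [
    [0.99998366, 0.00008914, -0.00571582, -0.0482864],
    [-0.0000794, 0.99999855, 0.00170365, -0.00266832],
    [0.00571596, -0.00170317, 0.99998221, -0.02758065],
    [0.0, 0.0, 0.0, 1.0],
]

err0 = rotation_error(body_T_cam0)
err1 = rotation_error(body_T_cam1)
stereo_baseline = baseline(body_T_cam0, body_T_cam1)

print(f"cam0 rotation error: {err0:.2e}")
print(f"cam1 rotation error: {err1:.2e}")
print(f"stereo baseline: {stereo_baseline * 1000:.2f} mm")
```

A rotation error far above the rounding level, or a baseline far from the nominal value, usually points to a transposed or un-inverted matrix rather than to a bad calibration.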
