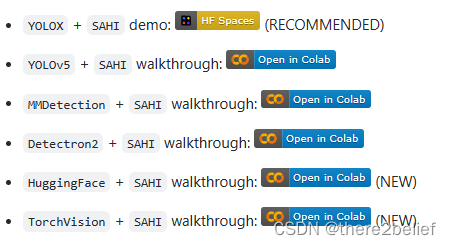

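When running object detection on very large images, such as remote-sensing data, feeding the whole image to the detector seriously degrades accuracy, and most general-purpose tools do not conveniently support tiled detection on large images. Slicing Aided Hyper Inference (SAHI) is a package that assists with sliced detection and prediction on large images. It currently works well with YOLOX, YOLOv5, MMDetection, Detectron2, and others. Code: obss/sahi: Framework agnostic sliced/tiled inference + interactive ui + error analysis plots (github.com)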

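During training, small objects can be lost when the preprocessing pipeline resizes the image. To avoid this, we add a random crop at the start of the pipeline. In effect, we train on small patches of the original image and keep all of the information in those patches. For example: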

    # height and width are placeholders for the crop size, to be set by the user.
    train_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(type='LoadAnnotations', with_bbox=True),
        # Crop first, so small objects keep their original resolution.
        dict(type='RandomCrop', crop_size=(height, width)),
        # Multi-scale training: one scale is picked from the list per sample.
        dict(
            type='Resize',
            img_scale=[(1333, 640), (1333, 672), (1333, 704), (1333, 736),
                       (1333, 768), (1333, 800)],
            multiscale_mode='value',
            keep_ratio=True),
        dict(type='RandomFlip', flip_ratio=0.5),
        # Mean subtraction only (std of 1.0), with channels kept in BGR order.
        dict(
            type='Normalize',
            mean=[103.53, 116.28, 123.675],
            std=[1.0, 1.0, 1.0],
            to_rgb=False),
        dict(type='Pad', size_divisor=32),
        dict(type='DefaultFormatBundle'),
        dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels'])
    ]
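
The effect of cropping before resizing can be checked with some rough arithmetic. The sketch below assumes a keep-ratio resize that fits the image inside a (1333, 800) bound, as in the pipeline above. The image size, object size, and crop size are hypothetical numbers chosen only for illustration, and `resize_scale` is a helper written for this example, not an MMDetection function.

```python
def resize_scale(img_w, img_h, max_long=1333, max_short=800):
    """Scale factor of a keep-ratio resize that fits the image
    inside a (max_long, max_short) bound."""
    long_edge, short_edge = max(img_w, img_h), min(img_w, img_h)
    return min(max_long / long_edge, max_short / short_edge)


# Hypothetical numbers, for illustration only.
full_w, full_h = 4000, 3000   # large source image
crop_w, crop_h = 1024, 1024   # patch produced by a random crop
obj_size = 20                 # side length of a small object, in pixels

full_scale = resize_scale(full_w, full_h)
crop_scale = resize_scale(crop_w, crop_h)

print(f"object side after resizing the full image: {obj_size * full_scale:.1f} px")
print(f"object side after resizing the crop:       {obj_size * crop_scale:.1f} px")
```

Under these assumptions the object shrinks far less when the resize is applied to a crop than when it is applied to the full image, which is the motivation for putting the crop first.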

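At inference time, SAHI's sliced prediction splits the input image into slightly overlapping slices, runs prediction on each slice, then merges the per-slice detections and maps them back to coordinates in the original image.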

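    import numpy as np

    # Import paths as used in the SAHI release this post was written against;
    # other releases may organize these modules differently.
    from sahi.model import MmdetDetectionModel
    from sahi.predict import get_prediction, get_sliced_prediction, predict
    from sahi.utils.cv import visualize_object_predictions

    # trained_model_path, config_path, test_img_path, test_img_name, viz_path,
    # width, height and format are placeholders to be defined by the user.
    detection_model = MmdetDetectionModel(
        model_path=trained_model_path,
        config_path=config_path,
        device='cuda'  # or 'cpu'
    )

    # Slice the image, predict on each slice, and merge the results.
    sliced_pred_result = get_sliced_prediction(
        test_img_path,
        detection_model,
        slice_width=width,
        slice_height=height
    )

    # Draw the merged predictions on the image and save it to viz_path.
    visualize_object_predictions(
        image=np.ascontiguousarray(sliced_pred_result.image),
        object_prediction_list=sliced_pred_result.object_prediction_list,
        rect_th=1,
        text_size=0.3,
        text_th=1,
        color=(0, 0, 0),
        output_dir=viz_path,
        file_name=test_img_name + "_sliced_pred_result",
        export_format=format,  # e.g. "png"; note this name shadows the builtin
    )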
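
The slice, predict, and merge steps can also be sketched in plain Python to make the coordinate handling explicit. This is a simplified illustration, not SAHI's implementation. The helper names (`slice_boxes`, `to_full_image`, `merge_detections`), the 0.2 overlap, and the example detections are all assumptions made for this sketch. Plain greedy NMS stands in for the merging step, and SAHI's own postprocessing may use a different strategy.

```python
def slice_boxes(img_w, img_h, slice_w, slice_h, overlap=0.2):
    """Return (x1, y1, x2, y2) windows that cover the image with overlap."""
    step_x = max(1, int(slice_w * (1 - overlap)))
    step_y = max(1, int(slice_h * (1 - overlap)))
    boxes = []
    y = 0
    while True:
        y2 = min(y + slice_h, img_h)
        y1 = max(0, y2 - slice_h)  # shift the last row back inside the image
        x = 0
        while True:
            x2 = min(x + slice_w, img_w)
            x1 = max(0, x2 - slice_w)  # same for the last column
            boxes.append((x1, y1, x2, y2))
            if x2 >= img_w:
                break
            x += step_x
        if y2 >= img_h:
            break
        y += step_y
    return boxes


def to_full_image(box, slice_origin):
    """Shift a slice-local box by the slice's top-left corner."""
    x1, y1, x2, y2 = box
    ox, oy = slice_origin
    return (x1 + ox, y1 + oy, x2 + ox, y2 + oy)


def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    iw = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def merge_detections(dets, iou_thr=0.5):
    """Greedy NMS over (box, score) pairs given in full-image coordinates."""
    kept = []
    for box, score in sorted(dets, key=lambda d: d[1], reverse=True):
        if all(iou(box, k[0]) < iou_thr for k in kept):
            kept.append((box, score))
    return kept


windows = slice_boxes(1000, 600, 512, 512)

# Made-up detections: the same object seen in two overlapping slices,
# given as (slice origin, slice-local box, score).
per_slice = [
    ((0, 0), (450, 100, 500, 150), 0.90),
    ((409, 0), (41, 100, 91, 150), 0.80),
]
dets = [(to_full_image(box, origin), score) for origin, box, score in per_slice]
merged = merge_detections(dets)
print(len(windows), merged)
```

Because the two made-up detections map to the same box in full-image coordinates, the merge step keeps only the higher-scoring one. Without the overlap between slices, an object lying on a slice boundary would be cut in two and might be missed in both slices.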

Example result:

References:

obss/sahi: Framework agnostic sliced/tiled inference + interactive ui + error analysis plots (github.com)

How to detect small objects in (very) large images | by Maximilian Gartz | ML6team