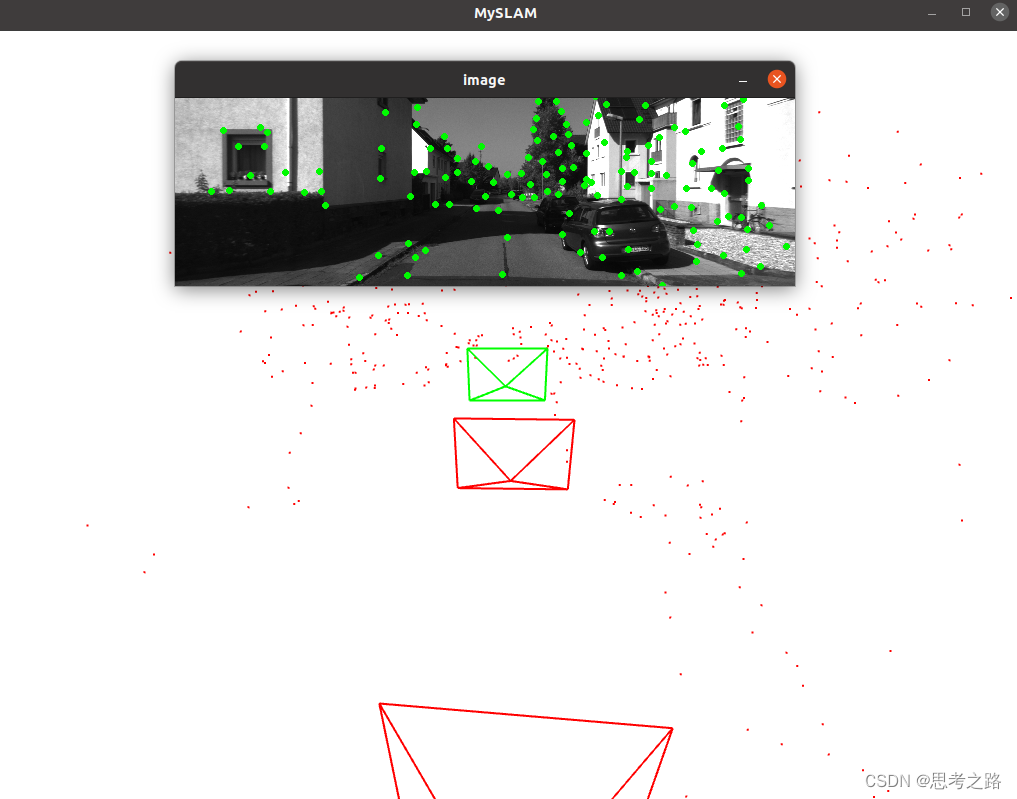

实现一个双目视觉里程计在Kitti数据集中的运行效果。

很有必要多看几遍的例程。

这个视觉里程计由一个光流追踪的前端和一个局部BA的后端组成。双目只需单帧就可初始化,双目存在3D观测,实现效果比单目好。

程序:数据结构+算法

本例程的数据结构是:

- 处理的最基本单元图像。在双目中是一对图像,为一帧。

- 对帧提取特征点。特征点是有很多2D的点。

- 图像之间寻找特征的关联。如果多次看到某个特征,就用三角化方法计算它的3D位置,即路标。

所以图像,特征,路标是这个系统的最基本的结构。其中路标,路标点,地图点都指代3D空间中的点,语义是一样的。

算法:负责提取特征点的算法,负责做三角化的算法,负责处理优化的算法。总的来说有两个模块: - 前端。负责计算相邻图像的特征匹配,往前端中插入图像帧,负责提取图像中的特征点,然后与上一帧进行光流追踪,通过光流结果计算该帧的定位。必要时,应该补充新的特征点并做三角化。前端快速处理保证实时性,前端处理的结果将作为后端优化的初始值。

- 后端。后端是一个较慢的线程,它拿到前端初始化好的关键帧和路标点后,对他们进行优化,然后返回优化结果。后端应该控制优化问题在一定的规模内,不能随时间增长。

这样通过数据结构和算法确定了系统框架,前后端之间放了地图模块来处理他们之间的数据流动。前后段分别在各自的线程中处理数据,前端提取关键帧后,往梯度中添加新的数据;后端检测到地图更新时,运行一次优化,然后把旧的关键帧和地图点去掉,保持优化的规模。

后续的编程除了核心算法外,还需要一些小模块让系统更加方便:

- 需要一个相机类来管理相机的内外参和投影函数;

- 需要一个配置文件管理类,方便从配置文件中读取内容。配置文件中可以记录一些重要的配置参数;

- 算法是在Kitti数据集上运行的,所以需要一个单独的类来按Kitti的存储格式来读取数据;

- 需要一个可视化的模块来观察系统的运行状态,否则就是一连传数值;

实现:

1> 实现基本数据结构

先来实现帧,特征,路标点三个类。对于基本数据结构,通常设为struct,考虑到这些数据会被多个线程同时访问或修改,在关键部分我们需要加线程锁。

Frame结果设计:include/myslam/frame.h

#pragma once

#ifndef MYSLAM_FRAME_H

#define MYSLAM_FRAME_H

#include "myslam/camera.h"

#include "myslam/common_include.h"

namespace myslam {

struct MapPoint;

struct Feature;

struct Frame {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

typedef std::shared_ptr<Frame> Ptr;

unsigned long id_ = 0;

unsigned long keyframe_id_ = 0;

bool is_keyframe_ = false;

double time_stamp_;

SE3 pose_;

std::mutex pose_mutex_;

cv::Mat left_img_, right_img_;

std::vector<std::shared_ptr<Feature>> features_left_;

std::vector<std::shared_ptr<Feature>> features_right_;

public:

Frame() {}

Frame(long id, double time_stamp, const SE3 &pose, const Mat &left,

const Mat &right);

SE3 Pose() {

std::unique_lock<std::mutex> lck(pose_mutex_);

return pose_;

}

void SetPose(const SE3 &pose) {

std::unique_lock<std::mutex> lck(pose_mutex_);

pose_ = pose;

}

void SetKeyFrame();

static std::shared_ptr<Frame> CreateFrame();

};

}

#endif

Feature类:/include/myslam/feature.h

#pragma once

#ifndef MYSLAM_FEATURE_H

#define MYSLAM_FEATURE_H

#include <memory>

#include <opencv2/features2d.hpp>

#include "myslam/common_include.h"

namespace myslam {

struct Frame;

struct MapPoint;

struct Feature {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

typedef std::shared_ptr<Feature> Ptr;

std::weak_ptr<Frame> frame_;

cv::KeyPoint position_;

std::weak_ptr<MapPoint> map_point_;

bool is_outlier_ = false;

bool is_on_left_image_ = true;

public:

Feature() {}

Feature(std::shared_ptr<Frame> frame, const cv::KeyPoint &kp)

: frame_(frame), position_(kp) {}

};

}

#endif

MapPoint类:路标点/include/myslam/mappoint.h

#pragma once

#ifndef MYSLAM_MAPPOINT_H

#define MYSLAM_MAPPOINT_H

#include "myslam/common_include.h"

namespace myslam {

struct Frame;

struct Feature;

struct MapPoint {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

typedef std::shared_ptr<MapPoint> Ptr;

unsigned long id_ = 0;

bool is_outlier_ = false;

Vec3 pos_ = Vec3::Zero();

std::mutex data_mutex_;

int observed_times_ = 0;

std::list<std::weak_ptr<Feature>> observations_;

MapPoint() {}

MapPoint(long id, Vec3 position);

Vec3 Pos() {

std::unique_lock<std::mutex> lck(data_mutex_);

return pos_;

}

void SetPos(const Vec3 &pos) {

std::unique_lock<std::mutex> lck(data_mutex_);

pos_ = pos;

};

void AddObservation(std::shared_ptr<Feature> feature) {

std::unique_lock<std::mutex> lck(data_mutex_);

observations_.push_back(feature);

observed_times_++;

}

void RemoveObservation(std::shared_ptr<Feature> feat);

std::list<std::weak_ptr<Feature>> GetObs() {

std::unique_lock<std::mutex> lck(data_mutex_);

return observations_;

}

static MapPoint::Ptr CreateNewMappoint();

};

}

#endif

最后还需要定义一个地图类:Frame和MapPoint类的对象归地图持有。

#pragma once

#ifndef MAP_H

#define MAP_H

#include "myslam/common_include.h"

#include "myslam/frame.h"

#include "myslam/mappoint.h"

namespace myslam {

class Map {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

typedef std::shared_ptr<Map> Ptr;

typedef std::unordered_map<unsigned long, MapPoint::Ptr> LandmarksType;

typedef std::unordered_map<unsigned long, Frame::Ptr> KeyframesType;

Map() {}

void InsertKeyFrame(Frame::Ptr frame);

void InsertMapPoint(MapPoint::Ptr map_point);

LandmarksType GetAllMapPoints() {

std::unique_lock<std::mutex> lck(data_mutex_);

return landmarks_;

}

KeyframesType GetAllKeyFrames() {

std::unique_lock<std::mutex> lck(data_mutex_);

return keyframes_;

}

LandmarksType GetActiveMapPoints() {

std::unique_lock<std::mutex> lck(data_mutex_);

return active_landmarks_;

}

KeyframesType GetActiveKeyFrames() {

std::unique_lock<std::mutex> lck(data_mutex_);

return active_keyframes_;

}

void CleanMap();

private:

void RemoveOldKeyframe();

std::mutex data_mutex_;

LandmarksType landmarks_;

LandmarksType active_landmarks_;

KeyframesType keyframes_;

KeyframesType active_keyframes_;

Frame::Ptr current_frame_ = nullptr;

int num_active_keyframes_ = 7;

};

}

#endif

2> 前端

定义好基本的数据结构后,我们看来前端的功能,前端需要根据双目图像确定v该镇的位置。每帧都有左右目,进行三角化的时候如何选任意两张图像做三角化,考虑不一致,所以位我们先来确定前端的处理逻辑:

- 前端本身有初始化,正常追踪,追踪丢失三种状态;

- 在初始化状态中,根据左右目之间的光流匹配,寻找可以三角化的地图点,成功建立处是地图;

- 追踪阶段中,前端计算上一帧的特征点到当前帧的光流,根据光流结果计算图像位姿。该计算只使用左目图像,不使用右目;

- 如果追踪到的点较少,就判定当前帧为关键帧。对于关键帧,有以下几个点:

- 提取新的特征点。

- 找到这些点在右图中的对应点,用三角化建立新的路标点。

- 将新的关键帧和路标点加入到地图,并触发一次后端优化。 - 如果追踪丢失,就重置前端系统,重新初始化

#include <opencv2/opencv.hpp>

#include "myslam/algorithm.h"

#include "myslam/backend.h"

#include "myslam/config.h"

#include "myslam/feature.h"

#include "myslam/frontend.h"

#include "myslam/g2o_types.h"

#include "myslam/map.h"

#include "myslam/viewer.h"

namespace myslam {

Frontend::Frontend() {

gftt_ =

cv::GFTTDetector::create(Config::Get<int>("num_features"), 0.01, 20);

num_features_init_ = Config::Get<int>("num_features_init");

num_features_ = Config::Get<int>("num_features");

}

bool Frontend::AddFrame(myslam::Frame::Ptr frame) {

current_frame_ = frame;

switch (status_) {

case FrontendStatus::INITING:

StereoInit();

break;

case FrontendStatus::TRACKING_GOOD:

case FrontendStatus::TRACKING_BAD:

Track();

break;

case FrontendStatus::LOST:

Reset();

break;

}

last_frame_ = current_frame_;

return true;

}

bool Frontend::Track() {

if (last_frame_) {

current_frame_->SetPose(relative_motion_ * last_frame_->Pose());

}

int num_track_last = TrackLastFrame();

tracking_inliers_ = EstimateCurrentPose();

if (tracking_inliers_ > num_features_tracking_) {

status_ = FrontendStatus::TRACKING_GOOD;

} else if (tracking_inliers_ > num_features_tracking_bad_) {

status_ = FrontendStatus::TRACKING_BAD;

} else {

status_ = FrontendStatus::LOST;

}

InsertKeyframe();

relative_motion_ = current_frame_->Pose() * last_frame_->Pose().inverse();

if (viewer_) viewer_->AddCurrentFrame(current_frame_);

return true;

}

bool Frontend::InsertKeyframe() {

if (tracking_inliers_ >= num_features_needed_for_keyframe_) {

return false;

}

current_frame_->SetKeyFrame();

map_->InsertKeyFrame(current_frame_);

LOG(INFO) << "Set frame " << current_frame_->id_ << " as keyframe "

<< current_frame_->keyframe_id_;

SetObservationsForKeyFrame();

DetectFeatures();

FindFeaturesInRight();

TriangulateNewPoints();

backend_->UpdateMap();

if (viewer_) viewer_->UpdateMap();

return true;

}

void Frontend::SetObservationsForKeyFrame() {

for (auto &feat : current_frame_->features_left_) {

auto mp = feat->map_point_.lock();

if (mp) mp->AddObservation(feat);

}

}

int Frontend::TriangulateNewPoints() {

std::vector<SE3> poses{camera_left_->pose(), camera_right_->pose()};

SE3 current_pose_Twc = current_frame_->Pose().inverse();

int cnt_triangulated_pts = 0;

for (size_t i = 0; i < current_frame_->features_left_.size(); ++i) {

if (current_frame_->features_left_[i]->map_point_.expired() &&

current_frame_->features_right_[i] != nullptr) {

std::vector<Vec3> points{

camera_left_->pixel2camera(

Vec2(current_frame_->features_left_[i]->position_.pt.x,

current_frame_->features_left_[i]->position_.pt.y)),

camera_right_->pixel2camera(

Vec2(current_frame_->features_right_[i]->position_.pt.x,

current_frame_->features_right_[i]->position_.pt.y))};

Vec3 pworld = Vec3::Zero();

if (triangulation(poses, points, pworld) && pworld[2] > 0) {

auto new_map_point = MapPoint::CreateNewMappoint();

pworld = current_pose_Twc * pworld;

new_map_point->SetPos(pworld);

new_map_point->AddObservation(

current_frame_->features_left_[i]);

new_map_point->AddObservation(

current_frame_->features_right_[i]);

current_frame_->features_left_[i]->map_point_ = new_map_point;

current_frame_->features_right_[i]->map_point_ = new_map_point;

map_->InsertMapPoint(new_map_point);

cnt_triangulated_pts++;

}

}

}

LOG(INFO) << "new landmarks: " << cnt_triangulated_pts;

return cnt_triangulated_pts;

}

int Frontend::EstimateCurrentPose() {

typedef g2o::BlockSolver_6_3 BlockSolverType;

typedef g2o::LinearSolverDense<BlockSolverType::PoseMatrixType>

LinearSolverType;

auto solver = new g2o::OptimizationAlgorithmLevenberg(

g2o::make_unique<BlockSolverType>(

g2o::make_unique<LinearSolverType>()));

g2o::SparseOptimizer optimizer;

optimizer.setAlgorithm(solver);

VertexPose *vertex_pose = new VertexPose();

vertex_pose->setId(0);

vertex_pose->setEstimate(current_frame_->Pose());

optimizer.addVertex(vertex_pose);

Mat33 K = camera_left_->K();

int index = 1;

std::vector<EdgeProjectionPoseOnly *> edges;

std::vector<Feature::Ptr> features;

for (size_t i = 0; i < current_frame_->features_left_.size(); ++i) {

auto mp = current_frame_->features_left_[i]->map_point_.lock();

if (mp) {

features.push_back(current_frame_->features_left_[i]);

EdgeProjectionPoseOnly *edge =

new EdgeProjectionPoseOnly(mp->pos_, K);

edge->setId(index);

edge->setVertex(0, vertex_pose);

edge->setMeasurement(

toVec2(current_frame_->features_left_[i]->position_.pt));

edge->setInformation(Eigen::Matrix2d::Identity());

edge->setRobustKernel(new g2o::RobustKernelHuber);

edges.push_back(edge);

optimizer.addEdge(edge);

index++;

}

}

const double chi2_th = 5.991;

int cnt_outlier = 0;

for (int iteration = 0; iteration < 4; ++iteration) {

vertex_pose->setEstimate(current_frame_->Pose());

optimizer.initializeOptimization();

optimizer.optimize(10);

cnt_outlier = 0;

for (size_t i = 0; i < edges.size(); ++i) {

auto e = edges[i];

if (features[i]->is_outlier_) {

e->computeError();

}

if (e->chi2() > chi2_th) {

features[i]->is_outlier_ = true;

e->setLevel(1);

cnt_outlier++;

} else {

features[i]->is_outlier_ = false;

e->setLevel(0);

};

if (iteration == 2) {

e->setRobustKernel(nullptr);

}

}

}

LOG(INFO) << "Outlier/Inlier in pose estimating: " << cnt_outlier << "/"

<< features.size() - cnt_outlier;

current_frame_->SetPose(vertex_pose->estimate());

LOG(INFO) << "Current Pose = \n" << current_frame_->Pose().matrix();

for (auto &feat : features) {

if (feat->is_outlier_) {

feat->map_point_.reset();

feat->is_outlier_ = false;

}

}

return features.size() - cnt_outlier;

}

int Frontend::TrackLastFrame() {

std::vector<cv::Point2f> kps_last, kps_current;

for (auto &kp : last_frame_->features_left_) {

if (kp->map_point_.lock()) {

auto mp = kp->map_point_.lock();

auto px =

camera_left_->world2pixel(mp->pos_, current_frame_->Pose());

kps_last.push_back(kp->position_.pt);

kps_current.push_back(cv::Point2f(px[0], px[1]));

} else {

kps_last.push_back(kp->position_.pt);

kps_current.push_back(kp->position_.pt);

}

}

std::vector<uchar> status;

Mat error;

cv::calcOpticalFlowPyrLK(

last_frame_->left_img_, current_frame_->left_img_, kps_last,

kps_current, status, error, cv::Size(11, 11), 3,

cv::TermCriteria(cv::TermCriteria::COUNT + cv::TermCriteria::EPS, 30,

0.01),

cv::OPTFLOW_USE_INITIAL_FLOW);

int num_good_pts = 0;

for (size_t i = 0; i < status.size(); ++i) {

if (status[i]) {

cv::KeyPoint kp(kps_current[i], 7);

Feature::Ptr feature(new Feature(current_frame_, kp));

feature->map_point_ = last_frame_->features_left_[i]->map_point_;

current_frame_->features_left_.push_back(feature);

num_good_pts++;

}

}

LOG(INFO) << "Find " << num_good_pts << " in the last image.";

return num_good_pts;

}

bool Frontend::StereoInit() {

int num_features_left = DetectFeatures();

int num_coor_features = FindFeaturesInRight();

if (num_coor_features < num_features_init_) {

return false;

}

bool build_map_success = BuildInitMap();

if (build_map_success) {

status_ = FrontendStatus::TRACKING_GOOD;

if (viewer_) {

viewer_->AddCurrentFrame(current_frame_);

viewer_->UpdateMap();

}

return true;

}

return false;

}

int Frontend::DetectFeatures() {

cv::Mat mask(current_frame_->left_img_.size(), CV_8UC1, 255);

for (auto &feat : current_frame_->features_left_) {

cv::rectangle(mask, feat->position_.pt - cv::Point2f(10, 10),

feat->position_.pt + cv::Point2f(10, 10), 0, CV_FILLED);

}

std::vector<cv::KeyPoint> keypoints;

gftt_->detect(current_frame_->left_img_, keypoints, mask);

int cnt_detected = 0;

for (auto &kp : keypoints) {

current_frame_->features_left_.push_back(

Feature::Ptr(new Feature(current_frame_, kp)));

cnt_detected++;

}

LOG(INFO) << "Detect " << cnt_detected << " new features";

return cnt_detected;

}

int Frontend::FindFeaturesInRight() {

std::vector<cv::Point2f> kps_left, kps_right;

for (auto &kp : current_frame_->features_left_) {

kps_left.push_back(kp->position_.pt);

auto mp = kp->map_point_.lock();

if (mp) {

auto px =

camera_right_->world2pixel(mp->pos_, current_frame_->Pose());

kps_right.push_back(cv::Point2f(px[0], px[1]));

} else {

kps_right.push_back(kp->position_.pt);

}

}

std::vector<uchar> status;

Mat error;

cv::calcOpticalFlowPyrLK(

current_frame_->left_img_, current_frame_->right_img_, kps_left,

kps_right, status, error, cv::Size(11, 11), 3,

cv::TermCriteria(cv::TermCriteria::COUNT + cv::TermCriteria::EPS, 30,

0.01),

cv::OPTFLOW_USE_INITIAL_FLOW);

int num_good_pts = 0;

for (size_t i = 0; i < status.size(); ++i) {

if (status[i]) {

cv::KeyPoint kp(kps_right[i], 7);

Feature::Ptr feat(new Feature(current_frame_, kp));

feat->is_on_left_image_ = false;

current_frame_->features_right_.push_back(feat);

num_good_pts++;

} else {

current_frame_->features_right_.push_back(nullptr);

}

}

LOG(INFO) << "Find " << num_good_pts << " in the right image.";

return num_good_pts;

}

bool Frontend::BuildInitMap() {

std::vector<SE3> poses{camera_left_->pose(), camera_right_->pose()};

size_t cnt_init_landmarks = 0;

for (size_t i = 0; i < current_frame_->features_left_.size(); ++i) {

if (current_frame_->features_right_[i] == nullptr) continue;

std::vector<Vec3> points{

camera_left_->pixel2camera(

Vec2(current_frame_->features_left_[i]->position_.pt.x,

current_frame_->features_left_[i]->position_.pt.y)),

camera_right_->pixel2camera(

Vec2(current_frame_->features_right_[i]->position_.pt.x,

current_frame_->features_right_[i]->position_.pt.y))};

Vec3 pworld = Vec3::Zero();

if (triangulation(poses, points, pworld) && pworld[2] > 0) {

auto new_map_point = MapPoint::CreateNewMappoint();

new_map_point->SetPos(pworld);

new_map_point->AddObservation(current_frame_->features_left_[i]);

new_map_point->AddObservation(current_frame_->features_right_[i]);

current_frame_->features_left_[i]->map_point_ = new_map_point;

current_frame_->features_right_[i]->map_point_ = new_map_point;

cnt_init_landmarks++;

map_->InsertMapPoint(new_map_point);

}

}

current_frame_->SetKeyFrame();

map_->InsertKeyFrame(current_frame_);

backend_->UpdateMap();

LOG(INFO) << "Initial map created with " << cnt_init_landmarks

<< " map points";

return true;

}

bool Frontend::Reset() {

LOG(INFO) << "Reset is not implemented. ";

return true;

}

}

后端优化:

#include "myslam/backend.h"

#include "myslam/algorithm.h"

#include "myslam/feature.h"

#include "myslam/g2o_types.h"

#include "myslam/map.h"

#include "myslam/mappoint.h"

namespace myslam {

Backend::Backend() {

backend_running_.store(true);

backend_thread_ = std::thread(std::bind(&Backend::BackendLoop, this));

}

void Backend::UpdateMap() {

std::unique_lock<std::mutex> lock(data_mutex_);

map_update_.notify_one();

}

void Backend::Stop() {

backend_running_.store(false);

map_update_.notify_one();

backend_thread_.join();

}

void Backend::BackendLoop() {

while (backend_running_.load()) {

std::unique_lock<std::mutex> lock(data_mutex_);

map_update_.wait(lock);

Map::KeyframesType active_kfs = map_->GetActiveKeyFrames();

Map::LandmarksType active_landmarks = map_->GetActiveMapPoints();

Optimize(active_kfs, active_landmarks);

}

}

void Backend::Optimize(Map::KeyframesType &keyframes,

Map::LandmarksType &landmarks) {

typedef g2o::BlockSolver_6_3 BlockSolverType;

typedef g2o::LinearSolverCSparse<BlockSolverType::PoseMatrixType>

LinearSolverType;

auto solver = new g2o::OptimizationAlgorithmLevenberg(

g2o::make_unique<BlockSolverType>(

g2o::make_unique<LinearSolverType>()));

g2o::SparseOptimizer optimizer;

optimizer.setAlgorithm(solver);

std::map<unsigned long, VertexPose *> vertices;

unsigned long max_kf_id = 0;

for (auto &keyframe : keyframes) {

auto kf = keyframe.second;

VertexPose *vertex_pose = new VertexPose();

vertex_pose->setId(kf->keyframe_id_);

vertex_pose->setEstimate(kf->Pose());

optimizer.addVertex(vertex_pose);

if (kf->keyframe_id_ > max_kf_id) {

max_kf_id = kf->keyframe_id_;

}

vertices.insert({kf->keyframe_id_, vertex_pose});

}

std::map<unsigned long, VertexXYZ *> vertices_landmarks;

Mat33 K = cam_left_->K();

SE3 left_ext = cam_left_->pose();

SE3 right_ext = cam_right_->pose();

int index = 1;

double chi2_th = 5.991;

std::map<EdgeProjection *, Feature::Ptr> edges_and_features;

for (auto &landmark : landmarks) {

if (landmark.second->is_outlier_) continue;

unsigned long landmark_id = landmark.second->id_;

auto observations = landmark.second->GetObs();

for (auto &obs : observations) {

if (obs.lock() == nullptr) continue;

auto feat = obs.lock();

if (feat->is_outlier_ || feat->frame_.lock() == nullptr) continue;

auto frame = feat->frame_.lock();

EdgeProjection *edge = nullptr;

if (feat->is_on_left_image_) {

edge = new EdgeProjection(K, left_ext);

} else {

edge = new EdgeProjection(K, right_ext);

}

if (vertices_landmarks.find(landmark_id) ==

vertices_landmarks.end()) {

VertexXYZ *v = new VertexXYZ;

v->setEstimate(landmark.second->Pos());

v->setId(landmark_id + max_kf_id + 1);

v->setMarginalized(true);

vertices_landmarks.insert({landmark_id, v});

optimizer.addVertex(v);

}

edge->setId(index);

edge->setVertex(0, vertices.at(frame->keyframe_id_));

edge->setVertex(1, vertices_landmarks.at(landmark_id));

edge->setMeasurement(toVec2(feat->position_.pt));

edge->setInformation(Mat22::Identity());

auto rk = new g2o::RobustKernelHuber();

rk->setDelta(chi2_th);

edge->setRobustKernel(rk);

edges_and_features.insert({edge, feat});

optimizer.addEdge(edge);

index++;

}

}

optimizer.initializeOptimization();

optimizer.optimize(10);

int cnt_outlier = 0, cnt_inlier = 0;

int iteration = 0;

while (iteration < 5) {

cnt_outlier = 0;

cnt_inlier = 0;

for (auto &ef : edges_and_features) {

if (ef.first->chi2() > chi2_th) {

cnt_outlier++;

} else {

cnt_inlier++;

}

}

double inlier_ratio = cnt_inlier / double(cnt_inlier + cnt_outlier);

if (inlier_ratio > 0.5) {

break;

} else {

chi2_th *= 2;

iteration++;

}

}

for (auto &ef : edges_and_features) {

if (ef.first->chi2() > chi2_th) {

ef.second->is_outlier_ = true;

ef.second->map_point_.lock()->RemoveObservation(ef.second);

} else {

ef.second->is_outlier_ = false;

}

}

LOG(INFO) << "Outlier/Inlier in optimization: " << cnt_outlier << "/"

<< cnt_inlier;

for (auto &v : vertices) {

keyframes.at(v.first)->SetPose(v.second->estimate());

}

for (auto &v : vertices_landmarks) {

landmarks.at(v.first)->SetPos(v.second->estimate());

}

}

}

实现效果:

本文内容由网友自发贡献,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系:hwhale#tublm.com(使用前将#替换为@)